Bayesian Model Selection for 13C Metabolic Flux Analysis: A Comprehensive Guide for Drug Development Researchers

This article provides a comprehensive guide to Bayesian methods for model selection in 13C Metabolic Flux Analysis (MFA), a critical tool for understanding cellular metabolism in biomedical research.


Abstract

This article provides a comprehensive guide to Bayesian methods for model selection in 13C Metabolic Flux Analysis (MFA), a critical tool for understanding cellular metabolism in biomedical research. We first establish the foundational principles of Bayesian inference and its superiority over traditional methods for handling uncertainty in complex metabolic networks. We then detail methodological workflows for implementing Bayesian model selection, including prior specification, computational algorithms like MCMC, and software tools. Practical sections address common troubleshooting scenarios, optimization of computational efficiency, and strategies for experimental design. Finally, we present rigorous validation frameworks, compare Bayesian approaches to frequentist alternatives, and demonstrate their application in real-world drug development case studies. This guide is tailored for researchers and professionals seeking robust, probabilistic frameworks to enhance the reliability of metabolic models in target discovery and therapeutic development.

Why Bayesian? Foundations of Probabilistic Model Selection for 13C MFA

Technical Support Center

Troubleshooting Guides & FAQs

Q1: During Bayesian model selection, my marginal likelihood (evidence) calculation yields an infinite or "Inf" value. What is the cause and how can I resolve this? A: This typically indicates numerical overflow or underflow when likelihood values are exponentiated in high-dimensional parameter spaces. The likelihood itself (rather than its logarithm) can fall outside the floating-point range, especially if priors are improperly scaled relative to the likelihood.

- Protocol for Resolution:

- Log-Scale Computation: Perform all calculations in log-space. Use the log-sum-exp trick for stability.

- Prior Diagnostic: Check if your prior distributions are too diffuse (uninformative) relative to the parameter scales defined in your metabolic network. Re-scale parameters (e.g., flux rates in mmol/gDW/h) so their expected magnitude is around 1.

- Tool Verification: If using software like INCA or cobrapy with Bayesian toolboxes, ensure you are using the latest version where such stability fixes are often implemented.
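The log-space advice above can be illustrated with a minimal sketch. It assumes a simple Monte Carlo evidence estimate (the average likelihood over prior draws); the log-likelihood values and the helper names `log_sum_exp` and `log_mean_exp` are hypothetical and chosen only for illustration.

```python
import numpy as np

def log_sum_exp(log_values):
    """Stable log(sum(exp(log_values))) obtained by factoring out the maximum."""
    log_values = np.asarray(log_values, dtype=float)
    peak = np.max(log_values)
    if not np.isfinite(peak):
        return peak
    return peak + np.log(np.sum(np.exp(log_values - peak)))

def log_mean_exp(log_values):
    """Stable log of the arithmetic mean of exp(log_values)."""
    return log_sum_exp(log_values) - np.log(len(log_values))

# Hypothetical log-likelihoods evaluated at four draws from the prior.
log_liks = np.array([-1250.2, -1252.7, -1260.1, -1249.8])

# Naive route: exp() underflows to 0.0, so the log becomes -inf.
with np.errstate(divide="ignore"):
    naive = np.log(np.mean(np.exp(log_liks)))

# Stable route: stays finite because only differences are exponentiated.
stable = log_mean_exp(log_liks)
print(naive, stable)
```

The same trick protects against overflow when log values are large and positive, because the largest term is always exponentiated as `exp(0)`.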

Q2: The Markov Chain Monte Carlo (MCMC) sampler for model comparison fails to converge when comparing two rival metabolic network topologies (Model A vs. Model B). What steps should I take? A: Poor MCMC convergence in model selection often stems from poorly mixing chains when the sampler jumps between models with different dimensionalities.

- Protocol for Resolution:

- Increase Adaptation: Dramatically increase the number of MCMC adaptation steps (e.g., to 50,000-100,000) for the reversible-jump (RJ)MCMC algorithm to better learn proposal distributions for inter-model jumps.

- Parameterization: Ensure conserved moieties are removed and the network is properly parameterized to eliminate linearly dependent parameters that create ill-conditioned posteriors.

- Convergence Diagnostic: Run multiple chains from dispersed starting points. Calculate the potential scale reduction factor (PSRF or $\hat{R}$) specifically for the model index variable. A value <1.05 indicates convergence.
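As a rough illustration of the convergence diagnostic, the sketch below computes the classic (non-split) Gelman-Rubin statistic for a model index trace. The traces are simulated, not real RJMCMC output, and the function name `potential_scale_reduction` is an assumption made for this example. For a discrete indicator the statistic is only a coarse check, so it is best read alongside trace plots.

```python
import numpy as np

def potential_scale_reduction(chains):
    """Classic Gelman-Rubin PSRF for an array of shape (n_chains, n_draws)."""
    chains = np.asarray(chains, dtype=float)
    n_chains, n_draws = chains.shape
    chain_means = chains.mean(axis=1)
    between = n_draws * chain_means.var(ddof=1)      # between-chain variance B
    within = chains.var(axis=1, ddof=1).mean()       # mean within-chain variance W
    pooled = (n_draws - 1) / n_draws * within + between / n_draws
    return float(np.sqrt(pooled / within))

rng = np.random.default_rng(0)

# Hypothetical model-index traces (0 = Model A, 1 = Model B) from 4 chains.
well_mixed = rng.binomial(1, 0.7, size=(4, 5000))

# Two chains never leave their starting model: a typical RJMCMC failure mode.
stuck = np.stack([
    np.zeros(5000),
    np.ones(5000),
    rng.binomial(1, 0.7, size=5000),
    rng.binomial(1, 0.7, size=5000),
])

rhat_mixed = potential_scale_reduction(well_mixed)
rhat_stuck = potential_scale_reduction(stuck)
print(f"well mixed: {rhat_mixed:.3f}, stuck: {rhat_stuck:.3f}")
```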

Q3: How do I choose an appropriate prior for model parameters when the goal is comparing multiple 13C MFA network models? A: The choice of prior is critical as it influences the marginal likelihood. An overly broad prior can unfairly penalize a more complex model.

- Protocol for Resolution:

- Use Hierarchical Priors: For fluxes, use a hierarchical prior structure (e.g., $v \sim Normal(\mu, \sigma)$ with $\mu \sim Normal(0,10)$ and $\sigma \sim HalfNormal(5)$). This partially pools information and regularizes estimates.

- Prior Predictive Checks: Simulate artificial 13C labeling data from the candidate models using draws from your priors. Visually compare the simulated data distributions to realistic experimental ranges. Adjust prior hyperparameters until simulations are biologically plausible but not overly restrictive.

- Sensitivity Analysis: Perform model selection on a synthetic dataset where the true model is known. Systematically vary the prior width and report the impact on the posterior model probabilities in a table.
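The effect of prior width on the Bayes factor can be seen in a toy problem where the marginal likelihoods are analytic. The sketch below is not a 13C MFA model: it assumes a single flux-like quantity estimated as `y_bar` with standard error `se`, a model M1 that fixes it at zero, and a model M2 that gives it a Normal(0, prior_sd) prior. All numbers are hypothetical.

```python
import numpy as np

def normal_logpdf(x, mean, sd):
    """Log density of a univariate normal distribution."""
    return -0.5 * np.log(2 * np.pi * sd**2) - 0.5 * ((x - mean) / sd) ** 2

def log_bf_12(y_bar, se, prior_sd):
    """ln BF of M1 (effect fixed at 0) against M2 (effect ~ Normal(0, prior_sd))."""
    log_m1 = normal_logpdf(y_bar, 0.0, se)
    # Under M2 the prior integrates out analytically: variances add.
    log_m2 = normal_logpdf(y_bar, 0.0, np.sqrt(se**2 + prior_sd**2))
    return log_m1 - log_m2

y_bar, se = 0.5, 0.2            # hypothetical estimate and standard error
prior_sds = [0.5, 5.0, 50.0]    # prior widths to scan
log_bfs = [log_bf_12(y_bar, se, sd) for sd in prior_sds]

for sd, lbf in zip(prior_sds, log_bfs):
    print(f"prior SD = {sd:5.1f}   ln BF12 = {lbf:+.2f}")
```

With the data held fixed, widening the prior on the extra parameter moves the evidence toward the simpler model, which is why a sensitivity table over prior widths is worth reporting.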

Q4: The computed Bayes Factor between two models is highly sensitive to small changes in the 13C labeling dataset. Is this normal? A: High sensitivity suggests either insufficient data or model misspecification. 13C MFA data can have limited information for distinguishing between certain alternative pathways.

- Protocol for Resolution:

- Data Integration: Incorporate additional experimental data (e.g., extracellular fluxes, enzyme activity) into a unified Bayesian framework to constrain the models more effectively.

- Information Theory Analysis: Calculate the Fisher Information Matrix (FIM) for parameters unique to each rival model. A near-singular FIM indicates the data provides little information to estimate those parameters, explaining the sensitivity.

- Report Bayesian Model Averaging (BMA): Instead of selecting a single model, present results as a weighted average (BMA) over the top models, where weights are the posterior model probabilities. This accounts for selection uncertainty.
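A minimal sketch of the averaging step, assuming log marginal likelihoods have already been estimated for each candidate model. The log evidences and per-model flux means below are hypothetical, and equal prior model probabilities are assumed unless others are supplied.

```python
import numpy as np

def posterior_model_probs(log_evidences, prior_probs=None):
    """Posterior model probabilities from log marginal likelihoods."""
    log_evidences = np.asarray(log_evidences, dtype=float)
    if prior_probs is None:
        # Assumption: equal prior probability for every candidate model.
        prior_probs = np.full(log_evidences.shape, 1.0 / log_evidences.size)
    log_post = log_evidences + np.log(prior_probs)
    log_post -= log_post.max()          # stabilise before exponentiating
    weights = np.exp(log_post)
    return weights / weights.sum()

log_z = [-285.1, -287.4, -291.0]            # hypothetical ln p(Data | M_k)
flux_means = np.array([42.0, 47.5, 39.0])   # hypothetical posterior mean of one flux per model

probs = posterior_model_probs(log_z)
bma_mean = float(np.dot(probs, flux_means))  # model-averaged point estimate
print(np.round(probs, 4), round(bma_mean, 2))
```

A full BMA would mix the per-model posterior draws in these proportions rather than only averaging point estimates, so that between-model spread shows up in the reported interval.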

Table 1: Comparison of Model Selection Criteria in 13C MFA

| Criterion | Calculation | Advantage | Disadvantage | Typical Use Case |
|---|---|---|---|---|
| Bayes Factor (BF) | $BF_{12} = \frac{p(Data \mid M_1)}{p(Data \mid M_2)}$ | Coherent probabilistic interpretation; incorporates prior knowledge. | Computationally intensive; sensitive to prior choice. | Comparing 2-4 distinct network hypotheses. |
| Akaike Information Criterion (AIC) | $AIC = 2k - 2\ln(\hat{L})$ | Easy to compute; good for prediction. | Asymptotic; tends to favor overly complex models with finite data. | Screening many potential models rapidly. |
| Bayesian Information Criterion (BIC) | $BIC = k\ln(n) - 2\ln(\hat{L})$ | Strong consistency for true model. | Penalizes complexity more heavily than AIC and can over-penalize with small samples; also asymptotic. | Large 13C datasets (n > 100). |
| Posterior Model Probability | $p(M_k \mid Data) = \frac{p(Data \mid M_k)\,p(M_k)}{\sum_i p(Data \mid M_i)\,p(M_i)}$ | Direct probability statement for each model. | Requires specifying prior model probabilities $p(M_k)$. | Final presentation of multi-model comparison results. |
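The two information criteria in the table are simple enough to compute by hand. The sketch below uses hypothetical maximized log-likelihoods and parameter counts for two candidate networks to show that AIC and BIC can disagree because of their different complexity penalties.

```python
import math

def aic(log_lik_max, n_params):
    """AIC = 2k - 2 ln(L_hat)."""
    return 2 * n_params - 2 * log_lik_max

def bic(log_lik_max, n_params, n_obs):
    """BIC = k ln(n) - 2 ln(L_hat)."""
    return n_params * math.log(n_obs) - 2 * log_lik_max

# Hypothetical fits: the larger model gains 2.5 log-likelihood units for 2 extra fluxes.
n_obs = 120
small = {"log_lik": -120.0, "k": 8}
large = {"log_lik": -117.5, "k": 10}

aic_small, aic_large = aic(small["log_lik"], small["k"]), aic(large["log_lik"], large["k"])
bic_small = bic(small["log_lik"], small["k"], n_obs)
bic_large = bic(large["log_lik"], large["k"], n_obs)

print(f"AIC: small = {aic_small:.1f}, large = {aic_large:.1f}")
print(f"BIC: small = {bic_small:.1f}, large = {bic_large:.1f}")
```

In this made-up case AIC slightly prefers the larger model while BIC prefers the smaller one, which is the usual pattern when the likelihood gain is modest.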

Table 2: Impact of Prior Width on Model Selection for a Toy Network (Synthetic Data)

| Prior SD on Fluxes (v) | Log-Marginal Likelihood (M1) | Log-Marginal Likelihood (M2) | Bayes Factor (BF12) | Correct Model Recovered? |
|---|---|---|---|---|
| 0.5 | -125.7 | -121.3 | 0.012 (Strong for M2) | Yes |
| 5 | -287.4 | -285.1 | 0.11 (Substantial for M2) | Yes |
| 50 | -601.2 | -598.9 | 0.26 (Anecdotal for M2) | No |

Experimental Protocols

Protocol: Bayesian Model Selection Workflow for 13C MFA

- Model Specification: Define candidate metabolic network models (M1, M2,... Mk) as stoichiometric matrices, including all alternative reactions under consideration.

- Prior Elicitation: Assign biologically-informed prior distributions to free net and exchange fluxes in each model. Use weakly informative, proper priors (e.g., Normal or Uniform bounded by physiological ranges).

- MCMC Sampling: For each model, run an MCMC sampler (e.g., Hamiltonian Monte Carlo) to obtain samples from the parameter posterior distribution $p(\theta_k | Data, M_k)$. In parallel, run a reversible-jump MCMC to sample across models.

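- Evidence Calculation: Compute the marginal likelihood $p(Data | M_k)$ for each model using bridge sampling from the MCMC output or nested sampling. A stabilized harmonic mean estimator is also an option, but treat it with caution: the plain harmonic mean estimator can have very high (even infinite) variance.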

- Model Comparison: Calculate Bayes Factors or posterior model probabilities. Perform sensitivity analysis by varying prior widths within plausible bounds.

- Validation: If possible, validate the selected model using a hold-out dataset of 13C labeling patterns not used in the model fitting.

Protocol: Generating Synthetic 13C Data for Method Benchmarking

- Define Ground Truth: Choose a metabolic network model and set specific, known values for all flux parameters ($v_{true}$).

- Simulate Labeling: Use a simulation tool (e.g., OpenFLUX2, isoDesign) to simulate the steady-state 13C labeling patterns of intracellular metabolites and/or measured fragments (MDV).

- Add Noise: Corrupt the simulated MDVs with realistic measurement noise: $MDV_{measured} = MDV_{simulated} + \epsilon$, where $\epsilon \sim MultivariateNormal(0, \Sigma)$. The covariance matrix $\Sigma$ is often diagonal with variances proportional to $1/n$, where $n$ is a simulated measurement count.

- Output: The final synthetic dataset comprises the noisy MDVs and the associated extracellular flux data, serving as a known ground-truth system for testing model selection algorithms.
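The noise step of this protocol can be sketched as follows. The MDV values, the count `n_counts`, and the proportionality constant `scale` are hypothetical, and the helper `add_measurement_noise` is not part of any simulation tool. The sketch assumes a diagonal covariance and leaves the noisy vector unnormalized and unclipped, which a real pipeline might handle differently.

```python
import numpy as np

def add_measurement_noise(mdv_simulated, n_counts, rng, scale=1.0):
    """Add independent Gaussian noise with variance scale / n_counts to each MDV entry."""
    mdv_simulated = np.asarray(mdv_simulated, dtype=float)
    sigma = np.full(mdv_simulated.shape, np.sqrt(scale / n_counts))
    noisy = mdv_simulated + rng.normal(0.0, sigma)
    return noisy, sigma

rng = np.random.default_rng(42)

# Hypothetical simulated mass isotopomer fractions (M+0 ... M+3) for one fragment.
mdv_true = np.array([0.55, 0.25, 0.15, 0.05])

mdv_measured, sigma = add_measurement_noise(mdv_true, n_counts=1e4, rng=rng)
print(np.round(mdv_measured, 4), sigma)
```

Keeping the returned `sigma` alongside the noisy data is convenient, since the same standard deviations are needed later in the likelihood.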

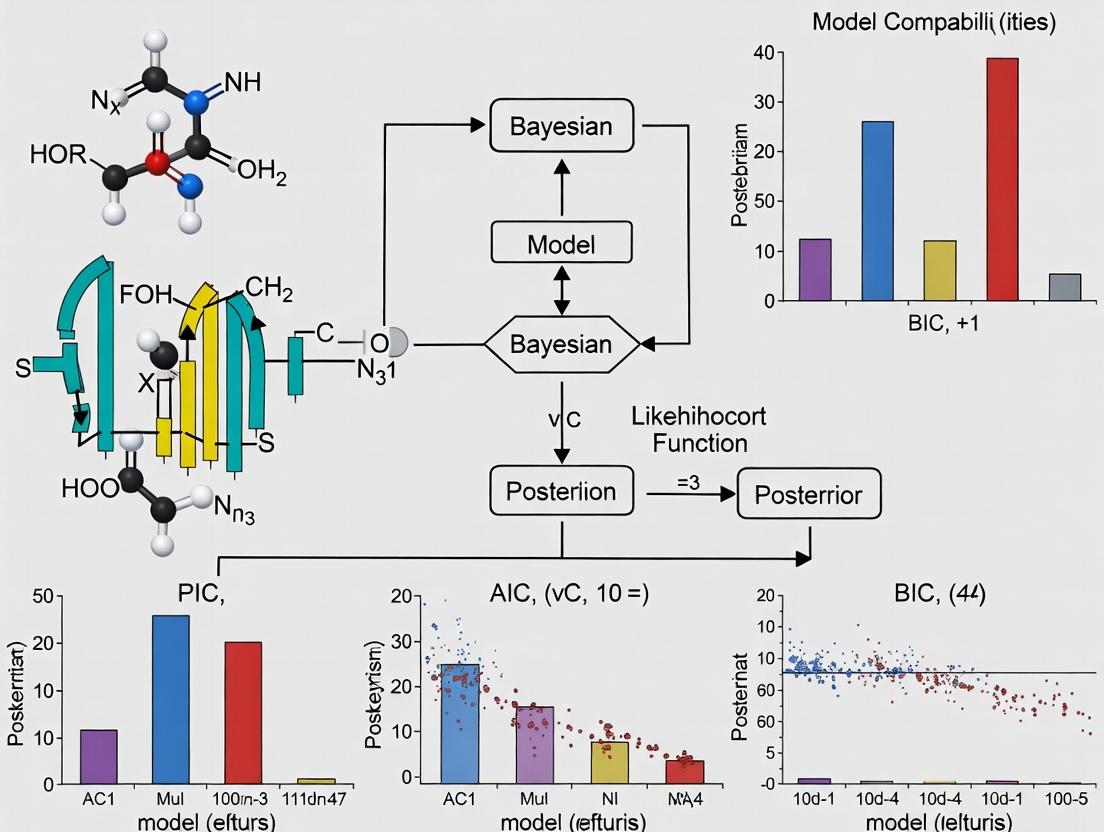

Diagrams

Title: Bayesian 13C MFA Model Selection Workflow

Title: Model Selection Uncertainty & Averaging

The Scientist's Toolkit: Research Reagent & Computational Solutions

Table 3: Essential Resources for Bayesian 13C MFA Model Selection

| Item / Solution | Function / Purpose | Example / Note |
|---|---|---|
| 13C-Labeled Substrates | Provide the isotopic tracer input for generating metabolic flux data. | [1-13C]Glucose, [U-13C]Glutamine; purity >99% is critical. |
| GC-MS or LC-MS Instrument | Measure the Mass Isotopomer Distribution Vectors (MDVs) of metabolites. | High-resolution mass specs improve MDV accuracy. |
| Metabolic Network Modeling Software | Simulate 13C labeling patterns from a given network and flux map. | INCA, OpenFLUX2, 13C-FLUX2, cobrapy. |
| Probabilistic Programming Framework | Implement custom Bayesian models, priors, and MCMC samplers. | Stan (via cmdstanr/pystan), PyMC, TensorFlow Probability. |
| High-Performance Computing (HPC) Cluster | Execute computationally intensive MCMC runs for multiple models. | Essential for RJMCMC on large networks (>50 free fluxes). |
| Synthetic Data Generator | Create benchmark datasets with known true models for algorithm validation. | Custom scripts using isoDesign or OpenFLUX2 APIs. |
| Visualization Library | Diagnose MCMC convergence and present results. | ArviZ (for PyMC/Stan), ggplot2, Matplotlib. |

This technical support center is framed within a thesis on advanced Bayesian methods for 13C Metabolic Flux Analysis (MFA) model selection, a critical area for researchers and drug development professionals. The following guides and FAQs address common philosophical and practical issues encountered when applying Bayesian and Frequentist paradigms to experimental data.

Troubleshooting Guides & FAQs

FAQ 1: My p-value is significant, but my Bayesian model shows low posterior probability for the effect. Which result should I trust?

- Issue: Contradiction between Frequentist (p < 0.05) and Bayesian (e.g., P(H1|D) < 0.7) outcomes.

- Diagnosis: This is a classic discrepancy often arising from the different interpretations. A p-value measures the probability of observing data as or more extreme than yours, assuming the null hypothesis is true. The posterior probability measures the probability of your specific hypothesis given the observed data. A significant p-value can occur with modest effect sizes if data is very precise (low variance), while the Bayesian posterior incorporates prior knowledge and may remain skeptical if the effect size is biologically implausible.

- Solution:

- Examine effect size and confidence/credible intervals. A significant p-value with a credible interval spanning negligible values is a red flag.

- Critically evaluate your prior. Are you using a default non-informative prior? For 13C MFA, consider an informed prior based on previous literature or pilot studies.

- Report both results transparently and discuss the divergence in the context of your specific research question.

FAQ 2: How do I choose an appropriate prior for my 13C MFA model selection?

- Issue: Subjectivity in prior specification leading to skepticism from peer reviewers.

- Diagnosis: The choice of prior is fundamental in Bayesian 13C MFA. An overly informative (narrow) prior can dominate the data, while a too-vague prior may offer little computational or inferential advantage.

- Solution Protocol:

- Use Literature Data: Compile published flux distributions for your organism/cell line under similar conditions to construct an empirically-derived prior (e.g., a normal distribution with mean and variance from meta-analysis).

- Perform Prior Predictive Checks: Simulate data from your candidate priors before observing your experimental data. Check if the simulated data are biologically plausible.

- Conduct Sensitivity Analysis: Run your model with a range of priors (e.g., from more informative to more diffuse). Present a table showing the stability of key posterior flux estimates.
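A prior sensitivity table can be prototyped with a conjugate normal update before running the full model. The sketch below assumes the data alone would give a flux estimate `data_mean` with standard error `data_se`, and that each prior is normal. It is a simplification of a real 13C MFA posterior, and all numbers are hypothetical.

```python
import numpy as np

def normal_posterior(prior_mean, prior_sd, data_mean, data_se):
    """Posterior mean and SD for a normal prior combined with a normal likelihood."""
    prior_prec = 1.0 / prior_sd**2
    data_prec = 1.0 / data_se**2
    post_var = 1.0 / (prior_prec + data_prec)
    post_mean = post_var * (prior_prec * prior_mean + data_prec * data_mean)
    return post_mean, np.sqrt(post_var)

data_mean, data_se = 12.0, 3.0   # hypothetical data-only flux estimate

# Priors ordered from diffuse to informative: (mean, SD), all hypothetical.
priors = {
    "diffuse": (0.0, 1000.0),
    "moderate": (10.0, 5.0),
    "informative": (10.0, 1.5),
}

results = {name: normal_posterior(m, s, data_mean, data_se)
           for name, (m, s) in priors.items()}

for name, (mean, sd) in results.items():
    print(f"{name:12s} posterior mean = {mean:6.2f}, SD = {sd:5.2f}")
```

If the posterior mean moves substantially across plausible priors, that is worth stating explicitly next to the table.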

FAQ 3: My MCMC sampler for the 13C Bayesian model is mixing poorly or not converging.

- Issue: High autocorrelation, low effective sample size (ESS), or divergent transitions in Hamiltonian Monte Carlo (HMC) samplers like Stan.

- Diagnosis: Poor mixing is common in high-dimensional, correlated parameter spaces like metabolic networks.

- Solution Protocol:

- Reparameterize: Use a non-centered parameterization for hierarchical components. For fluxes, consider working in a transformed space (e.g., log-ratio).

- Increase Adaptation: Allow more warm-up/adaptation iterations for the sampler to learn the optimal step size and covariance matrix.

- Check Model Specification: Ensure identifiability of fluxes. Non-identifiable parameters will never converge. Use 13C labeling data adequacy checks.

- Visualize Diagnostics: Use trace plots, Gelman-Rubin statistics (R̂), and monitor the Bayesian fraction of missing information (BFMI).
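To make the link between autocorrelation and effective sample size concrete, here is a simple estimator that sums autocorrelations until the first non-positive lag. It is a teaching sketch, not the rank-normalized estimator used by Stan or ArviZ, and the chains are simulated rather than taken from a flux model.

```python
import numpy as np

def effective_sample_size(draws):
    """ESS = N / (1 + 2 * sum of positive-lag autocorrelations), truncated at the first non-positive lag."""
    x = np.asarray(draws, dtype=float)
    n = x.size
    x = x - x.mean()
    acov = np.correlate(x, x, mode="full")[n - 1:] / n   # autocovariance at lags 0..n-1
    rho = acov / acov[0]
    tau = 1.0
    for lag in range(1, n):
        if rho[lag] <= 0:
            break
        tau += 2.0 * rho[lag]
    return n / tau

rng = np.random.default_rng(1)
n = 4000

iid = rng.normal(size=n)          # ideal sampler: independent draws

ar = np.empty(n)                  # sticky sampler: AR(1) with coefficient 0.95
ar[0] = rng.normal()
for t in range(1, n):
    ar[t] = 0.95 * ar[t - 1] + rng.normal()

ess_iid = effective_sample_size(iid)
ess_ar = effective_sample_size(ar)
print(f"ESS iid = {ess_iid:.0f}, ESS autocorrelated = {ess_ar:.0f}")
```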

FAQ 4: How do I perform model selection between rival 13C MFA network topologies using Bayesian methods?

- Issue: Need to compare models (e.g., with/without anaplerotic reactions) beyond simple goodness-of-fit.

- Diagnosis: Frequentist methods use likelihood ratio tests with p-values. Bayesian methods compare models via marginal likelihoods (Bayes Factors) or information criteria.

- Solution Protocol:

- Calculate Bayes Factors (BF): Compute the marginal likelihood (evidence) for each model (M1, M2). BF₁₂ = P(D|M1) / P(D|M2). This often requires specialized algorithms (e.g., bridge sampling).

- Use Information Criteria: Compute the Widely Applicable Information Criterion (WAIC) or Leave-One-Out Cross-Validation (LOO-CV) for each model. The model with lower WAIC or higher LOO-CV elpd is preferred.

- Protocol: Fit each candidate model with the same data and prior justification. Use a stable function (e.g., loo() in the R loo package) to compute and compare criteria. Report the difference in elpd along with its standard error.
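The reported difference and its standard error are computed from pointwise elpd contributions. The sketch below uses a paired formula, the square root of n times the sample variance of the pointwise differences. The pointwise values are hypothetical stand-ins for what a LOO or WAIC routine would return per measurement.

```python
import numpy as np

def elpd_difference(pointwise_a, pointwise_b):
    """Sum of pointwise elpd differences (A minus B) and its paired standard error."""
    a = np.asarray(pointwise_a, dtype=float)
    b = np.asarray(pointwise_b, dtype=float)
    diff = a - b
    elpd_diff = diff.sum()
    se_diff = np.sqrt(diff.size * diff.var(ddof=1))
    return float(elpd_diff), float(se_diff)

# Hypothetical pointwise elpd values for four measurements under two models.
elpd_a = [-1.0, -1.2, -0.8, -1.1]
elpd_b = [-1.3, -1.2, -1.0, -1.6]

elpd_diff, se_diff = elpd_difference(elpd_a, elpd_b)
print(f"elpd_diff = {elpd_diff:.2f} (SE {se_diff:.2f})")
```

A difference that is only one or two standard errors from zero is weak grounds for preferring one topology over the other.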

The Scientist's Toolkit: Research Reagent Solutions for 13C MFA

| Item | Function in 13C MFA Experiment |
|---|---|
| [1-13C]Glucose / [U-13C]Glucose | Tracer substrate. The pattern of 13C labeling in downstream metabolites provides the data to infer intracellular flux distributions. |
| Quenching Solution (e.g., -40°C Methanol) | Rapidly halts metabolism at the precise experimental timepoint to capture metabolic state. |
| Derivatization Agent (e.g., MSTFA) | Chemically modifies metabolites (e.g., organic acids, amino acids) for analysis by Gas Chromatography (GC). |
| Internal Standard (e.g., 13C-labeled Succinate) | Added uniformly before extraction to correct for losses during sample processing and quantify absolute concentrations. |
| Ion Chromatography or GC Column | Physically separates the complex mixture of cellular metabolites prior to mass spectrometry. |
| High-Resolution Mass Spectrometer (GC-MS/LC-MS) | Measures the mass isotopomer distribution (MID) of fragments from each metabolite, which is the primary data for flux calculation. |
| Flux Estimation Software (e.g., INCA, 13CFLUX2, BayesFlux) | Performs the computational fitting of the metabolic network model to the measured MIDs, outputting the estimated flux map. |

Troubleshooting Guides & FAQs

FAQ 1: My MCMC sampler for my 13C MFA model is converging very slowly or not at all. What should I check?

- Answer: Slow or non-convergence in Markov Chain Monte Carlo (MCMC) sampling for Metabolic Flux Analysis (MFA) is often related to prior specification or model parametrization.

- Check Your Priors: Improper or overly diffuse priors can lead to poor sampling in high-dimensional parameter spaces. Re-evaluate your prior distributions for fluxes (e.g., uptake/secretion rates). Use informative priors based on literature or pilot experiments where possible to constrain the sampling space. A weakly informative prior is often better than a completely non-informative one for 13C MFA.

- Reparametrize Your Model: Consider using a non-centered parametrization for hierarchical components, or transforming constrained parameters (e.g., fluxes that must be positive) using logarithms to improve sampler efficiency.

- Diagnose with Multiple Chains: Always run multiple (≥4) MCMC chains from dispersed starting points. Use the Gelman-Rubin diagnostic (R-hat); values should be ≤ 1.05 for all parameters.

FAQ 2: How do I choose between different 13C MFA network models (e.g., with vs. without a futile cycle) using Bayesian methods?

- Answer: Bayesian model selection provides a principled framework for this via the Bayes Factor or through computation of the Posterior Model Probability.

- Calculate the Marginal Likelihood (Evidence): For each candidate model (Model A, Model B), compute the marginal likelihood, which integrates the likelihood over the prior parameter space. This penalizes model complexity automatically.

- Compute Bayes Factors: The Bayes Factor (BF) for Model A against Model B is the ratio of their marginal likelihoods. A BF > 10 provides strong evidence for Model A. For 13C MFA, this can decisively compare alternative pathway topologies.

- Protocol: Sample from the posterior of each model using an MCMC method (e.g., NUTS). Use methods like thermodynamic integration or bridge sampling to estimate the marginal likelihood from the posterior samples. Compare the estimated log-marginal-likelihoods.

FAQ 3: The posterior distributions for some fluxes are extremely wide or bimodal. What does this mean and how should I proceed?

- Answer: Wide posteriors indicate low information content in the 13C labeling data for those specific fluxes, often due to network redundancy. Bimodality suggests two distinct flux solutions are equally consistent with the data.

- Interpretation: This is a feature, not a bug, of Bayesian analysis. It honestly reflects the uncertainty or identifiability issues within the metabolic network.

- Action:

- Incorporate Additional Data: Introduce new experimental constraints (e.g., enzyme activity measurements) as new likelihood terms to inform the ambiguous fluxes.

- Tighten Informative Priors: If external knowledge justifies it, apply more precise priors.

- Report Fully: Always report the full posterior distribution (e.g., using 95% credible intervals) rather than just a point estimate to communicate this uncertainty.

FAQ 4: How do I formally incorporate data from other omics layers (e.g., proteomics) as constraints in my Bayesian 13C MFA?

- Answer: This is a key strength of the Bayesian framework. External data can be incorporated either through the likelihood or the prior.

- As a Likelihood: If you have a quantitative mechanistic model linking proteomics data to flux (e.g., via enzyme kinetics), use it to construct an additional likelihood function.

- As a Prior (More Common): Use the external data to construct an informative prior distribution. For example, measured enzyme abundance can define the mean and variance of a Gaussian prior on its associated maximal flux.

- Protocol: Let v be the flux vector. Your prior becomes P(v | Proteomic Data). Your overall posterior is then: P(v | 13C Data, Proteomic Data) ∝ P(13C Data | v) * P(v | Proteomic Data).

Table 1: Hypothetical Results from Bayesian Model Selection for Two Alternative Glycolytic Pathways in a Cancer Cell Line. Marginal likelihoods estimated via bridge sampling from 4 MCMC chains of 50,000 draws each.

| Model Hypothesis | Log Marginal Likelihood (ln Z) | Log Bayes Factor (ln BF vs. Model B) | Preferred Model? | Key Implication |
|---|---|---|---|---|
| Model A: Standard Glycolysis | -1250.2 | 15.8 | Yes (Strong Evidence) | Data strongly supports canonical pathway. |
| Model B: With Unusual Bypass | -1266.0 | 0.0 | No | Alternative pathway is less probable. |
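As a worked check of the hypothetical values in this table, the difference of the two log marginal likelihoods is a quantity on the log scale, and exponentiating it gives the Bayes factor itself. The posterior probability line assumes equal prior model probabilities, which the table does not state.

$$\ln BF_{AB} = \ln Z_A - \ln Z_B = -1250.2 - (-1266.0) = 15.8, \qquad BF_{AB} = e^{15.8} \approx 7.3 \times 10^{6}$$

$$p(M_A \mid Data) = \frac{1}{1 + e^{-15.8}} \approx 1 - 1.4 \times 10^{-7}$$

A value of this size sits far above the BF > 10 threshold quoted earlier for strong evidence.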

Table 2: Impact of Prior Choice on Posterior Flux Estimates for Glucose Uptake (v_glc).

| Prior Type | Prior Parameters (Normal Dist.) | Posterior Mean (µmol/gDW/h) | 95% Credible Interval | Posterior SD |
|---|---|---|---|---|
| Non-informative (Weak) | Mean=0, SD=1000 | 5.8 | [1.1, 10.5] | 2.4 |
| Informative (Literature) | Mean=6.5, SD=1.5 | 6.2 | [3.8, 8.1] | 1.1 |

Experimental Protocol: Bayesian 13C MFA Workflow

Title: Protocol for Bayesian 13C Metabolic Flux Analysis with Model Selection.

1. Experimental Design & Tracer Input:

- Grow cells in a defined medium with a chosen 13C-labeled substrate (e.g., [1,2-13C]glucose).

- Harvest cells at metabolic steady-state for extracellular flux measurements and intracellular metabolites for LC-MS analysis of isotopic labeling patterns (mass isotopomer distributions, MIDs).

2. Model Construction & Prior Definition:

- Define candidate metabolic network models (stoichiometric matrices) in a modeling tool (e.g., COBRApy, Matlab).

- For each model, specify prior probability distributions P(θ) for all free flux parameters θ. Use informative priors for measurable exchange fluxes (e.g., based on uptake/secretion rates).

3. Likelihood Function Formulation:

- Define the likelihood function P(D | θ). This models the probability of observing the experimental data D (MIDs, extracellular fluxes) given fluxes θ.

- Typically assumes residuals between simulated and measured MIDs follow a multivariate normal distribution, accounting for measured standard deviations.

4. Posterior Sampling via MCMC:

- Use a sampler like Hamiltonian Monte Carlo (HMC) or the No-U-Turn Sampler (NUTS) to draw samples from the posterior P(θ | D) ∝ P(D | θ)P(θ).

- Run: 4 independent chains with 50,000 draws each, discarding the first 50% as warm-up/tuning.

- Check Convergence: Ensure R-hat ≤ 1.05 and high effective sample size (ESS) for all key parameters.

5. Model Selection & Inference:

- For each candidate model, estimate its marginal likelihood using the posterior samples (e.g., via bridge sampling).

- Compute Bayes Factors to select the most probable model given the data.

- For the selected model, analyze the posterior distributions of fluxes: report posterior means, medians, and 95% credible intervals to represent flux estimates with uncertainty.
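The likelihood in step 3 of this protocol can be sketched as an independent Gaussian log-likelihood over MID entries. This assumes a diagonal covariance built from the measured standard deviations. The MID vectors are hypothetical, and in practice `simulated` would come from a labeling simulator evaluated at the fluxes θ.

```python
import numpy as np

def gaussian_log_likelihood(measured, simulated, sd):
    """Log-likelihood of measured MIDs given simulated MIDs with independent Gaussian errors."""
    measured = np.asarray(measured, dtype=float)
    simulated = np.asarray(simulated, dtype=float)
    sd = np.asarray(sd, dtype=float)
    resid = (measured - simulated) / sd
    return float(
        -0.5 * np.sum(resid**2)
        - np.sum(np.log(sd))
        - 0.5 * measured.size * np.log(2 * np.pi)
    )

measured = np.array([0.55, 0.25, 0.15, 0.05])   # hypothetical measured MID
sd = np.full(4, 0.01)                           # hypothetical measurement SDs

sim_good = measured.copy()                       # flux map that reproduces the data
sim_bad = np.array([0.50, 0.30, 0.15, 0.05])     # flux map that misses two entries by 5 SD

ll_good = gaussian_log_likelihood(measured, sim_good, sd)
ll_bad = gaussian_log_likelihood(measured, sim_bad, sd)
print(ll_good, ll_bad)
```

Because MID fractions for one fragment sum to one, their errors are not truly independent. A full covariance matrix or dropping one entry per fragment are common ways to handle this.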

Visualizations

Diagram 1: Bayesian 13C MFA Workflow

Diagram 2: Bayesian Model Selection for 13C MFA

The Scientist's Toolkit: Key Research Reagent Solutions

Table 3: Essential Materials for Bayesian 13C MFA Experiments

| Item / Reagent | Function / Role in Bayesian 13C MFA |
|---|---|
| U-13C or 1,2-13C Labeled Substrates | Tracer compounds (e.g., glucose, glutamine) that introduce the isotopic label into metabolism, generating the mass isotopomer distribution (MID) data. |
| LC-MS/MS System | High-resolution mass spectrometer coupled to liquid chromatography for precise measurement of intracellular metabolite MIDs. |
| Computational Environment (Python/R/Stan) | Platform for coding the metabolic network model, defining priors/likelihoods, and performing MCMC sampling (e.g., using PyMC, Stan). |
| MCMC Diagnostics Software | Tools (e.g., ArviZ, CODA) to assess chain convergence (R-hat, ESS) and visualize posterior distributions, critical for reliable inference. |
| Stoichiometric Model Database | Resource (e.g., BiGG Models, MetaCyc) for constructing and validating the metabolic network reaction list and atom transitions. |
| Bridge Sampling Algorithm Code | Implementation for computing the marginal likelihood (evidence) from posterior samples, enabling formal Bayesian model comparison. |

Troubleshooting Guides & FAQs

Q1: During 13C-MFA model selection, my Markov Chain Monte Carlo (MCMC) sampler has low effective sample sizes (ESS) and high R-hat statistics. What is the issue and how can I fix it? A: This indicates poor convergence and inefficient sampling, often due to strong posterior correlations or poorly specified priors.

- Solution: Reparameterize your model to reduce parameter correlations (e.g., use flux ratios instead of absolute fluxes). Use a non-centered parameterization. Increase adapt_delta in Hamiltonian Monte Carlo (HMC) samplers (e.g., to 0.95 or 0.99) to avoid divergent transitions. Run chains for more iterations after a longer warm-up period.

- Protocol: 1) Visualize pairwise parameter plots to identify correlations. 2) Implement the reparameterization. 3) Re-run sampling with adapt_delta=0.95. 4) Recalculate ESS and R-hat using monitor() functions.

Q2: How do I quantitatively incorporate prior knowledge from literature or previous experiments into my Bayesian 13C-MFA model? A: Priors are specified as probability distributions over model parameters (e.g., fluxes, enzyme activities).

- Solution: For a reported flux v with mean μ and standard error σ, encode it as a Normal(μ, σ) or a more conservative Student-t prior. For a known inequality constraint (e.g., flux > 0), use a truncated Normal or a Log-Normal prior. Use weakly informative priors (e.g., Normal(0,10)) for unknowns to regularize estimates without imposing strong beliefs.

- Protocol: 1) Gather prior data. 2) Choose appropriate distribution family. 3) Fit the distribution to the prior data (e.g., match moments). 4) Specify prior in the model code: v ~ normal(mu, sigma);
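For the moment-matching step with a strictly positive flux, a Log-Normal prior can be fitted to a reported mean and standard deviation in closed form. The sketch below uses the standard Log-Normal moment relations. The reported values (6.5 and 1.5) are hypothetical, and the function name is chosen for this example only.

```python
import math

def lognormal_params_from_moments(mean, sd):
    """Return (mu, sigma) of the underlying normal so the Log-Normal has the given mean and SD."""
    sigma2 = math.log(1.0 + (sd / mean) ** 2)
    mu = math.log(mean) - 0.5 * sigma2
    return mu, math.sqrt(sigma2)

# Hypothetical literature value: flux of 6.5 with standard deviation 1.5.
mu_log, sigma_log = lognormal_params_from_moments(6.5, 1.5)

# Round trip: recover the mean and SD implied by the fitted parameters.
mean_back = math.exp(mu_log + 0.5 * sigma_log**2)
sd_back = mean_back * math.sqrt(math.exp(sigma_log**2) - 1.0)

print(f"mu = {mu_log:.4f}, sigma = {sigma_log:.4f}")
print(f"implied mean = {mean_back:.3f}, implied SD = {sd_back:.3f}")
```

The resulting pair would then be used as the parameters of a lognormal prior statement in the model code.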

Q3: When comparing two non-nested metabolic network topologies (Model A vs. Model B), which Bayesian model comparison metric should I use, and how do I interpret the results? A: Use Widely Applicable Information Criterion (WAIC) or Leave-One-Out Cross-Validation (LOO-CV). Unlike AIC/BIC, these are fully Bayesian and can handle non-nested, singular models.

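- Solution: Compute WAIC or LOO-CV for each candidate model using posterior samples. On the deviance scale, the model with the lower WAIC/LOO-CV score (equivalently, the higher elpd) has better predictive accuracy. A difference (Δ) > 10 is considered substantial, provided it is also large relative to its standard error.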

- Protocol: 1) Fit each model, obtaining posterior samples. 2) Compute log-likelihood for each sample. 3) Use loo() or waic() functions (e.g., in the loo R package) on the log-likelihood matrix. 4) Compare elpd_diff and its standard error.
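To show what such functions compute from the log-likelihood matrix, here is a minimal WAIC sketch in NumPy. It assumes a matrix of shape (posterior draws, observations) and uses the variance-based effective parameter count. The matrix is randomly generated for illustration only, and for real analyses the dedicated libraries remain the safer choice because they add diagnostics.

```python
import numpy as np

def waic(log_lik):
    """Return (elpd_waic, p_waic, waic on the deviance scale) from a (n_draws, n_obs) matrix."""
    log_lik = np.asarray(log_lik, dtype=float)
    n_draws = log_lik.shape[0]
    peak = log_lik.max(axis=0)
    # Log pointwise predictive density: log of the mean likelihood over draws, computed stably.
    lppd = peak + np.log(np.exp(log_lik - peak).sum(axis=0)) - np.log(n_draws)
    # Effective number of parameters: variance of the log-likelihood over draws.
    p_waic = log_lik.var(axis=0, ddof=1)
    elpd = lppd - p_waic
    return float(elpd.sum()), float(p_waic.sum()), float(-2.0 * elpd.sum())

rng = np.random.default_rng(7)

# Hypothetical log-likelihood matrix: 1000 posterior draws, 30 labeling measurements.
log_lik = -1.0 + 0.2 * rng.normal(size=(1000, 30))

elpd_waic, p_waic, waic_value = waic(log_lik)
print(f"elpd_waic = {elpd_waic:.1f}, p_waic = {p_waic:.2f}, WAIC = {waic_value:.1f}")
```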

Q4: My posterior distributions for key metabolic fluxes are multimodal. What does this mean, and how should I proceed with model selection? A: Multimodality suggests the data supports multiple, distinct flux solutions (e.g., different metabolic phases). This is a critical insight into biological uncertainty that point estimates would miss.

- Solution: Do not force a unimodal solution. Report the full multimodal posterior. Use model comparison metrics (WAIC/LOO) to check if a more complex model (e.g., mixture model) that explicitly accounts for multimodality is justified compared to a simpler one.

- Protocol: 1) Plot kernel density estimates for all fluxes. 2) If multimodality is suspected, run multiple MCMC chains from dispersed starting points. 3) Check trace plots and posterior density plots for multiple modes. 4) Consider a hierarchical or mixture model.

Table 1: Comparison of Model Selection Criteria for Non-Nested 13C-MFA Models

| Model Description | WAIC Score | SE(WAIC) | LOO-CV Score | SE(LOO-CV) | p_LOO (Effective Parameters) | Key Advantage Demonstrated |
|---|---|---|---|---|---|---|
| Core Network (No Anaplerosis) | 412.3 | 15.7 | 415.1 | 16.2 | 8.5 | Baseline |
| With GLYC Loop | 388.5 | 12.1 | 389.9 | 12.8 | 10.2 | Comparing Non-Nested Models |
| With PEPCK Flux (Lognormal Prior) | 395.2 | 14.5 | 397.8 | 15.0 | 9.8 | Incorporating Prior Knowledge |
| With Mitochondrial Export | 405.6 | 15.0 | 408.3 | 15.5 | 9.1 | Quantifying Uncertainty (Wider CrIs) |

WAIC/Widely Applicable Information Criterion; LOO-CV/Leave-One-Out Cross-Validation; SE/Standard Error; CrIs/Credible Intervals; GLYC/Glycolytic; PEPCK/Phosphoenolpyruvate carboxykinase.

Table 2: Impact of Prior Strength on Posterior Flux Estimate (vTCA)

| Prior Distribution | Prior Mean (μmol/gDCW/h) | Prior 95% Interval | Posterior Mean | Posterior 95% Credible Interval | Interval Width |
|---|---|---|---|---|---|
| Weakly Informative (Normal) | 100 | 60.8 – 139.2 | 145.3 | 128.5 – 162.1 | 33.6 |
| Informative (from Literature) | 155 | 138.2 – 171.8 | 153.8 | 144.2 – 163.4 | 19.2 |
| Strongly Informative (Misspecified) | 200 | 184.2 – 215.8 | 178.6 | 169.9 – 187.3 | 17.4 |

vTCA/Tricarboxylic Acid Cycle flux; DCW/Dry Cell Weight. Demonstrates how prior knowledge updates to a posterior, and the risk of a strongly incorrect prior.

Experimental Protocol: Bayesian 13C-MFA Model Selection Workflow

1. Model Formulation & Prior Specification:

- Define candidate network stoichiometries (S matrices).

- For each model, specify prior probability distributions p(θ|M) for all free parameters θ (net fluxes, exchange fluxes, measurement errors). Use literature data for informative priors where available, else use weakly informative defaults (e.g., Half-Normal for positive fluxes).

- Define the likelihood function p(y|θ, M) relating parameters to observed 13C labeling (MDV) and extracellular flux data y.

2. Posterior Sampling via MCMC:

- Implement model in a probabilistic programming language (Stan, PyMC).

- Run 4 independent MCMC chains for 10,000 iterations each (warm-up/adaptive phase: 5,000).

- Monitor convergence: Ensure R-hat < 1.05 and ESS > 400 for key parameters.

3. Model Comparison & Diagnostics:

- Compute pointwise log-likelihood from posterior samples.

- Calculate WAIC and LOO-CV using the loo R package. Compare elpd_diff.

- Perform posterior predictive checks: Simulate new data y_rep from the posterior and compare to observed y.

4. Inference & Reporting:

- Report the full posterior distribution (median, 95% credible interval) for all parameters of interest.

- Visualize multimodal posteriors if present.

- Justify the final selected model using the quantitative comparison metrics (WAIC/LOO).

The Scientist's Toolkit: Key Research Reagent Solutions

| Item/Category | Function in Bayesian 13C-MFA Research |
|---|---|
| Probabilistic Programming Framework (Stan/PyMC) | Core engine for specifying Bayesian models and performing Hamiltonian Monte Carlo (HMC) sampling of the posterior distribution. |
| 13C-Labeled Substrate (e.g., [U-13C] Glucose) | Tracer that generates measurable isotopic labeling patterns (MDVs) in intracellular metabolites, the primary data for flux estimation. |
| GC- or LC-MS Instrumentation | Measures the mass isotopomer distribution (MID) of proteinogenic amino acids or intracellular metabolites, providing the data for likelihood computation. |
| Computational Environment (R/Python) | For data preprocessing (correcting natural isotopes), integrating MS data with extracellular rates, and post-processing MCMC outputs. |
| Model Comparison Library (loo in R, ArviZ in Python) | Computes WAIC, LOO-CV, and related diagnostics for robust comparison of non-nested, Bayesian models. |
| High-Performance Computing (HPC) Cluster | Often required for computationally intensive MCMC sampling of large-scale metabolic networks with many free parameters. |

Visualizations

Bayesian 13C-MFA Inference and Model Selection Workflow

Framework for Comparing Non-Nested 13C-MFA Models

Frequently Asked Questions (FAQs)

Q1: During the posterior probability calculation for model comparison, my Markov Chain Monte Carlo (MCMC) sampler fails to converge. What could be the issue? A1: Non-convergence in MCMC sampling for Bayesian 13C Metabolic Flux Analysis (MFA) is often due to:

- Poorly informed priors: Ensure your flux prior distributions (e.g., for reversible reactions) are biologically plausible and not too diffuse.

- High dimensionality: Complex network models with many free fluxes can lead to slow mixing. Consider using an adaptive MCMC algorithm or re-parameterizing the problem.

- Model misspecification: The network structure itself may be incorrect, preventing the sampler from finding a consistent parameter space. Re-evaluate network reaction list and atom transitions.

Q2: How do I handle the issue of "label scrambling" or "isotopic isomerism" when calculating the marginal likelihood for model selection? A2: Label scrambling in metabolites like glutamate complicates the mapping between fluxes and measured mass isotopomer distributions (MIDs).

- Solution: Explicitly model the isotopic isomerism in your network atom transition model. Use computational tools like `INCA` or `13CFLUX2` that account for symmetric molecules. Ensure your experimental data has sufficient labeling measurements to resolve these symmetries.

Q3: The Bayes Factor between my top candidate models is very low (<3), indicating inconclusive evidence. How can I improve discrimination? A3: Low Bayes Factors suggest the experimental data is insufficient to strongly distinguish between the competing network hypotheses.

- Strategy 1: Optimize your tracer experiment design. Use prior information to simulate MIDs for candidate models and design a tracer (e.g., [1,2-13C]glucose + [U-13C]glutamine) that maximizes the divergence in predicted labels.

- Strategy 2: Increase the precision and breadth of your mass spectrometry measurements, targeting key fragment ions that are predicted to differ most between models.

Q4: When implementing the Reversible Jump MCMC (RJMCMC) for model space exploration, the algorithm gets stuck on a single network topology. What should I do? A4: This indicates low acceptance probability for "jump" proposals between models.

- Tune proposal distributions: Carefully design the proposal mechanism for adding/removing reactions. The new flux parameter should be proposed from a distribution informed by its potential physiological range.

- Use simulated annealing: Introduce a temperature parameter initially to allow wider exploration of the model space, then gradually cool it.

- Check priors: The prior probabilities on models (P(M)) should not overwhelmingly favor the current model.

Troubleshooting Guides

Issue: High Computational Cost of Marginal Likelihood Calculation

- Symptoms: Model evaluation takes days/weeks; computational resources are exhausted.

- Diagnosis: Direct integration over all parameters is intractable for large models. Using the Harmonic Mean estimator from MCMC samples is unstable.

- Resolution: Implement a nested sampling algorithm (e.g., `MultiNest`) or use the Thermodynamic Integration method. These provide more robust marginal likelihood estimates at a manageable, though still significant, computational cost. Parallelize computations on high-performance clusters.

Issue: Poor Predictive Performance of the Selected Model

- Symptoms: The model with the highest posterior probability fails to predict validation data from a different tracer experiment.

- Diagnosis: This points to overfitting or an incomplete model space. The selected model may fit noise or the true network may not be among the candidates.

- Resolution:

- Model Checking: Use posterior predictive checks. Simulate data from the fitted model and compare key statistics (e.g., MID correlations) to the actual data.

- Expand Model Space: Reconsider biological constraints and include alternative plausible pathways you may have omitted.

- Regularization: Use sparsity-inducing priors (e.g., spike-and-slab) on fluxes to prefer simpler networks, reducing overfitting.

Experimental Protocols

Protocol 1: Generating Data for Bayesian 13C MFA Model Selection

Objective: To produce high-quality Mass Isotopomer Distribution (MID) data from a cell culture experiment for subsequent Bayesian model inference and selection.

Materials: See "Research Reagent Solutions" table.

Method:

- Tracer Design: Based on preliminary network hypotheses, select one or more 13C-labeled substrates (e.g., [U-13C]glucose).

- Cell Culture: Seed cells in biological replicates. At mid-log growth phase, replace media with media containing the chosen tracer(s).

- Quenching & Extraction: At isotopic steady state (typically 24-48 hours post-labeling), rapidly quench metabolism (using cold methanol/saline). Perform intracellular metabolite extraction (e.g., with 50% acetonitrile/water).

- Mass Spectrometry:

- Derivatize if necessary (e.g., for GC-MS).

- Analyze key metabolite pools (e.g., alanine, lactate, TCA cycle intermediates) via LC-MS/MS or GC-MS.

- Record mass isotopomer abundances, correcting for natural isotope abundances using a software tool.

- Data Curation: Compile corrected MIDs and extracellular exchange flux rates (uptake/secretion) into an input data file (e.g., `.csv` format) for modeling software.

Protocol 2: Bayesian Model Selection Workflow Implementation

Objective: To computationally compare multiple metabolic network models and select the one with the strongest evidence from the data.

Software Prerequisites: Python (PyMC3, ArviZ, cobrapy) or MATLAB (COBRA Toolbox with custom scripts), INCA.

Method:

- Model Specification:

- Define 2-5 candidate metabolic network models (`.xml` or script format). They should differ in key alternative reactions (e.g., PEP carboxykinase vs. malic enzyme).

- For each model, specify biologically informed prior distributions for all free net and exchange fluxes (e.g., truncated Normal or Uniform distributions).

- Set a prior probability over the set of models (often uniform).

- Inference & Calculation:

- For each model M_k, run an MCMC sampler (e.g., NUTS) to sample from the posterior distribution of fluxes P(θ | Data, M_k).

- Monitor convergence with $\hat{R}$ statistics and effective sample size.

- Compute the marginal likelihood P(Data | M_k) for each model using nested sampling or thermodynamic integration.

- Model Selection:

- Calculate posterior model probabilities: P(M_k | Data) ∝ P(Data | M_k) * P(M_k).

- Compute Bayes Factors: BF_ij = P(Data | M_i) / P(Data | M_j).

- Select the model with the highest P(M_k | Data), considering BF thresholds (e.g., BF > 10 is strong evidence).
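The model selection step can be sketched as follows, assuming log marginal likelihoods have already been estimated for each candidate. The function names and the values in `log_evidence` are hypothetical. The normalization is done in log space because marginal likelihoods for labeling data are typically far too small to exponentiate directly.

```python
import math


def posterior_model_probs(log_evidence, log_prior=None):
    """Normalize log P(Data | M_k) + log P(M_k) into posterior model probabilities."""
    names = list(log_evidence)
    if log_prior is None:
        # Uniform prior over models; the constant cancels on normalization.
        log_prior = {k: 0.0 for k in names}
    log_post = {k: log_evidence[k] + log_prior[k] for k in names}
    m = max(log_post.values())
    log_norm = m + math.log(sum(math.exp(v - m) for v in log_post.values()))
    return {k: math.exp(v - log_norm) for k, v in log_post.items()}


def log_bayes_factor(log_evidence, i, j):
    """log BF_ij = log P(Data | M_i) - log P(Data | M_j)."""
    return log_evidence[i] - log_evidence[j]


# Hypothetical log marginal likelihoods for three candidate networks.
log_evidence = {"M1": -310.2, "M2": -306.9, "M3": -315.7}

probs = posterior_model_probs(log_evidence)
best_model = max(probs, key=probs.get)
bf_21 = math.exp(log_bayes_factor(log_evidence, "M2", "M1"))
```

With these illustrative numbers, M2 carries most of the posterior mass and BF_21 exceeds the BF > 10 threshold mentioned above.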

Data Presentation

Table 1: Example Bayes Factor Interpretation for Model Selection

| Bayes Factor (BF₁₂) | Evidence for Model M₁ over Model M₂ |

|---|---|

| 1 to 3 | Anecdotal / Not worth more than a bare mention |

| 3 to 10 | Moderate |

| 10 to 30 | Strong |

| 30 to 100 | Very Strong |

| > 100 | Decisive |

Table 2: Key Data Requirements for Bayesian 13C MFA Model Selection

| Data Type | Description | Typical Measurement Method | Purpose in Workflow |

|---|---|---|---|

| Mass Isotopomer Distributions (MIDs) | Fractional abundances of labeled forms of intracellular metabolites. | LC-MS or GC-MS | Likelihood function core; drives discrimination between models. |

| Extracellular Flux Rates | Net uptake (e.g., glucose) and secretion (e.g., lactate) rates. | Bioreactor probes, NMR/HPLC | Constraints for flux balance; inform prior distributions. |

| Biomass Composition | Precursor requirements for cell growth. | Literature-based or measured. | Defines the biomass reaction, a key sink for fluxes. |

Visualizations

Diagram 1: Bayesian MFA Model Selection Workflow

Diagram 2: RJMCMC Model Space Exploration

The Scientist's Toolkit

Table 3: Research Reagent Solutions for Bayesian 13C MFA Experiments

| Item | Function in Workflow | Example/Notes |

|---|---|---|

| 13C-Labeled Tracer Substrates | Induce measurable isotopic patterns in metabolites to infer fluxes. | [U-13C]Glucose, [1,2-13C]Glucose, [U-13C]Glutamine. Purity >99%. |

| Quenching Solution | Instantly halt metabolic activity to capture a snapshot of MIDs. | Cold (-40°C to -80°C) 60% aqueous methanol buffered with HEPES or ammonium bicarbonate. |

| Metabolite Extraction Solvent | Efficiently lyse cells and extract polar intracellular metabolites. | 50% Acetonitrile in water, or Methanol/Chloroform/Water mixtures. |

| Derivatization Reagent (for GC-MS) | Increase metabolite volatility and detection for gas chromatography. | N-methyl-N-(tert-butyldimethylsilyl) trifluoroacetamide (MTBSTFA) or Methoxyamine hydrochloride + N,O-Bis(trimethylsilyl)trifluoroacetamide (BSTFA). |

| Internal Standards (IS) | Correct for sample loss and instrument variability during MS. | Stable Isotope Labeled IS (e.g., 13C,15N-labeled amino acid mix) for absolute quantification. |

| Computational Software | Perform network modeling, Bayesian inference, and model comparison. | INCA (GUI), 13CFLUX2 (highly automated), PyMC3/Stan (custom flexible inference), MultiNest (marginal likelihood). |

Implementing Bayesian Model Selection: A Step-by-Step Workflow for 13C MFA

FAQs and Troubleshooting Guides

Q1: What exactly is a "candidate model space" in the context of Bayesian 13C MFA?

- A: The candidate model space is the set of all possible metabolic network structures you are considering to explain your isotopic labeling data. It encompasses variations in reaction reversibilities, active alternative pathways (e.g., PPP variants), and presence/absence of specific transport or futile cycles. Defining this space explicitly is the foundational step before Bayesian model selection can quantify the evidence for each candidate.

Q2: How do I know if my candidate model space is too large or too small?

- A:

- Too Large: Indicated by extremely long computation times for model evidence calculation and many models with negligible posterior probability (>90% of probability mass on <10% of models). Simplify by merging low-yield pathway variants or fixing well-established reaction directions based on literature.

- Too Small: Indicated by poor fit (high residuals) for all candidate models and consistently low model evidence values. The true network is likely not represented. Expand the space by including additional plausible pathways or mechanisms suggested by the residual analysis.


Q3: I'm getting "non-identifiable parameter" errors during flux estimation within my models. What does this mean for model space definition?

- A: This error suggests that within a specific candidate model, the available 13C data cannot uniquely determine all flux values. This is a critical diagnostic. You must refine your model space by either: 1) Constraining the non-identifiable fluxes based on prior knowledge (e.g., enzyme assays), or 2) Collapsing the model variants that create the non-identifiability (e.g., remove one of two parallel pathways that cannot be distinguished) and re-defining your candidate set.

Q4: How should I use prior literature or omics data to inform my candidate model space?

- A: Prior knowledge should guide the construction of plausible models, not replace the data-driven selection. Use transcriptomic or proteomic data to justify the inclusion/exclusion of specific isozymes or pathway variants in your candidate set. For example, low expression of a key enzyme might lead you to create a candidate model where that alternative pathway is inactive. Bayesian methods will then weigh the evidence for that model against others.

Experimental Protocol: Defining Model Space via Isotopic Tracer Design

This protocol outlines how to use targeted tracer experiments to probe specific uncertainties in the network, thereby defining the candidate model space.

- Identify Network Ambiguities: From genome-scale reconstruction, list all thermodynamically plausible alternatives for pathway segments (e.g., oxidative vs. non-oxidative PPP, mitochondrial vs. cytosolic NADH shuttles).

- Design Discriminatory Tracers: For each major ambiguity, design a tracer (e.g., [1,2-13C]glucose vs. [1,6-13C]glucose) whose labeling patterns in key metabolites (e.g., ribose-5-phosphate, alanine) are predicted to differ significantly between the alternative network structures.

- Perform Parallel Labeling Experiments: Culture cells in parallel with each discriminatory tracer under identical physiological conditions. Harvest cells at isotopic steady state.

- Measure Mass Isotopomer Distributions (MIDs): Use GC-MS or LC-MS to obtain MIDs for central carbon metabolites (e.g., PEP, succinate, ribose-5-phosphate, malate).

- Construct Candidate Models: Build a separate metabolic network model (.xml or similar) for each logically consistent combination of the ambiguous pathway states informed by step 1.

- Initial Screening: Fit each candidate model to the combined tracer dataset. Discard models that fail to converge or yield physically impossible fluxes (e.g., negative net flux for an irreversible reaction).

Data Presentation: Example Candidate Model Space for Central Metabolism

Table 1: Candidate Models Defined by Three Key Network Ambiguities.

| Model ID | PPP Route | Glycolytic Bypass (PK vs. PEPCK) | Malic Enzyme Activity | Description |

|---|---|---|---|---|

| M1 | Oxidative Only | Pyruvate Kinase (PK) | Inactive | Traditional textbook model. |

| M2 | Oxidative + Non-Oxidative | Pyruvate Kinase (PK) | Active (cytosolic) | Allows for ribose recycling. |

| M3 | Oxidative Only | PEP Carboxykinase (PEPCK) | Active (mitochondrial) | Anaplerotic/gluconeogenic orientation. |

| M4 | Oxidative + Non-Oxidative | PEP Carboxykinase (PEPCK) | Active (cytosolic) | High flexibility model for nucleotide synthesis. |

| M5 | Oxidative Only | Pyruvate Kinase (PK) | Active (mitochondrial) | Supports reductive metabolism. |

Mandatory Visualization

Diagram 1: Workflow for defining a candidate model space.

Diagram 2: Tracer discrimination of PPP routes informs model space.

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Reagents for 13C MFA Model Space Definition.

| Item | Function in Model Space Definition |

|---|---|

| [U-13C] or [1,2-13C] Glucose | Core isotopic tracers. Different labeling patterns help discriminate between major pathway fluxes. |

| 13C-Labeled Glutamine | Probes TCA cycle anaplerosis, glutaminolysis, and exchange fluxes. |

| Custom SILAC Media | Enables precise control over nutrient environment for consistent parallel labeling experiments. |

| Metabolite Extraction Solvents | Methanol/Water/Chloroform mixes for quenching metabolism and extracting intracellular metabolites. |

| Derivatization Reagents | MSTFA or similar for converting metabolites to volatile forms for GC-MS analysis. |

| Isotopic Standard Mix | A mix of unlabeled and uniformly labeled metabolite standards for MID calibration and quantification. |

| MFA Software | INCA, 13CFLUX2, or similar. Required to encode, simulate, and fit each candidate network model. |

| High-Resolution LC-MS or GC-MS System | Essential for accurate measurement of mass isotopomer distributions (MIDs). |

Troubleshooting Guide & FAQs for Bayesian 13C MFA Model Selection

Q1: My Markov Chain Monte Carlo (MCMC) sampler fails to converge when I include informative priors on exchange fluxes in my 13C Metabolic Flux Analysis (MFA). What are the likely causes and solutions?

A: This is often due to prior-data conflict or improper prior scaling. First, verify that your prior means are physiologically plausible. For exchange fluxes, log-normal or truncated normal distributions are often more appropriate than normal distributions to enforce positivity. Re-scale your prior variances; overly informative (narrow) priors can trap the sampler. A diagnostic step is to run the model with weakly informative priors (e.g., broad normal or uniform) to see if convergence improves, then systematically tighten them.

Q2: How do I choose between a Wishart and an LKJ prior for the covariance matrix of correlated model parameters (e.g., enzyme abundances influencing multiple fluxes)?

A: The choice hinges on interpretability and scaling. Use the Wishart prior if you have strong prior knowledge about the scale matrix (Ψ) and can set the degrees of freedom (ν) to reflect your confidence in it. For a more flexible and interpretable correlation matrix, use the LKJ prior. The LKJ(1) prior is a uniform distribution over correlation matrices. For moderate regularization towards zero correlation, use LKJ(2). For a stronger belief in independence, use LKJ(4). See Table 1 for comparison.

Table 1: Prior Distributions for Covariance/Correlation Matrices

| Prior | Key Parameter | Use Case | Advantage | Disadvantage |

|---|---|---|---|---|

| Wishart | Degrees of freedom (ν), Scale matrix (Ψ) | Known scale matrix from previous experiments. | Conjugate for the precision matrix of a multivariate normal (the inverse-Wishart plays this role for the covariance matrix). | Less intuitive; sensitive to scaling of Ψ. |

| LKJ | Shape parameter (η) | Regularizing correlation matrices between model parameters. | Direct control over correlation density; scale-invariant. | Only models the correlation matrix, not covariance. |

Q3: When implementing Bayesian model selection for rival metabolic network structures, my Bayes factors are extremely sensitive to the choice of prior width on shared parameters. How can I stabilize this?

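A: This sensitivity is closely related to the Jeffreys-Lindley paradox: as priors become more diffuse, the marginal likelihood is increasingly penalized, so the Bayes factor can be driven by prior width rather than by the data. To mitigate it: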

- Use objectively derived hyperpriors (e.g., based on previous, independent datasets) for the prior variance.

- Employ cross-validation or posterior predictive checks to calibrate prior width, rather than relying on arbitrary defaults.

- Consider using fractional Bayes factors or intrinsic priors which use a fraction of the data to update weakly informative priors, making the Bayes factor less sensitive to prior specification.

Q4: What are robust default weakly informative priors for key parameters in a 13C MFA Bayesian framework?

A: See Table 2 for commonly used default priors. These are designed to regularize estimates while letting the data dominate.

Table 2: Default Weakly Informative Priors for 13C MFA Parameters

| Parameter Type | Suggested Prior | Justification |

|---|---|---|

| Net Fluxes (v_net) | Normal(μ=0, σ=10) [after scaling] | Symmetric, allows both directions; broad variance. |

| Exchange Fluxes (v_exch) | LogNormal(μ=log(1), σ=2) | Ensures positivity; heavy tail accommodates uncertainty. |

| Measurement Precision (τ) | Gamma(α=0.001, β=0.001) on τ, or Half-Cauchy(0, 5) on the standard deviation σ = 1/√τ | Weakly informative on the precision or standard deviation scale. |

| Enzyme Capacity Constraint | TruncatedNormal(μ=constraint, σ=0.5*μ, lower=0) | Incorporates kinetic data while allowing uncertainty. |

Experimental Protocol: Eliciting Informative Priors from Enzyme Kinetic Data

Objective: To formulate an informative, data-driven prior distribution for a maximum reaction rate (Vmax) parameter in a Bayesian 13C MFA model.

Methodology:

- Data Collection: Gather in vitro enzyme activity assays (n ≥ 3) for the target enzyme under conditions mimicking the in vivo study (pH, temperature).

- Statistical Summary: Calculate the sample mean (μ_assay) and standard error (SE_assay) of the measured Vmax.

- Prior Formulation: Construct a Gamma prior distribution for the Vmax parameter in the metabolic model.

- Shape (α): Set α = (μ_assay / SE_assay)². This encodes the precision of the experimental data.

- Rate (β): Set β = μ_assay / (SE_assay)². With these choices the prior mean α/β equals μ_assay and the prior standard deviation √α/β equals SE_assay.

- Incorporation: Use this Gamma(α, β) as the prior for the corresponding Vmax node in the probabilistic graphical model (see Diagram 1). This prior will be updated by the 13C labeling data during inference.
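The moment-matching step in this protocol can be sketched in a few lines. The helper name `gamma_prior_from_assay` and the assay values are hypothetical; the only assumption is the shape/rate parameterization of the Gamma distribution, for which the mean is α/β and the variance is α/β².

```python
def gamma_prior_from_assay(values):
    """Moment-match a Gamma(shape=alpha, rate=beta) prior to replicate assay data."""
    n = len(values)
    mean = sum(values) / n
    variance = sum((v - mean) ** 2 for v in values) / (n - 1)
    se = (variance / n) ** 0.5           # standard error of the mean
    alpha = (mean / se) ** 2             # shape
    beta = mean / se ** 2                # rate
    return {"mean": mean, "se": se, "alpha": alpha, "beta": beta}


# Hypothetical in vitro Vmax measurements (arbitrary units), n = 4 replicates.
assay_vmax = [4.8, 5.3, 5.1, 4.9]
prior = gamma_prior_from_assay(assay_vmax)

prior_mean = prior["alpha"] / prior["beta"]        # recovers the assay mean
prior_sd = prior["alpha"] ** 0.5 / prior["beta"]   # recovers the assay standard error
```

Because in vitro activity rarely matches in vivo capacity exactly, it may be reasonable to inflate the standard error before matching, which lowers α and broadens the prior.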

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Reagents for 13C MFA & Prior Elicitation Experiments

| Item | Function in Context |

|---|---|

| U-13C Glucose | Tracer substrate; enables labeling of central carbon metabolites for flux inference. |

| LC-MS/MS System | Quantifies isotopic labeling patterns (mass isotopomer distributions) and metabolite concentrations. |

| Specific Enzyme Assay Kits | Provides in vitro activity data to formulate informative priors for Vmax parameters. |

| Stable Isotope-Labeled (SIL) Internal Standards | For absolute quantification of metabolites, improving the measurement precision that enters the likelihood. |

| Bayesian Software (Stan/PyMC3/INCA) | Implements MCMC sampling for posterior estimation and model comparison. |

| Graphical Model Visualization Software | Aids in specifying the joint probability structure of parameters, data, and priors. |

Visualizations

Diagram 1: Prior Elicitation & Bayesian Integration Workflow

Diagram 2: Bayesian Model Selection with Bayes Factors

Troubleshooting Guides & FAQs

MCMC Troubleshooting

Q1: My Markov Chain Monte Carlo (MCMC) sampler has a low acceptance rate and poor mixing when fitting the 13C MFA Bayesian model. What should I do? A: Low acceptance rates often indicate a poor proposal distribution scale. For a 13C Metabolic Flux Analysis (MFA) model, where parameters are fluxes and scaling factors:

- Diagnosis: Calculate the acceptance rate over the last 10,000 iterations. An optimal rate is ~0.234 for a random-walk Metropolis in high dimensions.

- Solution: Adaptively tune the covariance matrix of your multivariate normal proposal during the burn-in phase. Use pre-conditioning by setting the proposal covariance to a scaled version of the estimated parameter covariance from a preliminary run.

Q2: How do I diagnose convergence for MCMC in high-dimensional model selection problems? A: Use the Gelman-Rubin diagnostic (R̂) across multiple, randomly initialized chains.

- Protocol:

- Run at least 4 MCMC chains from over-dispersed starting points.

- For each parameter, calculate the between-chain variance (B) and within-chain variance (W).

- Compute R̂ = √[ ((n-1)/n * W + B/n) / W ], where n is the chain length and B follows the usual Gelman-Rubin convention (n times the variance of the chain means). An R̂ < 1.05 for all parameters suggests convergence.

- 13C MFA Note: Pay special attention to fluxes with high posterior correlation; they converge slowest.
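A minimal sketch of the R̂ calculation for a single parameter is given here, assuming equal-length chains and the classic (non-split) Gelman-Rubin form. The synthetic `mixed` and `stuck` chains are hypothetical stand-ins for flux traces; production tools such as ArviZ or Stan use a rank-normalized split variant that is more robust.

```python
import random


def gelman_rubin(chains):
    """Classic R-hat for one parameter; chains is a list of equal-length draws."""
    m = len(chains)
    n = len(chains[0])
    chain_means = [sum(c) / n for c in chains]
    grand_mean = sum(chain_means) / m
    # B: n times the variance of the chain means (between-chain variance).
    b = n / (m - 1) * sum((mu - grand_mean) ** 2 for mu in chain_means)
    # W: average of the within-chain sample variances.
    w = sum(
        sum((x - mu) ** 2 for x in c) / (n - 1)
        for c, mu in zip(chains, chain_means)
    ) / m
    var_plus = (n - 1) / n * w + b / n
    return (var_plus / w) ** 0.5


rng = random.Random(0)
# Four chains exploring the same distribution (well mixed).
mixed = [[rng.gauss(0.0, 1.0) for _ in range(1000)] for _ in range(4)]
# Four chains where two are stuck in a different mode.
stuck = [[rng.gauss(shift, 1.0) for _ in range(1000)] for shift in (0.0, 0.0, 3.0, 3.0)]

rhat_mixed = gelman_rubin(mixed)
rhat_stuck = gelman_rubin(stuck)
```

The well-mixed chains give a value close to 1, while the chains split across two modes give a value far above the 1.05 threshold.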

Variational Inference (VI) Troubleshooting

Q3: My Variational Inference optimization collapses, assigning zero mass to plausible models during 13C MFA model selection. Why? A: This is a symptom of the "variational collapse" or "mode-seeking" property of the reverse Kullback–Leibler (KL) divergence, KL(q‖p), that standard VI minimizes.

- Diagnosis: Check the evidence lower bound (ELBO). It may plateau prematurely while the posterior variance of model probabilities shrinks to zero.

- Solution: Consider using a more flexible variational family (e.g., full-rank Gaussians over mean-field) or switch to a divergence measure that is more mass-covering, such as the Chi-divergence or using Monte Carlo objectives.

Q4: How do I choose an appropriate variational family for constrained parameters like metabolic fluxes? A: Transform constrained parameters to an unconstrained space.

- Protocol: For a flux `v` with a lower bound `L` and upper bound `U`:

- Reparameterize: `v = L + (U - L) * sigmoid(θ)`, where `θ` is an unconstrained real number.

- Place a Gaussian variational distribution `q(θ) = N(μ, σ²)`.

- Perform the VI optimization on `μ` and `σ`. Compute expectations via the change of variables formula, ensuring fluxes stay within bounds.
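The bounded-flux reparameterization can be sketched as follows. The function names, bounds, and variational parameters are hypothetical; the sketch only shows the transform, its log-Jacobian (needed when the flux prior is evaluated in θ space), and a Monte Carlo expectation under a Gaussian q(θ). It does not perform the ELBO optimization itself.

```python
import math
import random


def sigmoid(x):
    """Numerically stable logistic function."""
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    e = math.exp(x)
    return e / (1.0 + e)


def flux_from_theta(theta, lower, upper):
    """Map unconstrained theta to a flux inside [lower, upper]."""
    return lower + (upper - lower) * sigmoid(theta)


def log_abs_jacobian(theta, lower, upper):
    """log |dv/dtheta| = log(U - L) + log s + log(1 - s), written stably."""
    a = abs(theta)
    return math.log(upper - lower) - a - 2.0 * math.log1p(math.exp(-a))


def expected_flux(mu, sigma, lower, upper, n_draws, rng):
    """Monte Carlo estimate of E_q[v] with q(theta) = N(mu, sigma^2)."""
    draws = [flux_from_theta(rng.gauss(mu, sigma), lower, upper) for _ in range(n_draws)]
    return sum(draws) / n_draws, draws


rng = random.Random(42)
# Hypothetical bounds and variational parameters for one flux.
mean_flux, flux_draws = expected_flux(mu=0.5, sigma=1.0, lower=0.0, upper=10.0,
                                      n_draws=5000, rng=rng)
```

Every draw stays inside the bounds by construction, so no rejection step or clipping is needed during optimization.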

Sequential Monte Carlo (SMC) Troubleshooting

Q5: My Sequential Monte Carlo sampler suffers from severe particle degeneracy when sequentially adding isotopic labeling data. A: Particle degeneracy is common when the incremental likelihood is too informative.

- Diagnosis: Calculate the effective sample size (ESS): `ESS = 1 / Σ (w_i²)`, where `w_i` are normalized particle weights. ESS below 30% of total particles is problematic.

- Solution: Implement an adaptive resampling strategy. Only resample when ESS < (N_particles / 2). Use systematic resampling for lower variance. For the 13C MFA context, consider tempering the likelihood of new data batches to make the transition smoother.
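The ESS diagnostic and the adaptive resampling rule can be sketched as below. The function names and the toy particle set are hypothetical; particles here are just labels, whereas in practice each would be a full flux vector.

```python
import random


def effective_sample_size(weights):
    """ESS = 1 / sum(w_i^2) for normalized weights."""
    return 1.0 / sum(w * w for w in weights)


def systematic_resample(weights, rng):
    """Return resampled particle indices using one uniform draw and a regular grid."""
    n = len(weights)
    u0 = rng.random() / n
    indices = []
    i = 0
    cumulative = weights[0]
    for k in range(n):
        u = u0 + k / n
        while u > cumulative and i < n - 1:
            i += 1
            cumulative += weights[i]
        indices.append(i)
    return indices


def maybe_resample(particles, weights, rng):
    """Resample only when ESS drops below half the particle count."""
    n = len(particles)
    if effective_sample_size(weights) < n / 2:
        idx = systematic_resample(weights, rng)
        return [particles[i] for i in idx], [1.0 / n] * n, True
    return particles, weights, False


rng = random.Random(7)
particles = ["p0", "p1", "p2", "p3"]
degenerate_weights = [0.97, 0.01, 0.01, 0.01]   # one particle dominates

ess_before = effective_sample_size(degenerate_weights)
new_particles, new_weights, resampled = maybe_resample(particles, degenerate_weights, rng)
```

After resampling, the weights are reset to uniform and the dominant particle is duplicated, which is why a subsequent MCMC move step is usually needed to restore diversity.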

Q6: SMC is computationally prohibitive for my large-scale metabolic network. Any optimization tips? A: Focus on parallelization and proposal refinement.

- Protocol:

- Parallelize Particle Propagation: Use multi-core or GPU processing to simulate all particles independently in the prediction step.

- Local MCMC Moves: After resampling, apply a short, customized MCMC kernel (e.g., a few steps of Hamiltonian Monte Carlo) to each unique particle to diversify them without changing the distribution. This is critical for high-dimensional parameter spaces like flux vectors.

Table 1: Computational Engine Performance on a Benchmark 13C MFA Model Selection Problem

| Engine | Avg. Time to Convergence (hr) | Avg. ESS per Hour (x1000) | R̂ (Gelman-Rubin) | Relative ELBO (vs Ground Truth) | Handles 50+ Models? |

|---|---|---|---|---|---|

| MCMC (NUTS) | 4.2 | 45.2 | 1.02 | N/A | Yes (slowly) |

| VI (Mean-Field) | 0.3 | N/A | N/A | -12.7 | Yes (efficiently) |

| VI (Full-Rank) | 1.1 | N/A | N/A | -3.2 | Limited (~20 models) |

| SMC (Adaptive) | 5.8 | 58.6 | 1.01 | N/A | Yes |

Benchmark: A model selection task across 10 plausible network topologies for central carbon metabolism in E. coli, using simulated isotopic labeling data. Hardware: 16-core CPU, 32GB RAM. ESS normalized by runtime. Ground truth ELBO estimated via long-run SMC.

Table 2: Common Error Indicators and Solutions

| Symptom | Likely Cause (MCMC) | Likely Cause (VI) | Likely Cause (SMC) | First Diagnostic Step |

|---|---|---|---|---|

| Parameter traces are flat | Chain is stuck in local mode | Variational distribution collapsed | All particles identical (degeneracy) | Plot trace for all chains/particles |

| High parameter autocorrelation | Proposal scale too small | Posterior correlations not captured | Resampling too frequent/rare | Calculate autocorrelation at lag 50 |

| Estimates are biologically implausible | Poor model specification or priors | Same as MCMC | Same as MCMC | Check prior predictive distribution |

Experimental Protocols

Protocol 1: Benchmarking Computational Engines for 13C MFA Model Selection

Objective: Compare the accuracy and efficiency of MCMC, VI, and SMC in selecting the correct metabolic network topology from isotopic labeling data.

Materials: Simulated or experimental 13C labeling data (e.g., GC-MS fragment intensities), candidate metabolic network models (SBML format), high-performance computing cluster.

Methodology:

- Model & Prior Specification: Encode each candidate metabolic network with steady-state constraints. Place log-normal priors on free flux parameters and a uniform prior over models.

- Likelihood Definition: Define a multivariate normal likelihood for the observed labeling patterns, with covariance estimated from technical replicates.

- Engine Configuration:

- MCMC: Run 4 chains of the No-U-Turn Sampler (NUTS) for 10,000 iterations, discarding first 4,000 as burn-in.

- VI: Optimize a full-rank Gaussian variational approximation using the Adam optimizer for 50,000 steps with a learning rate of 0.01.

- SMC: Use 2,000 particles, adaptive resampling threshold (ESS < 1000), and 5 MCMC move steps per iteration.

- Evaluation: Compute posterior model probabilities, marginal log-likelihoods (via thermodynamic integration for MCMC/SMC, ELBO for VI), and effective sample size per unit time.

Protocol 2: Diagnosing and Mitigating VI Approximation Error

Objective: Quantify and improve the fidelity of VI for posterior inference of metabolic fluxes.

Materials: A single, fixed 13C MFA network model, corresponding labeling dataset, VI software (e.g., Pyro, TensorFlow Probability).

Methodology:

- Run Reference MCMC: Obtain a high-ESS, converged MCMC sample as the "gold-standard" posterior.

- Run Variational Inference: Fit mean-field and full-rank Gaussian approximations.

- Diagnose Error:

- Compute the 1-Wasserstein distance between the VI marginal for each flux and the MCMC reference marginal.

- Calculate the maximum mean discrepancy (MMD) between the joint samples (from VI) and the MCMC samples.

- Mitigation: If error is high, implement a structured (e.g., low-rank plus diagonal) covariance or a normalizing flow as the variational family and re-run.
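The per-flux 1-Wasserstein diagnostic can be sketched for the simple case of equal-sized sample sets, where the distance reduces to the mean absolute difference between sorted draws. The function name and the synthetic "reference" and "VI" samples are hypothetical; with unequal sample sizes a quantile-based implementation (for example from SciPy) would be the more usual choice.

```python
import random


def wasserstein_1d(x, y):
    """1-Wasserstein distance between two equal-sized 1D empirical samples."""
    if len(x) != len(y):
        raise ValueError("this sketch assumes equal sample sizes")
    return sum(abs(a - b) for a, b in zip(sorted(x), sorted(y))) / len(x)


rng = random.Random(3)
# Stand-in for converged MCMC draws of one flux.
reference = [rng.gauss(2.0, 1.0) for _ in range(2000)]
# Stand-in for a mean-field VI marginal that underestimates the spread.
vi_narrow = [rng.gauss(2.0, 0.4) for _ in range(2000)]
# A copy of the reference shifted by a known amount, as a sanity check.
shifted = [v + 0.5 for v in reference]

dist_narrow = wasserstein_1d(reference, vi_narrow)
dist_shift = wasserstein_1d(reference, shifted)
dist_self = wasserstein_1d(reference, reference)
```

Because the distance is in the same units as the flux, it can be compared directly against the posterior standard deviation to judge whether the approximation error matters.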

Diagrams

Diagram 1: Workflow for Bayesian 13C MFA Model Selection

Diagram 2: SMC Sampler for Sequential Data Assimilation

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools for Bayesian 13C MFA

| Item/Category | Function in Bayesian 13C MFA Research | Example Solutions |

|---|---|---|

| Probabilistic Programming Language (PPL) | Provides abstractions for defining Bayesian models (priors, likelihood) and automated inference engines. | PyMC, Stan, TensorFlow Probability, Pyro |

| Metabolic Network Simulator | Solves the isotopomer stationary equations to generate predicted labeling patterns from a flux vector. | INCA, 13CFLUX2, Escher-FBA, COBRApy |

| High-Performance Computing (HPC) Environment | Enables parallel execution of thousands of model simulations required for likelihood calculation in MCMC/SMC. | SLURM workload manager, Docker/Singularity containers, Cloud platforms (AWS, GCP) |

| Numerical Optimizer | Crucial for VI (to maximize ELBO) and for finding MAP estimates to initialize samplers. | L-BFGS-B, Adam, Adagrad |

| Visualization & Diagnostics Library | For trace plots, autocorrelation, posterior pair plots, and model probability visualization. | ArviZ, seaborn, matplotlib, plotly |

Troubleshooting Guides & FAQs

Q1: When calculating the marginal likelihood via the harmonic mean estimator, I am getting erratic or infinite values. What is the cause and solution?

A1: The harmonic mean estimator is notoriously unstable. We recommend moving to a more robust method.

- Cause: The harmonic mean can be dominated by samples with very low likelihood, leading to high variance and potential divergence.

- Solution: Transition to using the Thermodynamic Integration (TI) or Stepping-Stone Sampling (SS) method. These are more computationally intensive but provide reliable estimates for model comparison in 13C MFA.

- Protocol: See Experimental Protocol 1 below for the SS method.

Q2: How do I decide which model to use as the reference model (M0) when calculating Bayes Factors (BF10 = p(D|M1) / p(D|M0))?

A2: The choice is contextual but should follow a systematic rationale.

- For nested models: Use the simpler model (fewer fluxes, pathways) as M0.

- For testing a new hypothesis: Use the established or "null" model (e.g., without a proposed alternate pathway) as M0.

- Interpretation: A BF10 > 10 provides strong evidence for model M1 over M0. A BF10 < 0.1 provides strong evidence for M0 over M1.

Q3: My MCMC chains have converged for parameter estimation, but my marginal likelihood estimates vary significantly between independent runs. What should I do?

A3: This indicates the estimator itself is not converged.

- Cause: Insufficient samples or a problematic estimation method.

- Solution:

- Increase the number of power bridging distributions (often denoted as K) in SS/TI from 100 to 500 or 1000.

- Run multiple independent SS/TI calculations and report the mean and standard deviation of the log marginal likelihood.

- Verify your MCMC sampling at each power step is itself converged (check trace plots and Gelman-Rubin statistics).

Q4: In the context of 13C MFA, what constitutes a "model" for comparison?

A4: A model is a complete quantitative formulation of the metabolic network.

- Common Differentiators:

  - Network Topology: Inclusion or exclusion of specific pathways (e.g., glyoxylate shunt, alternative NADH transhydrogenase).

  - Compartmentalization: Treating mitochondria and cytosol as separate vs. pooled compartments.

  - Reversibility: Modeling certain reactions as irreversible vs. reversible.

  - Measurements: Using different subsets of isotopic labeling data (e.g., GC-MS vs. LC-MS fragments).

Experimental Protocols

Protocol 1: Stepping-Stone Sampling for Marginal Likelihood Estimation

Objective: Compute the marginal likelihood p(D|M) for a given 13C MFA model M.

Materials: Converged MCMC samples for the model parameters (posterior), high-performance computing cluster.

Method:

- Define a power schedule: β₀ = 0 < β₁ < ... < β_K = 1, where β₀ corresponds to the prior and β_K to the posterior.

- For each power step t from 1 to K:

  a. Draw samples from the power posterior at the previous rung, p(θ|D, βₜ₋₁) ∝ p(D|θ)^(βₜ₋₁) · p(θ).

  b. This is typically done by running a short MCMC chain, using the final state of the chain from the preceding rung as the initial value.

  c. Calculate the mean, over these samples, of the likelihood raised to the power (βₜ − βₜ₋₁). This mean estimates the ratio of normalizing constants between rungs t and t−1.

- The product of these means across all K steps gives an estimate of the marginal likelihood. In practice, work in log space and sum the log means to avoid numerical underflow.
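The procedure can be checked on a toy problem before applying it to a flux model. The sketch below is not a 13C MFA implementation: it assumes a conjugate normal model (known noise SD, normal prior on a single mean), so every power posterior can be sampled exactly and the stepping-stone estimate can be compared with the closed-form marginal likelihood. Each ratio is estimated from draws at the lower rung βₜ₋₁. In a real flux model the exact draws would be replaced by a short MCMC run per rung. The schedule exponent 1/0.3, which concentrates rungs near the prior, and all function names are assumptions.

```python
import numpy as np

def log_mean_exp(x):
    m = np.max(x)
    return m + np.log(np.mean(np.exp(x - m)))

def stepping_stone_log_ml(y, mu0, tau0, sigma, K=50, n_draws=2000, seed=0):
    """Stepping-stone estimate of log p(y) for y_i ~ N(theta, sigma^2), theta ~ N(mu0, tau0^2)."""
    rng = np.random.default_rng(seed)
    n = y.size
    betas = (np.arange(K + 1) / K) ** (1 / 0.3)   # beta_0 = 0 (prior), beta_K = 1 (posterior)
    log_ml = 0.0
    for t in range(1, K + 1):
        b_prev = betas[t - 1]
        # Exact power posterior at beta_{t-1}; a real flux model needs MCMC here
        prec = 1 / tau0**2 + b_prev * n / sigma**2
        mean = (mu0 / tau0**2 + b_prev * y.sum() / sigma**2) / prec
        theta = rng.normal(mean, np.sqrt(1 / prec), size=n_draws)
        loglik = (-0.5 * n * np.log(2 * np.pi * sigma**2)
                  - 0.5 * ((y[None, :] - theta[:, None]) ** 2).sum(axis=1) / sigma**2)
        # log of the mean of L^(beta_t - beta_{t-1}), accumulated in log space
        log_ml += log_mean_exp((betas[t] - b_prev) * loglik)
    return log_ml

def exact_log_ml(y, mu0, tau0, sigma):
    """Closed-form log marginal likelihood for the same conjugate model."""
    n, ybar = y.size, y.mean()
    s = ((y - ybar) ** 2).sum()
    return (-0.5 * n * np.log(2 * np.pi * sigma**2)
            + 0.5 * np.log(sigma**2 / (sigma**2 + n * tau0**2))
            - 0.5 * s / sigma**2
            - 0.5 * n * (ybar - mu0) ** 2 / (sigma**2 + n * tau0**2))

rng = np.random.default_rng(42)
y = rng.normal(1.5, 1.0, size=20)   # synthetic data
print("stepping-stone:", stepping_stone_log_ml(y, 0.0, 5.0, 1.0))
print("exact:         ", exact_log_ml(y, 0.0, 5.0, 1.0))
```

Validating the estimator on a case with a known answer helps separate estimator problems from sampler problems when the same code is later pointed at a flux model.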

Protocol 2: Calculating Bayes Factors for Model Comparison

Objective: Quantitatively compare two competing metabolic models, M1 and M2.

Method:

- For each model (M1, M2), independently compute its log marginal likelihood (log ML) using Stepping-Stone Sampling (Protocol 1).

- Calculate the log Bayes Factor: log(BF₁₂) = log[ p(D|M1) ] - log[ p(D|M2) ].

- Interpret using standard thresholds (e.g., Kass & Raftery, 1995). See Table 1.
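The arithmetic of this protocol is small enough to sketch directly. The thresholds below are the natural-log cut-offs listed in Table 1 of this guide; the function names are hypothetical, and the example inputs are the M1 and M0 values from Table 2.

```python
# Upper limits on the natural-log Bayes factor, taken from Table 1 of this guide
THRESHOLDS = [
    (1.6, "Anecdotal / Not substantial"),
    (3.2, "Positive / Substantial"),
    (4.8, "Strong"),
]

def log_bayes_factor(log_ml_1, log_ml_2):
    """log BF12 = log p(D|M1) - log p(D|M2)."""
    return log_ml_1 - log_ml_2

def interpret(log_bf):
    """Evidence label for M1 over M2; negative values favor M2."""
    if log_bf < 0:
        return "Favors M2 (swap the models and re-read the table)"
    for upper, label in THRESHOLDS:
        if log_bf <= upper:
            return label
    return "Decisive / Very Strong"

log_bf = log_bayes_factor(-1248.9, -1254.3)
print(f"log BF = {log_bf:.1f}: {interpret(log_bf)}")
```

Working with the log Bayes factor throughout avoids exponentiating large differences, which can overflow for well-separated models.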

Data Presentation

Table 1: Bayes Factor Interpretation Guide

| ln(BF₁₂) (natural log) | BF₁₂ | Evidence for Model M1 over M2 |

|---|---|---|

| 0 to 1.6 | 1 to ~5 | Anecdotal / Not substantial |

| 1.6 to 3.2 | ~5 to ~25 | Positive / Substantial |

| 3.2 to 4.8 | ~25 to ~120 | Strong |

| > 4.8 | > 120 | Decisive / Very Strong |

Table 2: Example Model Comparison for Drug-Treated vs. Untreated Cells

| Model (M) | log p(D\|M) (SS) | Standard Error | Bayes Factor vs. Null (M0) | Interpretation |

|---|---|---|---|---|

| M0 (Null: Core Network) | -1254.3 | ± 0.8 | 1 (Reference) | - |

| M1 (With Alternative Pathway A) | -1248.9 | ± 1.1 | ~ 220 (e^5.4) | Decisive / very strong evidence for Alternative Pathway A |

| M2 (With Alternative Pathway B) | -1252.1 | ± 1.4 | ~ 9 (e^2.2) | Positive / substantial evidence for Alternative Pathway B |
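The standard errors in Table 2 matter for the interpretation. A minimal sketch, assuming the two log marginal likelihood estimates are independent and their errors roughly normal (both are assumptions, as is the function name), propagates them into an approximate interval for the log Bayes factor:

```python
import math

def log_bf_with_error(log_ml_1, se_1, log_ml_0, se_0, z=1.96):
    """Log Bayes factor, its standard error, and an approximate 95% interval."""
    log_bf = log_ml_1 - log_ml_0
    se = math.hypot(se_1, se_0)   # sqrt(se_1^2 + se_0^2) for independent estimates
    return log_bf, se, (log_bf - z * se, log_bf + z * se)

# Values from Table 2: (model, log marginal likelihood, standard error)
for name, log_ml, se_model in [("M1", -1248.9, 1.1), ("M2", -1252.1, 1.4)]:
    log_bf, se_bf, (lo, hi) = log_bf_with_error(log_ml, se_model, -1254.3, 0.8)
    print(f"{name} vs M0: log BF = {log_bf:.1f} +/- {se_bf:.2f}, "
          f"approx. 95% interval [{lo:.1f}, {hi:.1f}]")
```

With the Table 2 values, the interval for M1 stays above zero, while the interval for M2 includes zero, so the apparent support for Pathway B is within estimator noise until the standard errors are reduced.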

Visualization

Title: Bayesian Model Selection Workflow for 13C MFA

Title: Relationship Between Models, Data, and Bayes Factors

The Scientist's Toolkit

Table 3: Research Reagent Solutions for Bayesian 13C MFA Model Selection

| Item / Solution | Function / Purpose in Experiment |

|---|---|

| [13C₆]-Glucose Tracer | The primary isotopic substrate. Different labeling patterns (e.g., [1,2-13C] vs. [U-13C]) can help discriminate between fluxes. |

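| 13C MFA Software (e.g., INCA, Metran) | Builds the isotopomer network model and fits fluxes by least-squares regression, giving point estimates and confidence intervals. A Bayesian sampler applied to the same model generates posterior distributions of metabolic fluxes. |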

| Stepping-Stone Sampling Script (Python/R) | Custom or library-based code (e.g., pymc, bridgesampling) to compute the marginal likelihood from MCMC outputs. |

| High-Performance Computing (HPC) Resources | Essential for running multiple, long MCMC and SS chains for several candidate models in parallel. |

| Reference Metabolic Network Database (e.g., MetaCyc, BiGG) | Provides prior knowledge for building biologically plausible candidate model topologies. |

| Isotopomer Spectral Analysis (ISA) Tools | Used to pre-process and quality-check MS data before integration into the 13C MFA model. |

Troubleshooting Guides & FAQs

Q1: INCA fails to converge or returns "Cannot evaluate model" errors during 13C MFA model selection.

A: This is often due to poor initial flux estimates or an ill-posed network. First, simplify your model: disable all optional fluxes and reactions, then re-enable them stepwise. Ensure your experimental MS data (input labeling patterns) is correctly formatted and normalized. Check for carbon atom transitions that are impossible in your defined network. Use INCA's checkModel and checkData functions before optimization.

Q2: COBRA toolbox simulations produce infeasible flux solutions when integrated with Bayesian MFA results.

A: Infeasibility usually stems from conflicting constraints. Verify that the flux distributions from your INCA/MCMC analysis are being applied as constraints correctly. Ensure the lower bound (lb) and upper bound (ub) for each reaction are physically meaningful (e.g., not setting an irreversible flux with a negative bound). Run changeObjective to ensure your objective function is set correctly. Use optimizeCbModel with the 'max' or 'min' objective to test solution space edges.

Q3: PyMC/Stan sampling is extremely slow for high-dimensional 13C MFA models.

A: High dimensionality is a common challenge. In PyMC, use pm.NUTS instead of pm.Metropolis for more efficient sampling. For both PyMC and Stan, reparameterize your model using non-centered parameterizations for hierarchical priors. Employ multi-core sampling (chains=4, cores=4 in PyMC's pm.sample; chains = 4, parallel_chains = 4 in CmdStanR's $sample()). For Stan, pre-compute constant matrix operations in the transformed data block. Consider using variational inference (ADVI) for initial exploratory runs.

Q4: Custom scripts for data preprocessing yield inconsistent labeling matrix inputs for INCA.

A: Inconsistency often arises from incorrect handling of natural isotope abundances or data normalization. Standardize your pipeline: 1) Apply natural isotope correction using an established library (e.g., IsoCorrectoR in R). 2) For each metabolite fragment, normalize the corrected isotopologue intensities by their sum to obtain the mass isotopomer distribution (MID). 3) Implement a sanity check: the normalized MID of every fragment should sum to 1 (± a small tolerance, e.g., 1e-5). Create a validation function in your script to flag deviations.
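Steps 2 and 3 of this pipeline can be sketched as two small functions. The function names and the fragment labels in the example are hypothetical, and the input is assumed to be already corrected for natural isotope abundance.

```python
import numpy as np

def normalize_mid(intensities):
    """Scale one fragment's corrected isotopologue intensities (M+0, M+1, ...) to sum to 1."""
    x = np.asarray(intensities, dtype=float)
    if np.any(x < 0):
        raise ValueError("negative intensity; check the isotope correction step")
    total = x.sum()
    if total <= 0:
        raise ValueError("fragment has no signal")
    return x / total

def validate_mids(mids, tol=1e-5):
    """Return the names of fragments whose MID does not sum to 1 within tol."""
    return [name for name, mid in mids.items()
            if abs(float(np.sum(mid)) - 1.0) > tol]

# Hypothetical fragments with made-up intensities
mids = {
    "Ala_260": normalize_mid([8200.0, 1300.0, 400.0, 100.0]),
    "Ser_390": np.array([0.70, 0.20, 0.05]),   # deliberately not normalized
}
print("fragments failing the sum check:", validate_mids(mids))
```

Running the validator as the last step before export catches fragments that bypassed normalization, which is a common source of inconsistent inputs.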

Q5: How do I choose between PyMC and Stan for Bayesian 13C MFA model selection?

A: The choice balances flexibility and computational efficiency. PyMC (with PyTensor) offers more dynamic model building and easier debugging, which is ideal for prototyping novel model structures or custom likelihoods. Stan's static compilation and advanced HMC algorithms often provide faster sampling for large, fixed-model structures. For standard 13C MFA with well-established networks, Stan may be preferable. For experimental model selection with variable topology, PyMC may be more adaptable.

Table 1: Comparison of Bayesian Sampling Performance for a Representative 13C MFA Network

| Software/Tool | Model Dimensions (Fluxes, Measured MIDs) | Avg. Sampling Time (seconds, 4 chains) | Effective Sample Size per Second (min, median flux) | R̂ Convergence Diagnostic (Target ≤1.01) |

|---|---|---|---|---|

| INCA (MCMC) | 45, 120 | 1,850 | 0.15 | 1.002 |

| Stan (NUTS) | 45, 120 | 920 | 0.42 | 1.001 |

| PyMC (NUTS) | 45, 120 | 1,310 | 0.28 | 1.003 |

| Custom Script (Metropolis-Hastings) | 45, 120 | 5,600 | 0.03 | 1.050 |

MIDs: Mass Isotopomer Distributions. Test hardware: 8-core CPU, 32GB RAM.

Table 2: Common Error Codes and Resolutions in INCA

| Error Code / Message | Likely Cause | Recommended Action |

|---|---|---|

| "Input matrix dimensions mismatch" | Data matrix rows ≠ number of fragments in model. | Run validateDataStructure; ensure idata and imodel variables align. |

| "Non-positive definite Hessian" | Poorly identifiable model or insufficient data. | Fix more fluxes to literature values; simplify network; check data quality. |

| "Optimization failed to start" | Initial flux values violate constraints. | Use generateInitialVals with 'random' option; relax bounds temporarily. |

Experimental Protocols

Protocol 1: Integrated INCA-Stan Workflow for Robust Flux Estimation & Model Selection

- Data Preprocessing: Use custom scripts (Python/R) to correct raw MS data for natural isotopes, normalize MIDs, and format into INCA's required .mat file.

- Preliminary INCA Run: Load network (net) and data (idata). Perform an initial flux estimation using INCA's fitFlux to obtain a point estimate and covariance matrix.

- Extract Priors: Use the flux estimate and covariance from Step 2 to formulate informative multivariate normal priors for the key free fluxes in your Bayesian model.

- Stan Model Specification: Code the complete stoichiometric network and 13C labeling equations in Stan. Use the extracted priors. Implement the likelihood comparing simulated MIDs to experimental MIDs.

- Sampling & Diagnostics: Run Stan sampling (4 chains, 2000 iterations warm-up, 2000 sampling). Check divergent transitions and R̂. If diagnostics fail, re-tune priors or model parameterization.

- Model Selection: For competing network hypotheses, compute and compare Widely Applicable Information Criterion (WAIC) or leave-one-out cross-validation (LOO-CV) scores using the loo package in R or ArviZ in Python.
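For the model selection step, it can help to see what WAIC computes from the sampler output. The sketch below shows only the arithmetic, assuming a matrix of pointwise log-likelihoods with one row per posterior draw and one column per measured MID value. In practice the loo package or ArviZ should be preferred, since they also report standard errors and diagnostics. The function name is hypothetical.

```python
import numpy as np

def waic(log_lik):
    """WAIC on the deviance scale from an (n_draws, n_observations) log-likelihood matrix.

    Returns (waic, p_waic), where p_waic is the effective number of parameters.
    """
    log_lik = np.asarray(log_lik, dtype=float)
    m = log_lik.max(axis=0)
    # Log pointwise predictive density: log of the posterior mean likelihood per observation
    lppd_i = m + np.log(np.mean(np.exp(log_lik - m), axis=0))
    # Complexity penalty: posterior variance of the log-likelihood per observation
    p_waic_i = log_lik.var(axis=0, ddof=1)
    elpd = lppd_i.sum() - p_waic_i.sum()
    return -2.0 * elpd, p_waic_i.sum()

# Synthetic example: 1000 draws, 120 observations (illustrative numbers only)
rng = np.random.default_rng(3)
log_lik = -1.0 + 0.1 * rng.normal(size=(1000, 120))
value, p_eff = waic(log_lik)
print(f"WAIC = {value:.1f}, effective parameters = {p_eff:.2f}")
```

Lower WAIC indicates better expected predictive performance. Differences between models should be judged against their standard error, which the dedicated packages provide.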

Protocol 2: Using COBRA to Validate Thermodynamic Feasibility of Bayesian Flux Posteriors

- Import Posterior: Load the flux distribution samples (e.g., .csv output from PyMC/Stan) into MATLAB/Python.

- Map to COBRA Model: Create a mapping between your MFA reaction IDs and the COBRA model reaction IDs. Apply the median (or per-sample) flux values as constraints with a ±5% window (model.lb = flux * 0.95; model.ub = flux * 1.05 for positive fluxes; swap the two factors for negative fluxes so that lb ≤ ub).

- Feasibility Analysis: For each posterior sample (or a subset), run optimizeCbModel to check if the constrained model can achieve a non-zero objective (e.g., growth). A failure indicates thermodynamic/stoichiometric infeasibility.

- Generate Alternative Predictions: Use Flux Variability Analysis (fluxVariability) on the constrained model to identify ranges of other fluxes that are consistent with both the MFA data and genome-scale physiology.
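The bound-setting step deserves care when fluxes are negative (reverse direction) or zero, because multiplying by 0.95 and 1.05 then gives a lower bound above the upper bound or a zero-width window. A minimal sketch of a sign-safe alternative follows. The function name, the absolute tolerance, and the example flux values are assumptions, and the resulting arrays would still need to be written to the COBRA model through your reaction-ID mapping.

```python
import numpy as np

def flux_bounds(flux, rel_tol=0.05, abs_tol=1e-6):
    """Symmetric window around each flux that is valid for positive, negative, and zero values."""
    flux = np.asarray(flux, dtype=float)
    width = rel_tol * np.abs(flux) + abs_tol   # abs_tol keeps a nonzero window at flux = 0
    return flux - width, flux + width

median_flux = np.array([10.0, -4.0, 0.0])      # hypothetical posterior medians
lb, ub = flux_bounds(median_flux)
print("lb:", lb)
print("ub:", ub)

# The naive rule inverts the bounds for the negative flux
naive_lb, naive_ub = median_flux * 0.95, median_flux * 1.05
print("naive rule gives lb > ub for:", np.where(naive_lb > naive_ub)[0])
```

Inverted bounds are one of the most common reasons a constrained model is reported as infeasible, so checking lb ≤ ub before calling the solver saves debugging time.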

Visualizations

Title: Bayesian 13C MFA Software Integration Workflow

Title: Common Tool Issues and Resolution Pathways

The Scientist's Toolkit: Research Reagent & Software Solutions

| Item | Category | Function in 13C MFA Model Selection |

|---|---|---|

| U-13C Glucose | Tracer | Uniformly labeled carbon substrate; essential for probing central carbon metabolism fluxes in cell cultures. |

| Quenching Solution (60% Methanol, -40°C) | Reagent | Rapidly halts metabolism to preserve intracellular metabolite labeling states for accurate MIDs. |

| Derivatization Agent (e.g., MTBSTFA) | Reagent | Chemically modifies polar metabolites for robust detection via Gas Chromatography-Mass Spectrometry (GC-MS). |

| INCA Software Suite | Software | A widely used MATLAB-based platform for building metabolic networks and performing 13C Metabolic Flux Analysis. |

| MATLAB with COBRA Toolbox | Software | Environment for constraint-based modeling; validates MFA fluxes in a genome-scale metabolic model (GSMM) context. |

| PyMC/Stan Libraries | Software | Probabilistic programming frameworks for implementing custom Bayesian models, enabling flexible model selection and uncertainty quantification. |

| Custom Python/R Scripts | Software | Bridge data preprocessing, tool interoperability, and automate analysis pipelines, integrating INCA, COBRA, and Bayesian samplers. |

| High-Resolution GC-MS System | Instrument | Measures mass isotopomer distributions (MIDs) of intracellular metabolites with the precision required for flux determination. |

Technical Support Center: Troubleshooting 13C MFA in Cancer Metabolism Studies

FAQs & Troubleshooting Guides

Q1: Our Bayesian model selection for 13C MFA consistently favors an overly complex model with too many branch points. How can we penalize complexity more effectively?

A1: This indicates a potential issue with your prior specification or evidence calculation. First, verify you are using an appropriate information criterion like the Widely Applicable Information Criterion (WAIC) or conducting Pareto-smoothed importance sampling leave-one-out cross-validation (PSIS-LOO) for model comparison. Ensure your priors for branch point fluxes are regularizing properly; consider using hierarchical priors if you have replicate data. Check for label input errors in your tracer experiment ([1-13C]glucose vs. [U-13C]glucose), which can artificially create demand for unnecessary pathways.

Q2: When modeling glycolytic branching into serine synthesis or lactate production, our MCMC chains do not converge. What are the common causes?

A2: Non-convergence (assessed by R-hat > 1.05) often stems from poorly identifiable parameters. For the PHGDH (serine synthesis) branch point versus LDHA (lactate production):

- Tracer Design: Confirm you are using a tracer that differentiates these pathways, such as [1,2-13C]glucose. Update your atom transition network accordingly.

- Measurement Insufficiency: You may need additional extracellular flux measurements (e.g., serine secretion, lactate excretion rates) to constrain the model. Incorporate these as hard constraints.