Bayesian Optimization for Multistep Pathway Optimization: A Complete Guide for Drug Development Scientists

This article provides a comprehensive guide to Bayesian Optimization (BO) for optimizing complex, multistep pathways in drug development.


Abstract

This article provides a comprehensive guide to Bayesian Optimization (BO) for optimizing complex, multistep pathways in drug development. It explores the foundational principles of BO and its superiority over traditional Design of Experiments (DOE) for sequential, high-cost experimentation. We detail practical methodologies for applying BO to biochemical pathway optimization, including parameter selection and acquisition function strategies. The guide addresses common implementation challenges and optimization techniques. Finally, we present validation frameworks and comparative analyses with alternative machine learning methods, illustrating BO's efficacy in accelerating therapeutic discovery and process development for researchers and pharmaceutical professionals.

What is Bayesian Optimization? Core Principles for Multistep Biochemical Pathways

The Challenge of Multistep Pathway Optimization in Drug Development

Within the broader thesis on Bayesian optimization for multistep pathway research, this application note addresses the critical bottleneck in drug development: the simultaneous optimization of multi-variable, interdependent synthetic and biological pathways. Traditional one-factor-at-a-time (OFAT) approaches are inefficient for these high-dimensional, non-linear systems. Bayesian optimization (BO) emerges as a principled, data-efficient framework to navigate complex design spaces, balancing exploration of uncertain regions with exploitation of known high-performance areas to accelerate the identification of optimal pathway conditions.

Key Challenges and Quantitative Data

The optimization of multistep pathways, whether in chemical synthesis (e.g., API manufacturing) or cellular signaling manipulation (e.g., CAR-T cell differentiation), presents interconnected challenges. Key performance indicators (KPIs) often compete, requiring a trade-off analysis.

Table 1: Competing KPIs in Representative Multistep Pathways

| Pathway Type | Primary KPI (Maximize) | Conflicting KPI (Minimize/Optimize) | Typical Benchmark Values (Current) | BO Optimization Target |

|---|---|---|---|---|

| Chemical Synthesis | Overall Yield (%) | Total Impurity (%) | Yield: 65-75%; Impurity: 2-5% | Yield >85%; Impurity <1.5% |

| Biocatalytic Cascade | Total Titer (g/L) | Total Enzyme Cost ($/kg) | Titer: 10-50 g/L; Cost: High | Titer >100 g/L; Cost reduction >50% |

| Cell Therapy Manufacturing | Cell Potency (Cytolytic Units) | Exhaustion Marker Expression (%) | Potency: Highly variable; Exhaustion: 20-40% | Maximize Potency; Exhaustion <15% |

| Signal Transduction Modulation | Target Pathway Activity (Fold Change) | Off-target Pathway Activity (Fold Change) | On-target: 5-10x; Off-target: 2-3x | On-target >15x; Off-target <1.5x |

Table 2: Dimensionality of the Optimization Problem

| Pathway Example | Typical Tunable Variables | Variable Interdependence | Design Space Size (Classical DoE) | BO Estimated Iterations to Optima* |

|---|---|---|---|---|

| 5-Step Catalytic Synthesis | 8-12 (Temp, Cat. load, time, etc.) | High (e.g., step yield affects downstream) | 2^12 = 4096 experiments | 50-100 |

| 3-Stage T-cell Differentiation | 6-8 (Cytokine conc., timing, media) | Very High (sequential fate decisions) | Fractional designs still large | 30-80 |

*BO iterations are problem-dependent but typically represent a 10-50x reduction vs. grid search.

Application Notes: A Bayesian Optimization Framework

Core Workflow: 1) Define the objective function (e.g., composite score of yield, purity, cost). 2) Initialize with a space-filling design (e.g., Latin Hypercube) of 5-10 experiments. 3) Iterate: a) Train a probabilistic surrogate model (Gaussian Process) on all collected data. b) Use an acquisition function (Expected Improvement) to select the next most informative experiment. c) Run experiment, collect data, and update the model. 4) Converge after a set number of iterations or when improvement plateaus.

Protocol 1: Setting Up a BO Experiment for a 3-Step Biocatalytic Cascade

Objective: Maximize final product titer while minimizing total process time.

Materials: See "Scientist's Toolkit" below.

Pre-Experimental Design:

- Define Variables and Bounds: Identify critical variables for each step (e.g., pHA, [E1]A, TempB, [E2]B, Time_C). Set feasible min/max bounds for each.

- Formulate Objective Function: Create a scalar score. Example: Score = (Titer/100) - (Total_Time/300). Normalize based on known benchmarks.

- Initial Design: Use a Latin Hypercube Sampling (LHS) function to generate 8 initial condition sets across the bounded space. Ensure no two variables are correlated in the initial set.
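The pre-experimental design steps above can be sketched in a few lines of Python. This is a minimal illustration, not the protocol's actual tooling: the bounds in `BOUNDS` are hypothetical placeholders for pH_A, [E1]_A, Temp_B, [E2]_B and Time_C, and `latin_hypercube` is a simple hand-written sampler rather than a library routine.

```python
import numpy as np

def composite_score(titer, total_time, titer_ref=100.0, time_ref=300.0):
    """Score = (Titer/100) - (Total_Time/300); the references are the example's benchmarks."""
    return titer / titer_ref - total_time / time_ref

def latin_hypercube(n_samples, bounds, seed=0):
    """One sample per stratum in every dimension, scaled to the given (min, max) bounds."""
    rng = np.random.default_rng(seed)
    bounds = np.asarray(bounds, dtype=float)
    n_dims = bounds.shape[0]
    # Jitter one point inside each of n_samples equal-width strata of [0, 1).
    unit = (np.arange(n_samples)[:, None] + rng.random((n_samples, n_dims))) / n_samples
    # Shuffle each column independently so strata are paired at random across variables.
    for j in range(n_dims):
        unit[:, j] = rng.permutation(unit[:, j])
    return bounds[:, 0] + unit * (bounds[:, 1] - bounds[:, 0])

# Hypothetical bounds (assumed for illustration only): pH_A, [E1]_A, Temp_B, [E2]_B, Time_C.
BOUNDS = [(6.0, 8.0), (0.1, 5.0), (25.0, 40.0), (0.1, 5.0), (30.0, 180.0)]
design = latin_hypercube(8, BOUNDS)

# Largest absolute pairwise correlation between variables in the initial set.
max_abs_corr = np.abs(np.corrcoef(design, rowvar=False) - np.eye(len(BOUNDS))).max()
```

Independent column shuffles do not guarantee an uncorrelated design, so inspecting `max_abs_corr` and redrawing with a different seed if it is large is one simple way to honor the "no two variables are correlated" requirement.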

Procedure:

- Initial Experimentation: Execute the 8 predefined experiments in randomized order. Record titer (HPLC analysis) and total time for each.

- Bayesian Optimization Loop:

  a. Model Training: Input all historical data (variables X, objective scores Y) into a Gaussian Process regression model. Use a Matern kernel.

  b. Next Point Selection: Calculate the Expected Improvement (EI) across a fine grid of the entire variable space. Select the variable set with the maximum EI value.

  c. Experimental Execution: Perform the cascade reaction using the selected conditions.

  d. Data Incorporation: Append the new result to the historical dataset.

  e. Iteration: Repeat steps a-d for 40-60 iterations or until the maximum EI value falls below 0.01 (indicating minimal expected improvement).

Analysis: Plot the cumulative maximum objective score vs. iteration number to visualize convergence. Analyze the final surrogate model to identify global optima and variable sensitivities.

Protocol 2: Optimizing a 2-Step Signaling Pathway for Target Gene Expression

Objective: Maximize reporter gene expression from an inducible promoter system while minimizing basal leakage.

Challenge: Optimize concentrations of two sequential inducers (Inducer1, Inducer2) and the timing between additions (Δt).

Procedure:

- Cell Seeding: Seed reporter cell line in 96-well plates.

- BO Setup: Define variables: [Inducer1] (0-100 nM), [Inducer2] (0-500 nM), Δt (0-24 hrs). Objective: Score = (Induced_Luminescence - Basal_Luminescence) / Basal_Luminescence.

- Initialization: Perform 6 LHS initial experiments.

- Automated Loop: Use an integrated liquid handler and plate reader. a. Add Inducer1 at T=0. b. Add Inducer2 after the specified Δt. c. Measure luminescence at 24h post-final induction. d. Automate data transfer to BO software (e.g., Ax, BoTorch). e. Let the BO algorithm select the next condition set for the subsequent plate.

- Validation: After BO convergence (e.g., 50 cycles), run the top 3 predicted conditions in biological triplicate for validation.

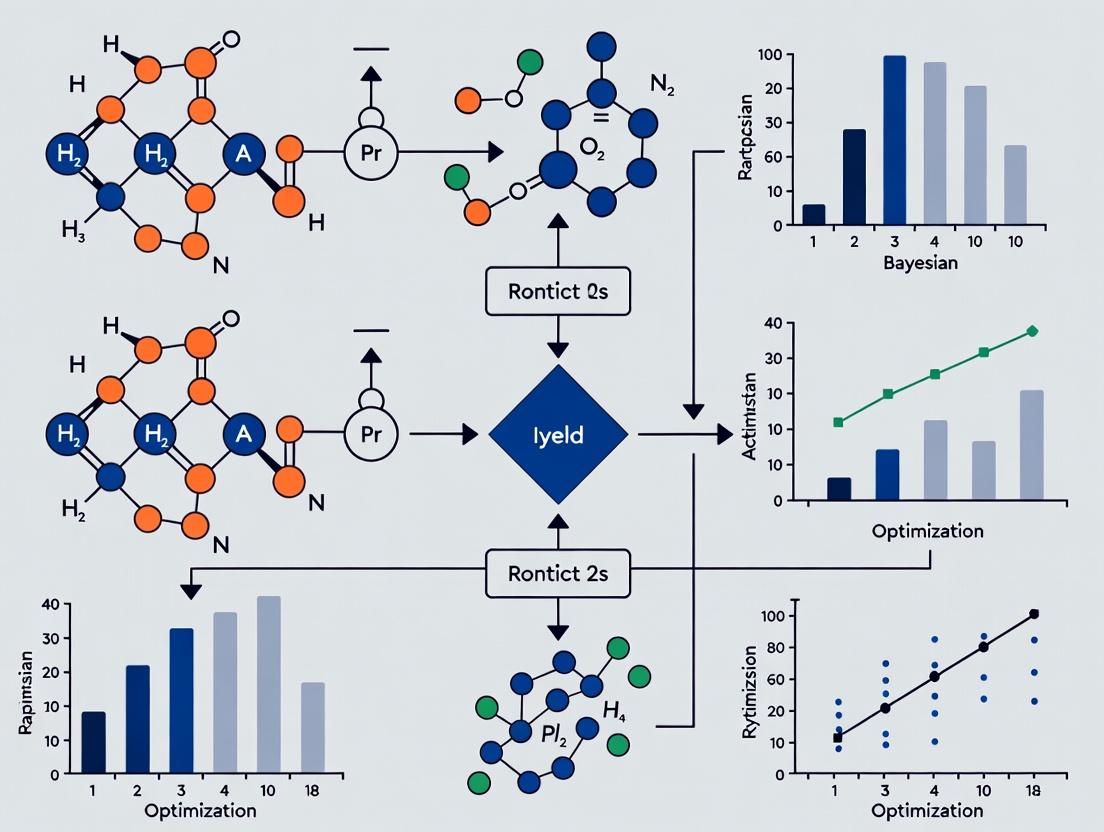

Visualization of Workflows and Pathways

BO Iterative Loop for Pathway Optimization

A Generic 2-Step Signaling Pathway for Optimization

The Scientist's Toolkit

Table 3: Key Research Reagent Solutions for Multistep Optimization

| Item/Reagent | Function in Optimization | Example Vendor/Product |

|---|---|---|

| Design of Experiments (DoE) Software | Generates initial space-filling designs (LHS) and analyzes complex interactions. | JMP, Modde, Design-Expert |

| Bayesian Optimization Platform | Core engine for building surrogate models and calculating acquisition functions. | Ax (Meta), BoTorch (PyTorch-based), SigOpt |

| High-Throughput Automated Reactors | Enables precise, parallel execution of chemical/biochemical step experiments. | AM Technology, HEL, Unchained Labs |

| Robotic Liquid Handling Systems | Automates cell culture, inducer addition, and sampling for biological pathways. | Hamilton, Tecan, Opentrons |

| Online Process Analytical Technology (PAT) | Provides real-time data (e.g., HPLC, Raman) for immediate feedback into BO loop. | Thermo Fisher, Metrohm, Sartorius |

| Gaussian Process Library | Implements core surrogate modeling algorithms. | GPy (Python), scikit-learn, Stan |

| Cellular Reporter Assays | Quantifies signaling pathway output (luminescence/fluorescence) as objective function. | Promega Luciferase, Thermo Fisher GFP/B-gal |

| Precision Growth Media & Inducers | Defined, variable components for cell-based pathway optimization. | Gibco, Sigma-Aldrich, Takara |

| Process Modeling & Simulation Software | Digital twin for in-silico testing prior to physical experiments. | Aspen Plus, BioUML, COPASI |

Within the broader thesis on Bayesian Optimization for Multistep Pathway Optimization Research, this document serves as foundational Application Notes. The optimization of multistep pathways—such as synthetic biology routes for novel drug precursors or multi-reaction chemical synthesis—is often hampered by high experimental cost, noisy measurements, and complex, non-linear parameter interactions. Bayesian Optimization (BO) provides a principled, data-efficient framework for globally optimizing such expensive-to-evaluate black-box functions. This protocol details the core components: the surrogate model for probabilistic approximation, the acquisition function for decision-making, and the closed-loop sequential experiment design.

Core Components: Theory & Application

The Surrogate Model: Gaussian Process (GP) Regression

The surrogate model places a prior over the objective function (e.g., pathway yield or titer) and updates this prior with observed data to form a posterior distribution. The GP is the most common choice due to its flexibility and inherent uncertainty quantification.

Key Protocol: Configuring a Gaussian Process for Pathway Data

Define the Prior Mean Function, m(x):

- Typical Setting: A constant mean (e.g., the average of observed yields).

- Advanced Protocol: Use a simple mechanistic model of the pathway as the mean function to incorporate domain knowledge.

Select the Covariance Kernel Function, k(x, x'):

- Standard Choice: The Matérn 5/2 kernel. It is less smooth than the squared-exponential kernel, better accommodating the rugged response surfaces common in biochemical systems.

- Kernel Hyperparameters: The lengthscale l (governing smoothness) and signal variance σ² (governing output scale). These are typically optimized by maximizing the log marginal likelihood of the observed data.

Incorporate Observation Noise:

- Explicitly model measurement noise by adding a term σₙ²I to the covariance matrix, where σₙ² is the noise variance.

Table 1: Common Kernel Functions for Biochemical Pathway Optimization

| Kernel Name | Mathematical Form (Isotropic) | Key Property | Best For |
|---|---|---|---|
| Matérn 5/2 | k(r) = σ²(1 + √5r/l + 5r²/(3l²))exp(-√5r/l) | Moderately smooth | Most pathway problems (default) |
| Squared Exponential | k(r) = σ² exp(-r²/(2l²)) | Infinitely differentiable | Very smooth, well-behaved systems |
| Rational Quadratic | k(r) = σ² (1 + r²/(2αl²))^(-α) | Multi-scale lengthscales | Data with varying smoothness |
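The three isotropic kernels in the table translate directly into NumPy. The sketch below is illustrative only; the function names and default hyperparameter values are assumptions, and `r` is the Euclidean distance between two parameter vectors.

```python
import numpy as np

def pairwise_distance(A, B):
    """Euclidean distances r between every row of A and every row of B."""
    A, B = np.atleast_2d(A), np.atleast_2d(B)
    sq = ((A[:, None, :] - B[None, :, :]) ** 2).sum(axis=-1)
    return np.sqrt(sq)

def matern52(r, lengthscale=1.0, variance=1.0):
    """k(r) = σ²(1 + √5 r/l + 5r²/(3l²)) exp(-√5 r/l)."""
    s = np.sqrt(5.0) * r / lengthscale
    return variance * (1.0 + s + s**2 / 3.0) * np.exp(-s)

def squared_exponential(r, lengthscale=1.0, variance=1.0):
    """k(r) = σ² exp(-r²/(2l²))."""
    return variance * np.exp(-(r**2) / (2.0 * lengthscale**2))

def rational_quadratic(r, lengthscale=1.0, variance=1.0, alpha=1.0):
    """k(r) = σ²(1 + r²/(2αl²))^(-α)."""
    return variance * (1.0 + r**2 / (2.0 * alpha * lengthscale**2)) ** (-alpha)

# Example: covariance matrix for three hypothetical 2-D conditions.
X = np.array([[0.0, 0.0], [0.5, 0.0], [1.0, 1.0]])
K = matern52(pairwise_distance(X, X), lengthscale=0.5)
```

As α grows, the rational quadratic kernel approaches the squared exponential with the same lengthscale, which is a convenient sanity check when implementing both.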

Diagram: Bayesian Update in Gaussian Process Surrogate Modeling

The Acquisition Function

The acquisition function α(x) uses the surrogate posterior to quantify the utility of evaluating the objective at a new point x. It balances exploration (probing uncertain regions) and exploitation (probing regions with high predicted mean).

Key Protocol: Selecting and Optimizing the Acquisition Function

Choice of Function:

- Expected Improvement (EI): The most widely used. Proposes the next experiment where the expected improvement over the current best observation is highest.

- Upper Confidence Bound (UCB): Uses a confidence parameter β to tune exploration-exploitation: α_UCB(x) = μ(x) + β σ(x).

- Probability of Improvement (PI): Simpler than EI but more exploitative.

Optimization:

- The next experiment is proposed at x_next = argmax α(x).

- Since α(x) is cheap to evaluate, it can be optimized using gradient-based methods such as L-BFGS-B, typically run from multiple random starting points to avoid local maxima.

Table 2: Comparison of Common Acquisition Functions

| Function | Mathematical Form | Parameter(s) | Behavior |
|---|---|---|---|
| Expected Improvement (EI) | E[max(0, f(x) - f(x⁺))] | None | Balanced, robust default |
| Upper Confidence Bound (UCB) | μ(x) + β σ(x) | β (≥0) | Explicit control via β |
| Probability of Improvement (PI) | P(f(x) ≥ f(x⁺) + ξ) | ξ (≥0) | More exploitative; can get stuck |
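For a Gaussian posterior with mean μ(x) and standard deviation σ(x), all three acquisition functions in the table have closed forms. The sketch below writes them for maximization; the function names are assumptions, and the optional `xi` argument in EI is an added convenience (the table's EI corresponds to `xi=0`).

```python
import math
import numpy as np

_erf = np.vectorize(math.erf, otypes=[float])

def _norm_pdf(z):
    return np.exp(-0.5 * z**2) / math.sqrt(2.0 * math.pi)

def _norm_cdf(z):
    return 0.5 * (1.0 + _erf(z / math.sqrt(2.0)))

def expected_improvement(mu, sigma, f_best, xi=0.0):
    """E[max(0, f(x) - f_best - xi)] under f(x) ~ N(mu, sigma²)."""
    mu, sigma = np.asarray(mu, float), np.asarray(sigma, float)
    gain = mu - f_best - xi
    safe = np.maximum(sigma, 1e-12)          # avoid division by zero
    z = gain / safe
    ei = gain * _norm_cdf(z) + safe * _norm_pdf(z)
    # With zero uncertainty the improvement is deterministic.
    return np.where(sigma > 0, ei, np.maximum(gain, 0.0))

def upper_confidence_bound(mu, sigma, beta=2.0):
    """mu + beta * sigma; larger beta favors exploration."""
    return np.asarray(mu, float) + beta * np.asarray(sigma, float)

def probability_of_improvement(mu, sigma, f_best, xi=0.0):
    """P(f(x) >= f_best + xi) under f(x) ~ N(mu, sigma²)."""
    mu, sigma = np.asarray(mu, float), np.asarray(sigma, float)
    gain = mu - f_best - xi
    z = gain / np.maximum(sigma, 1e-12)
    return np.where(sigma > 0, _norm_cdf(z), (gain > 0).astype(float))
```

Evaluating these on a set of candidate points and taking the argmax gives the next proposed experiment; a candidate whose mean equals the current best still has positive EI as long as σ(x) > 0, which is what drives exploration.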

The Sequential Loop

The BO algorithm iterates the following closed-loop protocol until a resource budget (experimental iterations, time, or cost) is exhausted.

Experimental Protocol: The Bayesian Optimization Cycle

Initialization (Design of Experiments):

- Perform n initial experiments (e.g., 5-10) using a space-filling design (Latin Hypercube Sampling) across the parameter space (e.g., enzyme concentrations, pH, temperature).

Sequential Loop (for iteration i = n+1, ..., N):

a. Model Training: Fit/update the GP surrogate model to all observed data D_{1:i-1} = {(x_j, y_j)}.

b. Acquisition Maximization: Find x_i = argmax α(x) using the current surrogate.

c. Experiment Execution: Conduct the wet-lab experiment (e.g., run the multistep pathway with parameters x_i) to obtain y_i.

d. Data Augmentation: Append the new observation to the dataset: D_{1:i} = D_{1:i-1} ∪ {(x_i, y_i)}.
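The cycle above can be sketched end to end on a one-dimensional toy problem. Everything specific here is an assumption for illustration: `run_experiment` is a synthetic stand-in for the wet-lab measurement, the GP uses fixed hyperparameters instead of fitting them by marginal likelihood, and the acquisition function is maximized over a candidate grid rather than with a gradient-based optimizer.

```python
import math
import numpy as np

def matern52_kernel(a, b, lengthscale=0.2, variance=1.0):
    """Matérn 5/2 covariance between two 1-D arrays of inputs."""
    s = math.sqrt(5.0) * np.abs(a[:, None] - b[None, :]) / lengthscale
    return variance * (1.0 + s + s**2 / 3.0) * np.exp(-s)

def gp_posterior(X, y, X_new, noise_var=1e-4):
    """Zero-mean GP posterior mean and standard deviation at X_new."""
    K = matern52_kernel(X, X) + noise_var * np.eye(len(X))
    K_s = matern52_kernel(X_new, X)
    mean = K_s @ np.linalg.solve(K, y)
    var = 1.0 - np.sum(K_s * np.linalg.solve(K, K_s.T).T, axis=1)  # prior variance is 1.0
    return mean, np.sqrt(np.clip(var, 0.0, None))

def expected_improvement(mu, sigma, f_best):
    safe = np.maximum(sigma, 1e-12)
    z = (mu - f_best) / safe
    cdf = 0.5 * (1.0 + np.vectorize(math.erf, otypes=[float])(z / math.sqrt(2.0)))
    pdf = np.exp(-0.5 * z**2) / math.sqrt(2.0 * math.pi)
    return (mu - f_best) * cdf + safe * pdf

def run_experiment(x):
    """Hypothetical stand-in for the wet-lab measurement y_i (not real data)."""
    return float(np.exp(-((x - 0.7) ** 2) / 0.02) + 0.5 * np.exp(-((x - 0.2) ** 2) / 0.01))

def bayes_opt(n_init=5, n_total=20, seed=0):
    rng = np.random.default_rng(seed)
    grid = np.linspace(0.0, 1.0, 201)                        # candidate conditions
    X = (np.arange(n_init) + rng.random(n_init)) / n_init   # 1-D Latin Hypercube
    y = np.array([run_experiment(x) for x in X])
    for _ in range(n_init, n_total):
        # a. Model training (here: conditioning a fixed-hyperparameter GP on centered data).
        mu, sigma = gp_posterior(X, y - y.mean(), grid)
        # b. Acquisition maximization over the candidate grid.
        x_next = grid[np.argmax(expected_improvement(mu + y.mean(), sigma, y.max()))]
        # c. Experiment execution, d. data augmentation.
        X = np.append(X, x_next)
        y = np.append(y, run_experiment(x_next))
    return X, y

X_obs, y_obs = bayes_opt()
best_so_far = np.maximum.accumulate(y_obs)   # cumulative maximum, useful for convergence plots
```

In a real campaign, `run_experiment` would be replaced by the measured response, and the GP hyperparameters would be re-estimated at each iteration.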

Diagram: The Bayesian Optimization Sequential Experimental Loop

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials & Computational Tools for BO-Driven Pathway Optimization

| Item / Reagent | Function / Purpose in BO Workflow |

|---|---|

| Robotic Liquid Handler (e.g., Opentrons) | Enables precise, automated execution of the proposed experimental conditions from the BO loop in microtiter plates. |

| High-Throughput Assay Kits (e.g., HPLC-MS, fluorescent reporters) | Provides the quantitative output (y_i) for each experiment (e.g., metabolite concentration, product titer) with necessary throughput. |

| DOE Software (e.g., JMP, pyDOE) | Generates the initial space-filling design for the first batch of experiments. |

| Bayesian Optimization Library (e.g., BoTorch, GPyOpt, scikit-optimize) | Implements the core algorithms: GP regression, acquisition functions, and the sequential loop. |

| Laboratory Information Management System (LIMS) | Tracks and manages all experimental data, linking proposed parameters (x_i) to observed results (y_i) for robust dataset construction. |

Case Study Protocol: Optimizing a Three-Enzyme Cascade

Objective: Maximize the yield of final product P in a cell-free enzymatic cascade.

Parameters (x) to Optimize:

- Enzyme 1 Concentration: [0.1, 10.0] µM

- Enzyme 2 Concentration: [0.1, 10.0] µM

- Enzyme 3 Concentration: [0.1, 10.0] µM

- Cofactor Mg²⁺: [1.0, 50.0] mM

Detailed Experimental Protocol:

Initialization:

- Use `pyDOE` to generate a 12-point Latin Hypercube Sample across the 4D parameter space.

- Prepare reaction mixtures in a 96-well plate according to these 12 conditions using a liquid handler.

- Incubate at 30°C for 2 hours. Quench reactions.

- Quantify [P] via UPLC-MS. Record yields as initial dataset D.

BO Loop Setup:

- Use `BoTorch` with a `SingleTaskGP` model (Matérn 5/2 kernel).

- Configure the acquisition function as `qExpectedImprovement` (batch size of 1 for sequential).

- Set budget: 30 sequential experiments.

Sequential Optimization:

- For each of the 30 iterations:

  - Fit the GP model to the current D.

  - Compute the next point `x_next` by maximizing EI.

  - Prepare and run a single reaction at `x_next`.

  - Measure yield and append `(x_next, y_next)` to D.

Validation:

- Identify the parameter set `x*` with the highest observed yield in the final D.

- Perform 3 biological replicate experiments at `x*` and a standard condition to confirm statistically significant improvement.

Within the thesis on Bayesian Optimization (BO) for multistep pathway optimization in drug development, a critical limitation must be addressed: the inadequacy of traditional Design of Experiments (DOE). While classical DOE (e.g., full factorial, response surface methodology) excels in optimizing a few factors with cheap, abundant data, it becomes computationally and resource-prohibitive for high-dimensional (many factors) and expensive experiments (e.g., cell culture assays, animal studies, clinical trials). This application note details why traditional methods fail and outlines protocols for implementing a superior alternative: Sequential Model-Based Optimization, often embodied by Bayesian Optimization.

The Core Shortcomings of Traditional DOE

Traditional DOE methods require a pre-defined, static set of experimental runs. Their scalability issues are quantified below.

Table 1: Comparison of Traditional DOE Scale vs. Resource Requirements

| DOE Method | Number of Experiments for k Factors | Curse of Dimensionality Impact | Suitability for Expensive Runs |

|---|---|---|---|

| Full Factorial (2 levels) | 2^k | Catastrophic: 10 factors = 1024 runs | Very Poor |

| Central Composite (RSM) | ~2^k + 2k + cp | Severe: 10 factors ~ 1,000+ runs | Poor |

| Fractional Factorial | 2^(k-p) | Moderate, but loses interaction clarity | Moderate for screening only |

| Optimal (D/O) Designs | User-defined, but grows linearly | Manages growth but is static | Moderate, but non-sequential |

Key Insight: The exponential growth in required runs directly conflicts with the high cost (time, money, materials) of each experiment in pathway research. Furthermore, traditional DOE treats all experiments as equally informative, wasting resources on non-optimal regions.
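The run counts in Table 1 are easy to reproduce. The short sketch below tabulates them for a few factor counts; the number of center points (`center_points=5`) and the fractionation level `p` are assumptions chosen for illustration, and the 50-run figure is the BO budget used in the protocol that follows.

```python
def full_factorial_runs(k, levels=2):
    """Runs for a full factorial design: levels^k."""
    return levels ** k

def central_composite_runs(k, center_points=5):
    """Runs for a central composite design: 2^k factorial + 2k axial + center points."""
    return 2 ** k + 2 * k + center_points

def fractional_factorial_runs(k, p):
    """Runs for a 2^(k-p) fractional factorial design."""
    return 2 ** (k - p)

BO_BUDGET = 50  # sequential budget used in the protocol below

for k in (4, 6, 8, 10):
    print(
        f"k={k:2d}  full={full_factorial_runs(k):5d}  "
        f"CCD={central_composite_runs(k):5d}  "
        f"fractional(p={k // 2})={fractional_factorial_runs(k, k // 2):3d}  "
        f"BO budget={BO_BUDGET}"
    )
```

For 10 factors the full factorial alone needs 1024 runs, roughly twenty times the 50-run sequential budget.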

Protocol: Transitioning to Bayesian Optimization for Pathway Optimization

This protocol provides a step-by-step methodology for implementing a Bayesian Optimization loop to optimize a multistep cell signaling pathway readout (e.g., cytokine yield).

Protocol Title: Sequential Bayesian Optimization for High-Dimensional Cell Culture Pathway Optimization.

Objective: To maximize the output of a desired protein (e.g., a therapeutic antibody) from a transfected cell line by optimizing 8+ interdependent factors (e.g., transfection reagent concentration, incubation temperature, media components, induction timing) with a limited budget of 50 experimental batches.

Materials & Reagents:

- Cell Line: HEK293 or CHO cells.

- Expression Vector: Encoding target protein with inducible promoter.

- Transfection Reagent: Polyethylenimine (PEI) or lipid-based.

- Serum-Free Media: Chemically defined media for production.

- Feed Supplements: Glucose, amino acids, growth factors.

- Induction Agent: e.g., Doxycycline for Tet-On systems.

- Analysis Kit: ELISA or HPLC kit for quantifying target protein titer.

Procedure:

Step 1: Define Search Space & Objective (Pre-loop).

- List all factors (x) to optimize (e.g., 8 factors). For each, define a plausible minimum and maximum value (e.g., pH: 6.8-7.4, Temperature: 32-37°C).

- Define the primary objective function (y), e.g., `Protein Titer (mg/L) at 96 hours`.

- Initial Design: Use a space-filling design (e.g., Latin Hypercube) to select 10-15 initial, diverse experiments from the high-dimensional space. Execute these and measure y.

Step 2: Build a Probabilistic Surrogate Model.

- Train a Gaussian Process (GP) regression model on all accumulated data {x, y}.

- The GP models the unknown function `y = f(x)` and provides a prediction (mean) and an uncertainty estimate (variance) for any point in the high-dimensional space.

Step 3: Optimize the Acquisition Function.

- Compute an acquisition function across the search space using the GP's predictions. Use Expected Improvement (EI).

- EI balances exploitation (probing areas predicted to be high) and exploration (probing areas of high uncertainty).

- Find the point `x_next` where EI is maximized. This step is purely computational and cheap compared with running a wet-lab experiment.

Step 4: Execute Experiment & Update Loop.

- Perform the single, most informative experiment defined by `x_next` in the lab.

- Measure the resulting objective `y_next`.

- Add the new data pair {`x_next`, `y_next`} to the training dataset.

- Repeat from Step 2 until the experimental budget (e.g., 50 runs) is exhausted.

Expected Outcome: The BO algorithm sequentially identifies and tests high-performing conditions, concentrating experiments in promising regions of the high-dimensional factor space. Under the same budget, this is expected to yield a higher final protein titer than a static traditional DOE design, although the size of the gain is problem-dependent.

Visualizing the Workflow

Title: Bayesian Optimization Sequential Loop

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Materials for Cell-Based Pathway Optimization Experiments

| Item | Function in Protocol | Example Product/Category |

|---|---|---|

| Chemically Defined Media | Provides a consistent, serum-free environment for precise factor modulation. | Gibco CD CHO Media, Thermo Fisher. |

| High-Efficiency Transfection Reagent | Enables genetic perturbation (e.g., pathway reporter genes) in hard-to-transfect cells. | Lipofectamine 3000, Polyplus PEIpro. |

| Inducible Expression System | Allows controlled timing of gene expression, a critical optimization factor. | Tet-On 3G Inducible Gene Expression System (Clontech). |

| Metabolite Analysis Kits | Quantifies key metabolites (glucose, lactate) to model cell metabolism and health. | BioProfile FLEX2 Analyzer (Nova Biomedical). |

| Microplate-Based Titer Assay | Enables high-throughput quantification of target protein yield from small-volume cultures. | SimpleStep ELISA Kits, Protein A/G HPLC. |

| DOE & BO Software | Platforms for designing experiments and building surrogate models. | JMP, Modde, Ax, custom Python (scikit-optimize, BoTorch). |

Within the broader thesis on Bayesian Optimization (BO) for multistep pathway optimization in drug development, this document details the core algorithmic components. BO is essential for efficiently optimizing complex, expensive-to-evaluate biological systems, such as multi-enzyme synthesis pathways or cell culture parameter cascades. The framework hinges on two pillars: a probabilistic surrogate model (typically a Gaussian Process) to approximate the unknown system, and an acquisition function to intelligently select the next experiment by balancing exploration and exploitation.

Gaussian Processes (GPs) as Surrogate Models

A Gaussian Process is a non-parametric Bayesian model defining a distribution over functions. It is fully specified by a mean function m(x) and a covariance (kernel) function k(x, x').

Key Mathematical Formulation:

- Prior: f(x) ~ GP(m(x), k(x, x')). Often m(x) = 0 after centering data.

- Posterior: Given observed data D = (X, y), the posterior predictive distribution for a new point x* is Gaussian with:

- Mean: μ(x∗) = k(x∗, X)[K(X,X) + σₙ²I]⁻¹y

- Variance: σ²(x∗) = k(x∗, x∗) - k(x∗, X)[K(X,X) + σₙ²I]⁻¹k(X, x∗)

where K is the kernel matrix and σₙ² is the observation noise variance.
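The posterior mean and variance formulas above map onto a few lines of linear algebra. The sketch below is a minimal NumPy version assuming a zero prior mean and a unit-variance RBF kernel; it uses a Cholesky factorization instead of an explicit matrix inverse, which is the usual numerically stable choice. Function names and default values are illustrative.

```python
import numpy as np

def rbf_kernel(A, B, lengthscale=1.0):
    """k(x, x') = exp(-0.5 * ||x - x'||² / l²) for all row pairs of A and B."""
    sq = ((A[:, None, :] - B[None, :, :]) ** 2).sum(axis=-1)
    return np.exp(-0.5 * sq / lengthscale**2)

def gp_posterior(X, y, X_star, noise_var=1e-6, lengthscale=1.0):
    """Posterior mean and variance at X_star for a zero-mean GP."""
    K = rbf_kernel(X, X, lengthscale) + noise_var * np.eye(len(X))   # K(X,X) + σn² I
    K_star = rbf_kernel(X_star, X, lengthscale)                      # k(x*, X)
    L = np.linalg.cholesky(K)
    alpha = np.linalg.solve(L.T, np.linalg.solve(L, y))              # [K + σn² I]⁻¹ y
    mean = K_star @ alpha
    v = np.linalg.solve(L, K_star.T)
    var = 1.0 - np.sum(v**2, axis=0)                                 # k(x*, x*) = 1 for this kernel
    return mean, np.clip(var, 0.0, None)

# Three hypothetical 2-D conditions with centered responses.
X_train = np.array([[0.0, 0.0], [1.0, 0.0], [0.0, 1.0]])
y_train = np.array([1.0, 2.0, 3.0])
mean_at_train, var_at_train = gp_posterior(X_train, y_train, X_train)
mean_far, var_far = gp_posterior(X_train, y_train, np.array([[10.0, 10.0]]))
```

With very small noise the posterior reproduces the observations at the training points with near-zero variance, while far from any data it reverts to the prior (mean 0, variance 1).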

Common Kernels in Pathway Optimization:

| Kernel | Formula | Key Property | Best For Pathway Context |

|---|---|---|---|

| Radial Basis (RBF) | k(x,x') = exp(-0.5 ‖x-x'‖² / l²) | Smooth, infinitely differentiable | Modeling continuous biochemical responses (e.g., yield vs. pH/Temp). |

| Matérn 5/2 | k(x,x') = (1 + √5r/l + 5r²/(3l²))exp(-√5r/l), with r = ‖x-x'‖ | Less smooth than RBF, allows for variability | Capturing sharper transitions or noise-prone assay outputs. |

| Linear | k(x,x') = σ_b² + σ_v²(x-c)·(x'-c) | Models linear relationships | Preliminary screening phases where linear trends dominate. |

Protocol 2.1: Implementing a GP Prior for Pathway Screening

- Define Search Space: Parameterize your multistep pathway. Example: For a 3-enzyme cascade, define variables: [E1_conc (μM), E2_conc (μM), Reaction_pH, Incubation_time (hr)].

- Choose Kernel: Start with Matérn 5/2 kernel for robust performance. Initialize length-scale l as 20% of the parameter range.

- Specify Likelihood: Use a Gaussian likelihood with a noise parameter σₙ. Initialize based on known assay variance.

- Optimize Hyperparameters: Maximize the log marginal likelihood log p(y | X, θ) using an optimizer (e.g., L-BFGS) over kernel parameters θ = {l, σₙ}.

- Validate: Perform leave-one-out cross-validation on initial design points. The absolute standardized cross-validated residual, |SCVR|, should be below 3 for every point.
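The leave-one-out check in the last step does not require refitting the GP once per point. For a zero-mean GP with fixed hyperparameters, the leave-one-out predictive mean and variance follow in closed form from the inverse of the noisy kernel matrix. The sketch below assumes that setting; `loo_scvr` and `flag_outliers` are illustrative names.

```python
import numpy as np

def loo_scvr(K_noisy, y):
    """Standardized cross-validated residuals for a zero-mean GP.

    K_noisy is K(X,X) + σn² I. Uses the closed-form identities
    mean_-i = y_i - [K⁻¹y]_i / [K⁻¹]_ii and var_-i = 1 / [K⁻¹]_ii.
    """
    K_inv = np.linalg.inv(K_noisy)
    alpha = K_inv @ y
    diag = np.diag(K_inv)
    loo_mean = y - alpha / diag
    loo_var = 1.0 / diag
    return (y - loo_mean) / np.sqrt(loo_var)

def flag_outliers(scvr, threshold=3.0):
    """Indices of design points whose |SCVR| reaches the threshold."""
    return np.flatnonzero(np.abs(scvr) >= threshold)
```

Points flagged by `flag_outliers` suggest either a measurement problem or a poorly chosen kernel or lengthscale, and are worth inspecting before starting the sequential loop.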

Acquisition Functions for Sequential Design

The acquisition function α(x) guides the next experiment by quantifying the utility of evaluating a candidate x. It uses the GP posterior to balance exploration (high uncertainty) and exploitation (high predicted mean).

Quantitative Comparison of Acquisition Functions:

| Function | Formula (Minimization Context) | Parameter(s) | Balance Behavior |

|---|---|---|---|

| Probability of Improvement (PI) | α_PI(x) = Φ((f(x⁺) - μ(x) - ξ) / σ(x)) | ξ (jitter) | Exploitation-heavy. Favors areas likely to beat current best f(x⁺). |

| Expected Improvement (EI) | α_EI(x) = (f(x⁺)-μ(x)-ξ)Φ(Z) + σ(x)φ(Z) Z=(f(x⁺)-μ(x)-ξ)/σ(x) | ξ (exploration jitter) | Adaptive balance. Industry standard for efficiency. |

| Upper Confidence Bound (UCB) | α_UCB(x) = -μ(x) + β σ(x) | β (exploration weight) | Exploration-tunable. Direct control via β. Theoretical guarantees. |

Protocol 3.1: Selecting & Optimizing an Acquisition Function for a Pathway Run

- Initial Design: Generate 5-10 points per parameter via Latin Hypercube Sampling (LHS). Evaluate pathway output (e.g., titer, purity).

- GP Fit: Fit the GP model to all available data as per Protocol 2.1.

- Acquisition Choice: For <20 experiments, use EI with ξ=0.01 to prevent over-exploitation. For >20 experiments or high noise, use UCB with a scheduling function (e.g., β_t = 0.2 * log(2t)).

- Optimize Acquisition: Find x_next = argmax α(x) using multi-start gradient-based optimization (≥10 random restarts).

- Execute Experiment: Run the biological experiment at the suggested conditions x_next.

- Iterate: Update GP with new data. Loop (Steps 2-5) until resource budget is exhausted or convergence (e.g., <2% improvement over 5 iterations).
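Two small pieces of this protocol are easy to get subtly wrong in practice: the UCB exploration schedule and the stopping rule. The sketch below shows one possible reading of each. Interpreting "<2% improvement over 5 iterations" as the relative gain in the best-so-far value across the last five iterations is an assumption, as are the function names.

```python
import math

def ucb_beta(t):
    """Exploration weight β_t = 0.2 * log(2t) for iteration t >= 1."""
    if t < 1:
        raise ValueError("iteration index t must be >= 1")
    return 0.2 * math.log(2 * t)

def has_converged(best_so_far, window=5, rel_tol=0.02):
    """True if the running best improved by less than rel_tol over the last `window` iterations.

    best_so_far is the cumulative maximum of the objective, one entry per experiment.
    """
    if len(best_so_far) <= window:
        return False                      # not enough history yet
    old, new = best_so_far[-window - 1], best_so_far[-1]
    if old == 0:
        return new == old                 # avoid dividing by zero
    return (new - old) / abs(old) < rel_tol

# Example with hypothetical titers (best value seen so far after each run).
history = [10.0, 14.0, 18.0, 18.0, 18.2, 18.2, 18.2, 18.3]
stop_now = has_converged(history)
```

Because β_t grows only logarithmically, the schedule keeps a modest exploration bonus even late in the campaign, while the stopping rule prevents spending budget once the running best has flattened.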

Visualization: BO Workflow in Pathway Optimization

Title: Bayesian Optimization Loop for Pathway Screening

The Scientist's Toolkit: Research Reagent & Software Solutions

| Item / Solution | Function in BO-Driven Pathway Optimization |

|---|---|

| High-Throughput Microbioreactor Array (e.g., ambr) | Enables parallelized, miniaturized cultivation to generate the initial design and sequential data points with high reproducibility. |

| DoE Software (e.g., JMP, MODDE) | Used to generate the initial space-filling Latin Hypercube design for efficient coverage of the parameter space. |

| GPyTorch / scikit-learn | Python libraries for building and training flexible Gaussian Process models with automatic differentiation. |

| BoTorch / Ax | Specialized frameworks for Bayesian Optimization, providing state-of-the-art acquisition functions (qEI, qUCB) and optimization. |

| Robotic Liquid Handling System | Automates the setup of multistep pathway reactions (enzyme additions, buffer changes) to ensure precision for suggested conditions. |

| Multi-Mode Microplate Reader | Provides the objective function data (e.g., fluorescence, absorbance) for pathway output quantification after each BO iteration. |

Application Notes: Bayesian Optimization for Multistep Pathway Optimization

1.1 Thesis Context Within the broader thesis on Bayesian Optimization (BO) for multistep pathway optimization, this document details its application to a quintessential drug development challenge: optimizing the multi-step biosynthesis pathway for a novel polyketide antibiotic in Streptomyces coelicolor. This serves as a real-world analogy for how BO efficiently navigates vast, noisy, and resource-constrained experimental landscapes, such as those in metabolic engineering and cell line development.

1.2 Core Analogy: The Experimental Landscape as a Terrain Imagine the yield/titer of the desired antibiotic as the "altitude" on a geographical map. Each combination of experimental parameters (e.g., promoter strengths, enzyme concentrations, fermentation conditions) is a unique (x,y) coordinate. The goal is to find the highest peak (global optimum) with the fewest possible "measurement hikes" (expensive experiments). BO acts as an expert guide:

- Prior Belief (Surrogate Model): Starts with an initial, probabilistic map of the terrain based on known data or domain expertise (Gaussian Process).

- Acquisition Function: Calculates the most informative "next step" by balancing exploration of unknown regions and exploitation of known promising areas.

- Iterative Learning: Each new experimental result updates the map, intelligently guiding the next experiment towards higher ground.

1.3 Current Data Summary from Recent Studies Recent applications of BO in biological pathway optimization demonstrate significant efficiency gains.

Table 1: Comparative Performance of BO vs. Traditional Methods in Pathway Optimization

| Optimization Method | Avg. Experiments to Reach 90% of Max Titer | Max Final Titer Achieved (mg/L) | Key Parameters Optimized | Reference Year |

|---|---|---|---|---|

| One-Factor-at-a-Time (OFAT) | 48 | 120 | Promoter strength, induction timing | (Benchmark) |

| Design of Experiments (DoE) | 22 | 185 | Media components, temperature, pH | (Benchmark) |

| Bayesian Optimization (BO) | 14 | 210 | Promoter combos, enzyme ratios, feed rate | 2023 |

| BO with Prior Knowledge (Multi-fidelity) | 9 | 205 | Pathway gene expression, bioreactor conditions | 2024 |

Table 2: Example BO Hyperparameters for a 6-Parameter Pathway Optimization

| Hyperparameter | Typical Setting | Function in Navigating the Landscape |

|---|---|---|

| Surrogate Model | Gaussian Process (Matern 5/2 kernel) | Models the smoothness and uncertainty of the experimental response surface. |

| Acquisition Function | Expected Improvement (EI) | Balances exploring uncertain regions vs. exploiting known high-yield regions. |

| Initial Design Points | 10 (via Latin Hypercube) | Provides a sparse but space-filling initial map of the terrain. |

| Optimization Iterations | 20-30 | The number of guided "steps" taken to converge on the optimum. |

Detailed Experimental Protocol: BO-Driven Antibiotic Pathway Optimization

2.1 Protocol Title: Iterative Bayesian Optimization of a Heterologous Polyketide Pathway in S. coelicolor.

2.2 Objective: To maximize the titer of a target polyketide (Compound X) by optimizing a 4-gene expression cassette and two bioreactor process variables using a BO framework.

2.3 Materials & Reagent Solutions Table 3: Research Reagent Solutions & Key Materials

| Item/Catalog (Example) | Function in the Experiment |

|---|---|

| pCRISPomyces-2 Plasmid Kit | Modular toolkit for genomic integration of pathway genes with tunable promoters. |

| Tunable Promoter Library (J23100 series variants) | Provides a gradient of transcriptional strengths for each gene to create combinatorial diversity. |

| S. coelicolor A3(2) Host Strain | Model actinomycete chassis for antibiotic production. |

| RSM Medium (Modified R5) | Defined fermentation medium supporting high-density growth and secondary metabolism. |

| LC-MS/MS System (e.g., Agilent 6470) | For quantitative analysis of Compound X titer and key pathway intermediates. |

| Bayesian Optimization Software (e.g., Ax, BoTorch, or custom Python/GPyOpt) | Platform for running the surrogate model, acquisition function, and suggesting next experiment. |

| 24-well Deep-Well Microtiter Plates | Enables high-throughput, parallel mini-fermentations under controlled conditions. |

2.4 Procedure

Phase 1: Experimental Space Definition & Initial Design (Week 1)

- Define Parameters & Bounds: List the six parameters to optimize. Assign a realistic experimental range for each.

  - P_geneA: Strength of promoter for gene A (0.1 - 1.0 relative units).

  - P_geneB: Strength of promoter for gene B (0.1 - 1.0).

  - P_geneC: Strength of promoter for gene C (0.2 - 1.5).

  - P_geneD: Strength of promoter for gene D (0.05 - 0.8).

  - Temp: Fermentation temperature (24°C - 30°C).

  - Induction_OD: Optical density for pathway induction (0.4 - 0.8).

- Generate Initial Dataset: Using the BO software, create an initial set of 10 experimental conditions via Latin Hypercube Sampling to ensure space-filling coverage of the 6-dimensional parameter space.

- Construct Strains: For each of the 10 promoter combinations, assemble the expression cassette using Golden Gate assembly and integrate into the S. coelicolor chromosome via CRISPR-Cas9.
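The initial design step can be sketched in a few lines of NumPy. This is a minimal illustration rather than the output of any particular BO package: the function name `latin_hypercube` and the seed are assumptions, and the bounds are copied from the parameter list above. In practice the sampled promoter strengths would still need to be mapped to the nearest available promoter variants.

```python
import numpy as np

# Bounds copied from the six parameters listed in Phase 1.
BOUNDS = {
    "P_geneA": (0.1, 1.0),
    "P_geneB": (0.1, 1.0),
    "P_geneC": (0.2, 1.5),
    "P_geneD": (0.05, 0.8),
    "Temp": (24.0, 30.0),
    "Induction_OD": (0.4, 0.8),
}

def latin_hypercube(n_points, bounds, seed=0):
    """Return an (n_points, n_dims) Latin Hypercube sample inside the bounds."""
    rng = np.random.default_rng(seed)
    lows = np.array([lo for lo, _ in bounds.values()])
    highs = np.array([hi for _, hi in bounds.values()])
    n_dims = len(bounds)
    # One random point inside each of n_points equal-width strata per dimension.
    unit = (np.arange(n_points)[:, None] + rng.random((n_points, n_dims))) / n_points
    # Shuffle the strata independently in every dimension.
    for j in range(n_dims):
        unit[:, j] = rng.permutation(unit[:, j])
    return lows + unit * (highs - lows)

design = latin_hypercube(10, BOUNDS)  # 10 conditions x 6 parameters
```

Each row of `design` is one experimental condition, and every parameter range is split into 10 strata that are each sampled exactly once.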

Phase 2: High-Throughput Experimentation & Iterative BO Loop (Weeks 2-5)

- Mini-Fermentation: Inoculate each of the 10 engineered strains into 2 mL of RSM medium in 24-well deep-well plates. Run fermentations according to the assigned Temp and Induction_OD parameters.

- Quantitative Analysis: At 120 hours, harvest cultures. Extract metabolites and quantify the titer of Compound X using LC-MS/MS. Normalize data to cell dry weight (g/L).

- Update BO Model: Input the six parameters and the corresponding measured titer for all 10 experiments into the BO software. The Gaussian Process model will update its surrogate function (the probabilistic "map").

- Suggest Next Experiment: The acquisition function (e.g., Expected Improvement) will calculate the single most promising parameter set (the "next coordinate") to test, balancing high predicted titer with high uncertainty.

- Iterate: Construct the new strain, run the fermentation, and measure the titer, following the Construct Strains, Mini-Fermentation, and Quantitative Analysis steps above. Add this new data point to the dataset.

- Loop Termination: Repeat the "Suggest -> Experiment -> Update" loop for 20-25 additional iterations, or until the titer plateaus (e.g., <5% improvement over three consecutive iterations).
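The plateau rule can be made explicit in code. The sketch below assumes one possible reading of the criterion: the running-best titer has improved by less than 5% in total across the last three iterations. The function name `has_plateaued` and its arguments are hypothetical.

```python
def has_plateaued(running_best, rel_tol=0.05, patience=3):
    """Return True when the running-best titer improved by less than
    `rel_tol` (relative) over the last `patience` iterations.

    `running_best` holds the best titer seen so far after each iteration.
    """
    if len(running_best) <= patience:
        return False  # not enough history to judge
    reference = running_best[-(patience + 1)]
    if reference <= 0:
        return False  # relative improvement is undefined
    return (running_best[-1] - reference) / reference < rel_tol
```

For example, `has_plateaued([1.0, 2.0, 2.02, 2.05, 2.06])` is true because the best titer rose only 3% over the last three iterations. A stricter reading, in which every single iteration must improve by less than 5%, would need a per-step check instead.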

Phase 3: Validation & Analysis (Week 6)

- Validate Optimum: Take the top 3 parameter sets identified by BO and run triplicate bench-scale bioreactor (1L) fermentations to confirm performance in a controlled, scaled environment.

- Pathway Flux Analysis: For the top-performing strain, perform targeted metabolomics on key pathway intermediates to analyze the flux redistribution achieved by the optimized expression balance.

Visualizations

Title: Bayesian Optimization Iterative Workflow

Title: BO-Optimized Polyketide Pathway & Variables

Implementing Bayesian Optimization: A Step-by-Step Guide for Pathway Engineering

Within a Bayesian optimization (BO) framework for multistep biological pathway optimization—such as drug candidate synthesis or cell culture process development—the precise definition of the search space is the critical first step. This initial boundary determination constrains the optimization problem and directly influences the efficiency and success of subsequent BO cycles. A poorly defined space leads to wasted experimental resources, while an overly restrictive one may exclude the global optimum. This protocol details the systematic approach to defining a high-dimensional, constrained search space for a multistep process, contextualized within a broader research thesis applying BO to metabolic engineering and biopharmaceutical production.

Foundational Concepts: Quantitative Parameters of a Multistep Process

A multistep process is characterized by controllable input parameters (decision variables) and measured outputs (objectives/constraints). The search space is the hyperdimensional region encompassing all possible combinations of these input parameters.

Table 1: Core Quantitative Dimensions of a Multistep Process Search Space

| Dimension | Description | Typical Examples in Bioprocessing | Data Type |

|---|---|---|---|

| Continuous Variables | Infinitely adjustable parameters within bounds. | Temperature (°C), pH, Dissolved Oxygen (%), media component concentration (mM), induction time (h). | Float |

| Discrete/Categorical Variables | Finite set of distinct options. | Cell line strain (CHO-K1, GS-NS0), promoter type (Inducible, Constitutive), chromatography resin (A, B, C). | Integer/String |

| Inter-Step Dependent Variables | Parameters where the value in step n depends on the outcome of step n-1. | Harvest cell density (cells/mL) passed to next step, metabolite concentration from previous reaction. | Float |

| Constraints | Hard limits that define feasible regions. | Max allowable reagent cost, total process time, regulatory purity thresholds (>99%). | Boolean/Linear |

Protocol: Defining the Search Space

Protocol: Process Deconstruction and Parameter Identification

Objective: To systematically list all adjustable factors across all steps of the pathway. Materials: Process flow diagrams, historical batch records, subject matter expert (SME) input. Procedure:

- Map the Process: Create a detailed stepwise workflow (e.g., Seed Train → Production Bioreactor → Harvest → Purification).

- Brainstorm Variables: For each step, convene a cross-functional team (Fermentation, Analytics, DSP) to list every conceivable adjustable factor.

- Categorize: Classify each variable as Continuous, Discrete/Categorical, or Dependent (see Table 1).

- Document: Create a master parameter list with preliminary, literature- or experience-based bounds for each.

Diagram Title: Workflow for Parameter Identification

Protocol: Establishing Preliminary Bounds via High-Throughput Screening (HTS)

Objective: To replace preliminary bounds with empirically derived limits using cost-effective, low-volume experiments. Materials: Automated liquid handlers, microtiter plates, Design of Experiment (DoE) software. Procedure:

- Design a Scoping DoE: For continuous variables, use a wide-range Plackett-Burman or fractional factorial design to identify significant factors.

- Execute HTS: Perform the designed experiment in a scaled-down model (e.g., 96-deep-well plate fermentations).

- Analyze for Feasibility: Measure critical outputs (e.g., cell viability, product titer). Define the feasible region as the parameter ranges where the process yields a non-zero, measurable product.

- Set Conservative Bounds: Set initial BO search bounds within the inner 80-90% of the observed feasible region to avoid optimization failure at edges.
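Setting conservative bounds is simple interval arithmetic. The helper below is a sketch with a hypothetical name (`shrink_bounds`) that keeps the central fraction of an observed feasible range; the example range is illustrative only.

```python
def shrink_bounds(low, high, fraction=0.8):
    """Return the central `fraction` of the interval [low, high]."""
    if not 0.0 < fraction <= 1.0:
        raise ValueError("fraction must be in (0, 1]")
    margin = (1.0 - fraction) * (high - low) / 2.0
    return low + margin, high - margin

# Illustrative feasible range for a feed rate, in mL/h.
feed_low, feed_high = shrink_bounds(0.5, 8.0, fraction=0.8)
```

With a feasible range of 0.5 to 8.0 mL/h and `fraction=0.8`, the margin is 0.75 mL/h on each side. Rounding the resulting bounds to practical set points is a judgment call for the experimenter.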

Table 2: Example HTS Data for a 2-Step Process (Metabolite Feeding)

| Step | Variable | Preliminary Range | HTS Feasible Range (95% CI) | Selected BO Bound |

|---|---|---|---|---|

| Seed Culture | Induction Temperature | 28-38°C | 30-36°C | 30.5-35.5°C |

| Production | Metabolite A Feed Rate | 0.1-10 mL/h | 0.5-8.0 mL/h | 1.0-7.0 mL/h |

| Production | pH | 6.5-7.5 | 6.8-7.3 | 6.9-7.2 |

Protocol: Incorporating Constraints and Dependencies

Objective: To mathematically encode process limitations and inter-step relationships. Materials: Process modeling software, historical data for regression. Procedure:

- Define Hard Constraints: Formulate inequalities (e.g., Total Cost of Raw Materials < $X/g, Total Process Time < Y hours).

- Model Dependencies: For dependent variables, establish a transfer function. Example: Harvest Vol. Step2 = f(Cell Density Step1, Viability Step1). This may be a simple linear scaling or a placeholder for a surrogate model to be updated during BO.

- Integrate into Search Space Definition: The search space is not a simple hypercube but a constrained region (a polytope when all constraints are linear) defined by these constraints. Document this as a set of mathematical rules for the BO algorithm.
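One way to document these rules is as plain functions that a BO loop can call to reject infeasible candidates. Everything in this sketch is hypothetical: the dictionary keys, the linear form of the transfer function, and its `scale` coefficient are placeholders for quantities that would come from process models or historical data.

```python
def harvest_volume_step2(cell_density_step1, viability_step1, scale=1.0e-7):
    """Placeholder linear transfer function for the inter-step dependency.

    `scale` is an assumed coefficient that would be fitted to historical data.
    """
    return scale * cell_density_step1 * viability_step1

def is_feasible(candidate, max_cost_per_g, max_time_h):
    """Check the two hard constraints for one candidate condition."""
    return (
        candidate["raw_material_cost_per_g"] < max_cost_per_g
        and candidate["total_process_time_h"] < max_time_h
    )

def filter_feasible(candidates, max_cost_per_g, max_time_h):
    """Keep only the candidates that satisfy every hard constraint."""
    return [c for c in candidates if is_feasible(c, max_cost_per_g, max_time_h)]
```

Filtering candidates before they reach the acquisition function is the simplest option. Some BO libraries also accept constraints directly, which avoids proposing points that are later discarded.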

Diagram Title: Constraining the Search Space

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Search Space Definition Experiments

| Item | Function in Protocol | Example Product/Catalog |

|---|---|---|

| DoE Software | Designs efficient scoping experiments to map feasible parameter ranges. | JMP Software, Modde Go, Design-Expert. |

| Automated Liquid Handler | Enables high-throughput execution of scoping DoE in microplates. | Hamilton Microlab STAR, Tecan Fluent. |

| Miniature Bioreactor System | Provides scaled-down, parallelized models of fermentation steps with monitoring. | Sartorius Ambr 15/250, Eppendorf DASbox. |

| Process Analytical Technology (PAT) | In-line sensors for rapid measurement of key outputs (biomass, metabolites). | Finesse TruBio sensors, Cytiva Biocapacitance probes. |

| Statistical Analysis Software | Analyzes HTS data to calculate feasible ranges and fit dependency models. | R, Python (SciPy, scikit-learn), SIMCA. |

A rigorously defined search space, derived from empirical scoping data and clearly encoded constraints, establishes a robust foundation for Bayesian optimization. This structured approach prevents the BO algorithm from exploring physically impossible or economically inviable regions, dramatically accelerating the convergence to an optimal multistep process configuration. Subsequent steps in the thesis will address the design of the objective function and the iterative BO cycle within this defined space.

Within the thesis on Bayesian Optimization (BO) for multistep biochemical pathway optimization, the surrogate model is the core probabilistic component that guides the search for optimal conditions. It approximates the expensive, unknown objective function (e.g., pathway yield, titer, or selectivity) based on observed data. This document details the application notes and protocols for selecting and fitting a Gaussian Process (GP) regression model, the most prevalent surrogate in BO for drug development.

Surrogate Model Comparison Table

The following table compares common surrogate model candidates for biochemical pathway optimization.

| Model Type | Key Advantages | Key Limitations | Best Suited For | Typical Hyperparameters to Tune |

|---|---|---|---|---|

| Gaussian Process (GP) | Provides uncertainty estimates, well-calibrated, works well with small data. | O(n³) computational cost, choice of kernel is critical. | Experiments with <100 evaluations, continuous parameters. | Kernel length scales, noise variance, kernel variance. |

| Random Forest (RF) | Handles high dimensions, mixed data types, faster than GP for large n. | Uncertainty estimates are less reliable than GP. | >100 evaluations, categorical/numerical mixed spaces. | Number of trees, tree depth, minimum samples per leaf. |

| Bayesian Neural Network (BNN) | Extremely flexible, scalable to very large datasets. | Complex implementation, computationally intensive training. | Very large datasets (>10k points), high-dimensional spaces. | Network architecture, prior distributions, learning rate. |

Gaussian Process Regression: Core Protocol

Theoretical Basis

A GP defines a prior over functions, described fully by its mean function m(x) and covariance (kernel) function k(x, x'). Given observed data D = {X, y} and a zero prior mean, the posterior predictive distribution at a new point x is Gaussian with mean and variance:

μ(x) = kᵀ (K + σₙ²I)⁻¹ y

σ²(x) = k(x, x) - kᵀ (K + σₙ²I)⁻¹ k

where K is the covariance matrix of the observed points, k is the covariance vector between x and the observed points, and σₙ² is the observation noise variance.
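The two posterior equations translate almost directly into NumPy. The sketch below assumes a zero prior mean, a Matérn 5/2 kernel with fixed hyperparameters, and a Cholesky factorization in place of an explicit matrix inverse; the function names are illustrative.

```python
import numpy as np

def matern52(A, B, length_scale=0.5, variance=1.0):
    """Matérn 5/2 kernel matrix between the rows of A and the rows of B."""
    d = np.sqrt(((A[:, None, :] - B[None, :, :]) ** 2).sum(axis=-1))
    r = d / length_scale
    return variance * (1.0 + np.sqrt(5.0) * r + 5.0 / 3.0 * r**2) * np.exp(-np.sqrt(5.0) * r)

def gp_posterior(X_train, y_train, X_new, noise_var=1e-6, length_scale=0.5, variance=1.0):
    """Zero-mean GP posterior mean and variance at the rows of X_new."""
    K = matern52(X_train, X_train, length_scale, variance) + noise_var * np.eye(len(X_train))
    k_star = matern52(X_train, X_new, length_scale, variance)   # shape (n, m)
    L = np.linalg.cholesky(K)                                   # K = L Lᵀ
    alpha = np.linalg.solve(L.T, np.linalg.solve(L, y_train))   # (K + σₙ²I)⁻¹ y
    v = np.linalg.solve(L, k_star)                              # L⁻¹ k
    mean = k_star.T @ alpha
    var = matern52(X_new, X_new, length_scale, variance).diagonal() - (v**2).sum(axis=0)
    return mean, np.maximum(var, 0.0)  # clip tiny negative round-off
```

At a training point the predicted mean reproduces the observation and the variance collapses toward the noise level. Far from all data the mean returns to zero and the variance returns to the kernel variance.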

Protocol: Fitting a GP for a Pathway Optimization BO Loop

Objective: Model the relationship between pathway input parameters (e.g., temperature, pH, enzyme concentration) and the output (e.g., product yield).

Materials & Pre-requisites:

- Initial experimental dataset (≥5 data points).

- Normalized parameter bounds.

- BO software library (e.g., GPyTorch, scikit-learn, BoTorch).

Procedure:

Data Preprocessing:

- Scale all input parameters to a common range (e.g., [0, 1]) using min-max scaling.

- Consider transforming the output variable if non-Gaussian noise is suspected (e.g., log transform for strictly positive data).

Kernel Selection & Initialization:

- For continuous parameters: Use the Matérn 5/2 kernel as a robust default. It is less smooth than the RBF kernel, often better representing physicochemical phenomena.

- Formula: k(r) = σ² (1 + √5r + (5/3)r²) exp(-√5r), where r is the scaled Euclidean distance.

- For categorical parameters: Use a separate categorical kernel (e.g., Hamming kernel) and form a product kernel with continuous kernels.

- Initialize length scales to ~0.5 (on scaled data) and kernel variance to the variance of the observed output.


Model Fitting (Hyperparameter Optimization):

- Maximize the log marginal likelihood of the data given the hyperparameters (θ: length scales, variance, noise variance).

- Objective Function: log p(y | X, θ) = -½yᵀ(Kθ + σₙ²I)⁻¹y - ½log|Kθ + σₙ²I| - (n/2)log(2π)

- Use a gradient-based optimizer (e.g., L-BFGS-B) with multiple restarts (≥10) from random initializations to avoid poor local maxima.
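The fitting step can be sketched with SciPy's L-BFGS-B optimizer. This is a minimal illustration under several assumptions: a single shared length scale, optimization in log space, hypothetical bounds on each hyperparameter, numerical gradients, and a small jitter term for numerical stability. The first start uses the initialization suggested above (length scale 0.5, kernel variance equal to the output variance).

```python
import numpy as np
from scipy.optimize import minimize

def matern52(A, B, length_scale, variance):
    """Matérn 5/2 kernel matrix between the rows of A and the rows of B."""
    r = np.sqrt(((A[:, None, :] - B[None, :, :]) ** 2).sum(axis=-1)) / length_scale
    return variance * (1.0 + np.sqrt(5.0) * r + 5.0 / 3.0 * r**2) * np.exp(-np.sqrt(5.0) * r)

def neg_log_marginal_likelihood(log_theta, X, y):
    """Negative of log p(y | X, θ) with θ = (length scale, variance, noise variance)."""
    length_scale, variance, noise_var = np.exp(log_theta)
    n = len(y)
    K = matern52(X, X, length_scale, variance) + (noise_var + 1e-8) * np.eye(n)  # jitter
    L = np.linalg.cholesky(K)
    alpha = np.linalg.solve(L.T, np.linalg.solve(L, y))
    # 0.5 * log|K| equals the sum of the log-diagonal of the Cholesky factor.
    lml = -0.5 * y @ alpha - np.log(np.diag(L)).sum() - 0.5 * n * np.log(2.0 * np.pi)
    return -lml

def fit_hyperparameters(X, y, n_restarts=10, seed=0):
    """Multi-start L-BFGS-B; returns (θ, log marginal likelihood) of the best run."""
    rng = np.random.default_rng(seed)
    lo = np.log([1e-2, 1e-2, 1e-6])   # assumed lower bounds
    hi = np.log([1e1, 1e2, 1e0])      # assumed upper bounds
    starts = [np.clip(np.log([0.5, np.var(y), 1e-2]), lo, hi)]
    starts += [rng.uniform(lo, hi) for _ in range(n_restarts - 1)]
    best = None
    for x0 in starts:
        res = minimize(neg_log_marginal_likelihood, x0, args=(X, y),
                       method="L-BFGS-B", bounds=list(zip(lo, hi)))
        if best is None or res.fun < best.fun:
            best = res
    return np.exp(best.x), -best.fun
```

Libraries such as GPyTorch and BoTorch use analytic gradients through automatic differentiation, which scales better than the finite-difference gradients relied on here.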


Model Validation:

- Perform leave-one-out or k-fold cross-validation.

- Calculate the standardized mean squared error (SMSE) and mean standardized log loss (MSLL) to assess predictive quality and uncertainty calibration.
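Both validation metrics are short to compute once held-out predictions are available. The sketch below follows the usual definitions: SMSE divides the mean squared error by the variance of the test targets, and MSLL subtracts the log loss of a trivial Gaussian fitted to the training targets. The function names are illustrative, and `var_pred` is assumed to be the predictive variance of the observation, including noise.

```python
import numpy as np

def smse(y_true, mean_pred):
    """Standardized mean squared error (1.0 corresponds to predicting the mean)."""
    y_true, mean_pred = np.asarray(y_true, float), np.asarray(mean_pred, float)
    return np.mean((y_true - mean_pred) ** 2) / np.var(y_true)

def msll(y_true, mean_pred, var_pred, y_train):
    """Mean standardized log loss (negative values beat the trivial model)."""
    y_true, mean_pred = np.asarray(y_true, float), np.asarray(mean_pred, float)
    var_pred = np.asarray(var_pred, float)
    model_nll = 0.5 * np.log(2.0 * np.pi * var_pred) + (y_true - mean_pred) ** 2 / (2.0 * var_pred)
    m0, v0 = np.mean(y_train), np.var(y_train)
    trivial_nll = 0.5 * np.log(2.0 * np.pi * v0) + (y_true - m0) ** 2 / (2.0 * v0)
    return np.mean(model_nll - trivial_nll)
```

An SMSE well below 1 together with a clearly negative MSLL suggests that both the predictions and the uncertainty estimates carry useful information.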

Integration into BO Loop:

- The fitted GP provides the posterior mean μ(x) and variance σ²(x) for any x in the parameter space.

- These outputs feed directly into the acquisition function (e.g., Expected Improvement) to propose the next experiment.

Visualization of the GP Surrogate Role in BO Workflow

Diagram Title: GP Surrogate Model within the Bayesian Optimization Cycle

The Scientist's Toolkit: Key Research Reagent Solutions

| Item / Reagent | Function in Context | Example/Notes |

|---|---|---|

| GP Software Library (GPyTorch/BoTorch) | Provides flexible, high-performance GP implementation with automatic differentiation for gradient-based hyperparameter optimization. | Essential for modern, scalable BO. BoTorch is built on PyTorch and integrates acquisition functions. |

| Enzymatic Assay Kits (e.g., NAD(P)H-coupled) | Quantifies product formation or substrate consumption in real-time, generating the continuous 'y' value for the GP model. | Enables rapid, high-throughput data generation critical for iterative BO loops. |

| DOE Software (JMP, Modde) | Designs the initial space-filling experiment (e.g., Latin Hypercube) to provide the first data for GP training. | Maximizes information from minimal initial experiments. |

| Lab Automation Liquid Handler | Automates the preparation of reaction mixtures with varying parameters (x), ensuring precision and reproducibility. | Critical for executing the sequence of experiments proposed by the BO algorithm. |

| Kernel Function (Matérn 5/2) | Defines the covariance structure of the GP, imposing assumptions about the smoothness of the objective function. | The choice significantly impacts model accuracy and BO performance. |

Advanced Application Note: Handling Mixed Parameter Spaces

Scenario: Optimizing a pathway with continuous (temperature, concentration) and categorical (enzyme type, buffer system) variables.

Protocol:

- Encoding: One-hot encode categorical parameters.

- Composite Kernel: Construct a kernel that is the product of kernels for continuous dimensions and a separate kernel for categorical dimensions. For two categories A and B:

- Categorical Kernel Value: k(catA, catB) = exp(-λ * δ), where δ=0 if A==B, else 1. λ is a tunable scale parameter.

- Fitting: Fit the composite kernel's hyperparameters jointly via marginal likelihood maximization. The length scales for continuous dimensions and the scale λ for the categorical dimension will be learned.
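The composite kernel can be sketched as an element-wise product of a Matérn 5/2 kernel on the continuous columns and the exponential categorical kernel defined above. For brevity this sketch compares category labels directly instead of one-hot vectors, which gives the same δ. The function names and default hyperparameters are assumptions.

```python
import numpy as np

def matern52(A, B, length_scale=0.5, variance=1.0):
    """Matérn 5/2 kernel matrix on the continuous dimensions."""
    r = np.sqrt(((A[:, None, :] - B[None, :, :]) ** 2).sum(axis=-1)) / length_scale
    return variance * (1.0 + np.sqrt(5.0) * r + 5.0 / 3.0 * r**2) * np.exp(-np.sqrt(5.0) * r)

def categorical_kernel(cat_a, cat_b, lam=1.0):
    """k(a, b) = exp(-λ·δ), with δ = 0 for equal labels and 1 otherwise."""
    delta = (np.asarray(cat_a)[:, None] != np.asarray(cat_b)[None, :]).astype(float)
    return np.exp(-lam * delta)

def mixed_kernel(X_a, cat_a, X_b, cat_b, length_scale=0.5, variance=1.0, lam=1.0):
    """Product kernel over continuous inputs and one categorical input."""
    return matern52(X_a, X_b, length_scale, variance) * categorical_kernel(cat_a, cat_b, lam)
```

A large λ makes different categories nearly independent, while λ close to zero lets observations from one category inform predictions for another.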

In the multistep pathway optimization thesis, selecting the appropriate Bayesian Optimization (BO) acquisition function is critical for efficiently navigating the complex, high-dimensional, and often noisy response surfaces of biological systems. This step directly dictates the strategy for selecting the next experiment, balancing the need to exploit known high-performance regions against the need to explore uncertain regions for potentially superior, yet undiscovered, optima.

Quantitative Comparison of Common Acquisition Functions

The following table summarizes the mathematical formulation, key characteristics, and recommended use cases for primary acquisition functions, based on current literature and implementations in libraries like BoTorch and GPyOpt.

Table 1: Acquisition Functions for Bayesian Optimization

| Acquisition Function | Mathematical Form (Minimization) | Hyperparameter (ξ, β) | Primary Goal | Robustness to Noise | Best for Pathway Step |

|---|---|---|---|---|---|

| Probability of Improvement (PI) | \( \alpha_{PI}(x) = \Phi\left( \frac{f_{min} - \mu(x) - \xi}{\sigma(x)} \right) \) | ξ (exploration bias) | Pure Exploitation | Low | Final fine-tuning of a nearly optimized step. |

| Expected Improvement (EI) | \( \alpha_{EI}(x) = (f_{min} - \mu(x) - \xi)\Phi(Z) + \sigma(x)\phi(Z) \) where \( Z = \frac{f_{min} - \mu(x) - \xi}{\sigma(x)} \) | ξ (exploration bias) | Balanced | Medium | General-purpose optimization of most pathway steps. |

| Upper Confidence Bound (UCB/GP-UCB) | \( \alpha_{UCB}(x) = -\mu(x) + \beta_t \sigma(x) \) | β (explore) | Tunable Explore/Exploit | Medium | Early-phase screening where exploration is paramount. |

| Predictive Entropy Search (PES) | \( \alpha_{PES}(x) = H[p(x^* \mid D)] - E_{p(y \mid D, x)}[H[p(x^* \mid D \cup \{(x, y)\})]] \) | None | Information Gain | High | Very expensive, noisy assays; global search. |

| Noisy Expected Improvement (qNEI) | \( \alpha_{qNEI}(x) = E[\max(f_{min} - f(x), 0)] \) (Monte Carlo estimation) | None | Balanced (batch, noisy) | High | Batch optimization of cell culture or HPLC conditions with replication noise. |

Key: \( \mu(x) \): posterior mean; \( \sigma(x) \): posterior std. dev.; \( f_{min} \): current best observation; \( \Phi, \phi \): CDF and PDF of the standard normal; \( \xi \): exploration bias; \( \beta_t \): schedule-dependent parameter; \( H \): entropy; \( x^* \): location of the global optimum.
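The three closed-form acquisition functions in Table 1 can be written directly from their formulas. The sketch below uses the minimization convention of the table and assumes a strictly positive posterior standard deviation; the default values of `xi` and `beta` are illustrative, not recommendations.

```python
import math
import numpy as np

_erf = np.vectorize(math.erf)

def norm_cdf(z):
    """Standard normal CDF, Φ(z)."""
    return 0.5 * (1.0 + _erf(np.asarray(z, dtype=float) / math.sqrt(2.0)))

def norm_pdf(z):
    """Standard normal PDF, φ(z)."""
    z = np.asarray(z, dtype=float)
    return np.exp(-0.5 * z**2) / math.sqrt(2.0 * math.pi)

def probability_of_improvement(mu, sigma, f_min, xi=0.01):
    """PI: probability that a point improves on f_min by at least xi."""
    return norm_cdf((f_min - mu - xi) / sigma)

def expected_improvement(mu, sigma, f_min, xi=0.01):
    """EI: expected amount by which a point improves on f_min."""
    improvement = f_min - mu - xi
    z = improvement / sigma
    return improvement * norm_cdf(z) + sigma * norm_pdf(z)

def ucb_for_minimization(mu, sigma, beta=2.0):
    """Confidence-bound score; larger values are more attractive."""
    return -mu + beta * sigma
```

Each function takes arrays of posterior means and standard deviations over candidate points, and the next experiment is the candidate with the highest score.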

Protocol: Implementing & Testing Acquisition Functions for a Pathway Step

Protocol 3.1: Comparative Evaluation of Acquisition Functions on a Simulated Pathway Response Surface

Objective: To empirically determine the most sample-efficient acquisition function for optimizing a specific multistep pathway (e.g., antibody titer in a CHO cell process).

Materials & Reagents:

- Research Reagent Solutions:

- GPyOpt/BoTorch Library: Python framework for building and testing BO loops.

- Simulated Data Model: A deterministic or stochastic function emulating the pathway's input-output relationship (e.g., the Six-Hump Camel function for multimodal surfaces).

- Performance Metrics Tracker: Custom script to log Best Found Value vs. Iteration Number.

Methodology:

- Define Design Space: For the target pathway step, codify the bounded continuous (e.g., pH, temperature) and discrete (e.g., media type 1,2,3) variables.

- Initialize Dataset: Generate an initial Latin Hypercube Design (LHD) of 5-10 points. Evaluate these using the simulated function to create the initial dataset D.

- Configure BO Loop: For each acquisition function (EI, UCB, PI, qNEI):

  - Fit a Gaussian Process (GP) surrogate model to the current D.

  - Optimize the acquisition function to select the next point(s) x_next.

  - Evaluate f(x_next) via the simulator and append the result to D.

  - Repeat for 30-50 iterations.

- Replicate & Analyze: Run each BO configuration 10 times with different random seeds. Plot the mean (and standard deviation) of the best objective value found versus iteration. The acquisition function that reaches the optimum in the fewest iterations is the best match for that landscape.
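The methodology above can be prototyped without any BO library. The sketch below is a simplified stand-in, not the BoTorch or GPyOpt workflow: it uses a GP with fixed hyperparameters, maximizes the acquisition function over a random candidate set instead of a numerical optimizer, and compares only EI and UCB on the Six-Hump Camel function. All function names and settings are assumptions.

```python
import math
import numpy as np

LOW, HIGH = np.array([-3.0, -2.0]), np.array([3.0, 2.0])  # usual camel-function domain

def six_hump_camel(X):
    x, y = X[:, 0], X[:, 1]
    return (4 - 2.1 * x**2 + x**4 / 3) * x**2 + x * y + (-4 + 4 * y**2) * y**2

def matern52(A, B, length_scale=0.2):
    r = np.sqrt(((A[:, None] - B[None]) ** 2).sum(-1)) / length_scale
    return (1 + math.sqrt(5) * r + 5 / 3 * r**2) * np.exp(-math.sqrt(5) * r)

def gp_predict(U, y, U_new, noise=1e-4):
    """Posterior mean and std of a fixed-hyperparameter GP on standardized y."""
    y_mean, y_std = y.mean(), y.std() + 1e-12
    L = np.linalg.cholesky(matern52(U, U) + noise * np.eye(len(U)))
    k = matern52(U, U_new)
    alpha = np.linalg.solve(L.T, np.linalg.solve(L, (y - y_mean) / y_std))
    v = np.linalg.solve(L, k)
    var = np.maximum(1.0 - (v**2).sum(0), 1e-12)
    return y_mean + y_std * (k.T @ alpha), y_std * np.sqrt(var)

def ei(mu, sd, f_min):
    z = (f_min - mu) / sd
    cdf = 0.5 * (1 + np.vectorize(math.erf)(z / math.sqrt(2)))
    return (f_min - mu) * cdf + sd * np.exp(-0.5 * z**2) / math.sqrt(2 * math.pi)

def ucb(mu, sd, f_min, beta=2.0):
    return -mu + beta * sd  # f_min is unused; kept for a common signature

def run_bo(acquisition, n_init=8, n_iter=30, seed=0):
    """Return the best value found after the initial design and each iteration."""
    rng = np.random.default_rng(seed)
    # Latin Hypercube initial design on the unit square.
    U = (np.array([rng.permutation(n_init) for _ in range(2)]).T
         + rng.random((n_init, 2))) / n_init
    y = six_hump_camel(LOW + U * (HIGH - LOW))
    trace = [y.min()]
    for _ in range(n_iter):
        cand = rng.random((2000, 2))                  # random candidate set
        mu, sd = gp_predict(U, y, cand)
        u_next = cand[np.argmax(acquisition(mu, sd, y.min()))]
        y_next = six_hump_camel((LOW + u_next * (HIGH - LOW))[None])
        U, y = np.vstack([U, u_next]), np.append(y, y_next)
        trace.append(y.min())
    return np.array(trace)

def compare(n_seeds=10):
    """Mean best-found curve per acquisition function across random seeds."""
    return {name: np.mean([run_bo(fn, seed=s) for s in range(n_seeds)], axis=0)
            for name, fn in {"EI": ei, "UCB": ucb}.items()}
```

Plotting the curves returned by `compare()` against iteration number gives the comparison described in the last step. A realistic study would also refit the GP hyperparameters at every iteration.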

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Tools for Acquisition Function Implementation

| Item | Function/Description | Example Vendor/Software |

|---|---|---|

| Bayesian Optimization Suites | Integrated libraries for building GP models and acquisition functions. | BoTorch, GPyOpt, Scikit-Optimize |

| Gaussian Process Kernels | Define smoothness and pattern assumptions of the underlying response surface. | Matern (ν=2.5), RBF, Linear (in sklearn.gaussian_process) |

| Monte Carlo Sampler | Required for advanced acquisition functions like qNEI and PES. | Sobol Quasi-Random (scipy.stats.qmc), Hamiltonian Monte Carlo |

| Global/Numerical Optimizer | Solves the inner loop of maximizing the acquisition function. | L-BFGS-B (scipy), DIRECT, CMA-ES |

| Laboratory Automation Scheduler | Translates the BO-recommended experiment into lab instructions. | Momentum, Skyline, custom Python scripts |

Visualization: Acquisition Function Decision Workflow

Acquisition Function Selection Logic for Pathway Steps

Advanced Protocol: Batch (Parallel) Optimization for High-Throughput Steps

Protocol 3.2: Implementing qNEI for Parallel Bioreactor Condition Screening

Objective: To efficiently optimize a 4-variable cell culture medium formulation using 6 parallel bioreactors per experimental batch.

Methodology:

- Model Setup: Fit a standard GP (e.g., SingleTaskGP in BoTorch), or a MultiTaskGP when data from related tasks are modeled jointly. The batch itself is handled by the acquisition function, which draws joint posterior samples through a Monte Carlo sampler (e.g., SobolQMCNormalSampler).

- Acquisition Optimization: Configure the qNoisyExpectedImprovement acquisition function.

- Batch Selection: Instead of optimizing for a single point x_next, optimize for a batch of 6 points {x_next_1, ..., x_next_6} that jointly maximize the expected improvement. This uses Monte Carlo integration over the GP posterior.

- Parallel Experimentation: Execute the batch of 6 conditions simultaneously in parallel bioreactors.

- Update & Iterate: Upon receiving all 6 results, update the GP model and repeat from the Acquisition Optimization step. Because six conditions run per round, this protocol substantially reduces wall-clock time for pathway optimization.
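The Monte Carlo idea behind batch selection can be shown with plain NumPy. The sketch below estimates a simplified, noise-free batch Expected Improvement for one fixed batch, given the joint GP posterior over its q points. It is not BoTorch's qNoisyExpectedImprovement, which additionally accounts for uncertainty in the incumbent value. The function name and arguments are hypothetical, and the minimization convention of Table 1 is used.

```python
import numpy as np

def mc_q_expected_improvement(mean, cov, f_best, n_samples=4096, seed=0):
    """Monte Carlo estimate of batch EI for q jointly Gaussian outcomes.

    mean: (q,) posterior mean at the batch points
    cov:  (q, q) posterior covariance between the batch points
    f_best: best (lowest) objective value observed so far
    """
    rng = np.random.default_rng(seed)
    samples = rng.multivariate_normal(mean, cov, size=n_samples)  # (n_samples, q)
    # Improvement of the best point in the batch, for each joint sample.
    improvement = np.maximum(f_best - samples.min(axis=1), 0.0)
    return improvement.mean()
```

Selecting a batch then means searching over candidate batches for the one with the highest estimate. Because the samples are joint, two nearly identical conditions add little over one of them, which pushes the batch toward diverse conditions.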

Application Notes: The BO Execution Cycle in Pathway Optimization

This document details the execution phase of a Bayesian Optimization (BO) loop for the optimization of a multistep biochemical pathway, such as a multi-enzyme cascade for novel drug intermediate synthesis. The goal is to efficiently navigate a high-dimensional experimental space (e.g., enzyme ratios, pH, cofactor concentrations) to maximize a key performance indicator (KPI) like yield or titer.

Core Concept: BO iteratively proposes candidate experiments by leveraging a probabilistic surrogate model (typically a Gaussian Process) to balance exploration (sampling uncertain regions) and exploitation (sampling near predicted optima). Each proposed candidate is then validated through wet-lab experimentation, with results feeding back to update the model for the next iteration.

Key Quantitative Benchmarks in Contemporary BO Studies

The following table summarizes performance metrics from recent studies applying BO to biochemical pathway optimization.

Table 1: Benchmark Data from Recent BO Applications in Bioprocess Optimization

| Study Focus (Year) | Optimization Variables | KPI | Baseline Performance | BO-Optimized Performance | Number of BO Iterations | Key Algorithm |

|---|---|---|---|---|---|---|

| Microbial Strain Titer (2023) | 5 Pathway Gene Promoter Strengths | Product Titer (g/L) | 1.2 g/L | 8.7 g/L | 25 | Gaussian Process (GP) with Expected Improvement (EI) |

| Cell-Free Protein Yield (2024) | [Mg2+], [DNA], [AA mix], Incubation Temp. | Soluble Protein Yield (mg/mL) | 0.5 mg/mL | 2.1 mg/mL | 30 | GP with Upper Confidence Bound (UCB) |

| Enzymatic Cascade Yield (2023) | 3 Enzyme Loads, pH, Substrate Conc. | Final Product Yield (%) | 45% | 92% | 20 | Bayesian Neural Network with Thompson Sampling |

Detailed Experimental Protocols

Protocol 3.1: High-Throughput Microscale Validation of BO-Proposed Conditions

This protocol is designed for the rapid experimental validation of BO-proposed culture conditions in a 96-deep well plate (DWP) format.

I. Materials & Pre-Experiment Preparation

- Pre-culture: Inoculate host strain (e.g., E. coli BL21(DE3) harboring pathway plasmid) in 5 mL LB with antibiotic. Grow overnight (37°C, 220 rpm).

- Media Preparation: Prepare base defined medium according to standard recipe. Aliquot into 15 mL tubes.

- BO Input: Receive the n proposed condition sets (e.g., 8 conditions per BO iteration) from the algorithm. Each set defines specific values for variables (e.g., Inducer Concentration: X µM, Temperature: Y °C, Carbon Source: Z g/L).

II. Condition Assembly in 96-DWP

- Using an electronic multichannel pipette, dispense the appropriate volume of base medium into each well of a 2.2 mL square-well DWP.

- According to the BO proposal, serially add stock solutions of variables (inducers, cofactors, etc.) to their specified wells. Use fresh pipette tips for each reagent to avoid cross-contamination.

- Inoculate each well to a starting OD600 of 0.05 from the diluted overnight pre-culture.

- Seal the plate with a breathable membrane. Place in a calibrated microbioreactor system (e.g., BioLector) or an orbital shaker-incubator.

III. Cultivation & Sampling

- Cultivate at the specified temperature with continuous shaking (e.g., 1000 rpm, 50 mm orbit).

- Monitor OD600 online if using a microbioreactor, or take periodic offline measurements.

- At a defined endpoint (e.g., 48 hours or upon cessation of growth), harvest the plate.

- Centrifuge the DWP (4000 x g, 15 min, 4°C). Separate supernatant (for extracellular product analysis) and cell pellet (for possible metabolomics or enzyme assay).

IV. KPI Analysis (Example: Product Titer via UPLC)

- Transfer 150 µL of supernatant from each well to a new 96-well PCR plate.

- Add 150 µL of quenching solvent (e.g., 80% Acetonitrile, 0.1% Formic Acid) to precipitate any residual proteins. Vortex, then centrifuge (4000 x g, 10 min).

- Dilute the clarified supernatant appropriately with mobile phase.

- Analyze using a validated UPLC-PDA/MS method. Quantify product concentration via external standard curve.

- Record the final titer (mg/L or g/L) for each well. This is the experimental observation y for condition x.

V. Data Return to BO Loop

- Format data as a table: each row corresponds to a tested condition x with its measured KPI y.

- Append this data to the historical dataset.

- Upload the updated dataset to the BO software platform to trigger the next iteration of surrogate model updating and candidate proposal.

Visualizing the BO Execution Workflow

Diagram Title: BO Loop Execution from Proposal to Validation

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Reagents & Kits for BO-Driven Pathway Validation

| Item Name | Vendor Examples | Function in Protocol | Critical Notes |

|---|---|---|---|

| Chemically Defined Medium Kit | Teknova, Sun Scientific | Provides consistent, fully defined base medium for precise variable control across BO iterations. | Essential for removing uncharacterized complex media effects. |

| 96-Deep Well Plates (2.2 mL) | Axygen, Whatman | High-throughput cultivation vessel compatible with microbioreactors and centrifugation. | Square wells improve oxygen transfer. |

| Breathable Sealing Film | Breathe-Easy (Diversified Biotech), AeraSeal | Allows gas exchange while preventing evaporation and contamination during micro-scale cultivation. | Critical for long-term (>24h) DWP cultivations. |

| Microbioreactor System | m2p-labs (BioLector), Growth Curves USA (OMEGA) | Enables online, parallel monitoring of biomass (OD), pH, DO, fluorescence in up to 96 wells. | Provides rich kinetic data for model refinement. |

| Automated Liquid Handler | Opentrons, Hamilton, Tecan | Automates precise dispensing of BO-proposed variable combinations into DWPs, ensuring reproducibility. | Eliminates manual pipetting errors in complex condition assembly. |

| UPLC-MS/MS System | Waters, Agilent, Sciex | Gold-standard for quantifying pathway intermediates and final product titers from microscale samples. | Enables multiplexed KPI measurement from low-volume samples. |

| Cryogenic Vial Storage System | Thermo Scientific Nunc | For archiving engineered strains and cell-free extracts generated at each BO iteration. | Preserves genetic and catalytic material for backtracking. |

The optimization of multi-enzyme biocatalytic cascades is a high-dimensional challenge central to modern synthetic biology and pharmaceutical manufacturing. Within the broader thesis on Bayesian Optimization for Multistep Pathway Optimization Research, this case study demonstrates BO's superior efficiency over traditional Design of Experiments (DoE) in navigating complex parameter spaces with limited, costly experiments. We focus on a representative cascade for the synthesis of a chiral pharmaceutical intermediate.

Application Notes: BO-Driven Cascade Optimization

Objective: Maximize the yield (Y) of a target chiral amine via a three-enzyme cascade (Engineered Transaminase A, Formate Dehydrogenase B, Cofactor Recycling Module) by simultaneously tuning five key reaction parameters.

BO Framework Setup:

- Objective Function: Final product yield (%) after 24 hours.

- Search Space:

- pH (6.5 - 8.5)

- Temperature (°C, 25 - 40)

- Enzyme A Loading (U/mL, 5 - 25)

- Enzyme B Loading (U/mL, 2 - 15)

- Cofactor Concentration (mM, 0.1 - 2.0)

- Acquisition Function: Expected Improvement (EI).

- Initial Design: 12 points from a space-filling Latin Hypercube.

- Stopping Criterion: Iteration budget of 40 total experiments or <2% improvement over 5 consecutive iterations.

Key Results: BO identified a robust optimum within 32 total experiments (including the 12-point initial design), compared with 108 experiments for the full factorial DoE screen, and reached a higher maximum yield (Table 1).

Table 1: Optimization Performance Comparison

| Method | Total Experiments | Max Yield Achieved (%) | Optimal Parameters Identified |

|---|---|---|---|

| Bayesian Optimization | 32 | 92.5 ± 1.8 | pH 7.8, Temp 32°C, [A]=18 U/mL, [B]=9 U/mL, [Cof]=1.2 mM |

| Full Factorial DoE | 108 | 89.1 ± 2.1 | pH 8.0, Temp 35°C, [A]=25 U/mL, [B]=12 U/mL, [Cof]=1.5 mM |

| One-Variable-at-a-Time | 45 | 81.3 ± 3.5 | pH 8.0, Temp 37°C, [A]=20 U/mL, [B]=10 U/mL, [Cof]=1.0 mM |

Table 2: Key Intermediate Yields at BO-Optimized Conditions

| Reaction Time (h) | Substrate Conversion (%) | Chiral Amine Intermediate Yield (%) | Byproduct Accumulation (mM) |

|---|---|---|---|

| 4 | 45.2 | 43.1 | 0.8 |

| 8 | 78.9 | 76.3 | 1.5 |

| 16 | 96.5 | 94.2 | 1.9 |

| 24 | 99.1 | 92.5 | 2.1 |

Experimental Protocols

Protocol 1: Standardized Multi-enzyme Cascade Reaction Purpose: To execute the biocatalytic cascade under defined conditions for yield assessment. Reagents: See "The Scientist's Toolkit" below. Procedure:

- Prepare 5 mL of 100 mM potassium phosphate buffer at the target pH in a 15 mL stirred bioreactor.

- Sparge the buffer with N₂ for 10 minutes to reduce oxygen.

- Pre-warm the reactor to the target temperature (±0.5°C) using a circulating water bath.

- Add the following sequentially with gentle stirring (200 rpm): 50 mM prochiral ketone substrate (from 500 mM DMSO stock), target concentration of NAD⁺ cofactor.

- Initiate the reaction by the simultaneous addition of Enzyme A and Enzyme B stock solutions.

- Maintain pH by automated titration with 1M KOH.

- At t = 0, 4, 8, 16, 24 hours, withdraw 200 µL aliquots.

- Immediately quench each aliquot with 20 µL of 6 M HCl, vortex, and centrifuge at 14,000 x g for 5 minutes.

- Filter supernatant through a 0.22 µm nylon filter and analyze by HPLC (see Protocol 2).

Protocol 2: HPLC Analysis for Conversion and Yield Purpose: To quantify substrate, intermediate, and product concentrations. Equipment: HPLC with C18 reversed-phase column and UV/Vis detector. Method:

- Column: Luna C18(2), 5 µm, 150 x 4.6 mm.

- Mobile Phase A: 0.1% Trifluoroacetic acid (TFA) in H₂O.

- Mobile Phase B: 0.1% TFA in Acetonitrile.

- Gradient: 10% B to 90% B over 15 minutes, hold 2 minutes, re-equilibrate.

- Flow Rate: 1.0 mL/min.

- Detection: 214 nm.

- Injection Volume: 20 µL of quenched, filtered sample.

- Calibration: Prepare standard curves (0.1-20 mM) for substrate, chiral amine product, and key byproduct. Use linear regression for quantification.
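The calibration step can be sketched in a few lines. The example below is a minimal illustration, not the authors' analysis script: the standard concentrations and peak areas are synthetic, and the function names `fit_calibration` and `area_to_conc` are hypothetical. It also applies a dilution correction that follows from the quench volumes in Protocol 1 (200 µL sample plus 20 µL HCl), assuming the volumes are additive.

```python
def fit_calibration(concs_mM, peak_areas):
    """Ordinary least-squares line: area = slope * conc + intercept."""
    n = len(concs_mM)
    mean_x = sum(concs_mM) / n
    mean_y = sum(peak_areas) / n
    sxx = sum((x - mean_x) ** 2 for x in concs_mM)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(concs_mM, peak_areas))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept


def area_to_conc(area, slope, intercept):
    """Invert the calibration line to get the concentration in the injected sample."""
    return (area - intercept) / slope


# Synthetic standards spanning the 0.1-20 mM range (illustrative values only).
standards_mM = [0.1, 1.0, 5.0, 10.0, 20.0]
areas = [12.0 * c + 3.0 for c in standards_mM]

slope, intercept = fit_calibration(standards_mM, areas)

# Concentration in the quenched sample, then corrected back to the reactor.
QUENCH_DILUTION = (200.0 + 20.0) / 200.0  # assumes additive volumes
injected_mM = area_to_conc(63.0, slope, intercept)
reactor_mM = injected_mM * QUENCH_DILUTION
```

In practice each analyte (substrate, chiral amine product, byproduct) would get its own line, and the fit should be checked for linearity over the full range before use.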

Visualizations

Bayesian Optimization Workflow for Enzyme Cascades

Three-Enzyme Cascade for Chiral Amine Synthesis

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Cascade Setup & Optimization

| Reagent/Material | Function in Experiment | Example Supplier/Catalog |

|---|---|---|

| Engineered Transaminase A (TA-A) | Key biocatalyst for stereoselective amination of prochiral ketone. | Codexis, ASA-400 series |

| Formate Dehydrogenase B (FDH-B) | Drives NADH cofactor recycling by oxidizing formate to CO₂. | Sigma-Aldrich, F8649 |

| NAD⁺ Cofactor (Disodium Salt) | Essential redox cofactor for FDH activity. | Roche, 10127973001 |

| Prochiral Ketone Substrate | High-purity starting material for cascade reaction. | Enamine, Custom Synthesis |

| Potassium Phosphate Buffer Salts | Maintains critical pH environment for enzyme stability/activity. | Thermo Fisher, BP362 |

| Amberzyme Octadecyl Resin | For rapid in-situ product removal to mitigate inhibition. | Rohm and Haas, 78644 |

| Miniature Stirred Bioreactor System | Provides controlled temperature, pH, and mixing for screening. | Mettler Toledo, Reactor 16 |

| UPLC/HPLC with C18 Column | Essential analytical tool for quantifying reaction components. | Waters, ACQUITY UPLC H-Class |

Bayesian Optimization (BO) has emerged as a core methodology within the broader thesis of "Adaptive Bayesian Optimization for the High-Throughput Discovery and Optimization of Multistep Synthetic and Biological Pathways." This thesis posits that efficiently navigating high-dimensional, noisy, and expensive-to-evaluate experimental landscapes—such as those in drug development and pathway engineering—requires robust, flexible software tools. BoTorch, Ax, and Scikit-Optimize represent critical practical implementations of BO principles, enabling researchers to translate theoretical frameworks into actionable experimental protocols.

Tool Comparison & Quantitative Data

Table 1: Feature Comparison of Bayesian Optimization Frameworks

| Feature / Metric | BoTorch | Ax (Adaptive Experimentation Platform) | Scikit-Optimize (skopt) |

|---|---|---|---|

| Core Architecture | PyTorch-based, research-first | Service-oriented, full experiment lifecycle | Scikit-learn inspired, simplicity-first |

| Primary Interface | Python (low-level, flexible) | Python, TypeScript (UI), REST API | Python (high-level, simple) |

| Key Strength | State-of-the-art probabilistic models & novel acquisition functions | Integrated platform with A/B testing, management, and visualization | Lightweight, easy integration into existing SciPy/Scikit-learn workflows |

| Parallel Evaluation | Native support via batch ("q") acquisition functions (e.g., qEI) | Advanced support for batch and generation-based parallelism | Basic support via optimizer.ask(n_points=k) followed by optimizer.tell() with the list of results |

| Visualization | Requires manual plotting (Matplotlib/Plotly) | Integrated Dashboard for experiment tracking | Basic plotting utilities (e.g., plot_objective, plot_convergence) |

| Optimal Use Case | Cutting-edge BO research, custom algorithm development | Large-scale, multi-user experimental campaigns in industry/labs | Rapid prototyping, low-dimensional problems, educational use |

| Learning Curve | Steep (requires PyTorch & BO knowledge) | Moderate to High | Shallow |

Table 2: Performance Benchmark on Synthetic Test Functions (Hartmann6)

| Framework | Average Iterations to Optimum (± Std Dev) | Wall-clock Time per Iteration (s) | Typical Batch Size Capability |

|---|---|---|---|

| BoTorch (with GP) | 42 ± 6 | 1.8 ± 0.3 | Large (50+) |

| Ax | 45 ± 7 | 2.5 ± 0.5 | Large (50+) |

| Scikit-Optimize | 52 ± 9 | 0.9 ± 0.2 | Small (<10) |

Note: Benchmarks conducted on a standard workstation, averaging over 50 runs. The Hartmann6 function is a common 6-dimensional benchmark for global optimization.

Detailed Application Notes & Protocols

Protocol: Setting Up a Multistep Pathway Optimization Loop with Ax

Objective: To optimize a 3-step enzymatic cascade for maximal product yield, where each step has two tunable parameters (pH, temperature) and the final yield is costly to measure.

Materials & Software:

- Ax Platform (`ax-platform`)

- Pandas, NumPy

- Access to laboratory HPLC system or yield assay.

Procedure:

- Define the Search Space: In Ax, define a `RangeParameter` for each variable (pHstep1, tempstep1, pHstep2, tempstep2, pHstep3, tempstep3).

- Initialize the Experiment: Create a `SimpleExperiment` (available in older Ax releases; newer releases use `Experiment` or the `AxClient` Service API instead). Define the `optimization_config` targeting maximization of the objective metric "final_yield".

- Generate Initial Sobol Points: Use `ax.modelbridge.get_sobol` to generate 10-15 quasi-random initial design points to seed the Gaussian Process model.

- Run the Initial Batch: Execute experiments for these initial points according to the standard lab protocol. Enter results via `experiment.new_trial().add_runner_and_run()` or manually via the Ax dashboard.

- Enter the BO Loop:

a. Fit the GP Model: Update the experiment using the `GPEI` (Gaussian Process with Expected Improvement) model bridge.

b. Generate Candidates: Request a batch of 5 new candidate parameter sets using `model.gen(5)`.

c. Execute & Log: Run the experiments for the new candidates and log the yields.

d. Update Experiment: Add the new data as a `trial` to the experiment.

e. Iterate: Repeat steps a-d for 20-30 iterations or until convergence.

- Analysis: Use the Ax dashboard to visualize performance over iterations and the response surface for key parameters.
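The structure of this loop (seed design, fit surrogate, propose a batch of 5, measure, update) can be sketched without depending on any particular Ax release. The code below is not Ax's API: it swaps in a small NumPy Gaussian Process with a fixed RBF kernel, uses uniform random points in place of a Sobol sequence, and ranks a random candidate pool by Expected Improvement instead of optimizing a true batch acquisition function. The function `simulated_yield` is a hypothetical stand-in for the wet-lab assay, and all six parameters are assumed to be rescaled to [0, 1].

```python
import math
import numpy as np

rng = np.random.default_rng(0)
DIM = 6  # pH and temperature for each of the 3 steps, rescaled to [0, 1]


def simulated_yield(x):
    """Hypothetical stand-in for the yield assay (optimum at 0.6 in every dimension)."""
    return float(100.0 * np.exp(-4.0 * np.sum((x - 0.6) ** 2)))


def rbf(a, b, length=0.4):
    """Squared-exponential kernel between two sets of points."""
    d2 = ((a[:, None, :] - b[None, :, :]) ** 2).sum(-1)
    return np.exp(-0.5 * d2 / length**2)


def gp_posterior(X, y, Xq, noise=1e-4):
    """Posterior mean and std at Xq for a zero-mean GP fitted to standardized y."""
    y_mean, y_std = y.mean(), y.std() + 1e-9
    ys = (y - y_mean) / y_std
    L = np.linalg.cholesky(rbf(X, X) + noise * np.eye(len(X)))
    Ks = rbf(X, Xq)
    alpha = np.linalg.solve(L.T, np.linalg.solve(L, ys))
    v = np.linalg.solve(L, Ks)
    var = np.clip(1.0 - (v**2).sum(0), 1e-12, None)
    return Ks.T @ alpha * y_std + y_mean, np.sqrt(var) * y_std


def expected_improvement(mu, sigma, best):
    """Closed-form EI for maximization."""
    z = (mu - best) / sigma
    cdf = 0.5 * (1.0 + np.vectorize(math.erf)(z / math.sqrt(2.0)))
    pdf = np.exp(-0.5 * z**2) / math.sqrt(2.0 * math.pi)
    return (mu - best) * cdf + sigma * pdf


# Seed design: 12 random points (a Sobol sequence would be used in practice).
X = rng.random((12, DIM))
y = np.array([simulated_yield(x) for x in X])
initial_best = y.max()

for iteration in range(10):
    pool = rng.random((2000, DIM))             # candidate conditions
    mu, sigma = gp_posterior(X, y, pool)       # a. fit surrogate and predict
    ei = expected_improvement(mu, sigma, y.max())
    batch = pool[np.argsort(ei)[-5:]]          # b. naive batch: top 5 by EI
    new_y = np.array([simulated_yield(x) for x in batch])  # c. "run" experiments
    X, y = np.vstack([X, batch]), np.concatenate([y, new_y])  # d. update data

best_x, best_y = X[y.argmax()], y.max()
```

Taking the top 5 candidates by EI tends to pick similar points; dedicated batch methods are designed to diversify the batch, which is one reason to use a full framework for real campaigns.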

Protocol: Implementing Custom Acquisition Function with BoTorch

Objective: To modify a standard BO loop for a drug formulation stability assay where constraints (e.g., cost of raw materials) must be actively penalized.

Procedure:

- Setup: Install `botorch` and `gpytorch`. Define your custom `CostAwareEI` acquisition function by subclassing `botorch.acquisition.AcquisitionFunction`.

- Define Models: Fit a Gaussian Process (`SingleTaskGP`) to the primary objective (stability) and a separate GP to the cost model.

- Construct Acquisition Function: Combine `ExpectedImprovement` with a penalty term derived from the cost model's posterior.

- Optimize Candidates: Use `optimize_acqf` with your custom `CostAwareEI` function to generate the next experiment point.

- Integrate into Loop: Embed this logic into a sequential or batch evaluation loop.
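The penalty construction in the third step can be illustrated independently of BoTorch. The sketch below is plain Python, not a BoTorch `AcquisitionFunction` subclass: it assumes the two GPs have already produced posterior summaries (stability mean and standard deviation, cost mean) for each candidate, and it uses a simple linear penalty with a hypothetical weight `penalty_weight`. The candidate names and numbers are invented for illustration.

```python
import math


def expected_improvement(mu, sigma, best):
    """Closed-form EI for maximization under a Gaussian posterior N(mu, sigma^2)."""
    if sigma <= 0.0:
        return max(0.0, mu - best)
    z = (mu - best) / sigma
    cdf = 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))
    pdf = math.exp(-0.5 * z * z) / math.sqrt(2.0 * math.pi)
    return (mu - best) * cdf + sigma * pdf


def cost_aware_ei(stab_mu, stab_sigma, best_stability, cost_mu, penalty_weight=0.05):
    """EI on stability minus a linear penalty on the cost model's posterior mean."""
    ei = expected_improvement(stab_mu, stab_sigma, best_stability)
    return ei - penalty_weight * cost_mu


# Hypothetical posterior summaries for two candidate formulations.
candidates = {
    "cheap_formulation": dict(stab_mu=0.82, stab_sigma=0.05, cost_mu=1.0),
    "pricey_formulation": dict(stab_mu=0.84, stab_sigma=0.05, cost_mu=10.0),
}

scores = {
    name: cost_aware_ei(best_stability=0.80, **summary)
    for name, summary in candidates.items()
}
chosen = max(scores, key=scores.get)

# Plain EI, for comparison, prefers the more stable but costlier candidate.
plain_ei = {
    name: expected_improvement(s["stab_mu"], s["stab_sigma"], 0.80)
    for name, s in candidates.items()
}
```

The penalty weight sets the exchange rate between expected stability gain and cost, so it should be chosen with the cost units in mind. Dividing EI by cost is a common alternative to subtracting a penalty.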

Protocol: Rapid Prototyping with Scikit-Optimize

Objective: Quick initial screening of 4 key parameters in a cell culture media formulation to identify promising regions for more rigorous optimization.

Procedure:

- Define Objective Function: Wrap your cell culture assay in a function `f(x)` that takes a list of 4 parameters and returns a negative viability score (for minimization).

- Define Space: Use `skopt.space.Real` or `Integer` for each parameter.

- Run Optimization: Use `gp_minimize(f, search_space, n_calls=50, n_initial_points=15, noise='gaussian')`.

- Visualize Results: Use `plot_convergence(res)` to see progress, `plot_evaluations(res)` to see where in the space points were sampled, and `plot_objective(res)` to see pairwise parameter dependencies.
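The sign convention in the first step is easy to get wrong, so a minimal sketch of the wrapper may help. Here `measure_viability` is a hypothetical stand-in for the real assay, and the four parameter names and bounds are invented for illustration. The `gp_minimize` call is shown only as a comment, with the arguments given in the protocol, so that the snippet runs without Scikit-Optimize installed.

```python
def measure_viability(glucose_g_per_L, glutamine_mM, serum_pct, seeding_1e5_per_mL):
    """Hypothetical stand-in for the assay: returns percent viability (0-100)."""
    score = (
        95.0
        - 2.0 * (glucose_g_per_L - 4.5) ** 2
        - 1.5 * (glutamine_mM - 4.0) ** 2
        - 0.5 * (serum_pct - 5.0) ** 2
        - 0.8 * (seeding_1e5_per_mL - 5) ** 2
    )
    return max(0.0, score)


def f(x):
    """Objective for a minimizer: list of 4 values in, negative viability out."""
    return -measure_viability(*x)


# (low, high) bounds per parameter; float bounds for continuous parameters,
# integer bounds for the discrete seeding density (illustrative ranges).
search_space = [(1.0, 10.0), (0.5, 8.0), (0.0, 10.0), (1, 10)]

good = f([4.5, 4.0, 5.0, 5])  # high viability gives a low (better) objective
poor = f([2.0, 4.0, 5.0, 5])

# With Scikit-Optimize installed, the protocol's call would be:
# from skopt import gp_minimize
# res = gp_minimize(f, search_space, n_calls=50, n_initial_points=15, noise="gaussian")
```

Because the optimizer minimizes, the best viability found is recovered as the negative of the returned minimum.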

Visualizations

Bayesian Optimization for Pathway Screening

Multistep Pathway with BO Control

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Digital & Experimental Materials for BO-Driven Pathway Research

| Item / Reagent | Function in BO-Driven Research |

|---|---|

| Ax Platform Dashboard | Serves as the central hub for tracking experimental trials, visualizing results, and managing the queue of candidate parameter sets generated by the BO algorithm. |

| Jupyter Notebook/Lab | The primary interactive environment for running BoTorch or Scikit-Optimize scripts, performing ad-hoc data analysis, and prototyping new acquisition functions. |

| High-Throughput Assay Kits (e.g., HPLC, Plate Readers) | Enables rapid, quantitative measurement of the objective function (e.g., product concentration, cell viability) from the parallel or sequential experiments suggested by the BO loop. |

| Parameterized Robotic Liquid Handlers (e.g., Opentrons) | Automates the physical setup of experiments (e.g., media preparation, reagent dispensing) based on the digital candidate list from Ax, ensuring precision and reproducibility. |

| Lab Information Management System (LIMS) | Provides sample tracking and metadata management, crucial for linking the digital experiment record in Ax/BoTorch with physical samples and raw data files. |

| Scikit-Optimize gp_minimize Function | Acts as a "reagent" for quick, initial scoping of low-dimensional parameter spaces before committing to more resource-intensive optimization campaigns. |

Overcoming Challenges: Advanced BO Strategies for Noisy, Constrained, and Parallel Pathways

Handling Experimental Noise and Stochastic Outcomes in Biological Systems

Optimizing multistep pathways, such as those for metabolite production or therapeutic protein expression, is central to bioprocess and therapeutic development. However, inherent biological noise—from gene expression stochasticity to environmental fluctuations—obscures the signal between pathway perturbations and measured outputs. This application note details protocols for employing Bayesian optimization (BO) within this noisy, resource-constrained context. BO’s probabilistic framework elegantly balances exploration and exploitation, building a surrogate model of the uncertain design space to efficiently guide experiments toward optimal pathway configurations despite stochastic outcomes.

Core Concepts: Quantifying and Modeling Noise

Biological noise is characterized by its magnitude and structure. Key metrics include the coefficient of variation (CV) and signal-to-noise ratio (SNR). For BO, modeling this uncertainty is critical.

Table 1: Common Noise Metrics in Pathway Optimization

| Metric | Formula | Interpretation in Pathway Context |

|---|---|---|

| Coefficient of Variation (CV) | (Standard Deviation / Mean) × 100% | Quantifies relative dispersion. A CV > 15% for a titer assay indicates high experimental noise. |

| Signal-to-Noise Ratio (SNR) | Mean / Standard Deviation | Higher SNR (>10) suggests a cleaner signal for optimization. |

| Replicate Concordance | Intra-class Correlation Coefficient (ICC) | ICC > 0.8 indicates high reliability between technical/biological replicates. |
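As a worked example of the first two metrics, the sketch below computes CV and SNR from one set of replicate measurements using the formulas in the table. The titer values are invented, the function name `noise_metrics` is hypothetical, and the sample standard deviation (n - 1 denominator) is an assumption, since the table does not specify which estimator is intended.

```python
import statistics


def noise_metrics(replicates):
    """Return mean, sample SD, CV (%) and SNR for one set of replicates."""
    mean = statistics.mean(replicates)
    sd = statistics.stdev(replicates)  # sample SD, n - 1 denominator
    return {
        "mean": mean,
        "sd": sd,
        "cv_percent": 100.0 * sd / mean,
        "snr": mean / sd,
    }


# Hypothetical titers (g/L) from 4 replicates of one condition.
titers = [10.0, 12.0, 11.0, 9.0]
metrics = noise_metrics(titers)

# Apply the 15% CV threshold from the table.
high_noise = metrics["cv_percent"] > 15.0
```

With these definitions SNR is simply 100 divided by the CV in percent, so the two metrics carry the same information on different scales.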

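BO accounts for noise in two places: the Gaussian Process surrogate includes an observation-noise term, and the acquisition function is evaluated on the resulting posterior. The Expected Improvement (EI) under Gaussian noise is commonly used:

EI(x) = E[max(0, f(x) - f(x*))], where the expectation is taken over the surrogate posterior f(x) ~ N(μ(x), σ²(x)), μ(x) and σ(x) are the surrogate model's predicted mean and standard deviation at point x, and f(x*) is the current best value. With noisy observations, f(x*) is commonly taken as the best posterior mean among the evaluated points rather than the best raw measurement, so that a single lucky replicate does not set the bar.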
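For a Gaussian posterior this expectation has a standard closed form, which is what most implementations evaluate. Writing σ(x) for the surrogate's posterior standard deviation at x (a symbol added here for the derivation), and Φ and φ for the standard normal CDF and PDF:

$$
\mathrm{EI}(x) = \bigl(\mu(x) - f(x^*)\bigr)\,\Phi(z) + \sigma(x)\,\varphi(z),
\qquad
z = \frac{\mu(x) - f(x^*)}{\sigma(x)}, \quad \sigma(x) > 0 .
$$

The first term rewards points whose predicted mean already exceeds the incumbent (exploitation), and the second rewards points with large posterior uncertainty (exploration). When σ(x) = 0 the expression reduces to max(0, μ(x) - f(x*)).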

Application Notes & Protocols

Application Note 1: High-Throughput Promoter-RBS Library Screening

Objective: Identify optimal promoter-RBS combinations for a 3-gene pathway in E. coli with a noisy fluorescent output.

Protocol:

- Library Construction: Use randomized promoter (e.g., J23100 variants) and RBS (e.g., from the Salis library) sequences assembled via Golden Gate cloning into a reporter plasmid.

- Stochastic Culture & Assay:

- Inoculate 96 deep-well plates with single colonies (n=4 biological replicates per construct).

- Grow in 800 µL TB media at 37°C, 900 rpm for 24 hours.

- Key Noise Control: Use a calibrated liquid handler for consistent inoculation volume and a plate reader with temperature-controlled incubation for fluorescence (e.g., GFP) and OD600 measurement.

- Data Pre-processing: Normalize fluorescence to OD600. For each construct, calculate the mean and variance from the 4 replicates.

- Bayesian Optimization Setup:

- Design Variables: Promoter strength (predicted), RBS strength (predicted).

- Objective: Maximize mean normalized fluorescence.

- Noise Model: Supply the observed variance for each data point to the Gaussian Process regressor (in scikit-learn's `GaussianProcessRegressor`, as a per-point `alpha` array; a `WhiteKernel` can instead learn a single shared noise level).

- Iteration: After an initial 50 random constructs, the BO algorithm suggests 5 new promoter-RBS combinations to test per iteration based on noisy EI.
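The effect of supplying per-point variances can be seen in a small NumPy sketch. It is not the scikit-learn implementation: the kernel is a fixed RBF with a hypothetical length scale, the fluorescence values and predicted strengths are invented, and the noise entering the GP is assumed to be the variance of the replicate mean (sample variance divided by the number of replicates). The per-point variances are added to the diagonal of the kernel matrix, which is the same role the `alpha` array plays in `GaussianProcessRegressor`.

```python
import numpy as np


def rbf(a, b, length=0.3):
    """Squared-exponential kernel between two sets of points."""
    d2 = ((a[:, None, :] - b[None, :, :]) ** 2).sum(-1)
    return np.exp(-0.5 * d2 / length**2)


def gp_mean(X, y, noise_var, Xq):
    """Posterior mean of a zero-mean GP with per-point noise on the diagonal."""
    K = rbf(X, X) + np.diag(noise_var)
    return rbf(Xq, X) @ np.linalg.solve(K, y)


# Hypothetical OD600-normalized fluorescence, 4 replicates for 3 constructs.
replicates = np.array([
    [1.0, 1.1, 0.9, 1.0],   # tight replicates
    [2.0, 2.1, 1.9, 2.0],   # tight replicates
    [0.5, 3.5, 1.0, 3.0],   # very noisy construct
])
# Predicted (promoter strength, RBS strength), rescaled to [0, 1].
X = np.array([[0.2, 0.3], [0.8, 0.7], [0.5, 0.5]])

y = replicates.mean(axis=1)
var_of_mean = replicates.var(axis=1, ddof=1) / replicates.shape[1]

y_centered = y - y.mean()
pred_hetero = gp_mean(X, y_centered, var_of_mean, X) + y.mean()
pred_near_exact = gp_mean(X, y_centered, np.full(3, 1e-6), X) + y.mean()

# How far each fit moves away from the raw replicate means.
shrink_hetero = np.abs(pred_hetero - y)
```

With per-point noise the surrogate stays close to the two tightly replicated constructs but pulls the noisy construct toward its neighbours, so a single unreliable measurement has less influence on where the next candidates are proposed.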

Application Note 2: Optimizing Transient Transfection for Protein Production

Objective: Optimize a 4-factor transfection process in HEK293 cells (DNA amount, PEI:DNA ratio, cell density, feed timing) to maximize secreted protein yield, where lot-to-lot variability introduces significant noise.

Protocol:

- DoE and Execution:

- Perform a preliminary Latin Hypercube Sampling (LHS) of 20 conditions.

- For each condition, perform 6 technical replicates across two separate cell passages (biological replicates).