Conquering Heteroscedasticity in Untargeted Metabolomics: A Comprehensive Guide for Robust Data Analysis


Abstract

Untargeted metabolomics datasets are inherently plagued by heteroscedasticity—the non-constant variance of measurement errors across metabolite concentrations. This phenomenon severely undermines the validity of standard statistical models, leading to increased false discoveries and reduced power in biomarker identification and pathway analysis. This article provides a complete framework for researchers and drug development professionals to address this critical challenge. We first establish a foundational understanding of heteroscedasticity's sources and impacts on LC-MS and GC-MS data. We then detail methodological solutions, including variance-stabilizing transformations (e.g., log, glog), weighted statistical approaches, and advanced modeling techniques. A dedicated troubleshooting section addresses common pitfalls in implementation and optimization. Finally, we present a validation and comparative analysis of these methods using benchmark datasets and simulation studies, offering clear guidance on selecting the optimal approach for specific research goals to ensure reproducible and biologically meaningful results.

What is Heteroscedasticity? Defining the Core Challenge in Metabolomic Data Variance

This section is a technical support resource on heteroscedasticity in untargeted metabolomics datasets. It provides troubleshooting guidance and FAQs for researchers and drug development professionals.

FAQ & Troubleshooting Guide

Q1: How do I diagnose heteroscedasticity in my LC-MS metabolomics data? A: Heteroscedasticity, where variance increases with signal intensity, is inherent in mass spectrometry data. Key diagnostics include:

- Scale-Location Plot (Spread-Level Plot): Plot the square root of the absolute standardized residuals against fitted values. A clear upward or downward trend, rather than a flat band, indicates heteroscedasticity.

- Residual vs. Fitted Value Plot: Plot raw residuals against fitted values. A random scatter suggests homoscedasticity; a fan-shaped pattern confirms heteroscedasticity.

- Statistical Tests: Use the Breusch-Pagan or White test on model residuals. A significant p-value (p < 0.05) rejects the constant variance assumption.
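As a rough illustration of the statistical test mentioned above, the sketch below implements the studentized (Koenker) form of the Breusch-Pagan test for a single predictor using only the Python standard library. The toy data, the function names, and the restriction to one predictor are assumptions made for the example; in practice an established statistics package would normally be used.

```python
import math

def ols_line(x, y):
    """Least-squares line y = a + b*x. Returns (a, b, residuals, R^2)."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    b = sxy / sxx
    a = mean_y - b * mean_x
    resid = [yi - (a + b * xi) for xi, yi in zip(x, y)]
    ss_res = sum(r * r for r in resid)
    ss_tot = sum((yi - mean_y) ** 2 for yi in y)
    r2 = 1.0 - ss_res / ss_tot if ss_tot > 0 else 0.0
    return a, b, resid, r2

def breusch_pagan(x, y):
    """Studentized (Koenker) Breusch-Pagan test for one predictor.

    Squared residuals are regressed on x; LM = n * R^2 is compared with a
    chi-square distribution with 1 degree of freedom.
    """
    _, _, resid, _ = ols_line(x, y)
    squared = [r * r for r in resid]
    _, _, _, r2_aux = ols_line(x, squared)
    lm = len(x) * max(r2_aux, 0.0)            # guard against tiny negative rounding
    p_value = math.erfc(math.sqrt(lm / 2.0))  # chi-square(1) upper tail
    return lm, p_value

# Hypothetical toy data: the noise alternates in sign so the example is deterministic.
x = [float(i) for i in range(1, 41)]
signs = [1.0 if i % 2 == 0 else -1.0 for i in range(1, 41)]
y_hetero = [2.0 * xi + 0.5 * xi * s for xi, s in zip(x, signs)]  # spread grows with x
y_homo = [2.0 * xi + 0.5 * s for xi, s in zip(x, signs)]         # constant spread

lm_het, p_het = breusch_pagan(x, y_hetero)
lm_hom, p_hom = breusch_pagan(x, y_homo)
print(f"heteroscedastic: LM={lm_het:.2f}, p={p_het:.3g}")
print(f"homoscedastic:   LM={lm_hom:.2f}, p={p_hom:.3g}")
```

A small p-value for the first series and a large one for the second matches the fan-shaped versus random-scatter distinction drawn in the plots above.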

Q2: My statistical tests (e.g., ANOVA) on metabolite intensities are unreliable. Could heteroscedasticity be the cause? A: Yes. Standard parametric tests assume constant error variance. Heteroscedasticity violates this, leading to:

- Inflated Type I error rates (false positives) for low-abundance metabolites with small variance.

- Reduced statistical power (increased Type II error/false negatives) for high-abundance metabolites with large variance. This biases downstream biomarker discovery and pathway analysis.

Q3: What are the standard data transformation methods to stabilize variance, and when should I use each? A: Transformations apply a mathematical function to the entire dataset to reduce the mean-variance relationship. See the table below for a comparison.

Table 1: Common Variance-Stabilizing Transformations for Metabolomics Data

| Transformation | Formula | Best Use Case | Key Limitation |

|---|---|---|---|

| Logarithmic | log(x) or log(x+1) | Moderate heteroscedasticity. Widely used for LC-MS data. | Fails with zero values (requires offset). Can over-correct. |

| Square Root | sqrt(x) or sqrt(x + 3/8) | Count data or mild heteroscedasticity. | Less potent than log transform. |

| Generalized Log (glog) | glog(x) = log((x + sqrt(x² + λ)) / 2) | Severe heteroscedasticity. Handles zeros and negative values (post-scaling). | Requires parameter (λ) estimation. |

| Variance Stabilizing Normalization (VSN) | Non-linear transformation optimizing variance stability. | Complex heteroscedasticity across full intensity range. | Integrated into pipelines; less transparent. |

Q4: Are there alternatives to simple transformations for dealing with heteroscedasticity? A: Absolutely. Advanced modeling approaches directly incorporate variance structure:

- Generalized Least Squares (GLS): Models the variance-covariance structure explicitly using weights (e.g., the `nlme` or `limma` R packages).

- Weighted Least Squares (WLS): A special case of GLS where weights are inversely proportional to variance.

- Non-Parametric Tests: Use rank-based tests (e.g., Kruskal-Wallis) when transformations fail, though they may lose information on effect size.

Protocol: Implementing a Generalized Log (glog) Transformation

- Preprocessing: Perform basic preprocessing (peak picking, alignment, integration). Ensure data is normalized for technical variation (e.g., QC-based).

- Parameter Estimation (λ): Use the `vsn` package in R or similar. The function `vsn2()` estimates the parameters of its transformation (which play the role of λ) by minimizing the variance-mean dependence across all metabolites.

- Apply Transformation: Transform the intensity matrix `I` using the formula: `glog(I) = log((I + sqrt(I² + λ)) / 2)`.

- Validation: Regenerate diagnostic plots (Scale-Location, Residual vs. Fitted) on a linear model of the transformed data to assess improvement in variance homogeneity.
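The transformation step of this protocol can be sketched in a few lines of Python. The value of `lam` below is a made-up placeholder, not an estimate from real data; the point is only to show how glog behaves at zero, at negative values, and at high intensities.

```python
import math

def glog(x, lam):
    """Generalized log as written in the protocol: log((x + sqrt(x^2 + lam)) / 2)."""
    return math.log((x + math.sqrt(x * x + lam)) / 2.0)

lam = 100.0  # hypothetical value; in practice estimated from the data

for intensity in (-5.0, 0.0, 10.0, 1e3, 1e6):
    line = f"x={intensity:>10.1f}  glog={glog(intensity, lam):8.4f}"
    if intensity > 0:
        line += f"  log={math.log(intensity):8.4f}"
    print(line)
```

For intensities far above `sqrt(lam)` the result converges to the ordinary natural log, while zeros and small negative values (for example after blank subtraction) remain finite.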

Q5: How does heteroscedasticity impact my pathway analysis results? A: Pathway enrichment analysis relies on accurate p-values and fold changes from individual metabolite tests. Heteroscedasticity skews these inputs, leading to:

- Biased identification of "significant" pathways.

- Over-representation of pathways enriched with high-variance (often high-abundance) metabolites.

- Under-representation of pathways involving low-variance metabolites.

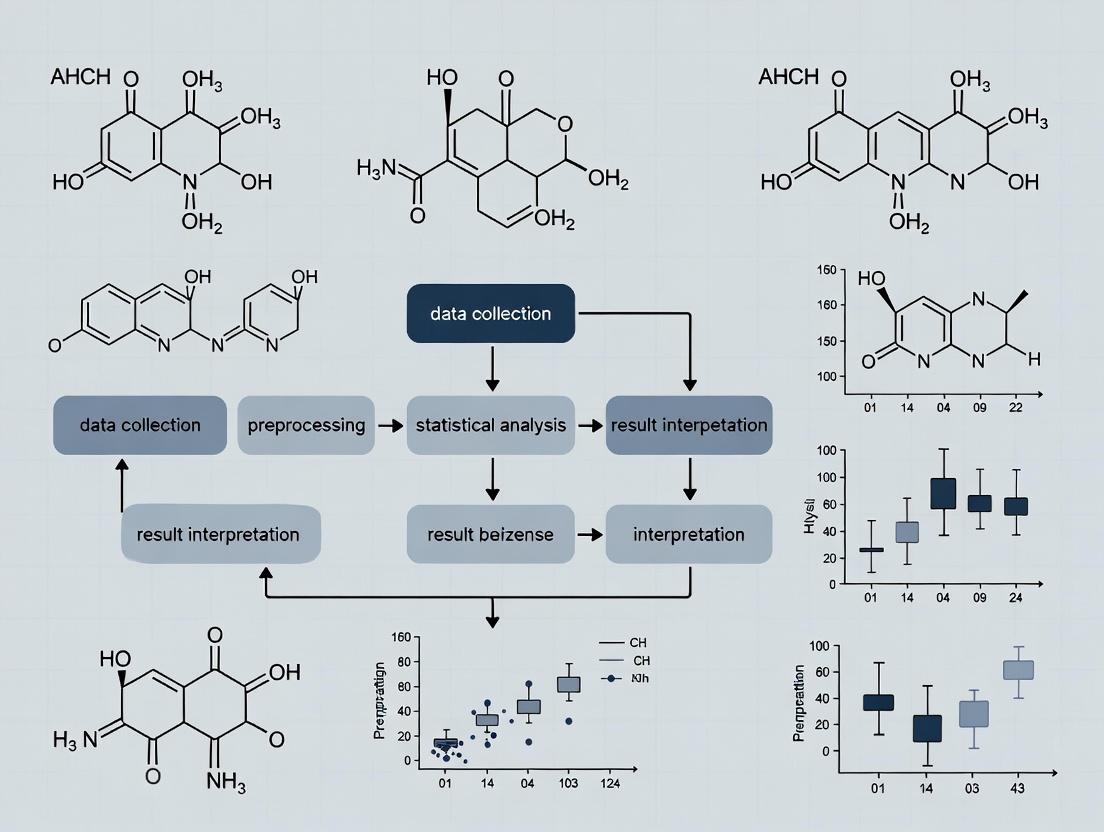

Figure: Workflow for diagnosing and correcting heteroscedasticity.

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials & Tools for Heteroscedasticity Research

| Item / Reagent | Function / Purpose |

|---|---|

| Pooled Quality Control (QC) Samples | Created by combining equal aliquots of all study samples. Used to monitor instrument stability and for normalization methods (e.g., QC-RLSC) that can mitigate variance structure. |

| Internal Standard Mixture (ISTD) | Isotope-labeled compounds spiked at known concentration. Critical for assessing technical variance across the intensity range and for normalization. |

| VSN or glog Parameter λ | Not a physical reagent, but a key parameter estimated from your data to optimize the variance-stabilizing transformation. |

| R/Bioconductor Packages | vsn (VSN transformation), limma (weighted regression), nlme (GLS modeling), ggplot2 (diagnostic plots). Core software tools for implementation. |

| Reference Metabolite Standards | Used to generate calibration curves, helping to characterize the explicit relationship between concentration (mean) and instrument variance. |

Technical Support Center: Troubleshooting Heteroscedastic Noise in Metabolomics

Frequently Asked Questions (FAQs)

Q1: Why does my calibration curve show increasing variance (wider error bars) at higher concentrations? A: This is a classic sign of heteroscedastic noise, intrinsic to the ionization process in MS. Ion suppression/enhancement in the source and space-charge effects in the ion trap or detector become more pronounced with higher analyte loads, leading to a proportional increase in signal variance. This violates the constant-variance (homoscedastic) assumption of many statistical models.

Q2: My low-abundance metabolites have poor reproducibility. Is this related to instrument noise? A: Yes. At low concentrations, the signal approaches the instrument's detection limit. Here, noise sources like electronic baseline noise and stochastic ion counting (Poisson noise) dominate. This creates a different variance structure compared to high-abundance signals, contributing to heteroscedasticity where variance is concentration-dependent.

Q3: Does changing from an ESI to an APCI source affect noise structure? A: Yes. Electrospray Ionization (ESI) is highly susceptible to matrix effects, leading to significant concentration-dependent variance. Atmospheric Pressure Chemical Ionization (APCI) is less prone to such effects, often resulting in a different heteroscedastic profile. The choice of ion source directly influences the noise model required for data processing.

Q4: How can I quickly diagnose heteroscedastic noise in my dataset? A: Plot the residual variance vs. the mean intensity (or concentration) for your QC samples. A fan-shaped or funnel-shaped pattern, rather than a random scatter, is indicative of heteroscedasticity. Statistical tests like the Breusch-Pagan test can also be applied.

Troubleshooting Guides

Issue: Poor Statistical Power in Differential Analysis Symptom: Significant findings are biased towards high-intensity features. Root Cause: Untransformed heteroscedastic data gives excessive weight to high-variance (high-abundance) features during hypothesis testing. Solution: Apply a variance-stabilizing transformation (e.g., Generalized Log, log2) before statistical analysis. For downstream modeling, use weighted regression where weights are inversely proportional to the estimated variance.

Issue: Inaccurate Peak Integration for Low-Abundance Compounds Symptom: High relative standard deviation (RSD%) for low-intensity peaks in replicate injections. Root Cause: Signal at low levels is dominated by non-proportional noise (e.g., electronic noise). Solution: Optimize detector parameters (e.g., increase detector gain within linear range). Implement a baseline smoothing algorithm. For quantification, ensure the calibration model accounts for this baseline noise (e.g., by using a weighted least squares model).

Issue: Batch Effect Correction Fails for Low-Intensity Features Symptom: Normalization methods (e.g., PQN) fail to stabilize low-intensity metabolites across batches. Root Cause: Most normalization methods assume homoscedasticity or are influenced by high-intensity features. The high relative variance of low signals makes them unstable. Solution: Use quality control-based robust normalization (e.g., QC-RLSC) that includes variance-weighting. Consider segmenting data by intensity range for batch correction.

Table 1: Primary Noise Sources in LC-MS/GC-MS and Their Variance Characteristics

| Noise Source | Instrument Stage | Variance Relationship | Dominant in Concentration Range |

|---|---|---|---|

| Electronic Noise | Detector, Amplifier | Constant (σ²) | Near detection limit |

| Shot (Poisson) Noise | Ion Generation & Counting | Proportional to Signal (σ² ∝ μ) | Low to Medium |

| Chemical/Matrix Noise | Ion Source (ESI) | Proportional to Signal Squared (σ² ∝ μ²) | Medium to High |

| Source Fluctuation Drift | Ion Source, Gas Flow | Time-dependent | All ranges |

| Column & Inlet Noise | LC/GC Flow, Injection | Often Proportional | All ranges |

Table 2: Impact of Common Modifications on Heteroscedasticity

| Instrument Parameter | Typical Adjustment | Effect on Noise Structure |

|---|---|---|

| Detector Gain (MS) | Increase | Amplifies both signal and electronic noise; can improve S/N for low-abundance ions. |

| Sheath/Drying Gas Flow (ESI) | Optimize for spray stability | Reduces source fluctuation noise, stabilizing medium-abundance signals. |

| Injection Volume | Reduce | Can decrease matrix effects, reducing proportional variance at high concentrations. |

| Dwell Time (slower scan rate) | Increase | Reduces stochastic (shot) noise for targeted ions but yields fewer data points across each chromatographic peak. |

Experimental Protocols for Characterizing Heteroscedastic Noise

Protocol 1: Establishing the Variance-Function for an MS Platform Objective: To empirically model the relationship between signal intensity (mean) and its variance.

- Sample Preparation: Prepare a serial dilution of a stable standard (e.g., caffeine) over 5-6 orders of magnitude, covering the instrument's dynamic range. Include at least 6 technical replicates per concentration level.

- Data Acquisition: Inject replicates in randomized order to avoid time-based confounding. Use consistent chromatographic and MS conditions (scan mode, resolution).

- Data Processing: Extract the peak area for the target ion. For each concentration level, calculate the mean (μ) and variance (σ²) of the peak areas.

- Modeling: Plot log(σ²) vs. log(μ). Fit a linear model: `log(σ²) = β0 + β1*log(μ)`. The slope `β1` indicates the heteroscedastic structure: 0 (homoscedastic), ~1 (Poisson), ~2 (chemical noise dominant).
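The data processing and modeling steps of Protocol 1 can be sketched as follows. The dilution series is synthetic: each level carries the same relative error pattern, so the fitted slope should come out close to 2. The function name and the data are assumptions for illustration only.

```python
import math
import statistics

def variance_function_slope(levels):
    """Fit log10(variance) = b0 + b1 * log10(mean) across concentration levels.

    `levels` is a list of replicate peak-area lists, one list per level.
    """
    log_mean = [math.log10(statistics.mean(reps)) for reps in levels]
    log_var = [math.log10(statistics.variance(reps)) for reps in levels]
    n = len(levels)
    mx = sum(log_mean) / n
    my = sum(log_var) / n
    b1 = (sum((u - mx) * (v - my) for u, v in zip(log_mean, log_var))
          / sum((u - mx) ** 2 for u in log_mean))
    b0 = my - b1 * mx
    return b0, b1

# Hypothetical dilution series: six replicates per level, purely multiplicative error
relative_errors = [-0.10, -0.05, 0.0, 0.0, 0.05, 0.10]
levels = [[mu * (1.0 + e) for e in relative_errors]
          for mu in (1e2, 1e3, 1e4, 1e5, 1e6)]

b0, b1 = variance_function_slope(levels)
print(f"slope beta1 = {b1:.3f}")  # close to 2 for purely multiplicative noise
```

With real replicate injections the slope will usually fall between these idealized values and may change across the dynamic range, so fitting separate segments can be informative.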

Protocol 2: QC-Based Assessment of Heteroscedasticity in Untargeted Workflows Objective: To assess the intensity-dependent variance structure in a complex metabolomics dataset.

- QC Sample Creation: Generate a pooled QC sample by combining equal aliquots from all study samples.

- Run Sequence: Inject the QC sample repeatedly (n≥10) at the beginning to condition the system, then intermittently throughout the batch (e.g., every 5-10 injections).

- Data Extraction: Perform peak picking and alignment on the full dataset to obtain a feature table (m/z, RT, intensity).

- Variance Calculation: For each metabolic feature, calculate the mean intensity and variance (or RSD%) across the QC injections.

- Visualization: Create a smoothed scatter plot (e.g., LOESS) of RSD% vs. mean log-intensity. A curve that is not flat, typically with RSD% highest for low-intensity features, is diagnostic for heteroscedasticity in untargeted data.
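The variance calculation step of Protocol 2 amounts to a per-feature summary over the QC injections. A minimal sketch, with invented feature names and intensities, might look like this:

```python
import statistics

def qc_rsd_table(qc_intensities):
    """Return {feature: (mean, RSD%)} from {feature: [intensities across QC injections]}."""
    table = {}
    for feature, values in qc_intensities.items():
        mean = statistics.mean(values)
        rsd = 100.0 * statistics.stdev(values) / mean
        table[feature] = (mean, rsd)
    return table

# Hypothetical QC intensities for three features (names are placeholders)
qc = {
    "feature_low": [100.0, 130.0, 70.0, 115.0, 85.0],
    "feature_mid": [10_000.0, 10_900.0, 9_100.0, 10_400.0, 9_600.0],
    "feature_high": [1_000_000.0, 1_030_000.0, 970_000.0, 1_010_000.0, 990_000.0],
}

rsd_table = qc_rsd_table(qc)
for name, (mean, rsd) in sorted(rsd_table.items(), key=lambda kv: kv[1][0]):
    print(f"{name}: mean={mean:.0f}, RSD={rsd:.1f}%")
```

The resulting (mean, RSD%) pairs are the points that would go into the smoothed scatter plot.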

Visualizing Noise Generation and Mitigation Workflows

Figure: Instrument stages and associated noise sources.

Figure: Data processing pipeline to address heteroscedasticity.

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Heteroscedasticity Assessment & Mitigation

| Item | Function in Context | Key Consideration |

|---|---|---|

| Stable Isotope-Labeled Internal Standards (across concentration range) | To differentiate technical variance from matrix effects. Spiking at multiple levels allows empirical variance profiling. | Cover a wide dynamic range; use compound classes relevant to study. |

| Pooled Quality Control (QC) Sample | Represents the study's metabolic matrix; used to monitor system stability and model feature-specific variance. | Prepare from equal aliquots of all study samples to be representative. |

| Certified Reference Material (CRM) for Metabolites | Provides a known concentration for establishing the intensity-variance relationship of the instrument. | Choose CRM with matrix similar to your samples if possible. |

| Instrument Tuning & Calibration Solution (e.g., ESI Tuning Mix) | Ensures instrument is performing to manufacturer specifications, providing a baseline noise profile. | Use fresh solution and follow recommended calibration intervals. |

| Quality Solvents & Additives (LC-MS Grade) | Minimizes baseline chemical noise and adduct formation, reducing non-sample-derived variance. | Low UV cutoff, minimal organic impurities. |

| Derivatization Reagents (for GC-MS; e.g., MSTFA, BSTFA) | Increases volatility and stability of metabolites. Proper derivatization reduces tailing and injection variability. | Must be anhydrous; use fresh reagents to avoid side reactions. |

Troubleshooting Guides & FAQs

Q1: In my LC-MS metabolomics data, the variance of log-transformed metabolite intensities increases with the mean. My PCA shows a strong batch effect. What is the problem and how do I diagnose it visually?

A1: You are likely observing heteroscedasticity, a common issue in untargeted metabolomics where the error variance is not constant across the measurement range. This violates the homoscedasticity assumption of many statistical models and can inflate false discovery rates.

- Diagnostic Tool 1: Mean-Variance Plot. Plot the mean (or median) intensity of each feature (metabolite) against its variance (or standard deviation) across all samples. A fan-shaped pattern or positive trend indicates heteroscedasticity.

- Diagnostic Tool 2: Residual Scatterplot. After fitting a linear model (e.g., for batch or condition), plot the model's residuals against the fitted values. A random cloud suggests homoscedasticity; a funnel shape indicates heteroscedasticity.

Q2: My mean-variance plot shows a clear power-law relationship. Which variance-stabilizing transformation should I apply, and what is the protocol?

A2: A power-law trend suggests a variance-stabilizing transformation (VST) like the Generalized Log (Glog) or the Variance Stabilizing Normalization (VSN) is appropriate.

Protocol: Generalized Log (Glog) Transformation

- Data Preparation: Start with your raw peak intensity matrix (features × samples).

- Parameter Estimation: Use an iterative algorithm to estimate the transformation parameter `λ` that minimizes the dependence of the variance on the mean.

  - For each feature, model the variance `v` as a function of the mean `μ`: `v = a + b·μ²`, where `a` is the additive (baseline) variance and `b` is the multiplicative coefficient.

  - Optimize `λ = a/b` across all features to stabilize the variance.

- Transformation: Apply the Glog transform to each intensity value `y`: `z = log(y + sqrt(y^2 + λ))`.

- Validation: Re-generate the mean-variance plot. A successful transformation will show a flat, horizontal trend.
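One simple way to approximate the parameter estimation step is to fit a two-component variance model, variance = a + b·mean² (an additive baseline term plus a multiplicative term), across features and take the glog parameter as the ratio a/b. The sketch below uses an ordinary unweighted least-squares fit and synthetic numbers. Both are assumptions for illustration; dedicated packages use more robust, iterative estimators.

```python
def fit_two_component_variance(means, variances):
    """Least-squares fit of variance = a + b * mean^2. Returns (a, b, a / b)."""
    u = [m * m for m in means]
    n = len(u)
    mean_u = sum(u) / n
    mean_v = sum(variances) / n
    b = (sum((ui - mean_u) * (vi - mean_v) for ui, vi in zip(u, variances))
         / sum((ui - mean_u) ** 2 for ui in u))
    a = mean_v - b * mean_u
    return a, b, a / b

# Hypothetical per-feature summaries generated from a = 25 (additive), b = 0.01 (multiplicative)
means = [10.0, 100.0, 1_000.0, 10_000.0]
variances = [25.0 + 0.01 * m * m for m in means]

a, b, lam = fit_two_component_variance(means, variances)
print(f"a={a:.3f}, b={b:.5f}, lambda={lam:.1f}")
```

Because an unweighted fit is dominated by the highest-intensity features, the estimate of `a` can be unstable (even negative) on real data, which is one reason iterative or likelihood-based estimators are preferred.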

Q3: After attempting a log transformation, my residual scatterplot still shows patterns. What are the next steps?

A3: Simple log transformation may be insufficient. Consider:

- Weighted Regression: Use the inverse of the estimated variance from your mean-variance relationship as weights in your linear models (e.g., limma's `voom` or similar approaches).

- Robust Scaling: Apply algorithms like Pareto Scaling or Auto Scaling (unit variance), though these can over-correct.

- Non-Linear Modeling: Shift to methods like `DESeq2` (adapted from transcriptomics) which explicitly model the mean-variance relationship for count data, or use Generalized Least Squares (GLS) with an appropriate variance structure.

Protocol: Weighted Least Squares (WLS) for Linear Models

- Estimate Variance Function: From your raw data, fit a smooth line (e.g., LOESS) to the standard deviation vs. mean plot.

- Calculate Weights: For each feature intensity `y`, predict its standard deviation `s` from the fitted function and square it to obtain the variance `v = s²`. The weight `w` is `w = 1/v`.

- Fit Model: Run your linear model (e.g., `~ Treatment + Batch`) using the calculated weights for each data point.

- Re-check Diagnostics: Generate residual plots from the weighted model. Patterns should be diminished.
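The weighting and fitting steps can be illustrated with a single-predictor weighted least squares fit. In this sketch the predicted variances are simply assumed (they stand in for the output of the fitted variance function), and the model has one binary treatment term rather than the full `~ Treatment + Batch` design.

```python
def weighted_least_squares(x, y, w):
    """Weighted fit of y = a + b*x, minimising sum(w * (y - a - b*x)^2)."""
    sw = sum(w)
    mean_x = sum(wi * xi for wi, xi in zip(w, x)) / sw
    mean_y = sum(wi * yi for wi, yi in zip(w, y)) / sw
    sxx = sum(wi * (xi - mean_x) ** 2 for wi, xi in zip(w, x))
    sxy = sum(wi * (xi - mean_x) * (yi - mean_y) for wi, xi, yi in zip(w, x, y))
    b = sxy / sxx
    a = mean_y - b * mean_x
    return a, b

# Hypothetical example: x codes treatment (0 = control, 1 = treated)
x = [0, 0, 0, 1, 1, 1]
y = [10.0, 10.2, 9.8, 12.0, 12.1, 16.0]                   # last value is a noisy observation
predicted_variance = [0.04, 0.04, 0.04, 0.04, 0.04, 4.0]  # assumed output of the variance function
weights = [1.0 / v for v in predicted_variance]

a_w, b_w = weighted_least_squares(x, y, weights)
a_u, b_u = weighted_least_squares(x, y, [1.0] * len(x))   # unweighted, for comparison
print(f"weighted effect:   {b_w:.3f}")
print(f"unweighted effect: {b_u:.3f}")
```

The weighted estimate of the treatment effect is pulled much less by the high-variance observation than the unweighted one, which is the behaviour the protocol is after.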

Table 1: Common Transformations & Their Impact on Heteroscedasticity

| Transformation | Formula | Best For | Effect on Mean-Variance Trend |

|---|---|---|---|

| Log (ln) | ln(y) | Multiplicative errors, log-normal data. | Reduces but may not eliminate power-law trends. |

| Generalized Log (Glog) | ln(y + √(y²+λ)) | Data with a known or inferred additive component. | Stabilizes variance across a wide intensity range. |

| Cube Root | y^(1/3) | Moderate positive skew. | Mild variance stabilization. |

| Square Root | √y | Count data (Poisson-like). | Stabilizes variance where variance ∝ mean. |

| VSN | arsinh(a + b·y) | Multi-platform integration. | Simultaneously stabilizes variance and normalizes. |

Table 2: Diagnostic Plot Interpretation Guide

| Plot | Pattern Observed | Indicated Problem | Recommended Action |

|---|---|---|---|

| Mean-Variance | Positive Slope (Fan-Out) | Heteroscedasticity | Apply VST (Glog, VSN) or use weighted models. |

| Mean-Variance | Horizontal Cloud | Homoscedasticity | Assumption met. Proceed with standard analysis. |

| Residual vs. Fitted | Funnel Shape | Heteroscedasticity | Model residuals require variance weighting. |

| Residual vs. Fitted | Random Scatter | Homoscedasticity | Assumption met. |

| Residual vs. Fitted | U-shaped Curve | Non-linear effect | Model may need additional or different terms. |

Visual Diagnostics Workflow

Figure: Workflow for diagnosing and addressing heteroscedasticity.

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Addressing Heteroscedasticity |

|---|---|

| Quality Control (QC) Pool Samples | Injected repeatedly throughout the run to monitor and correct for technical variance (signal drift) over time, a major source of heteroscedasticity. |

| Internal Standards (IS) | A suite of stable isotope-labeled compounds spiked at known concentrations. Used to normalize feature intensities, correcting for ionization efficiency variance across the intensity range. |

| Batch-Specific Solvent Blanks | Essential for identifying and filtering background signals and carryover, which contribute to non-uniform variance. |

| Variance-Stabilizing Software (e.g., VSN, PepsN) | Specialized packages that implement rigorous algorithms (e.g., VSN) to estimate and apply optimal transformations for variance stability. |

| Statistical Packages with Weighted Regression (e.g., limma, statmod) | Enable fitting of linear models with precision weights derived from the mean-variance trend, down-weighting high-variance observations. |

Technical Support Center: Troubleshooting Heteroscedasticity in Untargeted Metabolomics

FAQs & Troubleshooting Guides

Q1: During differential analysis, my Q-Q plots show deviation from the diagonal line in the tails, and my p-value histograms are not uniform. What does this indicate and how can I troubleshoot it? A: This is a classic symptom of heteroscedasticity (non-constant variance) violating the assumptions of standard parametric tests (e.g., t-test, ANOVA). The variance depends on the mean abundance, inflating test statistics for low-abundance metabolites and deflating them for high-abundance ones. This skews the null distribution, leading to non-uniform p-values and inflated false discoveries.

- Troubleshooting Steps:

- Diagnose: Plot residual variance versus mean abundance (scale-location plot). A clear trend confirms heteroscedasticity.

- Apply Variance-Stabilizing Transformation: Use a Generalized Logarithm (glog) transformation instead of simple log. The glog parameter λ can be optimized using error model fitting (e.g., via the `vsn` package in R).

- Use Robust Tests: Switch to statistical methods that account for heterogeneous variances, such as limma with `voom` or `trend=TRUE`, or weighted least squares regression.

- Non-Parametric Alternatives: Consider rank-based tests (e.g., Wilcoxon) if transformation fails, acknowledging potential loss of power.

Q2: After FDR correction (e.g., Benjamini-Hochberg), I still suspect a high false discovery rate among my top biomarker candidates. Could heteroscedasticity be the cause? A: Yes. Heteroscedasticity directly compromises the validity of FDR control. The Benjamini-Hochberg procedure assumes p-values under the null are uniformly distributed. Heteroscedasticity distorts this distribution, so adjusted p-values (and q-values from methods that estimate the proportion of true null hypotheses) are miscalibrated. You may be declaring too many low-abundance, high-variance metabolites as significant.

- Troubleshooting Steps:

- Pre-process Correctly: Implement variance-stabilizing transformation before running differential analysis.

- Empirical Validation: Use methods like `qvalue` that estimate the π₀ (proportion of true nulls) from the observed p-value distribution, which can be more robust.

- Permutation-Based FDR: In complex designs, employ a permutation-based approach to generate a null distribution of test statistics that preserves the variance structure, then calculate empirical FDR.
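For reference, the Benjamini-Hochberg adjustment discussed in this answer can be written in a few lines. This is a plain sketch of the standard step-up procedure with made-up p-values; it does not include the π₀ estimation performed by `qvalue` or any permutation step.

```python
def benjamini_hochberg(pvalues):
    """Return Benjamini-Hochberg adjusted p-values in the original order."""
    m = len(pvalues)
    order = sorted(range(m), key=lambda i: pvalues[i])  # indices from smallest to largest p
    adjusted = [0.0] * m
    running_min = 1.0
    # Walk from the largest p-value down, enforcing monotonicity of the adjusted values.
    for rank in range(m, 0, -1):
        i = order[rank - 1]
        running_min = min(running_min, pvalues[i] * m / rank)
        adjusted[i] = running_min
    return adjusted

# Hypothetical per-metabolite p-values
pvals = [0.01, 0.04, 0.03, 0.20]
adj = benjamini_hochberg(pvals)
print([round(q, 4) for q in adj])
```

The adjustment is only as trustworthy as the p-values fed into it, which is why variance stabilization or weighting belongs before this step.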

Q3: My putative biomarkers fail to validate in an independent cohort or targeted assay. Could data structure issues from my untargeted platform be to blame? A: Absolutely. Heteroscedasticity is a primary culprit in poor cross-platform validation. On the raw scale, variance in an untargeted metabolomics dataset typically increases with mean intensity, while relative variance (RSD) is usually highest for low-abundance features. If this is not addressed, biomarker selection is biased toward noisy features, which may not be reproducible.

- Troubleshooting Protocol:

- Workflow Integration: Ensure variance stabilization is a mandatory step in your discovery workflow.

- Protocol: gLog Transformation for MS Data:

- Input: Peak intensity matrix (samples x features).

- Step 1: Fit an error model to estimate the relationship between variance and mean per feature (e.g., using the `vsn` or `msEmpiRe` packages).

- Step 2: Derive the optimal stabilization parameter(s).

- Step 3: Apply the generalized log2 transformation: `glog(x) = log2(x + sqrt(x^2 + λ))`.

- Step 4: Verify success by plotting standard deviation vs. mean post-transformation; the trend line should be flat.

- Post-Selection: Prioritize biomarkers that remain significant after proper variance modeling and have a plausible biological context.

Quantitative Impact of Heteroscedasticity Correction

Table 1: Comparison of Analysis Outcomes With and Without Addressing Heteroscedasticity (Simulated LC-MS Dataset)

| Metric | Raw Log-Transformed Data | Variance-Stabilized Data (glog) |

|---|---|---|

| Skew in p-value Distribution | Severe left skew (non-uniform) | Near-uniform under null |

| FDR (5% nominal) | 9.8% (Inflated) | 4.7% (Well-controlled) |

| True Positive Rate (Power) | Biased; high for high-abundance, low for low-abundance features | Balanced across intensity range |

| List of Discovered Biomarkers | Over-represents low-abundance, high-variance noise | More reproducible, biologically relevant features |

Experimental Protocols

Protocol: Diagnosing Heteroscedasticity in Metabolomic Data

- Data: Normalized peak intensity matrix.

- Compute: For each metabolite, calculate group means and variances (or standard deviations).

- Visualize: Create a scatter plot of standard deviation (y-axis) versus group mean (x-axis).

- Interpret: A fan-shaped pattern or positive correlation indicates heteroscedasticity. A flat, horizontal band indicates homoscedasticity.

Protocol: Implementing Variance Stabilization with VSN

- Install Package: In R, run `BiocManager::install("vsn")` (vsn is distributed through Bioconductor rather than CRAN), then `library(vsn)`.

- Fit Model: `fit <- vsn2(as.matrix(your_intensity_matrix))`

- Transform Data: `transformed_matrix <- predict(fit, newdata=as.matrix(your_intensity_matrix))`

- Validate: Use `meanSdPlot(transformed_matrix)` to check for independence of variance from the mean.

Visualization: Experimental Workflows

Figure: Metabolomics analysis workflow with heteroscedasticity check.

Figure: Cascading impact of heteroscedasticity on discovery.

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Materials & Tools for Addressing Heteroscedasticity

| Item / Reagent | Function / Purpose |

|---|---|

| Quality Control (QC) Pool Samples | Injected throughout run to monitor stability, used for robust error model fitting in transformations like VSN. |

| Internal Standard Mix (ISTD) | Stable isotope-labeled compounds across metabolite classes; aids normalization and assesses technical variance. |

| VSN R/Bioconductor Package | Implements variance-stabilizing transformation and normalization optimized for omics data. |

| limma with `voom` Function | Statistical package for RNA-seq adapted for metabolomics; models mean-variance trend to weight observations. |

| glog Transformation Algorithm | Generalized log transform with parameter λ; stabilizes variance across the dynamic range of MS data. |

| Permutation Test Framework | Generates empirical null distributions to calculate FDR, robust to complex variance structures. |

Troubleshooting Guides & FAQs

Q1: During differential analysis of a Metabolomics Workbench dataset, my statistical test (e.g., t-test) assumptions fail due to non-constant variance (heteroscedasticity) across intensity levels. How can I diagnose and address this? A: Heteroscedasticity, where variance scales with metabolite signal intensity, is common in untargeted LC-MS data. Diagnose by plotting Residuals vs. Fitted values or the Standard Deviation vs. Mean intensity per metabolite.

- Solution 1: Apply a variance-stabilizing transformation (VST) such as the Generalized Log (Glog) or log2 transformation prior to analysis.

- Solution 2: Use statistical methods designed for heteroscedastic data, such as weighted regression (`limma` with `voom` weights) or non-parametric tests.

- Solution 3: Utilize data from technical replicates in the study to model the mean-variance relationship explicitly (e.g., via the `PROcess` or `msEmpiRe` packages).

Q2: When pooling data from multiple studies on Metabolomics Workbench, batch effects and differing variance structures overwhelm biological signals. What is the best practice for integration? A: Systematic technical variation introduces severe heteroscedasticity across batches.

- Protocol: Apply batch correction after variance stabilization. Use ComBat (parametric mode in the `sva` package), which assumes homoscedasticity, or its extension, ComBat-seq, for count-based metabolomic data. Always correct within biologically similar groups.

- Critical Step: Validate the correction by PCA: points colored by batch should show mixing, and points colored by biological group should show separation.

Q3: My QC samples show high precision, but biological samples exhibit inflated variance for low-abundance metabolites, compromising detection limits. How can I improve reliability? A: This is a classic manifestation of heteroscedasticity.

- Methodology: Implement a rigorous QC-RLSC (Quality Control-based Robust LOESS Signal Correction) pipeline.

- Run QC samples intermittently throughout the sequence.

- For each metabolite, model its intensity in QCs as a function of injection order using LOESS regression.

- Use this model to correct the drift in biological samples.

- This reduces variance inflation over time, especially critical for low-abundance features.
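The correction logic of the steps above can be sketched for a single feature. To stay dependency-free, this example replaces the LOESS fit with piecewise-linear interpolation between QC injections, which is a deliberate simplification and not the QC-RLSC algorithm itself. The run layout and intensities are invented.

```python
from bisect import bisect_left
import statistics

def interpolate(x, xs, ys):
    """Piecewise-linear interpolation; values outside the QC range are clamped."""
    if x <= xs[0]:
        return ys[0]
    if x >= xs[-1]:
        return ys[-1]
    j = bisect_left(xs, x)
    x0, x1, y0, y1 = xs[j - 1], xs[j], ys[j - 1], ys[j]
    return y0 + (y1 - y0) * (x - x0) / (x1 - x0)

def qc_drift_correct(order, intensity, is_qc):
    """Correct one feature for drift using its QC injections.

    `order` must be sorted ascending and contain at least one QC injection.
    Each value is divided by the interpolated QC signal at its injection
    position and rescaled to the median QC intensity.
    """
    qc_order = [o for o, q in zip(order, is_qc) if q]
    qc_signal = [v for v, q in zip(intensity, is_qc) if q]
    target = statistics.median(qc_signal)
    return [v * target / interpolate(o, qc_order, qc_signal)
            for o, v in zip(order, intensity)]

# Hypothetical run: QCs at injections 1, 5, 9; signal drifts down 2% per injection
order = list(range(1, 10))
is_qc = [o in (1, 5, 9) for o in order]
drift = [1.0 - 0.02 * (o - 1) for o in order]
intensity = [(1000.0 if q else 500.0) * d for q, d in zip(is_qc, drift)]

corrected = qc_drift_correct(order, intensity, is_qc)
for o, raw, cor in zip(order, intensity, corrected):
    print(o, round(raw, 1), round(cor, 1))
```

After correction, the biological samples in this toy run share a single value again, because the only source of variation was the simulated drift. A LOESS fit serves the same purpose while smoothing over noise in individual QC injections.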

Q4: I need to prioritize metabolites for validation. How do I select stable biomarkers when variance is intensity-dependent? A: Traditional fold-change ranking is biased towards high-abundance, high-variance metabolites.

- Protocol: Use the Modified Welch's t-test or the Trend test (`limma-trend`), which account for mean-variance trends. Calculate p-values adjusted for false discovery rate (FDR) using the Benjamini-Hochberg procedure. Prioritize features with large, statistically significant effect sizes (e.g., fold-change) and low variance within groups relative to the difference between groups.

Table 1: Common Transformations for Variance Stabilization in Metabolomics

| Transformation | Formula | Best For | Effect on Heteroscedasticity |

|---|---|---|---|

| Log2 | x' = log2(x + C) | Data with moderate mean-variance trend. Simple. | Moderate stabilization. Constant C is critical. |

| Generalized Log (Glog) | x' = ln((x + sqrt(x^2 + λ))/2) | Strong heteroscedasticity; wide dynamic range. | Excellent stabilization. λ parameter optimizes fit. |

| Cube Root | x' = x^(1/3) | Data with moderate positive skew. | Mild stabilization; weaker than log. (Poisson-like variance, variance ∝ mean, is the use case for the square root.) |

| VST (via DESeq2) | Model-based | RNA-seq count data; can be adapted for metabolomics. | Models mean-variance relationship directly. |

Table 2: Key Statistical Tests Under Heteroscedasticity

| Test | R/Python Function | Use Case | Assumption |

|---|---|---|---|

| Welch's t-test | `t.test(..., var.equal=FALSE)` | Comparing two groups with unequal variances. | Normality, independent samples. |

| White's Adjusted Test | `car::hccm()` | Linear regression with heteroscedastic errors. | Consistent covariance estimator. |

| Kruskal-Wallis | `kruskal.test()` | Non-parametric comparison of >2 groups. | Independent samples, ordinal data. |

| LIMMA with voom | `limma::voom()` then `lmFit()` | Multi-group differential analysis, precision weights. | Mean-variance trend can be modeled. |
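To make the first row of the table concrete, the sketch below computes Welch's t statistic and the Welch-Satterthwaite degrees of freedom by hand. The two groups are invented numbers, and the p-value is left out because the Python standard library has no t distribution; a statistics package would supply it.

```python
import math
import statistics

def welch_t(group_a, group_b):
    """Welch's t statistic and Welch-Satterthwaite degrees of freedom."""
    na, nb = len(group_a), len(group_b)
    va = statistics.variance(group_a) / na  # squared standard error of mean A
    vb = statistics.variance(group_b) / nb  # squared standard error of mean B
    t = (statistics.mean(group_a) - statistics.mean(group_b)) / math.sqrt(va + vb)
    df = (va + vb) ** 2 / (va ** 2 / (na - 1) + vb ** 2 / (nb - 1))
    return t, df

# Hypothetical intensities for one metabolite in two groups with unequal spread
control = [1.0, 2.0, 3.0, 4.0, 5.0]
treated = [2.0, 4.0, 6.0, 8.0, 10.0]

t_stat, dof = welch_t(control, treated)
print(f"t = {t_stat:.3f}, df = {dof:.2f}")
```

When the two groups have equal variances and sizes, the degrees of freedom reduce to the pooled value of 2(n - 1). With unequal variances they shrink, which is what protects the test from the unequal-variance problem.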

Experimental Protocols

Protocol: Diagnostic Plot for Heteroscedasticity

- Input: Normalized, but untransformed peak intensity table (features as rows, samples as columns).

- For each metabolite/feature, calculate the group mean and group standard deviation (SD) or variance.

- Create a scatter plot: X-axis = Log10(Group Mean), Y-axis = Log10(Group SD) or Log10(Group Variance).

- Interpretation: A clear positive linear relationship (slope > 0) indicates heteroscedasticity. A horizontal line indicates homoscedasticity.
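
The diagnostic above can also be reduced to a single number. The sketch below, assuming NumPy and a hypothetical helper `mean_sd_slope`, fits a straight line to log10(SD) against log10(mean) for one sample group; the data are simulated with multiplicative noise purely for illustration.

```python
import numpy as np

def mean_sd_slope(intensities):
    """Slope of log10(SD) against log10(mean) across features.

    intensities: 2-D array, features as rows, samples of ONE group as columns.
    """
    x = np.asarray(intensities, dtype=float)
    means = x.mean(axis=1)
    sds = x.std(axis=1, ddof=1)
    # Logarithms need strictly positive means and SDs.
    keep = (means > 0) & (sds > 0)
    slope, _intercept = np.polyfit(np.log10(means[keep]), np.log10(sds[keep]), 1)
    return slope

# Simulated group: 500 features, 8 samples, SD proportional to the mean.
rng = np.random.default_rng(0)
true_means = 10 ** rng.uniform(2, 6, size=500)
data = true_means[:, None] * (1 + 0.1 * rng.standard_normal((500, 8)))
slope = mean_sd_slope(data)  # close to 1 for purely multiplicative noise
```

A slope near 0 corresponds to the horizontal line described in the interpretation step; a slope near 1 on the SD scale points to multiplicative error.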

Protocol: Implementing Glog Transformation in R

Diagrams

Variance Stabilization Workflow for Public Data

Mean-Variance Relationship in Untargeted Metabolomics

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Addressing Heteroscedasticity |

|---|---|

| Pooled QC Samples | Injected repeatedly to model and correct for technical variance drift over time (QC-RLSC). |

| Internal Standards (ISTDs) | Labeled compounds spiked at known concentrations to monitor and correct for matrix effects & ionization variance. |

| Spectral Libraries (e.g., NIST, HMDB) | Aid in confident metabolite identification, reducing variance from misannotation in cross-study comparisons. |

| VST Software (PROcess, MSnBase) | Specialized packages implementing Glog and related transformations for LC-MS data. |

| Batch Correction Tools (ComBat, SVA) | Statistically remove batch effects, which are a major source of structured heteroscedasticity. |

| Quality Metrics (RSD%, D-ratio) | Calculate Relative Standard Deviation in QCs and D-ratio (biol. SD / QC SD) to filter high-variance, unreliable features. |

Solving the Variance Problem: A Toolkit of Transformations and Models for Metabolomics

Troubleshooting Guides & FAQs

Q1: Why is my variance-stabilized data still showing heteroscedasticity in diagnostic plots?

A: This often occurs due to an inappropriate transformation choice or the presence of extreme outliers. First, verify that you applied the transformation to the entire feature matrix correctly. For log transformation (log(x) or log(x+1)), ensure you have no true zero values if using log(x). The square root transformation is weaker and may not suffice for severe heteroscedasticity. The arcsinh transformation, defined as arcsinh(x) = ln(x + sqrt(x^2 + 1)), is more robust to zeros and wide dynamic ranges common in metabolomics. Re-examine your residual vs. fitted plots after each transformation. If heteroscedasticity persists, consider a generalized log (glog) transformation or a power transformation (Box-Cox), which may be more suitable for your specific data distribution.

Q2: How do I handle zeros or missing values when applying these transformations? A: Zeros pose a significant challenge for log transformation. Common solutions include:

- Adding a small pseudocount (e.g., 1, or half of the minimum positive value observed in the data). However, this choice is arbitrary and can influence downstream analysis.

- Using the arcsinh transformation, which is defined for all real numbers, including zero, and requires no pseudocount.

- Employing a missing value imputation method (e.g., k-nearest neighbors, minimum value imputation) before transformation, as transformations are not defined for missing values. The protocol should be consistent across all samples.

Experimental Protocol for Imputation & Log Transformation:

- Identify metabolites with >20% missing values per group and consider removing them.

- For remaining missing values, perform k-nearest neighbors (KNN) imputation (k=10 is a common start) using normalized data.

- Add a pseudocount equal to half the minimum positive value found for each metabolite across all samples.

- Apply the natural log transformation to the entire matrix.

- Document the pseudocount value and imputation method in your metadata.
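
The pseudocount and log steps of this protocol can be sketched as follows. The example assumes NumPy, a matrix with features as rows, and that imputation has already been done (the KNN step is not shown); `log_with_half_min_pseudocount` is a hypothetical helper name.

```python
import numpy as np

def log_with_half_min_pseudocount(matrix):
    """Natural log after adding, per feature, half its minimum positive value.

    matrix: features as rows, samples as columns, no missing values.
    Returns the transformed matrix and the pseudocount used for each feature.
    """
    x = np.asarray(matrix, dtype=float)
    # Ignore zeros (and negatives) when searching for the smallest positive value.
    positive = np.where(x > 0, x, np.inf)
    half_min = positive.min(axis=1, keepdims=True) / 2.0
    if not np.all(np.isfinite(half_min)):
        raise ValueError("every feature needs at least one positive value")
    return np.log(x + half_min), half_min.ravel()

# Two features, three samples (illustrative numbers).
logged, pseudocounts = log_with_half_min_pseudocount([[0, 4, 8], [2, 0, 10]])
```

Returning the pseudocounts alongside the transformed matrix makes it straightforward to record them in the metadata, as the last step requires.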

Q3: Which variance-stabilizing transformation is considered "best" for LC-MS metabolomics data? A: There is no universal "best" transformation. The choice depends on the data structure:

- Log Transformation (log(x+c)): Effective for data where the standard deviation is proportional to the mean (multiplicative error). It compresses large values more aggressively than the square root does. The need for a pseudocount (c) is a disadvantage.

- Square Root Transformation (sqrt(x)): Useful for data where the variance is proportional to the mean (Poisson-like errors). It is a weaker stabilizer than the log transform.

- Inverse Hyperbolic Sine (Arcsinh): Increasingly favored in metabolomics and proteomics. It behaves like log for high values but is linear near zero, gracefully handling zeros without a pseudocount. It has a tunable parameter (often called the "cofactor") to adjust the linear-to-log transition point.

Recommended Experimental Protocol for Comparison:

- Split Data: Use a training set or a pilot experiment dataset.

- Apply Transformations: Apply log (with several pseudocounts), square root, and arcsinh (with cofactors 1, 10, 100) to the raw data.

- Fit a Model: Perform ANOVA or linear regression on a known significant metabolite across groups for each transformed dataset.

- Diagnose: Generate and compare Residual vs. Fitted plots and Scale-Location plots for each transformation.

- Quantify: Run Levene's test for homogeneity of variance on the residuals. The transformation yielding the highest p-value (least evidence of heteroscedasticity) is the best candidate for your data type.

Q4: After transformation, my PCA plot looks different. Is this expected? A: Yes, this is expected and desired. Variance-stabilizing transformations change the relative weighting of features. Metabolites with high variance (often high-abundance ones) are down-weighted relative to low-abundance metabolites, which can dramatically alter the multivariate structure. This often leads to better separation of biological groups in PCA if the heteroscedastic noise was obscuring the signal. Always assess PCA on scaled data (e.g., unit variance scaling) after transformation.

Table 1: Properties of Common Variance-Stabilizing Transformations

| Transformation | Formula | Handles Zeros? | Best For Error Type | Key Parameter |

|---|---|---|---|---|

| Logarithmic | log(x + c) | No (requires c) | Multiplicative (σ ∝ μ) | Pseudocount (c) |

| Square Root | sqrt(x) | Yes (x≥0) | Poisson (σ² ∝ μ) | None |

| Arcsinh | arcsinh(x / θ) | Yes | Mixed, Wide Dynamic Range | Cofactor (θ) |

Table 2: Example Impact on Simulated Metabolite Variance

| Metabolite (Mean Raw) | Raw Variance | Variance after Log(x+1) | Variance after sqrt(x) | Variance after arcsinh(x) |

|---|---|---|---|---|

| Low-Abundance (10) | 25 | 0.067 | 0.25 | 0.024 |

| Medium-Abundance (100) | 2500 | 0.021 | 0.25 | 0.0025 |

| High-Abundance (10000) | 250000 | 0.000043 | 0.25 | 0.000025 |

Experimental Protocols

Protocol 1: Systematic Evaluation of Transformation Performance Objective: To select the optimal variance-stabilizing transformation for an untargeted metabolomics dataset.

- Data Preparation: Start with the pre-processed (peak-picked, aligned) but unnormalized data matrix.

- Subset Data: Randomly select a balanced subset of samples (e.g., n=10 per group) for rapid iteration.

- Apply Transformations: Create copies of the subset data and apply:

- log(x+1), log(x + min/2)

- sqrt(x)

- arcsinh(x) with cofactors θ = 1, 10, 100.

- Fit a Linear Model: For each transformed set, fit a simple linear model for a control vs. case group for each metabolite.

- Calculate Diagnostics: For each model, extract residuals. Perform Levene's test on the residuals grouped by sample group.

- Visualize: Plot the distribution of p-values from Levene's test across all metabolites for each transformation. The transformation with the highest median p-value (closest to 1) indicates the most effective stabilization.

- Validate: Apply the top 1-2 transformations to the full dataset and inspect Residual vs. Fitted plots for key metabolites.

Protocol 2: Implementing Arcsinh Transformation with Cofactor Optimization Objective: To apply and tune the arcsinh transformation for an LC-MS dataset.

- Define Function: Use the formula arcsinh(x / θ) = ln( (x / θ) + sqrt((x / θ)^2 + 1) ).

- Set Cofactor Range: Test θ values across the approximate range of your data's noise level (e.g., 1, 5, 10, 50, 100, 500).

- Optimization Criterion: Calculate the mean-variance relationship (e.g., local regression of SD vs. Mean) for each θ. The optimal θ minimizes the slope of this relationship.

- Apply: Transform the entire data matrix using the optimized θ.

- Note: Built-in functions such as R's asinh() take no cofactor argument, which is equivalent to a fixed θ=1. Scale the data manually (x/θ) before calling the function.
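
A minimal Python sketch of this protocol follows. It assumes NumPy, replicate data with features as rows, and it swaps the local regression for a straight-line fit of SD against mean to keep the example short; `arcsinh_transform`, `sd_mean_slope` and `pick_cofactor` are hypothetical helper names, and the data are simulated.

```python
import numpy as np

def arcsinh_transform(x, cofactor):
    """arcsinh(x / cofactor); numpy.arcsinh itself has no cofactor argument."""
    return np.arcsinh(np.asarray(x, dtype=float) / cofactor)

def sd_mean_slope(transformed):
    """Straight-line slope of per-feature SD against per-feature mean."""
    means = transformed.mean(axis=1)
    sds = transformed.std(axis=1, ddof=1)
    slope, _intercept = np.polyfit(means, sds, 1)
    return slope

def pick_cofactor(replicates, candidates=(1, 5, 10, 50, 100, 500)):
    """Return the candidate cofactor with the flattest SD-vs-mean trend."""
    slopes = {c: sd_mean_slope(arcsinh_transform(replicates, c)) for c in candidates}
    best = min(slopes, key=lambda c: abs(slopes[c]))
    return best, slopes

# Simulated replicates: 300 features, 6 injections, additive plus multiplicative noise.
rng = np.random.default_rng(1)
mu = 10 ** rng.uniform(1, 5, size=300)
replicates = (mu[:, None] * (1 + 0.1 * rng.standard_normal((300, 6)))
              + 20 * rng.standard_normal((300, 6)))
best, slopes = pick_cofactor(replicates)
```

The chosen θ is then applied to the full data matrix with `arcsinh_transform(data, best)`.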

Diagrams

Title: Decision Workflow for Selecting a Variance-Stabilizing Transformation

Title: Theoretical Effect of Transformations on Mean-Variance Relationship

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Variance Stabilization Context |

|---|---|

| R or Python Environment | Primary computational platform for implementing transformations (e.g., log1p(), sqrt(), asinh()), statistical tests (Levene's test), and generating diagnostic plots. |

| Metabolomics Software Suites (e.g., XCMS, MS-DIAL) | Used for initial data processing (peak picking, alignment) to generate the raw abundance matrix before transformation. |

| Pseudocount Value (c) | A small, constant value added to all measurements to avoid taking the log of zero. Its selection requires careful justification. |

| Cofactor (θ) for Arcsinh | A scaling parameter that determines the transition point between the linear and logarithmic behavior of the arcsinh function. Optimized from the data. |

| Statistical Testing Scripts | Custom scripts to automate Levene's or Brown-Forsythe tests across all metabolites to quantitatively compare transformation efficacy. |

| Visualization Libraries (ggplot2, matplotlib) | Essential for creating Residual vs. Fitted, Scale-Location, and mean-SD plots to visually assess homoscedasticity. |

Technical Support Center: Troubleshooting Guides & FAQs

This support center is designed within the context of a thesis on Addressing heteroscedasticity in untargeted metabolomics datasets. It addresses common issues researchers face when applying the glog transformation to stabilize variance and improve downstream statistical analysis.

Frequently Asked Questions (FAQs)

Q1: What is the fundamental purpose of the glog transformation in metabolomics? A: The glog transformation is specifically designed to stabilize variance (address heteroscedasticity) across the entire dynamic range of metabolomics data. Untargeted datasets typically show higher technical variance for high-intensity peaks and lower variance for low-intensity peaks. The glog transformation corrects this, making the variance independent of the mean signal, which is a critical assumption for reliable parametric statistical testing (e.g., t-tests, ANOVA).

Q2: How do I determine the optimal lambda (λ) parameter for my dataset? A: The λ parameter is optimized to achieve homoscedasticity (constant variance). The standard methodology is as follows:

- Perform technical replicates of a representative sample.

- Apply a candidate λ value to the replicate data.

- Calculate the standard deviation (SD) of the glog-transformed replicates for each metabolic feature.

- Plot the SD versus the mean intensity (or median) for all features.

- The optimal λ minimizes the dependence (slope) of SD on the mean. This is often formalized by minimizing the trend in a residuals plot or by using a variance-stabilizing objective function.

Q3: My glog-transformed data still shows some heteroscedasticity. What should I check? A:

- Replicate Quality: Ensure the technical replicates used for λ optimization are of high quality and free from outliers.

- Parameter Search Range: Expand the optimization search for λ. Sometimes the optimal value is outside the initially tested range.

- Data Preprocessing: Confirm that appropriate background correction, noise filtering, and alignment have been performed before transformation. The glog cannot correct for poor upstream processing.

- Model Assumption: For extreme heteroscedasticity, a combined approach with subsequent scaling (e.g., Pareto) might be necessary.

Q4: Can I directly compare glog-transformed values from different experimental batches or instruments? A: Not directly. The λ parameter is dataset-specific. To compare across batches:

- Optimal Approach: Process and transform each batch independently, including batch-specific λ optimization. Integrate the data after transformation using batch correction methods (e.g., ComBat, EigenMS).

- Alternative: Use a pooled QC sample analyzed across all batches to determine a single, global λ. This is less ideal if batch effects are significant.

Troubleshooting Guide: Common Error Messages & Issues

Issue: "Numerical instability" or "NaN values" appear after transformation.

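- Cause: This often occurs when the argument to the glog function glog(x) = log((x + sqrt(x^2 + λ)) / 2) becomes invalid. For λ > 0 the argument is mathematically positive for every real x, so failures usually trace back to λ ≤ 0, to non-finite inputs, or to floating-point cancellation when x is large and negative.

- Solution: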

- Check for and handle negative values (e.g., from baseline correction) prior to transformation. A small offset may be needed.

- Ensure λ is a positive number (λ > 0).

- Verify that the data matrix does not contain non-numeric or infinite values.
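
The checks above can be folded into the transformation itself. The sketch below is one possible scalar implementation in plain Python, not the code of any particular package: it validates λ and the input, and for negative x it uses the identity x + sqrt(x^2 + λ) = λ / (sqrt(x^2 + λ) - x), which avoids subtracting two nearly equal numbers.

```python
import math

def glog(x, lam):
    """glog(x) = ln((x + sqrt(x^2 + lam)) / 2), evaluated in a stable way."""
    if lam <= 0:
        raise ValueError("lambda must be positive")
    if not math.isfinite(x):
        raise ValueError("input must be finite")
    # hypot(x, sqrt(lam)) equals sqrt(x^2 + lam) without overflowing on x * x.
    root = math.hypot(x, math.sqrt(lam))
    if x >= 0:
        arg = x + root
    else:
        # x + root cancels badly for large negative x; this form does not.
        arg = lam / (root - x)
    return math.log(arg / 2.0)

# A large negative input that would give log(0) with the naive formula.
value = glog(-1e9, 1.0)
```

With the naive formula, `-1e9 + sqrt(1e18 + 1)` rounds to zero in double precision and the logarithm fails; the rewritten branch returns a finite value.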

Issue: The variance-stabilization performance is poor for low-abundance metabolites.

- Cause: The relationship between variance and mean for signals near the detection limit may deviate from the model assumed by the standard glog.

- Solution: Consider a two-step approach: (1) Apply a threshold or specific model for near-zero signals, (2) Apply the standard glog to signals above a defined cutoff. Alternatively, explore modified glog formulations designed for this scenario.

Issue: How do I interpret the units of glog-transformed data for biological reporting?

- Clarification: The glog-transformed value is unitless and should be interpreted as a variance-stabilized score. Always report:

- The λ value used.

- The exact transformation equation.

- For reporting fold-changes, results must be back-transformed to the original scale for biological interpretation.
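
Back-transformation follows directly from the glog equation. Writing u = 2·exp(y) = x + sqrt(x^2 + λ) and solving for x gives x = (u^2 - λ) / (2u). A minimal sketch in plain Python, with `inverse_glog` as a hypothetical helper name and λ = 5.2 used only as an example value:

```python
import math

def glog(x, lam):
    """Forward transform: ln((x + sqrt(x^2 + lam)) / 2)."""
    return math.log((x + math.sqrt(x * x + lam)) / 2.0)

def inverse_glog(y, lam):
    """Back-transform a glog value to the original intensity scale."""
    u = 2.0 * math.exp(y)  # u = x + sqrt(x^2 + lam)
    return (u * u - lam) / (2.0 * u)

lam = 5.2
original = 1234.5
recovered = inverse_glog(glog(original, lam), lam)
```

For intensities much larger than sqrt(λ), glog(x) approaches ln(x), so differences of glog values approximate log ratios only in that range; at low intensity, fold-changes are better computed from the back-transformed values.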

Data Presentation: Key Parameter Optimization Results

Table 1: Comparison of Variance-Stabilizing Performance for Common Transformations in a Model Metabolomics Dataset (n=6 QC Replicates) Objective: Minimize the slope (β) of SD vs. Mean plot. Ideal β = 0.

| Transformation | Optimal Parameter (λ) | Slope (β) | R² of SD vs. Mean | Notes |

|---|---|---|---|---|

| None (Raw) | N/A | 0.85 | 0.92 | Severe heteroscedasticity. |

| Log (base 2) | N/A | 0.15 | 0.45 | Over-corrects low end, unstable for zeros. |

| Generalized Log (glog) | 5.2 | 0.02 | 0.08 | Optimal stabilization achieved. |

| Square Root | N/A | 0.52 | 0.78 | Moderate improvement only. |

| Power (Box-Cox) | λ=0.3 | 0.08 | 0.25 | Good, but glog outperformed. |

Experimental Protocols

Protocol 1: Optimizing the Lambda (λ) Parameter Using Quality Control (QC) Replicates

- Purpose: To empirically determine the λ value that best stabilizes variance across the measurement range.

- Materials: Pooled QC sample analyzed repeatedly (n≥6) throughout the analytical run.

- Procedure:

- Data Extraction: Obtain peak intensity matrices for all QC replicates after chromatographic alignment.

- Pre-filtering: Remove metabolic features with >30% missing values or high RSD (>30%) in the QCs.

- Lambda Search: Define a search grid for λ (e.g., from 1e-6 to 1000 on a logarithmic scale).

- Transformation & Calculation: For each candidate λ, apply the glog transform to all QC data. For each metabolic feature, calculate the standard deviation (SD) of its glog-transformed values across QC replicates.

- Regression & Evaluation: For each λ, perform a linear regression SD ~ Mean (using the mean glog-transformed intensity per feature). Record the slope (β) and R² of this regression.

- Selection: The optimal λ is the one that minimizes the absolute value of the slope (β) and the R², indicating minimal dependence of variance on the mean.

- Application: Apply the glog transformation with the optimized λ to the entire experimental dataset.
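
The grid search in this protocol can be sketched as below. The example assumes NumPy and a QC matrix with features as rows and replicate injections as columns; it selects λ on the absolute slope alone and reports R² alongside it, which is a simplification of the combined criterion. All function names are hypothetical and the QC data are simulated.

```python
import numpy as np

def glog(x, lam):
    """Element-wise glog: ln((x + sqrt(x^2 + lam)) / 2)."""
    x = np.asarray(x, dtype=float)
    return np.log((x + np.sqrt(x ** 2 + lam)) / 2.0)

def slope_and_r2(transformed):
    """Slope and R^2 of the regression SD ~ Mean across features."""
    means = transformed.mean(axis=1)
    sds = transformed.std(axis=1, ddof=1)
    slope, _intercept = np.polyfit(means, sds, 1)
    r2 = np.corrcoef(means, sds)[0, 1] ** 2
    return slope, r2

def optimise_lambda(qc, grid=None):
    """Evaluate every lambda on the grid; return the flattest one and all results."""
    if grid is None:
        grid = np.logspace(-6, 3, 40)  # 1e-6 to 1000 on a logarithmic scale
    results = [(lam, *slope_and_r2(glog(qc, lam))) for lam in grid]
    best = min(results, key=lambda r: abs(r[1]))
    return best, results

# Simulated QC replicates: 300 features, 6 injections.
rng = np.random.default_rng(2)
mu = 10 ** rng.uniform(1, 5, size=300)
qc = (mu[:, None] * (1 + 0.1 * rng.standard_normal((300, 6)))
      + 2 * rng.standard_normal((300, 6)))
best, results = optimise_lambda(qc)
best_lambda, best_slope, best_r2 = best
```

If the best λ sits at either end of the grid, the search range should be widened before the value is applied to the experimental data.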

Protocol 2: Validating Homoscedasticity Post-Transformation

- Purpose: To confirm the success of the glog transformation prior to statistical analysis.

- Procedure:

- Using the transformed experimental data, plot the residual standard deviation (or the standard deviation within biological/technical groups) against the mean intensity for each metabolite.

- Visually inspect the plot for any obvious trends. A successful transformation will show a "cloud" of points with no systematic upward or downward trend.

- Statistically, run a simple linear model (SD ~ Mean) and test that the slope is not significantly different from zero (p > 0.05, e.g., using an F-test).

- Proceed with differential analysis only after successful validation.

Visualizations

Title: glog Transformation & Validation Workflow for Metabolomics

Title: Conceptual Goal of glog: Decoupling Mean and Variance

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials & Tools for glog Implementation in Metabolomics

| Item | Function/Benefit | Example/Note |

|---|---|---|

| Pooled QC Sample | A homogeneous sample representing the study's biological matrix, injected repeatedly throughout the run. Essential for λ optimization and monitoring instrumental drift. | Created by pooling equal aliquots from all study samples. |

| Chromatography Solvents (LC-MS Grade) | High-purity solvents (water, acetonitrile, methanol) with minimal contaminants to reduce background noise and artifact peaks, ensuring cleaner data for transformation. | Opt for LC-MS grade formic acid/ammonium acetate as modifiers. |

| Stable Isotope Internal Standards (IS) | A mixture of isotopically labeled compounds spanning different chemical classes. Used to monitor and correct for systematic variance before glog application. | Should be added at the earliest possible step (e.g., during extraction). |

| Statistical Software (R/Python) | Programming environments with necessary packages for glog calculation, optimization, and validation. | R: vsn package (uses a similar transform), glog package. Python: scipy, numpy for custom implementation. |

| Data Processing Software | Platforms to convert raw instrument files into aligned peak intensity matrices, a prerequisite for transformation. | XCMS, MS-DIAL, MarkerView, Compound Discoverer. |

| Vendor-Specific Tuning Kits | Standard mixtures used to calibrate and optimize MS instrument response, ensuring data quality at the acquisition stage. | Essential for maintaining reproducibility across optimization experiments. |

Troubleshooting Guides & FAQs

Frequently Asked Questions

Q1: After applying VSN to my untargeted metabolomics dataset, some high-abundance features show increased variance instead of stabilization. What is the cause and solution?

A1: This often occurs due to incomplete model convergence or the presence of extreme outliers that violate the model's assumption of a global mean-variance relationship. First, check the diagnostic plots (residuals vs. rank). Remove extreme outliers (e.g., features with intensity >6 median absolute deviations from the median) and re-run VSN. Ensure you run the justvsn function on all samples simultaneously so that the parameters are estimated from the full matrix.

Q2: How do I handle missing values (NAs) in my data matrix prior to VSN transformation? A2: The VSN algorithm requires complete data. Common strategies include:

- For LC-MS data: Use a minimal, non-zero value imputation (e.g., half the minimum positive value for a feature) only if missingness is low (<20%). For higher missingness, use k-nearest neighbor (KNN) imputation tailored for metabolomics before VSN.

- Critical: Never impute with zero. Re-filter your feature list to include only features present in at least 80% of samples per group to minimize missing data burden.

- Always document the imputation method, as it influences downstream variance.

Q3: My normalized data fails normality tests (e.g., Shapiro-Wilk) even after VSN. Does this mean the method failed? A3: Not necessarily. VSN's primary goal is to stabilize variance across the measurement range (address heteroscedasticity), not to guarantee a perfectly normal distribution for every feature. While it often improves normality, biological multimodality can persist. Focus on the success of variance stabilization by inspecting the homoscedasticity in mean-variance plots pre- and post-normalization.

Q4: Can I apply VSN to integrated peak areas from both positive and negative ionization modes combined? A4: It is not recommended. Positive and negative mode data represent distinct, complementary subsets of the metabolome with different chemical biases and sensitivity ranges. Apply VSN separately to each mode's data matrix. You can combine the normalized datasets for analysis only after independent normalization and scaling.

Key Troubleshooting Table

| Issue | Probable Cause | Diagnostic Step | Recommended Solution |

|---|---|---|---|

| Algorithm non-convergence | Severe outliers, too many zeros/missing values. | Inspect vsn2 error log; plot data matrix heatmap. | Pre-filter features; apply informed imputation; increase lts.quantile parameter. |

| Batch effects remain post-VSN | Strong batch effects dominate biological signal. | Perform PCA pre- and post-VSN; color by batch. | Apply VSN within batches first, then use ComBat or limma's removeBatchEffect across batches. |

| Poor downstream classifier performance | Over-stabilization, loss of biological variance. | Compare PCA group separation before/after. | Tune the strata parameter for partial within-group normalization, or consider non-linear methods like LOESS. |

| Negative values after transformation | Background correction offset issue. | Check distribution summary (min, max). | This is expected; VSN maps data to an additive space. Proceed with statistical testing. |

Experimental Protocol: VSN for Untargeted Metabolomics Data

Protocol Title: Variance-Stabilizing Normalization of LC-MS-Based Untargeted Metabolomics Data.

1. Prerequisite Data Preparation:

- Input: A matrix of integrated peak intensities (features × samples). No log-transformation applied.

- Perform initial quality control: Remove features with >20% missing values across QC samples.

- Impute remaining missing values using the k-Nearest Neighbor (KNN) method (k=10, using the impute.knn function from the impute R package).

2. VSN Transformation:

3. Validation and Diagnostics:

- Generate mean-variance (sd vs. mean) scatter plots for raw and x_vsn data.

- Visually confirm the flattening of the trend.

- Perform Principal Component Analysis (PCA) on the x_vsn data, colored by sample group and injection batch, to assess biological separation and residual technical bias.

Visualizations

VSN Workflow for Metabolomics Data

Core Logic of VSN Integration

The Scientist's Toolkit: Key Research Reagent Solutions

| Item / Reagent | Function in VSN Context |

|---|---|

| Quality Control (QC) Pool Sample | A pooled aliquot of all study samples, injected repeatedly throughout the run. Used to monitor instrument stability and often as a reference for certain normalization methods, though VSN typically uses all data. |

| Internal Standards (ISTDs) | A suite of stable isotope-labeled compounds spanning chemical classes. Correct for injection volume variability and matrix effects. Critical: VSN is applied to data after ISTD correction. |

| Solvent Blanks | Pure solvent samples. Used to identify and remove background/filter carryover features from the data matrix before VSN application. |

| R/Bioconductor vsn Package | The primary software implementation providing the vsn2() and justvsn() functions for robust parameter estimation and transformation. |

| NIST SRM 1950 | Standard Reference Material for metabolomics. Used as an inter-laboratory QC to assess system performance and validate the calibration effect of VSN across datasets. |

| LOESS/Spline Calibrants | For non-linear retention time alignment before VSN. Essential for ensuring the same feature is matched across all samples in the input matrix. |

Technical Support Center: Troubleshooting WLS & Weighted PCA in Metabolomics

This support center addresses common issues encountered when implementing Weighted Least Squares (WLS) regression and Weighted Principal Component Analysis (PCA) to combat heteroscedasticity in untargeted metabolomics datasets.

Frequently Asked Questions (FAQs)

Q1: My WLS model fails to improve or performs worse than OLS. What are the primary causes? A: This typically stems from incorrect weight specification.

- Cause A: Incorrect Variance Function. The assumed relationship between variance and signal intensity (e.g., variance ~ mean^2) may be wrong for your data.

- Troubleshooting: Plot log(sample variance) vs. log(sample mean) for your QC samples or technical replicates. Fit a line to determine the true power relationship (variance ∝ mean^power). Use this exponent to calculate weights (weight = 1/(mean^(power))).

- Cause B: Extreme Weights. Very low-intensity features receive disproportionately high weights, amplifying their noise.

- Troubleshooting: Apply a smoothing function or a lower bound (floor) to the estimated variances before inverting them to weights. Consider using log(x+1) transformed data for weight calculation.
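
Both troubleshooting steps can be illustrated with a short NumPy sketch. It assumes a power-law variance model with a known exponent, floors the modelled variances at a low quantile before inverting them, and solves the weighted problem by scaling rows with the square root of the weights. The function names, the 5% floor and the toy data are assumptions made for illustration.

```python
import numpy as np

def weights_from_power_law(means, power, floor_quantile=0.05):
    """Weights 1 / mean^power, with the modelled variance floored from below."""
    means = np.asarray(means, dtype=float)
    variances = means ** power
    # The floor keeps very low-intensity observations from dominating the fit.
    floor = np.quantile(variances, floor_quantile)
    return 1.0 / np.maximum(variances, floor)

def weighted_least_squares(X, y, weights):
    """Solve min sum_i w_i * (y_i - X_i b)^2 by rescaling rows with sqrt(w_i)."""
    sw = np.sqrt(np.asarray(weights, dtype=float))
    coef, *_ = np.linalg.lstsq(X * sw[:, None], y * sw, rcond=None)
    return coef

# Noise-free toy data: y = 2 + 3x, so any valid weighting recovers [2, 3].
x = np.arange(1.0, 11.0)
X = np.column_stack([np.ones_like(x), x])
y = 2.0 + 3.0 * x
w = weights_from_power_law(y, power=2.0)
coef = weighted_least_squares(X, y, w)
```

On real data the exponent would come from the log-log fit described under Cause A, and the weighted fit would be compared against OLS on held-out samples.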

Q2: How do I handle missing values when calculating weights for WLS or Weighted PCA? A: Weights and the analysis itself require complete data.

- Perform initial missing value imputation (e.g., k-NN, minimum value) on your peak intensity matrix.

- Using the imputed data, calculate the variance and mean for each feature (metabolite) across samples or QC replicates.

- Derive weights from these variance estimates.

- Apply WLS or Weighted PCA using the imputed data matrix and the calculated weight matrix.

Q3: In Weighted PCA, my results are dominated by a few high-weight variables. How can I balance weighting and representation? A: This indicates an imbalance in your weight distribution.

- Solution: Normalize or transform the weight vector. Common approaches include:

- Scaling: Scale weights so their sum equals the number of variables.

- Power Transformation: Apply a fractional power (e.g., sqrt(weight)) to dampen extreme differences.

- Truncation: Winsorize the weights by capping the maximum value (e.g., at the 95th percentile).
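
The three adjustments are listed as alternatives, but they can also be chained. The sketch below, assuming NumPy and a hypothetical helper `balance_weights`, applies truncation, then the power transformation, then scaling; set `power=1.0` or `cap_quantile=1.0` to switch a step off.

```python
import numpy as np

def balance_weights(weights, power=0.5, cap_quantile=0.95):
    """Cap, dampen and rescale a weight vector.

    The result sums to the number of variables.
    """
    w = np.asarray(weights, dtype=float)
    w = np.minimum(w, np.quantile(w, cap_quantile))  # truncation at the upper quantile
    w = w ** power                                   # fractional power dampens extremes
    return w * (w.size / w.sum())                    # scaling: sum equals number of variables

# One variable with a weight 100 times larger than the rest (illustrative).
raw = np.array([1.0, 1.0, 1.0, 1.0, 100.0])
balanced = balance_weights(raw)
```

After balancing, the largest weight is still the largest, but its ratio to the others is far smaller than in the raw vector.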

Q4: What is the best method to empirically estimate the variance function for weight calculation? A: Use repeated measures, such as Quality Control (QC) samples or technical replicates.

- Protocol: Pool equal aliquots from all experimental samples to create a homogeneous QC pool. Inject the QC sample repeatedly (e.g., every 4-10 injections) throughout the analytical run.

- Calculation: For each metabolic feature, calculate the variance across all QC injections. Plot this variance against the mean intensity for that feature across QCs. Fit a model (e.g., var = α * mean^β) to derive the heteroscedasticity relationship for your specific LC-MS platform and study.

Troubleshooting Guides

Issue: Unstable WLS Coefficients During Cross-Validation Symptoms: Large fluctuations in regression coefficients (e.g., for a clinical outcome) when different cross-validation folds are used. Diagnostic Steps:

- Check for high leverage points in your design matrix (X).

- Verify that the variance structure is consistent across subgroups. Fit variance models separately for case/control groups using QC data.

- Ensure weights are recalculated within each training fold during cross-validation, not based on the whole dataset, to avoid bias.

Issue: Computational Errors in Weighted PCA with Large Datasets Symptoms: Algorithm fails to converge or returns memory errors. Resolution:

- Pre-process: Reduce dimensionality first by removing low-variance features (after considering their weights).

- Implementation: Use specialized algorithms for weighted low-rank approximation (e.g., NIPALS-based Weighted PCA) that avoid constructing the full weighted covariance matrix.

- Subset: Perform the analysis on a representative subset (e.g., 500-1000 features with the highest weighted variance) for initial exploration.

Table 1: Common Variance-Intensity Relationships in LC-MS Metabolomics Data

| Variance Model | Formula | Typical Exponent (β) Range | Suggested Use Case |

|---|---|---|---|

| Constant Variance | var = k | β = 0 | Homoscedastic data (rare in untargeted MS) |

| Poisson-like | var ∝ mean | β ≈ 1 | Lower-intensity, count-like data |

| Power Law (Empirical) | var ∝ mean^β | β ≈ 1.5 - 2.2 | Most common in LC-MS metabolomics |

| Quadratic | var ∝ mean^2 | β = 2 | Approximate model for high-intensity peaks |

Table 2: Impact of Weighting on Model Performance (Example Simulation)

| Method | Mean Squared Error (MSE) | 95% CI Coverage Rate | Feature Selection Precision |

|---|---|---|---|

| Ordinary Least Squares (OLS) | 12.7 | 88.5% | 0.72 |

| WLS (Correct Weights) | 8.1 | 94.2% | 0.91 |

| WLS (Incorrect Exponent) | 10.3 | 90.1% | 0.85 |

| Unweighted PCA (First PC) | N/A | N/A | Explains 35% of total variance |

| Weighted PCA (First PC) | N/A | N/A | Explains 62% of weighted variance |

Experimental Protocols

Protocol 1: Establishing a Study-Specific Variance Function for Weight Calculation Objective: To empirically determine the relationship between metabolite intensity and variance for robust WLS and Weighted PCA. Materials: See "The Scientist's Toolkit" below. Procedure:

- QC Sample Preparation: Prepare a pooled QC sample from an equal aliquot of every study sample.

- Instrumental Analysis: Analyze the QC sample repeatedly (n ≥ 10) throughout the LC-MS sequence, interspersed at regular intervals.

- Data Pre-processing: Process raw files. Align peaks and perform QC-based correction (e.g., using QC-RFSC or batch correction). Do not impute missing values in the QC data yet.

- Variance Calculation: For each metabolic feature present in at least 70% of QC injections, calculate:

- Mean_QC = mean intensity across QC injections.

- Var_QC = variance of intensity across QC injections.

- Model Fitting: Perform a linear regression on log-transformed values: log(Var_QC) = α + β * log(Mean_QC).

- Weight Assignment: For all study samples, the weight for feature i is: w_i = 1 / ( (mean_intensity_i)^β ).
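
The variance-calculation, model-fitting and weight-assignment steps can be sketched with NumPy as follows. The QC matrix is simulated (SD set to 10% of the mean, so β should come out near 2), missing values are encoded as NaN, and the function names are hypothetical.

```python
import numpy as np

def fit_variance_function(qc, min_presence=0.7):
    """Fit log(Var_QC) = alpha + beta * log(Mean_QC) across features.

    qc: features as rows, QC injections as columns, NaN for missing values.
    """
    qc = np.asarray(qc, dtype=float)
    # Keep features detected in at least 70% of QC injections.
    present = np.mean(~np.isnan(qc), axis=1) >= min_presence
    mean_qc = np.nanmean(qc[present], axis=1)
    var_qc = np.nanvar(qc[present], axis=1, ddof=1)
    ok = (mean_qc > 0) & (var_qc > 0)
    beta, alpha = np.polyfit(np.log(mean_qc[ok]), np.log(var_qc[ok]), 1)
    return alpha, beta

def feature_weights(mean_intensity, beta):
    """w_i = 1 / mean_intensity_i ** beta."""
    return 1.0 / np.asarray(mean_intensity, dtype=float) ** beta

# Simulated QC data: 400 features, 12 injections, multiplicative noise.
rng = np.random.default_rng(3)
means = 10 ** rng.uniform(2, 6, size=400)
qc = means[:, None] * (1 + 0.1 * rng.standard_normal((400, 12)))
qc[0, :5] = np.nan  # feature 0 is present in only 7 of 12 injections and is dropped
alpha, beta = fit_variance_function(qc)
weights = feature_weights(np.nanmean(qc, axis=1), beta)
```

A fitted β well outside the 1 to 2.2 range usually signals a problem upstream, such as uncorrected drift in the QC injections.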

Protocol 2: Implementing Weighted PCA for Heteroscedastic Data Exploration

Objective: To perform a dimensionality reduction that accounts for heteroscedastic noise.

Input: Pre-processed, imputed, and possibly log-transformed peak intensity matrix X (n samples × p features).

Procedure:

- Calculate Feature Weights: Using the variance function from Protocol 1 or from sample replicates, compute a weight w_j for each of the p features (j=1..p). Form a diagonal weight matrix W.

- Center the Data: Column-center the data matrix X to obtain X_c.

- Apply Weights: Transform the data using the weights: X_w = X_c * W^(1/2). This down-weights noisier features.

- Perform SVD: Compute the Singular Value Decomposition (SVD) of the weighted matrix: X_w = U * S * V^T.

- Obtain Weighted Loadings: The weighted loadings (for the original space) are given by P = W^(-1/2) * V.

- Obtain Scores: The weighted PCA scores are T = U * S. These can be used for visualization, with the first few PCs capturing the most reliable variance.
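
The procedure maps onto a few lines of NumPy. This is a minimal sketch with a hypothetical function name and random data; because W is diagonal, multiplying by W^(1/2) reduces to scaling each column by the square root of its weight.

```python
import numpy as np

def weighted_pca(X, weights, n_components=2):
    """Feature-weighted PCA via SVD of X_c * W^(1/2).

    X: samples as rows, features as columns. weights: one weight per feature.
    """
    X = np.asarray(X, dtype=float)
    sqrt_w = np.sqrt(np.asarray(weights, dtype=float))
    X_c = X - X.mean(axis=0)                 # column-centre
    X_w = X_c * sqrt_w                       # same as X_c @ diag(w)^(1/2)
    U, S, Vt = np.linalg.svd(X_w, full_matrices=False)
    scores = U[:, :n_components] * S[:n_components]        # T = U S
    loadings = Vt[:n_components].T / sqrt_w[:, None]       # P = W^(-1/2) V
    return scores, loadings, S

# Random example: 6 samples, 4 features, arbitrary positive weights.
rng = np.random.default_rng(4)
X = rng.normal(size=(6, 4))
w = np.array([1.0, 0.5, 2.0, 4.0])
scores, loadings, singular_values = weighted_pca(X, w, n_components=4)
```

Working through the SVD of the weighted matrix avoids forming the full weighted covariance matrix, which matters when p is large.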

Visualization

Diagram 1: Workflow for Addressing Heteroscedasticity in Metabolomics

Diagram 2: WLS vs. OLS Regression Concept

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Implementing WLS/Weighted PCA Protocols

| Item / Reagent | Function / Purpose |

|---|---|

| Pooled Quality Control (QC) Sample | A homogeneous reference for empirically measuring technical variance across the analytical run. Crucial for estimating the variance-intensity relationship. |

| Internal Standard Mix (ISTD) | A set of stable isotope-labeled compounds spanning chemical classes. Used for monitoring and correcting for instrument drift, but not directly for weighting. |

| Solvent Blanks | Pure LC-MS grade solvents (e.g., water, methanol). Used to identify and filter system background noise and carryover. |

| Reference Serum/Plasma Pool (e.g., NIST SRM 1950) | A commercially available, characterized human metabolome reference material. Used for system suitability testing and inter-laboratory comparison. |

| Data Processing Software (e.g., XCMS, MS-DIAL, Progenesis QI) | Extracts peak intensities, aligns runs, and performs initial QC. Outputs the feature intensity matrix for downstream statistical weighting. |

| Statistical Environment (R/Python with key packages) | R: stats (WLS), FactoMineR (PCA), pcaMethods (Weighted PCA). Python: statsmodels (WLS), scikit-learn (PCA, applied to a matrix that has already been feature-weighted), numpy. |

Technical Support Center: Troubleshooting Guides & FAQs

Context: This support center is framed within a broader thesis on Addressing heteroscedasticity in untargeted metabolomics datasets research. It provides targeted assistance for implementing advanced statistical models that account for non-constant variance.

Frequently Asked Questions (FAQs)

Q1: In my untargeted metabolomics data, why does applying a standard linear model (like ordinary limma) without considering heteroscedasticity lead to problematic results?

A: Untargeted metabolomics data exhibit a strong mean-variance relationship, where high-abundance metabolites tend to have higher variances. Ignoring this heteroscedasticity inflates false discovery rates for low-abundance compounds and reduces power for high-abundance ones. Standard linear models assume constant variance; violating this assumption leads to biased standard errors and unreliable p-values.

Q2: When should I choose voom/limma-voom over a negative binomial-based tool like DESeq2 for my metabolomics data?

A: limma-voom is recommended when your data is log-transformed and approximately continuous (common in LC-MS metabolomics after preprocessing). It models the mean-variance trend non-parametrically. Principles from DESeq2 (parametric dispersion estimation and shrinkage) are highly beneficial for raw, count-like data (e.g., from spectral binning), but DESeq2 itself is optimized for RNA-seq. For metabolomics, consider analogous tools like propr or DESeq2-inspired workflows adapted for metabolomics counts.

Q3: The voom plot shows a non-monotonic or unclear mean-variance trend. What does this indicate and how should I proceed?

A: This typically indicates issues in data preprocessing. Potential causes and solutions are below.

| Potential Cause | Diagnostic Check | Recommended Action |

|---|---|---|

| Incomplete Normalization | Plot PCA before/after normalization. | Apply robust normalization (e.g., Quantile, Cyclic LOESS). For metabolomics, consider Probabilistic Quotient Normalization (PQN). |

| Presence of Excessive Missing Values | Calculate % missing per feature. | Use metabolite-specific missing value imputation (e.g., k-NN, BPCA), not mean imputation. Filter features with >30% missing. |

| Outlier Samples | Check sample-wise clustering & Cook's distance. | Remove severe outliers and re-normalize. |

| Data Not Log-Transformed | Check data distribution. | Apply a log2(x + c) transformation, where c is a small pseudo-count. |
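For readers unfamiliar with the Probabilistic Quotient Normalization mentioned in the table, the following is a minimal sketch of its core idea: each sample is divided by the median of its feature-wise quotients against a reference spectrum. The function name `pqn_normalize` and the toy intensities are assumptions for illustration, the reference is taken here as the feature-wise median across samples, and the optional preliminary total-area normalization is omitted.

```python
from statistics import median

def pqn_normalize(samples):
    """PQN sketch. samples: one list of positive feature intensities per sample."""
    n_features = len(samples[0])
    # Reference spectrum: feature-wise median across all samples.
    reference = [median(s[j] for s in samples) for j in range(n_features)]
    normalized = []
    for s in samples:
        quotients = [s[j] / reference[j] for j in range(n_features) if reference[j] > 0]
        factor = median(quotients)  # most probable dilution factor for this sample
        normalized.append([v / factor for v in s])
    return normalized

base = [100.0, 250.0, 40.0, 900.0]
samples = [base, [2 * v for v in base], [0.5 * v for v in base]]
normalized = pqn_normalize(samples)
```

In this toy case the three samples differ only by a dilution factor, so after normalization they collapse onto the same profile.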

Q4: After running DESeq2-inspired analysis on my metabolomic counts, many metabolites have NA values for p-values. Why?

A: This occurs during dispersion estimation. Common reasons for metabolomics data:

| Reason | Explanation & Fix |

|---|---|

| Zero-Inflation | Many metabolites have zero counts in most samples. Fix: Apply a more stringent prevalence filter (keep features present in >50% of samples per group). |

| Extreme Outliers | A single sample has an extreme count for a metabolite. Fix: Review cooksCutoff argument and visualize Cook's distances. |

| Low Mean Counts | The mean normalized count is extremely low. Fix: Increase pre-filtering; keep features with at least e.g., 10 total counts across all samples. |

| All Replicates Identical | No variation across replicates for a condition. Fix: This is a biological/design issue; exclude the feature. |

Q5: How can I validate that my heteroscedasticity-conscious model (e.g., voom) has adequately addressed the variance issue?

A: Use post-model diagnostic plots.

- Residual vs. Fitted Plot: Points should be randomly scattered around zero with no funnel shape.

- QQ-Plot of Standardized Residuals: Points should closely follow the diagonal line.

- Check `voom` Weights: The final `limma` model uses precision weights. Ensure weights are being used in the `lmFit` call (`weights=object$weights`). High-quality data will show a clear, smooth mean-variance trend in the initial `voom` plot.
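A numerical companion to the residual-vs-fitted plot can be helpful when screening many features. The sketch below is a crude Goldfeld-Quandt-style check, not part of limma: it sorts residuals by fitted value and compares the residual variance in the upper half against the lower half. The function name `residual_variance_ratio` and the toy values are assumptions for illustration.

```python
from statistics import variance

def residual_variance_ratio(fitted, residuals):
    """Ratio of residual variance in the high-fitted half to the low-fitted half.

    Values far from 1 suggest that a funnel shape remains in the residuals.
    """
    pairs = sorted(zip(fitted, residuals))
    half = len(pairs) // 2
    low = [r for _, r in pairs[:half]]
    high = [r for _, r in pairs[-half:]]
    return variance(high) / variance(low)

# Toy example: residual spread grows tenfold with the fitted value.
fitted = [1, 2, 3, 4, 5, 6, 7, 8]
residuals = [0.1, -0.1, 0.1, -0.1, 1.0, -1.0, 1.0, -1.0]
ratio = residual_variance_ratio(fitted, residuals)
```

A ratio near 1 is consistent with stabilized variance; what counts as "far from 1" depends on sample size, so this is best treated as a screening aid rather than a formal test.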

Experimental Protocols

Protocol 1: Implementing limma-voom for LC-MS Log-Transformed Metabolomics Data

Objective: To perform differential analysis while modeling the mean-variance relationship.

- Input Data Preparation: Start with a peak intensity matrix (features × samples) that has been preprocessed (noise-filtered, missing value imputed, normalized, and log2-transformed).

- Design Matrix: Define the design matrix using `model.matrix()` based on your experimental groups (e.g., Case vs. Control).

- Voom Transformation: Apply the `voom()` function to the data and design matrix.

  - Critical Step: Examine the generated plot. A clear, decreasing mean-variance trend confirms heteroscedasticity is present.

  - The function outputs `E` (a new expression matrix) and `weights`.

  - Note: `voom()` was designed for count data and applies its own log2 transformation internally. For a matrix that is already log2-transformed, limma's `vooma()` plays the analogous role.

- Linear Modeling: Fit a weighted linear model using `lmFit(object$E, design, weights=object$weights)`.

- Empirical Bayes Moderation: Apply shrinkage to the feature variances with `eBayes()`.

- Results Extraction: Use `topTable()` to extract differentially abundant metabolites, sorted by adjusted p-value (FDR).
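To make the weighted linear modeling step more concrete, here is a small Python sketch of a weighted two-group comparison for a single feature. It is not limma: it performs only the weighted least-squares fit for a Case vs. Control design and omits the empirical Bayes moderation entirely. The function name `weighted_two_group_t` and the toy values are assumptions for illustration.

```python
import math

def weighted_two_group_t(y, group, w):
    """Weighted least-squares t-statistic for a two-group design.

    y: log-intensities for one feature; group: 0/1 labels; w: precision weights.
    """
    wy = {0: 0.0, 1: 0.0}
    wsum = {0: 0.0, 1: 0.0}
    for yi, gi, wi in zip(y, group, w):
        wy[gi] += wi * yi
        wsum[gi] += wi
    means = {g: wy[g] / wsum[g] for g in (0, 1)}   # weighted group means
    rss = sum(wi * (yi - means[gi]) ** 2 for yi, gi, wi in zip(y, group, w))
    s2 = rss / (len(y) - 2)                        # residual variance, 2 parameters
    se = math.sqrt(s2 * (1.0 / wsum[0] + 1.0 / wsum[1]))
    return (means[1] - means[0]) / se

y = [1.0, 2.0, 3.0, 4.0, 5.0, 6.0]
group = [0, 0, 0, 1, 1, 1]
t_equal = weighted_two_group_t(y, group, [1.0] * 6)
t_weighted = weighted_two_group_t(y, group, [1.0, 1.0, 0.2, 1.0, 1.0, 0.2])
```

With equal weights the statistic reduces to the ordinary pooled two-sample t-statistic; unequal weights let observations judged more precise contribute more to both the group means and the variance estimate.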

Protocol 2: Applying DESeq2 Principles to Untargeted Metabolomics Count Data

Objective: To adapt count-based dispersion estimation and shrinkage for metabolomic data from spectral bins or peaks.

- Input Data: Use a raw, non-normalized count matrix (e.g., integrated peak areas binned into discrete "counts"). Do not log-transform.

- Metadata: Prepare a `colData` data frame describing samples.

- DESeqDataSet: Create a `DESeqDataSetFromMatrix(countData, colData, ~group)`.

- Pre-Filtering: Remove metabolites with very low counts: `dds <- dds[rowSums(counts(dds)) >= 10, ]`.

- Dispersion Estimation (Core):

  - Estimate gene-wise dispersions: `estimateDispersions(dds)`.

  - DESeq2 fits a parametric curve (mean vs. dispersion) and shrinks gene-wise estimates towards this curve.

  - Troubleshooting: If the dispersion plot looks unstable, increase the `minReplicatesForReplace` argument or apply more aggressive pre-filtering.

- Negative Binomial GLM Fitting: Perform Wald test or LRT with `DESeq(dds)`.

- Results: Extract results with `results(dds)`. Shrunken log2 fold changes can be obtained using `lfcShrink()`.
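To give a feel for what "dispersion" means in the estimation step, the sketch below computes a rough method-of-moments estimate under the negative binomial variance model Var = μ + α·μ². This is deliberately simplistic and is not DESeq2's estimator, which uses likelihood-based fitting, a fitted trend, and shrinkage. The function name `moment_dispersion` is hypothetical, and the counts are assumed to be already normalized.

```python
from statistics import mean, variance

def moment_dispersion(counts):
    """Method-of-moments dispersion: alpha = (var - mean) / mean**2, floored at 0."""
    m = mean(counts)
    v = variance(counts)
    if m == 0:
        return 0.0
    return max(0.0, (v - m) / (m ** 2))

overdispersed = moment_dispersion([10, 20, 30])   # variance well above the mean
no_spread = moment_dispersion([5, 5, 5])          # no variation across replicates
```

A dispersion of zero corresponds to Poisson-like variation; larger values indicate that variance grows faster than the mean, which is the count-data counterpart of heteroscedasticity.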

Visualizations

Title: limma-voom workflow for metabolomics

Title: Problem & goal of variance stabilization

The Scientist's Toolkit: Key Research Reagent Solutions

| Item / Solution | Function in Heteroscedasticity-Conscious Analysis |

|---|---|

| R/Bioconductor (limma, DESeq2, statTarget) | Core statistical software packages for implementing weighted linear models (limma-voom) and negative binomial GLMs (DESeq2 principles). |

| Probabilistic Quotient Normalization (PQN) | Robust normalization method for metabolomics that reduces heteroscedasticity introduced by technical variation. |

| k-Nearest Neighbors (k-NN) Imputation | Method for missing value imputation that preserves the underlying data structure and variance characteristics better than simple mean imputation. |

| sva Package (ComBat) | Used for batch effect correction, which if unaddressed, can create complex, confounding mean-variance structures. |

| MetaboAnalyst | Web-based platform that incorporates some variance-stabilizing transformations and advanced statistical modules for metabolomics. |

| Quality Control (QC) Pool Samples | Injected repeatedly throughout the LC-MS run to monitor technical variance, essential for assessing data quality prior to modeling. |

| Internal Standard Mix (ISTD) | A set of stable isotope-labeled compounds used for signal correction and to help control variance across samples. |

Pitfalls and Best Practices: Optimizing Your Heteroscedasticity Correction Pipeline

Technical Support Center: Troubleshooting & FAQs

Frequently Asked Questions (FAQs)

Q1: My LC-MS data has many zeros after peak picking. Are these true biological absences or technical non-detects? A: In untargeted metabolomics, most zeros are "Non-Detects" (NDs) due to signal falling below the instrument's limit of detection (LOD), not true biological zeros. Distinguishing them is critical. Protocol: Run a dilution series of a pooled QC sample. Plot peak area vs. concentration. The point where the signal consistently disappears is your experimental LOD for that feature. Values below this LOD in your study samples should be treated as NDs.

Q2: How should I handle these non-detect values before log-transformation to address heteroscedasticity? A: Direct log-transformation of data with zeros is invalid. You must first impute or replace these values. A common method is using a value derived from the LOD. Protocol: 1) Calculate the LOD for each feature via the dilution experiment. 2) For each non-detect, replace the zero with LOD/√2 or LOD/2. 3) Proceed with your chosen transformation (e.g., log, power).
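The replacement-then-transform sequence described in Q2 can be sketched as follows. The helper name `impute_and_log2` is hypothetical, non-detects are assumed to be encoded as zeros or `None`, and the LOD is assumed to be known for the feature in question.

```python
import math

def impute_and_log2(values, lod, divisor=math.sqrt(2)):
    """Replace non-detects with LOD/divisor, then apply a log2 transformation.

    divisor=sqrt(2) gives LOD/sqrt(2); pass divisor=2 for LOD/2.
    """
    fill = lod / divisor
    return [math.log2(fill if (v is None or v <= 0) else v) for v in values]

# One feature with an LOD of 4 intensity units and two non-detects.
raw = [0, 8.0, 16.0, None]
logged = impute_and_log2(raw, lod=4.0, divisor=2)
```

Keeping the divisor as a parameter makes it straightforward to check how sensitive downstream results are to the choice between LOD/√2 and LOD/2.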

Q3: I used LOD/2 imputation, but my PCA plot is still dominated by high-variance, low-abundance metabolites. What went wrong? A: This is a classic sign of residual heteroscedasticity. The imputation method may not be sufficient. Consider a two-step approach: First, impute with a minimal value (LOD/2). Second, apply a more aggressive variance-stabilizing transformation like the Generalized Log (glog) transform, which behaves like a log transform at high intensities but stays approximately linear near zero, so it does not inflate the variance of low-intensity values.
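A minimal sketch of one common parameterization of the glog transform is shown below. Several variants exist in the literature, so the exact form and the name of the tuning parameter `lam` should be treated as assumptions; in practice `lam` is usually estimated from technical replicates rather than set by hand.

```python
import math

def glog2(x, lam):
    """Generalized log (base 2): log2((x + sqrt(x**2 + lam)) / 2).

    Approaches log2(x) for x much larger than sqrt(lam) and stays finite at x = 0.
    """
    return math.log2((x + math.sqrt(x * x + lam)) / 2.0)

lam = 4.0                      # illustrative value only
at_zero = glog2(0.0, lam)      # finite, unlike log2(0)
at_high = glog2(1e6, lam)      # practically identical to log2(1e6)
```

Because the transform is defined at zero, it can also reduce how much the result depends on the specific value substituted for non-detects.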

Q4: Does the choice of zero-handling method affect downstream statistical tests? A: Absolutely. Inappropriate handling inflates type I/II errors. Protocol for Validation: 1) Simulate a dataset with known true differences and introduced non-detects. 2) Apply different zero-handling methods (e.g., LOD-based imputation, k-NN imputation, QRILC). 3) Perform a t-test/ANOVA. Compare the false positive/negative rates and the effect size estimates to the known truth. The optimal method minimizes error rates.

Q5: Can I simply remove all features with missing values? A: This is not recommended as it can bias your results, removing potentially important low-abundance metabolites and distorting biological conclusions. Reserve removal for features where the proportion of missing values is very high (e.g., >80%) and shows no association with the sample group, since group-linked missingness may itself be biologically informative.

Experimental Protocols

Protocol 1: Determining Feature-Specific Limits of Detection (LOD)

- Sample Prep: Create a pooled Quality Control (QC) sample from all study samples.

- Dilution Series: Serially dilute the QC sample (e.g., 1:2, 1:4, 1:8, 1:16) with your blank solvent.

- Acquisition: Inject each dilution in triplicate in a randomized sequence.

- Data Processing: Process raw files with your standard peak picking/alignment workflow.

- Calculation: For each metabolic feature, plot the mean peak area (Y) against the dilution factor/concentration (X). Fit a linear regression. The LOD is calculated as:

LOD = 3.3 * (Std Error of Regression) / Slope.
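The LOD calculation can be sketched for a single feature as follows. The function name `lod_from_calibration` and the toy data are assumptions for illustration; `x` is taken to be the relative concentration (the inverse of the dilution factor), and the standard error of regression is computed as the residual standard deviation with n - 2 degrees of freedom.

```python
import math

def lod_from_calibration(x, y):
    """LOD = 3.3 * (standard error of regression) / slope for one feature."""
    n = len(x)
    x_bar = sum(x) / n
    y_bar = sum(y) / n
    sxx = sum((xi - x_bar) ** 2 for xi in x)
    sxy = sum((xi - x_bar) * (yi - y_bar) for xi, yi in zip(x, y))
    slope = sxy / sxx
    intercept = y_bar - slope * x_bar
    ssr = sum((yi - (intercept + slope * xi)) ** 2 for xi, yi in zip(x, y))
    se_regression = math.sqrt(ssr / (n - 2))
    return 3.3 * se_regression / slope

# Toy dilution series: relative concentration vs. mean peak area (arbitrary units).
concentration = [1.0, 2.0, 3.0, 4.0]
mean_area = [2.1, 3.9, 6.1, 7.9]
lod = lod_from_calibration(concentration, mean_area)
```

The result is expressed in the same units as `x`, so it needs to be mapped back to a peak area through the fitted line before being compared with study-sample intensities.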

Protocol 2: Evaluating Zero-Handling Methods via Simulation

- Simulate Ground Truth: Generate a dataset (e.g., 100 samples, 500 features) with a known distribution (log-normal) and introduce known differential abundances for 10% of features.

- Introduce Non-Detects: Set all values below a simulated LOD threshold to zero, mimicking realistic missing-not-at-random (MNAR) patterns in which missingness depends on the intensity itself.

- Apply Methods: Process the corrupted dataset using different imputation strategies (see Table 1).

- Benchmark: Perform statistical analysis (e.g., fold-change, t-test) on each processed dataset. Compare outcomes (p-value distribution, ROC curves) to the ground truth.
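The simulation protocol can be prototyped with the standard library alone. The sketch below is one possible setup under stated assumptions: 50 samples per group, a log2 fold change of 1 for the differential features, a fixed LOD, LOD/2 imputation as the single method under evaluation, and a Welch t-statistic cutoff in place of a full p-value and ROC analysis. All function names and parameter values are hypothetical.

```python
import math
import random
from statistics import mean, stdev

def simulate(n_per_group=50, n_features=500, frac_diff=0.10, log2_fc=1.0, seed=1):
    """Log-normal intensities; the first frac_diff of features are truly different."""
    rng = random.Random(seed)
    n_diff = round(n_features * frac_diff)
    data, truth = [], []
    for j in range(n_features):
        mu = rng.uniform(5, 12)  # feature baseline on the log2 scale
        shift = log2_fc if j < n_diff else 0.0
        ctrl = [2 ** rng.gauss(mu, 0.5) for _ in range(n_per_group)]
        case = [2 ** rng.gauss(mu + shift, 0.5) for _ in range(n_per_group)]
        data.append((ctrl, case))
        truth.append(j < n_diff)
    return data, truth

def censor(values, lod):
    """MNAR non-detects: anything below the LOD is recorded as zero."""
    return [v if v >= lod else 0.0 for v in values]

def impute_half_lod(values, lod):
    return [v if v > 0 else lod / 2 for v in values]

def welch_t(a, b):
    se2 = stdev(a) ** 2 / len(a) + stdev(b) ** 2 / len(b)
    return 0.0 if se2 == 0 else (mean(b) - mean(a)) / math.sqrt(se2)

def benchmark(data, truth, lod, t_cutoff=3.0):
    """Return (true positive rate, false positive rate) for LOD/2 imputation."""
    calls = []
    for ctrl, case in data:
        a = [math.log2(v) for v in impute_half_lod(censor(ctrl, lod), lod)]
        b = [math.log2(v) for v in impute_half_lod(censor(case, lod), lod)]
        calls.append(abs(welch_t(a, b)) > t_cutoff)
    tp = sum(c and t for c, t in zip(calls, truth))
    fp = sum(c and not t for c, t in zip(calls, truth))
    n_true = sum(truth)
    return tp / n_true, fp / (len(truth) - n_true)

data, truth = simulate()
tpr, fpr = benchmark(data, truth, lod=2 ** 6)
```

To compare methods, swap `impute_half_lod` for another strategy and rerun `benchmark` on the same simulated data, so that differences in the error rates can be attributed to the zero-handling step alone.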

Data Presentation

Table 1: Comparison of Common Zero-Handling & Transformation Methods for Heteroscedasticity

| Method | Core Principle | Best For | Key Advantage | Key Limitation | Impact on Heteroscedasticity |

|---|---|---|---|---|---|