From Raw Data to Biological Insight: A Step-by-Step Guide to Modern Metabolomics Data Preprocessing

Abstract

This comprehensive guide provides researchers, scientists, and drug development professionals with a structured framework for metabolomics data preprocessing. The article covers foundational concepts of raw data, explores essential methodologies from peak picking to normalization, addresses common pitfalls and optimization strategies, and compares leading software and validation approaches. The goal is to equip practitioners with best practices to transform complex spectral data into reliable, biologically interpretable results for robust biomarker discovery and pathway analysis.

Demystifying Raw Metabolomics Data: Understanding Your Starting Point

Best-practice preprocessing of metabolomics data starts with a rigorous understanding of the raw spectral signal. Mass spectrometry (MS) and nuclear magnetic resonance (NMR) spectroscopy are the two pillars of high-throughput metabolomic analysis. The raw data from these instruments are complex: the analytical signal of interest (peaks) is obscured by systematic and random artifacts, primarily noise and baseline drift. Effective preprocessing, which is critical for accurate biological interpretation in drug development and biomarker discovery, requires a working knowledge of this spectral anatomy.

Core Components of a Raw Spectrum

The Analytical Signal: Peaks

A peak is the localized increase in signal intensity corresponding to the detection of an ion (in MS) or a nucleus (in NMR). Its characteristics are fundamental for compound identification and quantification.

Peak Attributes:

- Centroid / m/z (MS) or Chemical Shift δ (NMR): The location on the x-axis, the primary identifier.

- Amplitude / Intensity (Height): The signal strength at the peak maximum, often related to concentration.

- Area / Integral: The total area under the peak curve, a more robust measure of abundance.

- Full Width at Half Maximum (FWHM): A measure of peak width, indicating resolution and possible co-elution/overlap.

- Shape: Ideal peaks are symmetrical (e.g., Gaussian or Lorentzian). Deviations indicate issues like peak tailing in chromatography or magnetic field inhomogeneity in NMR.
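The attributes listed above can be computed directly from a sampled peak. The following is a minimal sketch, assuming a single, baseline-corrected peak whose window contains both flanks; the function name `peak_attributes` and its return keys are illustrative, not part of any metabolomics library.

```python
import numpy as np

def peak_attributes(x, y):
    """Return position, height, area, and FWHM of one baseline-corrected peak.

    Assumes x is increasing and the window contains both half-height crossings.
    """
    x = np.asarray(x, dtype=float)
    y = np.asarray(y, dtype=float)
    apex = int(np.argmax(y))
    height = y[apex]
    # Trapezoidal integration of the sampled peak
    area = float(np.sum(0.5 * (y[1:] + y[:-1]) * np.diff(x)))
    half = height / 2.0
    # Walk outward from the apex until the signal drops to half height
    left = apex
    while left > 0 and y[left] > half:
        left -= 1
    right = apex
    while right < len(y) - 1 and y[right] > half:
        right += 1
    # Linear interpolation of the two half-height crossings
    x_left = np.interp(half, [y[left], y[left + 1]], [x[left], x[left + 1]])
    x_right = np.interp(half, [y[right], y[right - 1]], [x[right], x[right - 1]])
    return {
        "position": float(x[apex]),
        "height": float(height),
        "area": area,
        "fwhm": float(x_right - x_left),
    }
```

For a Gaussian peak with unit height and unit standard deviation, this should return a FWHM close to 2.355 and an area close to 2.507, which is a convenient sanity check before applying it to real data.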

The Unwanted Background: Noise

Noise is the stochastic, high-frequency fluctuation superimposed on the true signal. It limits the detection of low-abundance metabolites and the precision of quantification.

Types of Noise:

- Chemical Noise: Arises from contaminants, column bleed (LC-MS), or solvent impurities.

- Instrumental Noise: Includes electronic (Johnson) noise, detector shot noise, and source instability.

- Fundamental Noise: In NMR, this includes thermal noise from the coil and sample.

The Signal-to-Noise Ratio (SNR) is the key metric, defined as the peak height divided by the standard deviation of the noise. A common threshold for peak detection is SNR ≥ 3.

The Systematic Drift: Baseline

The baseline is the low-frequency, non-analytical background upon which peaks and noise rest. An ideal baseline is flat and at zero intensity.

Common Baseline Artifacts:

- Offset: A constant vertical displacement from zero.

- Drift: A slow, monotonic increase or decrease across the spectral range (common in GC-MS due to column temperature programming).

- Curvature / Warbling: Complex, non-linear undulations, often seen in NMR due to imperfect solvent suppression or in MS from ion source instability.

Quantitative Comparison of MS and NMR Spectral Features

Table 1: Characteristic Parameters of Raw MS and NMR Spectral Data

| Feature | Mass Spectrometry (MS) | Nuclear Magnetic Resonance (NMR) |

|---|---|---|

| X-Axis | Mass-to-Charge Ratio (m/z) | Chemical Shift (δ, ppm) |

| Peak Shape | Near-Gaussian (LC-MS) / Asymmetric tailing possible | Lorentzian or mixed Lorentzian-Gaussian |

| Dynamic Range | Very High (≥ 10⁵) | Moderate (10² - 10⁴) |

| Typical SNR Range | 10 - 10⁵ (instrument dependent) | 100 - 10,000 (for 1D ¹H) |

| Major Noise Source | Electronic & Shot Noise (Detector), Chemical Background | Thermal Noise (Coil), Digital Quantization |

| Baseline Artifact | Prominent drift, especially in GC-MS; offset | Pronounced curvature from solvent signal; phase distortion |

| Key Resolution Metric | Resolution at a given m/z (e.g., FWHM) | Spectral Width / Number of Data Points; Linewidth at half-height |

Experimental Protocols for Assessing Spectral Quality

Protocol 1: Measuring Signal-to-Noise Ratio (SNR) in a ¹H NMR Spectrum

- Data Acquisition: Acquire a standard 1D ¹H NMR spectrum of a reference sample (e.g., 1 mM sucrose in D₂O) with 128 scans.

- Region Selection: In processing software (e.g., MestReNova, TopSpin), identify a well-resolved, representative singlet peak.

- Noise Measurement: Select a region of the spectrum (≥ 1000 data points) known to contain only noise (e.g., δ 9.5 - 10.0 ppm for aqueous samples).

- Calculation: Compute the standard deviation (σ) of the intensity values in the noise region. Measure the peak height (H) from the baseline. SNR = H / σ.

- Reporting: Report SNR alongside acquisition parameters (field strength, probe, number of scans, temperature).
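The calculation step of Protocol 1 can be sketched in a few lines of NumPy. This is an illustration under stated assumptions: the spectrum is already exported as two arrays (chemical shift and intensity), the baseline is approximately flat so that the mean of the noise region can serve as the local baseline, and the function name `snr_from_spectrum` is hypothetical.

```python
import numpy as np

def snr_from_spectrum(ppm, intensity, peak_window, noise_window):
    """SNR = H / sigma, with H measured from the baseline of the noise region."""
    ppm = np.asarray(ppm, dtype=float)
    intensity = np.asarray(intensity, dtype=float)

    def in_window(window):
        lo, hi = min(window), max(window)
        return (ppm >= lo) & (ppm <= hi)

    noise = intensity[in_window(noise_window)]
    baseline = noise.mean()        # assumption: flat baseline across the spectrum
    sigma = noise.std(ddof=1)      # standard deviation of the noise region
    height = intensity[in_window(peak_window)].max() - baseline
    return float(height / sigma)
```

Note that some vendor packages use a peak-to-peak noise definition instead of the standard deviation, so values from this sketch are not necessarily comparable with those reported by acquisition software.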

Protocol 2: Characterizing Baseline Drift in GC-MS Data

- Run a Blank: Perform a GC-MS run with solvent only, using identical method parameters (temperature gradient, flow rate).

- Data Extraction: Export the Total Ion Chromatogram (TIC) intensity values over time.

- Peak-Free Region Identification: Visually or algorithmically identify time segments in the sample run TIC with no detectable peaks (confirmed by blank comparison).

- Trend Analysis: Fit a polynomial (typically 1st to 5th order) or a loess smoother to the intensity values in these peak-free regions. The coefficients of the polynomial or the smoothed curve define the baseline drift.

- Quantification: Report the maximum absolute deviation of the fitted baseline from the zero or initial intensity level.
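The trend-analysis and quantification steps of Protocol 2 might look like the sketch below. It assumes the TIC has been exported as time and intensity arrays and that the peak-free regions are supplied as a boolean mask; `fit_baseline` is an illustrative name, and the deviation is reported relative to the initial baseline level, which is one of the two options the protocol mentions.

```python
import numpy as np
from numpy.polynomial import polynomial as P

def fit_baseline(time, tic, peak_free_mask, order=3):
    """Fit a polynomial baseline to the peak-free TIC regions.

    Returns the baseline evaluated at every time point and its maximum
    absolute deviation from the initial baseline level.
    """
    time = np.asarray(time, dtype=float)
    tic = np.asarray(tic, dtype=float)
    mask = np.asarray(peak_free_mask, dtype=bool)
    # Rescale time to [-1, 1] to keep the higher-order fit well conditioned
    t = 2.0 * (time - time.min()) / (time.max() - time.min()) - 1.0
    coeffs = P.polyfit(t[mask], tic[mask], order)
    baseline = P.polyval(t, coeffs)
    max_deviation = float(np.max(np.abs(baseline - baseline[0])))
    return baseline, max_deviation
```

Low polynomial orders are usually the safer starting point, because a high-order fit can follow broad peaks that were not excluded by the mask.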

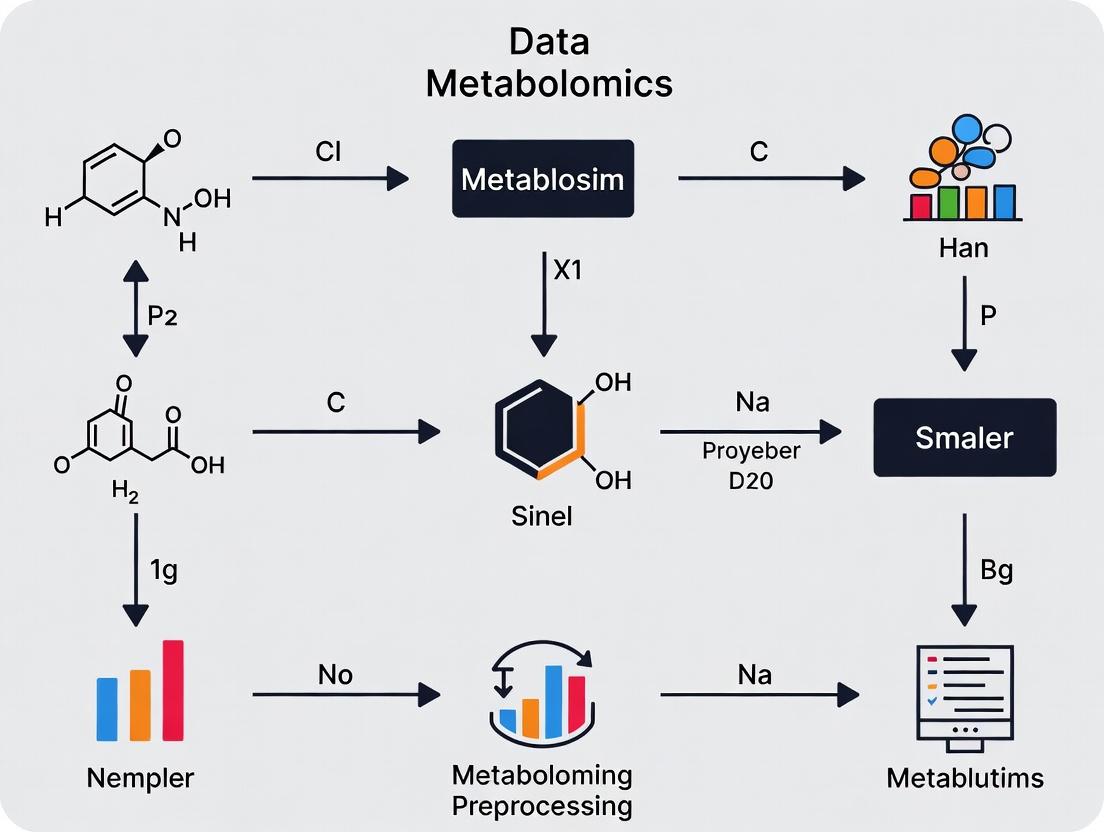

Visualizing the Metabolomics Preprocessing Workflow Context

Diagram: Spectral anatomy informs the preprocessing workflow.

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 2: Key Reagents and Materials for Metabolomic Spectral Quality Control

| Item Name | Function in Spectral Analysis | Typical Application |

|---|---|---|

| Deuterated Solvents (e.g., D₂O, CD₃OD, CDCl₃) | Provides NMR lock signal; minimizes solvent interference in ¹H spectrum. | NMR sample preparation for solvent suppression and stable frequency locking. |

| Chemical Shift Reference Standards (e.g., TMS, DSS-d₆) | Provides a known reference peak (0 ppm) for chemical shift calibration in NMR. | Added to every NMR sample to ensure consistent, accurate peak assignment. |

| MS Calibration Standards | Provides known m/z ions for mass accuracy calibration and instrument tuning. | Routinely run to calibrate MS (e.g., ESI Tuning Mix for LC-MS, perfluorotributylamine for GC-MS). |

| NIST/EPA/NIH Mass Spectral Library | Database of reference electron ionization (EI) mass spectra for compound identification. | Used to match acquired GC-MS spectra for metabolite annotation. |

| Processed Water & LC-MS Grade Solvents | Minimizes chemical noise and background ions from impurities. | Essential for preparing mobile phases and samples in LC-MS to reduce baseline artifacts. |

| Quality Control (QC) Pool Sample | A homogeneous mixture of all study samples used to monitor instrument stability. | Injected repeatedly throughout an LC/GC-MS batch to assess signal drift, noise, and reproducibility. |

| Standard Reference Material (e.g., NIST SRM 1950) | A plasma sample with certified metabolite concentrations. | Used as a benchmark to validate entire workflow, from preprocessing to quantification. |

In any metabolomics preprocessing workflow, the pre-analytical phase is paramount. The quality, reliability, and biological interpretability of the final data are largely determined by decisions and actions taken before instrumental analysis. This section details the core technical pillars of this phase: robust sample preparation, rigorous quality control (QC), and comprehensive metadata collection.

Sample Preparation: From Biological System to Analytical Sample

The goal is to rapidly inactivate metabolism, extract a broad range of metabolites with minimal bias, and prepare samples in a form compatible with the analytical platform (typically LC-MS or GC-MS).

Key Protocols

Protocol 1: Quenching and Extraction for Mammalian Cells (Dual-Phase Methanol/MTBE/Water Method)

- Reagents: -80°C 100% Methanol, Methyl-tert-butyl ether (MTBE), LC-MS grade Water.

- Procedure:

  - Rapidly aspirate culture medium.

  - Immediately add 1 mL of -80°C methanol to the plate or well. Scrape cells and transfer the suspension to a precooled 2 mL microcentrifuge tube.

  - Add 750 μL of ice-cold MTBE. Vortex vigorously for 10 seconds.

  - Add 188 μL of LC-MS grade water. Vortex for 10 seconds.

  - Centrifuge at 14,000 x g for 10 minutes at 4°C to achieve phase separation.

  - Carefully collect the upper (MTBE, lipid-rich) and lower (aqueous methanol, polar metabolite-rich) phases into separate tubes.

  - Dry under a gentle stream of nitrogen or in a vacuum concentrator.

  - Reconstitute in an appropriate solvent for analysis (e.g., 100 μL 50:50 acetonitrile:water for the aqueous phase).

Protocol 2: QC Sample Preparation (Pooled QC)

- Procedure:

- After all study samples are prepared, take an equal aliquot (e.g., 10 μL) from each.

- Combine these aliquots into a single QC pool sample.

- Inject the pooled QC repeatedly at the beginning of the sequence (typically 6-10 injections) to condition the system, then intersperse it evenly throughout the analytical run (every 4-10 study samples).
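The injection scheme described above can be turned into a run-order list programmatically. The sketch below is illustrative only: the function name `build_run_order`, the `QC_cond_` and `QC_` labels, and the decision to randomize the study samples with a fixed seed are assumptions made for the example, not requirements stated in the protocol.

```python
import random

def build_run_order(sample_ids, n_conditioning=8, qc_every=5, seed=0):
    """Conditioning QCs first, then study samples bracketed by pooled QCs."""
    rng = random.Random(seed)
    samples = list(sample_ids)
    rng.shuffle(samples)  # assumption: randomize study samples across the run

    # Conditioning injections (typically excluded from later statistics)
    order = [f"QC_cond_{i + 1:02d}" for i in range(n_conditioning)]

    qc_count = 1
    order.append(f"QC_{qc_count:02d}")  # opening QC before the first block
    for start in range(0, len(samples), qc_every):
        order.extend(samples[start:start + qc_every])
        qc_count += 1
        order.append(f"QC_{qc_count:02d}")  # closing QC after each block
    return order
```

Calling `build_run_order([f"S{i}" for i in range(1, 13)], n_conditioning=6, qc_every=5)` yields 22 injections: 6 conditioning QCs, 12 study samples, and 4 bracketing QCs, so the sequence always starts and ends on a pooled QC.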

Quantitative Considerations in Sample Preparation

Table 1: Impact of Sample Preparation Variables on Metabolite Recovery

| Variable | Typical Range/Choice | Effect on Metabolome Coverage | Best Practice Recommendation |

|---|---|---|---|

| Quenching Delay | 0 sec vs. 30 sec delay | Up to 30% change in labile metabolites (e.g., ATP, NADH) | Rapid quenching (<10 sec) using cold organic solvent. |

| Extraction Solvent | Methanol, Acetonitrile, Chloroform | Polar vs. non-polar recovery varies by >50% | Use biphasic methods (e.g., Methanol/MTBE/Water) for broad coverage. |

| Sample-to-Solvent Ratio | 1:3 to 1:10 (w/v) | Low ratios yield incomplete extraction (<70% recovery). | Optimize for tissue type; 1:10 is often a safe starting point. |

| Storage Temp (-80°C) | 1 month vs. 12 months | Degradation of certain metabolites (e.g., glutathione) can exceed 20% per year. | Analyze samples in a single batch if possible; minimize freeze-thaw cycles (<3). |

Quality Control (QC) Strategy

A multi-tiered QC system is essential to monitor and correct for instrumental drift and batch effects.

Table 2: Types of Quality Control Samples in a Metabolomics Workflow

| QC Sample Type | Composition | Primary Purpose | Frequency in Sequence |

|---|---|---|---|

| System Suitability QC | Reference compound mix | Verify instrument performance (sensitivity, resolution) at start. | Beginning of sequence. |

| Processed Blank | Extraction solvents only | Identify background & contamination from reagents/columns. | Beginning, middle, end. |

| Pooled QC (Most Critical) | Aliquot of all study samples | Monitor system stability, correct for drift, filter non-reproducible features. | Every 4-10 injections. |

| Reference/Matched Plasma | Commercially available reference material | Long-term inter-laboratory reproducibility and calibration. | Per batch/plate. |

Metadata Collection: The Foundation of Context

Comprehensive metadata must be captured using standardized reporting frameworks (e.g., the ISA-Tab format, as used for submissions to the MetaboLights repository).

Table 3: Essential Metadata Categories for Metabolomics Studies

| Category | Sub-Category Examples | Reporting Standard | Importance for Preprocessing |

|---|---|---|---|

| Study Design | Grouping, randomization, blinding. | ISA-Tab Investigation file | Defines the biological model and contrasts. |

| Sample Information | Species, tissue, time point, subject ID, dose. | ISA-Tab Sample file | Critical for batch correction and annotation. |

| Sample Preparation | Quenching method, solvent volumes, storage time. | MetaboLights Sample file | Identifies sources of technical variance. |

| Analytical Protocol | Column type, gradient, ionization mode, MS settings. | MetaboLights Assay file | Required for data alignment and integration. |

| Data Processing | Software, parameters, normalization method. | Derived data file | Ensures reproducibility of preprocessing. |

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 4: Key Reagents and Materials for Pre-Experimental Metabolomics

| Item | Function / Role | Critical Consideration |

|---|---|---|

| LC-MS Grade Solvents (Water, Methanol, Acetonitrile, Chloroform, MTBE) | Sample extraction, reconstitution, and mobile phase preparation. | Minimizes background chemical noise and ion suppression. Essential for blanks. |

| Internal Standard Mix (Isotope Labeled) | e.g., ¹³C, ¹⁵N-labeled amino acids, fatty acids. Added at quenching/extraction. | Corrects for losses during sample preparation and matrix effects during ionization. |

| Derivatization Reagents (for GC-MS) | e.g., MSTFA (N-Methyl-N-(trimethylsilyl)trifluoroacetamide), Methoxyamine. | Increases volatility and thermal stability of polar metabolites for GC-MS analysis. |

| Processed Blank Matrix | Solvent-only or charcoal-stripped biological matrix. | Serves as a negative control to identify and subtract systemic contamination. |

| Commercial Reference Plasma/Serum | e.g., NIST SRM 1950. | Provides a benchmark for inter-laboratory comparison and long-term performance monitoring. |

| Stable Isotope Tracer Compounds | e.g., ¹³C₆-Glucose, ¹⁵N-Ammonium Chloride. | Enables flux analysis to probe active metabolic pathways in the biological system. |

| Certified Vials/Inserts & Caps | Sample storage for LC/GC autosampler. | Prevents leaching of contaminants (e.g., plasticizers) that create spectral interference. |

Within the metabolomics data preprocessing workflow, the initial and critical step is the acquisition and handling of raw data files. The choice of file format directly impacts downstream processing, analysis reproducibility, and data longevity. This guide provides a technical examination of four core data file formats—mzML, mzXML, CDF, and proprietary RAW files—framed within the thesis of establishing best practices for robust metabolomics preprocessing. The selection of an appropriate format balances openness, metadata completeness, and computational efficiency, forming the foundation for reliable biological interpretation.

Technical Specifications and Comparative Analysis

The following table summarizes the key architectural and functional characteristics of the four primary mass spectrometry data formats in metabolomics.

Table 1: Comparative Analysis of Mass Spectrometry Data File Formats

| Feature | mzML | mzXML | CDF (NetCDF) | Vendor RAW Files |

|---|---|---|---|---|

| Format Type | Open, XML-based | Open, XML-based | Open, Binary (NetCDF) | Proprietary, Binary |

| Standardization | HUPO-PSI Standard | Institute for Systems Biology (Trans-Proteomic Pipeline) | ASTM standard (ANDI-MS) | Vendor-specific |

| Data Structure | Comprehensive metadata, indexed spectra | Simplified metadata, spectrum-centric | Array-oriented, time-series data | Instrument-specific raw data |

| Compression | Supported (zlib) | Supported | Not typically used | Vendor-specific, often none |

| Software Support | Universal (OpenMS, MZmine, etc.) | Widely supported | Legacy support, limited | Vendor software (e.g., Xcalibur, MassLynx); third-party access relies on vendor libraries (e.g., via ProteoWizard) |

| Primary Use Case | Current gold standard for data exchange & archiving | Legacy data exchange, simpler applications | GC-MS data, legacy LC-MS data | Initial data acquisition, vendor processing |

Table 2: Quantitative Performance Metrics (Typical Experimental Run)

| Metric | mzML (zlib compression) | mzXML (zlib compression) | CDF | Thermo .RAW |

|---|---|---|---|---|

| File Size (for 60-min LC-MS) | ~1.2 GB | ~1.5 GB | ~800 MB | ~2.0 GB |

| Write Speed | Medium | Medium-Fast | Fast | Very Fast (during acquisition) |

| Read/Parse Speed | Medium (with index) | Medium | Slow | Fast (in vendor software) |

| Metadata Completeness | 95-100% (CV-controlled) | ~70% | ~40% | 100% (instrument-specific) |

Detailed Format Architectures and Conversion Protocols

mzML: The Controlled Vocabulary Standard

mzML, governed by the HUPO Proteomics Standards Initiative (PSI), is the recommended format for data sharing and archiving. Its strength lies in its use of controlled vocabularies (CV) to annotate every instrument setting and data processing step unambiguously.

Experimental Protocol: Converting Vendor RAW to mzML Using MSConvert (ProteoWizard)

- Objective: To transform proprietary raw data into an open, standardized format with maximal metadata preservation.

- Reagents & Software: Vendor RAW file, ProteoWizard MSConvert GUI (v3.0+), sufficient disk space (2x RAW file size).

- Procedure:

  - Launch MSConvert. Add the input RAW file(s).

  - Select `mzML` as the output format.

  - In the `Filters` tab, apply:

    - `peakPicking`: Apply vendor algorithm to centroid profile data.

    - `titleMaker`: Embed original filename in spectrum titles.

  - In the `Advanced` options, set `writeIndex` to `true` for random access.

  - Set `zlib compression` to `true`.

  - Execute conversion. Validate output with `xmllint` or open in a tool like `ms-scan`.
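For batch conversion, the same settings can be passed to the `msconvert` command-line tool that ships with ProteoWizard. The wrapper below is a hedged sketch: the helper names are hypothetical, the `peakPicking` filter syntax shown is the form used by recent ProteoWizard releases and may differ in older versions, and the `titleMaker` filter is omitted because its template string depends on the downstream tool. The index is written by default, so no extra flag is passed for it.

```python
import shutil
import subprocess

def build_msconvert_command(raw_path, out_dir):
    """Assemble an msconvert call: mzML output, zlib compression, vendor centroiding."""
    return [
        "msconvert", str(raw_path),
        "--mzML",                                      # output format
        "--zlib",                                      # compress binary data arrays
        "--filter", "peakPicking vendor msLevel=1-",   # vendor centroiding, all MS levels
        "-o", str(out_dir),                            # output directory
    ]

def convert(raw_path, out_dir):
    """Run the conversion; raises if msconvert is missing or exits with an error."""
    if shutil.which("msconvert") is None:
        raise FileNotFoundError("msconvert was not found on PATH")
    subprocess.run(build_msconvert_command(raw_path, out_dir), check=True)
```

Keeping command construction separate from execution makes it easy to log the exact call alongside the converted files, which supports reproducibility.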

mzXML: The Transitional XML Format

mzXML served as a crucial transitional open format, introducing the benefits of XML structure to MS data. While largely superseded by mzML, it remains prevalent in legacy datasets and some pipelines due to its simpler schema.

CDF: The NetCDF-Based Standard for Chromatography

The CDF format used in mass spectrometry (ANDI-MS) is built on NetCDF (Network Common Data Form) and is historically significant, especially in GC-MS. It stores data as arrays (e.g., scan index, mass values, intensity values), making it efficient for sequential read/write but slow for random access.

Experimental Protocol: Reading and Processing CDF Files in Python

- Objective: Programmatically extract chromatographic and spectral data from a CDF file for custom preprocessing.

- Reagents & Software: Python 3.8+, `netCDF4` library, `numpy`, `matplotlib`.

- Procedure:

  - Import libraries: `import netCDF4 as nc, numpy as np`.

  - Load file: `dataset = nc.Dataset('chromatogram.cdf', 'r')`.

  - Inspect variables: `print(dataset.variables.keys())` to list data arrays.

  - Extract total ion chromatogram (TIC):

    - `scan_index = dataset.variables['scan_index'][:]`

    - `intensity_values = dataset.variables['intensity_values'][:]`

    - Reconstruct TIC by aggregating intensities per scan.

  - Always close the file: `dataset.close()`.
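The "aggregate intensities per scan" step is the only part of this protocol that is not a one-liner, so a sketch may help. It assumes the usual ANDI-MS layout in which `scan_index` holds the offset of each scan's first data point within the flat `intensity_values` array; the function names are illustrative, and `netCDF4` is imported lazily so the aggregation helper can be used and tested without it.

```python
import numpy as np

def tic_from_flat_arrays(scan_index, intensity_values):
    """Sum intensities per scan; scan_index[i] is the offset of scan i's first point."""
    scan_index = np.asarray(scan_index, dtype=np.int64)
    intensity_values = np.asarray(intensity_values, dtype=np.float64)
    # Scan boundaries: each scan ends where the next begins, the last at the array end
    bounds = np.append(scan_index, intensity_values.size)
    # Cumulative sums give per-scan totals and handle empty scans correctly
    csum = np.concatenate(([0.0], np.cumsum(intensity_values)))
    return csum[bounds[1:]] - csum[bounds[:-1]]

def read_tic(path):
    """Read a CDF file and return the reconstructed TIC (one value per scan)."""
    import netCDF4 as nc  # imported here so the helper above works without it
    with nc.Dataset(path, "r") as dataset:  # context manager closes the file
        scan_index = dataset.variables["scan_index"][:]
        intensity = dataset.variables["intensity_values"][:]
        return tic_from_flat_arrays(scan_index, intensity)
```

Many CDF files also store a precomputed `total_intensity` variable; where present, comparing it with the reconstructed TIC is a quick integrity check.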

Vendor RAW Files: The Proprietary Source

Vendor-specific formats (e.g., Thermo .raw, Waters .raw, Agilent .d) contain the complete, unprocessed data stream from the instrument, including all detector events and full instrument control logs. They are essential for initial processing with vendor algorithms but pose a long-term accessibility risk.

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 3: Key Software and Library Tools for Data Format Handling

| Tool / Reagent | Primary Function | Application in Preprocessing Workflow |

|---|---|---|

| ProteoWizard MSConvert | Universal format converter. | Converts proprietary RAW files to open mzML/mzXML; applies basic filters (centroiding, thresholding). |

| Thermo Fisher Scientific Freestyle | RAW file reader and parser. | Accesses .RAW files directly for quality control and metadata extraction without vendor license. |

| NetCDF Libraries (C/Fortran/Python) | Low-level CDF file I/O. | Enables custom script development for reading, writing, and validating CDF files. |

| pyOpenMS / pymzML | Python APIs for mzML. | Allows programmatic, high-level access to mzML data for building custom preprocessing pipelines. |

| Bioconductor (R) - MSnbase | R package for MS data. | Provides infrastructure for manipulating, processing, and visualizing mzML/mzXML data in a statistical environment. |

| HUPO-PSI Validator | Schema and CV validator. | Checks mzML file compliance with PSI standards, ensuring data integrity and interoperability. |

Workflow Integration and Strategic Recommendations

The optimal data preprocessing workflow must begin with a strategic decision regarding file formats. The recommended practice is a two-stage process:

- Acquisition & Primary Processing: Use vendor RAW files and software for initial instrument control, data acquisition, and vendor-specific peak picking or calibration.

- Exchange, Archiving & Secondary Analysis: Immediately convert to mzML with zlib compression and full metadata upon completion of primary processing. This mzML file becomes the shared input for all downstream open-source or commercial third-party software (e.g., MZmine, XCMS, OpenMS) for peak detection, alignment, and identification.

This approach mitigates vendor lock-in, ensures data reproducibility, and fulfills journal and repository mandates for open data formats.

Diagram 1: Metabolomics Data Flow from Acquisition to Analysis

Diagram 2: Evolution and Relationships of MS Data Formats

In metabolomics, data preprocessing is not merely a preliminary step but a critical determinant of all downstream biological interpretation and statistical inference. Metabolomics, the comprehensive analysis of small-molecule metabolites, generates complex, high-dimensional, and noisy datasets from analytical platforms such as mass spectrometry (MS) and nuclear magnetic resonance (NMR) spectroscopy. The central goal of preprocessing is to transform raw instrument data into a reliable, biologically meaningful data matrix in which the observed variance reflects true biological variation rather than technical artifact. Clean data matters because conclusions about biomarker discovery, pathway analysis, and therapeutic target identification are only as valid as the data on which they are built.

Core Preprocessing Goals and Quantitative Impact

Preprocessing aims to address specific technical variances. The quantitative impact of these steps is summarized in Table 1.

Table 1: Quantitative Impact of Key Preprocessing Steps on Data Quality

| Preprocessing Step | Primary Goal | Typical Metric for Success | Reported Impact (Range) |

|---|---|---|---|

| Peak Picking | Detect true metabolite signals from noise | Signal-to-Noise Ratio (SNR) increase | 5-20 fold SNR improvement |

| Retention Time Alignment | Correct for drifts in chromatographic separation | Reduction in RT deviation | Deviation reduced from 0.5-2 min to < 0.1 min |

| Peak Integration | Accurately quantify metabolite abundance | Coefficient of Variation (CV) for technical replicates | CV reduced from 20-30% to 5-15% |

| Normalization | Remove systematic bias (e.g., sample concentration, batch effects) | Median Fold Change of QC samples | Post-normalization, >70% of QCs within 20% of median |

| Scaling & Transformation | Prepare data for statistical analysis (e.g., achieve homoscedasticity) | Variance stabilization | Makes data conform to parametric test assumptions |

Detailed Experimental Protocols for Validation

Protocol 1: Evaluating Normalization Methods Using Pooled Quality Control (QC) Samples

- Sample Preparation: Inject a pooled QC sample (a mixture of all study samples) at regular intervals (e.g., every 5-10 samples) throughout the analytical run.

- Data Acquisition: Analyze samples using LC-MS/MS under consistent chromatographic conditions.

- Preprocessing: Apply peak picking and integration to the entire dataset.

- Normalization Testing: Apply multiple normalization methods (e.g., Probabilistic Quotient Normalization (PQN), Median Fold Change (MFC), or QC-based Robust LOESS) to the data matrix.

- Assessment: Calculate the coefficient of variation (CV) for each metabolite detected in the QC samples before and after normalization. The optimal method minimizes the median CV across all metabolites, indicating reduced technical variability.
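The normalization-testing and assessment steps can be prototyped as follows. This is a simplified sketch: it implements a basic form of Probabilistic Quotient Normalization using the feature-wise median across samples as the reference profile (a QC-derived reference is a common alternative), and it assumes a samples × features matrix of strictly positive intensities. The function names are illustrative.

```python
import numpy as np

def pqn_normalize(X, reference=None):
    """Basic PQN: divide each sample by the median of its quotients to a reference."""
    X = np.asarray(X, dtype=float)
    if reference is None:
        reference = np.median(X, axis=0)   # assumption: median profile as reference
    quotients = X / reference              # per-feature ratio to the reference
    factors = np.median(quotients, axis=1) # most probable dilution factor per sample
    return X / factors[:, None]

def median_qc_cv(X, qc_mask):
    """Median coefficient of variation (%) across features, QC samples only."""
    qc = np.asarray(X, dtype=float)[np.asarray(qc_mask, dtype=bool)]
    cv = 100.0 * qc.std(axis=0, ddof=1) / qc.mean(axis=0)
    return float(np.median(cv))
```

Computing `median_qc_cv` before and after each candidate method gives the comparison the protocol calls for; the method with the lowest post-normalization value is preferred, provided it does not also remove biological differences between study groups.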

Protocol 2: Assessing Peak Alignment Algorithm Performance

- Dataset: Use a test set where a subset of samples is analyzed with a minor, deliberate modification to chromatographic gradient conditions to induce retention time (RT) shifts.

- Reference Selection: Designate a sample with median RT properties as the reference.

- Alignment Execution: Apply alignment algorithms (e.g., correlation optimized warping (COW), dynamic time warping (DTW), or XCMS-based obiwarp).

- Performance Metrics: For a set of anchor metabolites (e.g., internal standards spiked in all samples), measure: a) the standard deviation of RT across all samples post-alignment, and b) the percentage of peaks correctly aligned within a defined RT tolerance (e.g., 0.1 min).
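Both performance metrics are straightforward to compute once the anchor retention times are tabulated. The sketch below assumes a samples × anchors matrix of post-alignment retention times in minutes, with NaN for anchors that were not detected. As a simplification, it measures deviation from the per-anchor median rather than from a designated reference sample; `alignment_metrics` is an illustrative name.

```python
import numpy as np

def alignment_metrics(rt, tolerance=0.1):
    """Return per-anchor RT standard deviation and % of peaks within tolerance."""
    rt = np.asarray(rt, dtype=float)
    detected = ~np.isnan(rt)
    target = np.nanmedian(rt, axis=0)        # assumption: median RT as alignment target
    sd_per_anchor = np.nanstd(rt, axis=0, ddof=1)
    # Undetected anchors get an infinite deviation so they never count as aligned
    deviation = np.where(detected, np.abs(rt - target), np.inf)
    pct_within = 100.0 * np.sum(deviation <= tolerance) / np.sum(detected)
    return sd_per_anchor, float(pct_within)
```

Running the function on the anchor table before and after alignment, with the same tolerance, shows how much each algorithm actually improves on the raw data.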

Logical Workflow of Metabolomics Data Preprocessing

Diagram 1: Core preprocessing workflow for metabolomics data.

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 2: Key Reagents and Materials for Metabolomics Preprocessing Validation

| Item | Function in Preprocessing Context |

|---|---|

| Deuterated Internal Standards Mix | Added to all samples pre-extraction to monitor and correct for technical variability in peak integration and instrument response. |

| Pooled Quality Control (QC) Sample | A homogenized mixture of all study samples; analyzed repeatedly to track system stability and for QC-based normalization. |

| Process Blank Solvent | A solvent-only sample; used to identify and filter out background noise and contamination peaks during data filtering. |

| Retention Time Index Markers | A series of chemically inert compounds eluting across the chromatographic run; used as landmarks for precise retention time alignment. |

| Standard Reference Material (SRM) | A well-characterized biological sample (e.g., NIST SRM 1950) used to benchmark overall preprocessing workflow performance and cross-lab reproducibility. |

| Stable Isotope-Labeled Metabolite Extracts | Used as spike-ins to evaluate the accuracy of peak deconvolution and quantification algorithms in complex biological matrices. |

Signaling Pathway of Data Quality Decisions

Diagram 2: Decision pathway for iterative preprocessing optimization.

Achieving the central goals of preprocessing—noise reduction, artifact correction, and biological signal preservation—is a non-negotiable foundation for any credible metabolomics workflow research. By implementing rigorous, QC-driven protocols, leveraging essential reagent tools for validation, and making informed decisions at each step, researchers transform volatile raw data into a robust and clean dataset. This clean data matrix is the essential substrate for all subsequent statistical and bioinformatic analyses, ultimately determining the validity and translational impact of metabolomics research in drug development and biomedical science.

Essential Tools and Platforms for Initial Data Exploration

Within the metabolomics data preprocessing workflow, initial data exploration is a critical first step that determines the direction of all subsequent analysis. This phase involves assessing data quality, identifying patterns, detecting outliers, and forming hypotheses. A rigorous, tool-driven exploration is foundational to the broader thesis of establishing best practices for robust and reproducible metabolomics research, directly impacting downstream interpretation in biomarker discovery and drug development.

Core Tools and Platforms for Exploration

The following tools are categorized by their primary function in the initial exploration of raw or minimally processed metabolomics data.

Programming Languages and Statistical Environments

- R and RStudio: The cornerstone of many bioinformatics workflows. R provides a vast ecosystem of packages specifically for high-dimensional data analysis and visualization.

- Python (Jupyter Notebooks): Increasingly dominant due to its versatility and the powerful data manipulation (pandas, NumPy) and visualization (Matplotlib, Seaborn, Plotly) libraries.

- Julia: Gaining traction for its high performance in computational science, useful for very large-scale datasets.

Specialized Metabolomics Analysis Packages

- R Packages:

  - `xcms`: The standard for LC-MS data preprocessing, also used for initial feature inspection.

  - `MetaboAnalystR`: The R backend of the web platform, enabling programmatic, reproducible exploration.

  - `ggplot2`: Essential for creating publication-quality exploratory plots (PCA, boxplots, density plots).

- Python Packages:

  - `matchms`: For processing and exploring MS/MS data.

  - `scikit-learn`: Provides essential algorithms for unsupervised exploration (PCA, clustering).

Web-Based Platforms and Workflow Systems

- MetaboAnalyst 6.0: A comprehensive web-based platform that guides users from raw data upload through statistical and functional interpretation. Its "Data Overview" module is designed specifically for initial exploration.

- Galaxy-M (Metabolomics): A workflow system that offers reproducible, tool-chained data exploration without programming.

- Workflow4Metabolomics: The online Galaxy instance tailored for metabolomics, providing curated exploration tools.

Visualization and Dashboard Tools

- Tableau / Spotfire: Used for interactive visualization of sample groups, clinical metadata, and feature intensities.

- MSnbase (R): Enables visualization of raw chromatographic and spectral data for quality assessment.

Quantitative Comparison of Core Platforms

Table 1: Comparison of Key Platforms for Initial Metabolomics Data Exploration

| Tool/Platform | Primary Interface | Key Strengths for Exploration | Learning Curve | Reproducibility Support |

|---|---|---|---|---|

| R/RStudio | Code-based | Maximum flexibility; vast package ecosystem (xcms, ggplot2); seamless for custom scripts. | Steep | High (via RMarkdown/Notebooks) |

| Python/Jupyter | Code-based (Notebook) | Excellent for integration with ML pipelines; strong data science libraries (pandas, scikit-learn). | Steep | High (via Jupyter Notebooks) |

| MetaboAnalyst 6.0 | Web-based GUI | User-friendly; all-in-one suite from upload to analysis; excellent for rapid, standardized assessment. | Low | Medium (R command history saved) |

| Galaxy-M | Web-based GUI | Promotes reproducible workflows visually; no coding required; tool provenance tracking. | Moderate | Very High (saved, shareable workflows) |

| Julia | Code-based | Superior computational speed for massive datasets; emerging package support. | Steep | High (via Pluto.jl notebooks) |

Table 2: Quantitative Analysis of Metabolomics Studies (2020-2024) Citing Exploration Tools

| Tool Category | Approx. % of Studies Using* | Most Common Use Case in Exploration | Typical Data Volume Handled |

|---|---|---|---|

| R (xcms/ggplot2) | ~65% | Chromatogram alignment, feature detection, PCA, quality control plots. | Small to Large (TB-scale possible) |

| Python (pandas/scikit-learn) | ~45% | Data table manipulation, outlier detection, clustering, integration with other 'omics. | Small to Very Large |

| MetaboAnalyst | ~35% | Initial statistical summary, univariate analysis, interactive PCA/PLS-DA. | Small to Medium (< GB) |

| Vendor Software | ~50% | First-pass visualization of raw spectra/chromatograms, peak picking. | Medium (instrument-scale) |

*Percentages exceed 100% as studies often use multiple tools.

Detailed Experimental Protocol for Initial Exploration

Protocol: Systematic Initial Exploration of Untargeted LC-MS Metabolomics Data

Objective: To perform a standardized, tool-assisted initial exploration of raw LC-MS data to assess data quality, detect technical artifacts, and inform preprocessing parameter tuning.

I. Materials and Reagent Solutions

- Raw Data Files: .mzML or .raw formats from the mass spectrometer.

- Metadata File: .csv file containing sample information (Group, Batch, Injection Order, etc.).

- Computing Environment: R (v4.3+) or Python (v3.10+) installation.

- Software: RStudio (with xcms, MSnbase, ggplot2) or Jupyter Lab (with matchms, pandas, plotly).

II. Procedure

Step 1: Data Ingestion and Spectral Visualization

- Load raw data files into the chosen environment.

- Using MSnbase (R) or equivalent, extract and plot Base Peak Chromatograms (BPCs) for representative samples from each experimental group.

- Assessment: Visually inspect BPCs for consistent retention time stability, peak shape, and signal intensity across groups.

Step 2: Non-Targeted Feature Detection (Initial Pass)

- Apply a broad feature detection algorithm (e.g., xcms::findChromPeaks with centWave).

- Use intentionally permissive parameters to capture a wide range of features without strict filtering.

- Create a feature intensity table (peaks × samples).

Step 3: Quality Control (QC) and Sample-Relationship Visualization

- Perform Principal Component Analysis (PCA) on the unfiltered feature table.

- Generate a PCA scores plot, coloring samples by:

- Experimental Group (biological hypothesis).

- Batch ID (technical artifact detection).

- Injection Order (drift assessment).

- Calculate and plot median Relative Standard Deviation (RSD%) for features in pooled QC samples, if available. Target: <20-30% RSD.
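The QC precision check in Step 3 can be sketched in a few lines. This is a minimal illustration, assuming the QC injections have already been extracted into a NumPy array of shape (injections × features); the function name `qc_rsd_percent` and the example intensities are hypothetical.

```python
import numpy as np

def qc_rsd_percent(qc_intensities):
    """Per-feature RSD (%) across pooled QC injections.

    qc_intensities: array of shape (n_qc_injections, n_features).
    """
    qc = np.asarray(qc_intensities, dtype=float)
    mean = qc.mean(axis=0)
    sd = qc.std(axis=0, ddof=1)  # sample standard deviation
    return 100.0 * sd / mean

# Hypothetical QC block: 4 injections x 3 features
qc = np.array([
    [100.0, 200.0, 50.0],
    [110.0, 210.0, 90.0],
    [90.0, 190.0, 20.0],
    [100.0, 200.0, 40.0],
])
rsd = qc_rsd_percent(qc)
median_rsd = float(np.median(rsd))   # summary value to report
n_pass = int((rsd < 30.0).sum())     # features meeting the <30% target
```

In this toy block the third feature varies far more than the others and would be a candidate for removal in later filtering.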

Step 4: Distribution and Outlier Analysis

- Generate boxplots or kernel density plots of log-transformed feature intensities per sample.

- Calculate robust distance measures (e.g., Mahalanobis distance from PCA) to flag potential outlier samples.

- Use hierarchical clustering heatmaps to visualize global sample similarity.
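As a simplified stand-in for the distance-based outlier check in Step 4, the sketch below flags samples whose median log2 intensity deviates strongly from the cohort, using a robust z-score built on the median absolute deviation (MAD). This is an assumption-laden simplification rather than a Mahalanobis distance on PCA scores; the function name, the 3.5 cutoff, and the example data are illustrative.

```python
import numpy as np

def flag_intensity_outliers(intensities, cutoff=3.5):
    """Flag samples whose median log2 intensity is far from the cohort.

    intensities: array (n_samples, n_features) of strictly positive values.
    Returns (robust_z, is_outlier).
    """
    log_x = np.log2(np.asarray(intensities, dtype=float))
    sample_median = np.median(log_x, axis=1)
    center = np.median(sample_median)
    mad = np.median(np.abs(sample_median - center))
    if mad == 0:
        raise ValueError("MAD is zero; robust z-scores are undefined")
    robust_z = 0.6745 * (sample_median - center) / mad
    return robust_z, np.abs(robust_z) > cutoff

# Hypothetical data: five similar samples and one low-intensity injection
log_medians = np.array([10.0, 10.1, 9.9, 10.2, 9.8, 6.0])
log_matrix = log_medians[:, None] + np.array([-1.0, 0.0, 1.0])
intensities = 2.0 ** log_matrix
robust_z, is_outlier = flag_intensity_outliers(intensities)
```

Flagged samples should be inspected (for example in the boxplots described above) before any decision to exclude them.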

Step 5: Documentation and Parameter Refinement

- Record all observations from visualizations (e.g., "Batch effect visible in PC2", "Sample X is an intensity outlier").

- Use these insights to refine parameters for the subsequent, rigorous preprocessing step (e.g., adjusting alignment tolerance, setting outlier handling flags, defining batch correction need).

Visualizing the Exploration Workflow

Title: Metabolomics Initial Data Exploration Workflow

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 3: Key Research Reagents and Materials for Metabolomics Data Generation Preceding Exploration

| Item | Function in Metabolomics Workflow |

|---|---|

| Pooled Quality Control (QC) Sample | A homogeneous mixture of all study samples, injected repeatedly throughout the run. Serves as a critical reagent for monitoring system stability, tracking technical variation, and filtering unreliable features during data exploration. |

| Internal Standards (Labeled) | Stable isotope-labeled compounds (e.g., 13C, 15N) spiked into every sample prior to extraction. Used to assess extraction efficiency, correct for ion suppression, and align retention times during data preprocessing. |

| Solvent Blanks | Pure extraction solvent processed identically to samples. Essential for identifying and subtracting background ions and contaminants originating from solvents, tubes, or columns during exploration. |

| NIST SRM 1950 | Standard Reference Material for human plasma. Used as a process control to benchmark instrument performance, validate the overall workflow, and enable inter-laboratory comparability of results. |

| Derivatization Reagents (e.g., MSTFA for GC-MS) | Chemicals that modify metabolite functional groups to improve volatility (GC-MS) or detection. Their consistent use is vital, as variations directly alter the feature table generated for exploration. |

The initial exploration of metabolomics data is a multifaceted process that relies on a strategic selection of computational tools and platforms. By leveraging the structured protocols and comparative insights outlined here, researchers can establish a reproducible and insightful first look at their data. This rigorous approach directly supports the broader thesis of standardizing preprocessing workflows, ensuring that subsequent steps in biomarker discovery and drug development are built upon a foundation of high-quality, well-understood data.

The Core Workflow in Action: Step-by-Step Preprocessing Techniques

Within the comprehensive framework of best practices for metabolomics data preprocessing, the initial step of peak detection and picking is foundational. This stage directly influences all downstream analyses, including metabolite identification, quantification, and biological interpretation. For researchers, scientists, and drug development professionals, selecting and tuning an appropriate algorithm is critical for generating reproducible, high-quality data. This guide provides an in-depth technical overview of contemporary algorithms, their tuning parameters, and practical experimental protocols.

Core Algorithms for Peak Detection

Peak detection algorithms transform raw mass spectrometry (LC/GC-MS) chromatographic data into a list of discrete spectral features characterized by mass-to-charge ratio (m/z), retention time (RT), and intensity. The choice of algorithm depends on instrument type, data density, and the biological question.

Centroiding vs. Profile Mode

Mass spectrometers output data in either profile (continuous) or centroid (discrete peak) mode. Peak picking in metabolomics often reprocesses profile data to extract centroids more accurately than the instrument's onboard software.

Common Algorithm Classes

Matched Filter (XCMS): Models the chromatographic peak shape (e.g., Gaussian) and uses correlation with this shape to detect peaks amidst noise. Effective for low signal-to-noise ratio (SNR) data.

CentWave (XCMS): Optimized for high-resolution LC-MS data. It detects regions of interest (ROIs) in the m/z domain and then identifies chromatographic peaks within these ROIs using continuous wavelet transform.

Massifquant (XCMS): A Kalman filter-based feature detection algorithm for centroided high-resolution data. It tracks features directly in the centroid data and does not require conversion to profile mode.

Limits of Detection (LOD)-based: Simple thresholding methods that identify peaks above a baseline noise estimate (e.g., signal > 3 * σ_noise).
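The simplest of these classes, LOD-based thresholding, can be illustrated directly. The sketch below is a minimal example under stated assumptions: the baseline is taken as the signal median, the noise σ is estimated from the MAD (scaled by 1.4826), and a peak is a local maximum above baseline + 3σ. The function name and the example trace are hypothetical.

```python
import numpy as np

def threshold_peaks(signal, k=3.0):
    """Return indices of local maxima exceeding baseline + k * sigma_noise."""
    y = np.asarray(signal, dtype=float)
    baseline = np.median(y)
    # MAD-based noise estimate, robust to the peaks themselves
    sigma_noise = 1.4826 * np.median(np.abs(y - baseline))
    above = y > baseline + k * sigma_noise
    # Local maximum: strictly above the left neighbour, not below the right one
    is_max = np.zeros(y.size, dtype=bool)
    is_max[1:-1] = (y[1:-1] > y[:-2]) & (y[1:-1] >= y[2:])
    return np.flatnonzero(above & is_max)

# Hypothetical chromatographic trace with one clear peak at index 10
trace = [10, 11, 9, 10, 12, 10, 9, 11, 10, 50,
         120, 60, 10, 9, 11, 10, 10, 12, 9, 10]
peak_indices = threshold_peaks(trace)
```

Real data usually needs a proper baseline model and smoothing first; this sketch only shows the thresholding rule itself.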

Critical Parameters and Tuning Strategies

Algorithm performance is highly sensitive to parameter settings. Incorrect tuning leads to false positives (noise identified as peaks) or false negatives (true peaks missed).

Table 1: Key Parameters for Common Peak Detection Algorithms

| Algorithm | Core Parameters | Typical Value Range | Effect of Increasing Parameter |

|---|---|---|---|

| CentWave (XCMS) | peakwidth (min, max in sec) | (5, 20) to (10, 60) | Wider peaks detected; may merge adjacent peaks. |

| CentWave (XCMS) | snthresh (signal-to-noise threshold) | 5 - 20 | Higher value increases stringency, reduces false positives. |

| CentWave (XCMS) | ppm (m/z tolerance in parts-per-million) | 5 - 30 | Wider m/z grouping; may incorrectly merge co-eluting isobars. |

| CentWave (XCMS) | prefilter (k, I) | (3, 100) to (5, 5000) | Pre-filters ROI; higher I requires stronger initial signal. |

| Matched Filter | fwhm (full width half max, sec) | 10 - 30 | Width of template Gaussian; must match expected peak shape. |

| Matched Filter | sigma (standard deviation of the template Gaussian) | Calculated from fwhm or user-defined | Sets the width of the matched-filter kernel, which directly impacts the SNR calculation. |

| General | noise (absolute threshold) | Varies by instrument | Higher value removes low-intensity peaks. |

| General | mzdiff (min m/z step) | 0.001 - 0.01 | Minimum difference between adjacent peaks; prevents over-splitting. |

Tuning Methodology

A systematic approach is required:

- Visual Inspection: Manually inspect raw chromatograms (TIC, BPC) and extracted ion chromatograms (XICs) of known standards.

- Parameter Grid Search: Use a subset of representative samples to test a matrix of parameter values.

- Benchmarking with Standards: Spiked-in internal standards with known concentration and RT provide ground truth for evaluating recall (sensitivity) and precision.

- Consistency Assessment: Evaluate the consistency of peak detection across technical replicates and pooled QC samples.

Experimental Protocol for Algorithm Evaluation

The following protocol outlines a robust method for comparing and tuning peak detection algorithms, aligned with best-practice metabolomics workflows.

Protocol: Comparative Evaluation of Peak Picking Algorithms

Objective: To objectively determine the optimal peak detection algorithm and parameter set for a given LC-MS metabolomics dataset.

Materials & Reagents:

- LC-HRMS system (e.g., Q-Exactive, TripleTOF).

- A standardized metabolite mixture (e.g., CAMMI).

- Study samples (e.g., plasma, tissue extract).

- Pooled Quality Control (QC) sample.

- Software: R (XCMS, CAMERA, MSnbase), Python (pyOpenMS, pyms), or commercial packages (Compound Discoverer, MarkerView).

- Computing hardware with sufficient RAM (>16 GB recommended).

Procedure:

Sample Preparation:

- Prepare a series of calibration samples by spiking the standardized metabolite mixture into a solvent at a known concentration gradient (e.g., 0.1 µM to 100 µM).

- Include these calibration samples, study samples, and frequent QC injections (every 4-6 samples) in the acquisition sequence.

Data Acquisition:

- Acquire data in full-scan, high-resolution profile mode. Ensure the method captures a wide m/z range (e.g., 70-1200 m/z).

Data Processing & Peak Picking:

- Convert raw files to an open format (e.g., .mzML using MSConvert).

- Apply a parameter grid search. For CentWave, test combinations of:

- peakwidth: (4,12), (6,20), (8,30)

- snthresh: 5, 7, 10

- ppm: 10, 15, 25

- Run each parameter set through the peak detection algorithm.
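The grid described above can be enumerated programmatically so that every combination is run and logged. The sketch below only builds the list of parameter sets from the values given in the protocol; how each set is passed to the peak picker depends on the software in use and is not shown.

```python
from itertools import product

# Values taken from the CentWave grid in the protocol above
peakwidths = [(4, 12), (6, 20), (8, 30)]
snthresh_values = [5, 7, 10]
ppm_values = [10, 15, 25]

# One dictionary per parameter set: 3 x 3 x 3 = 27 combinations
parameter_grid = [
    {"peakwidth": pw, "snthresh": sn, "ppm": ppm}
    for pw, sn, ppm in product(peakwidths, snthresh_values, ppm_values)
]

for index, params in enumerate(parameter_grid):
    # In a real run, each set would be handed to the peak detection step
    # and the resulting feature table stored under this index.
    pass
```

Storing the index alongside each result makes it straightforward to trace the selected parameter set back to its metrics.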

Performance Metrics Calculation:

- For the spiked-in standards, calculate:

- Recall: (Detected Standards / Total Injected Standards)

- Precision: (True Positives / (True Positives + False Positives)). Estimate FPs via detection in blank samples.

- Peak Shape Metrics: Assess asymmetry factor and width at half-height for detected standard peaks.

- For the pooled QCs, calculate:

- Feature Reproducibility: %RSD of peak area for features detected in >80% of QC injections.

- Total Feature Count: Monitor for unrealistic inflation.

Optimal Selection:

- Select the parameter set that maximizes both recall and precision for standards while maintaining high reproducibility (e.g., %RSD < 30%) in QCs. Visual inspection of challenging XICs (low abundance, co-eluting) is mandatory for final validation.
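The recall and precision metrics from the protocol can be sketched as follows. This is one possible reading, not a definitive implementation: a standard counts as detected if any feature falls within an m/z (ppm) and RT tolerance, and a feature counts as a false positive if it also matches a feature found in the blank. All m/z and RT values, tolerances, and function names are illustrative assumptions.

```python
def within_tol(a, b, ppm_tol, rt_tol):
    """True if feature a matches feature b in m/z (ppm) and RT (seconds)."""
    (mz_a, rt_a), (mz_b, rt_b) = a, b
    ppm_error = abs(mz_a - mz_b) / mz_b * 1e6
    return ppm_error <= ppm_tol and abs(rt_a - rt_b) <= rt_tol

def recall_and_precision(features, standards, blank_features,
                         ppm_tol=10.0, rt_tol=6.0):
    detected = [s for s in standards
                if any(within_tol(f, s, ppm_tol, rt_tol) for f in features)]
    false_pos = [f for f in features
                 if any(within_tol(f, b, ppm_tol, rt_tol) for b in blank_features)]
    true_pos = len(detected)
    recall = true_pos / len(standards)
    denom = true_pos + len(false_pos)
    precision = true_pos / denom if denom else 0.0
    return recall, precision

# Hypothetical (m/z, RT in seconds) pairs
standards = [(132.1019, 95.0), (181.0707, 60.0), (205.0972, 210.0), (90.0550, 45.0)]
features = [(132.1020, 96.2), (181.0709, 61.0), (205.0990, 211.0), (301.1410, 150.0)]
blank_features = [(301.1412, 151.0)]

recall, precision = recall_and_precision(features, standards, blank_features)
```

Running this for every parameter set in the grid gives the recall and precision values needed for the selection step, alongside the QC %RSD.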

Visualization of the Peak Picking Workflow and Logic

Title: Peak Detection and Parameter Tuning Workflow

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 2: Key Research Reagent Solutions for Peak Detection Evaluation

| Item | Function in Peak Detection Context | Example / Specification |

|---|---|---|

| Standard Reference Mixture | Provides ground truth for algorithm tuning. Known m/z and RT enable calculation of detection recall and precision. | CAMMI (Complex Mixture of Metabolites and Isotopologues); U-13C-labeled cell extract. |

| Internal Standards (ISTDs) | Distinguish true peaks from noise and correct for ionization variability. Spiked at known concentration prior to extraction. | Stable isotope-labeled analogs of key metabolites (e.g., d3-Leucine, 13C6-Glucose). |

| Quality Control (QC) Pool | A homogeneous sample injected throughout the run to assess technical reproducibility of peak detection (feature count stability, %RSD). | Pool of equal aliquots from all experimental samples. |

| Process/Solvent Blank | Identifies background contamination and instrumental artifacts, helping to filter out false-positive peaks. | Sample preparation solvent processed identically to real samples. |

| Retention Time Index Markers | Aids in aligning peaks across samples post-detection, improving consistency. | Homologous series of fatty acid methyl esters (FAMEs) or alkyl sulfates. |

| Mass Calibration Standard | Ensures m/z accuracy is maintained, which is critical for correct peak grouping across samples. | Standard solution with ions spanning the m/z range (e.g., ESI Tuning Mix). |

In a metabolomics data preprocessing workflow, retention time (RT) alignment is a critical step following peak picking and preceding peak grouping and gap filling. Chromatographic drift—shifts in RT across samples due to column aging, temperature fluctuations, or mobile phase variations—introduces non-biological variance that compromises downstream statistical analysis. Effective RT alignment corrects these shifts, ensuring that the same metabolite is assigned a consistent RT across all samples, a foundational best practice for generating reliable and reproducible data.

Core Algorithms and Quantitative Performance

Retention time alignment algorithms generally operate in two stages: 1) Landmark Selection: Identifying robust, high-quality peaks common across many samples as anchor points. 2) Warping: Applying a transformation function to stretch or compress the RT axis of each sample to match a reference. The choice of algorithm depends on the severity of drift and data complexity.

Table 1: Comparison of Common RT Alignment Algorithms

| Algorithm | Principle | Strengths | Weaknesses | Typical RT CV Reduction* |

|---|---|---|---|---|

| Dynamic Time Warping (DTW) | Non-linear mapping minimizing distance between chromatograms. | Handles complex, non-linear shifts effectively. | Computationally intensive; may over-warp. | ~50-70% |

| Correlation Optimized Warping (COW) | Divides chromatogram into segments and linearly stretches/compresses them. | Robust to moderate non-linear drift; preserves peak shape. | Requires parameter tuning (segment length, slack). | ~45-65% |

| Peak Groups/landmark-based (e.g., XCMS, OpenMS) | Uses identified chromatographic peaks and groups them across samples before lowess/loess regression. | Integrates with feature detection; biologically relevant anchors. | Performance depends on initial peak picking quality. | ~40-60% |

| Indexed Retention Time (iRT) | Uses a spiked-in standard peptide/metabolite kit with known relative RTs. | Highly reproducible; ideal for cross-laboratory studies. | Requires standardized reagent kit and additional steps. | ~70-85% |

*CV: Coefficient of Variation. Reduction from pre-alignment to post-alignment. Performance is dataset-dependent.

Detailed Experimental Protocol: Landmark-Based Alignment using Lowess Regression

This protocol is commonly implemented in tools like XCMS and is suitable for LC-MS-based untargeted metabolomics.

- Reference Sample Selection: Choose a high-quality sample with a large number of detected peaks as the reference (e.g., a pooled QC sample or a central study sample).

- Landmark (Peak Group) Identification: Using the output from the peak picking step (m/z, RT, intensity), perform preliminary peak grouping across samples within a generous RT window (e.g., 30s). Filter groups to retain only those present in >50-70% of samples and with a low RT CV.

- Pairwise Matching: For each sample i, match its peaks to the landmarks in the reference sample using a combined m/z (e.g., ±10 ppm) and initial RT tolerance.

- Regression Function Fitting: For each sample i, fit a non-parametric local regression model (e.g., lowess or loess) using the matched landmark RTs: RT_ref = f(RT_sample_i).

- RT Transformation: Apply the derived function f to adjust the RT of every detected peak in sample i to the reference time scale.

- Validation: Calculate the RT CV for each landmark peak group before and after alignment. Successful alignment should significantly reduce median RT CV.
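The fitting, transformation, and validation steps can be sketched as follows. To keep the example dependency-light, piecewise-linear interpolation through the landmarks (`np.interp`) is used here in place of lowess/loess; this is a deliberate simplification, and the function names and RT values are hypothetical.

```python
import numpy as np

def align_rt(sample_landmark_rt, ref_landmark_rt, peak_rt):
    """Map sample RTs onto the reference scale via the landmark pairs.

    Piecewise-linear stand-in for a lowess/loess fit. Values outside the
    landmark range are clamped to the first/last reference landmark.
    """
    xs = np.asarray(sample_landmark_rt, dtype=float)
    ys = np.asarray(ref_landmark_rt, dtype=float)
    order = np.argsort(xs)  # np.interp requires increasing x values
    return np.interp(peak_rt, xs[order], ys[order])

def median_rt_cv(rt_matrix):
    """Median CV (%) over landmarks; rt_matrix is (n_samples, n_landmarks)."""
    rt = np.asarray(rt_matrix, dtype=float)
    return float(np.median(100.0 * rt.std(axis=0, ddof=1) / rt.mean(axis=0)))

# Hypothetical landmarks (seconds): the sample drifts as 1.05 * RT + 2
ref_landmarks = np.array([60.0, 120.0, 240.0, 480.0])
sample_landmarks = np.array([65.0, 128.0, 254.0, 506.0])

aligned_peak = float(align_rt(sample_landmarks, ref_landmarks, 191.0))
aligned_landmarks = align_rt(sample_landmarks, ref_landmarks, sample_landmarks)

cv_before = median_rt_cv([ref_landmarks, sample_landmarks])
cv_after = median_rt_cv([ref_landmarks, aligned_landmarks])
```

A smoother such as loess is less sensitive to individual mismatched landmarks than interpolation, which passes exactly through every landmark pair.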

Visualization of the RT Alignment Workflow

Title: Logical Flow of Retention Time Alignment Process

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Reagents and Materials for RT Alignment & QC

| Item | Function in RT Alignment & Quality Control |

|---|---|

| Pooled Quality Control (QC) Sample | An equi-volume mix of all study samples. Injected repeatedly throughout the run to monitor system stability and serve as a robust reference for alignment. |

| Retention Time Index (RTI) Standard Kits | Commercially available mixes of deuterated/synthetic metabolites covering a broad RT range. Spiked into all samples to provide universal, chemically-defined landmarks for alignment. |

| Internal Standards (IS) | Isotopically labeled analogs added to each sample during extraction. While primarily for quantification, they can also serve as alignment landmarks. |

| Mobile Phase Additives | Consistent use of high-purity solvents and additives (e.g., formic acid) is critical to minimize RT drift originating from the chromatographic system. |

| Chromatography Column | A dedicated, high-quality column used only for the study period. Documenting column batch and usage is essential for troubleshooting drift. |

Advanced Considerations and Best Practices

- Batch Effects: Perform RT alignment within analytical batches first, then consider a second-level alignment across batches if a pooled QC was run in all batches.

- QC-Driven Assessment: The RT CV of features in the pooled QC samples, before and after alignment, is the primary metric for evaluating alignment success. Aim for post-alignment median RT CV < 2-3%.

- Avoid Over-warping: Excessive correction can distort chromatographic peak shapes and introduce artifact correlations. Visual inspection of overlaid chromatograms before and after alignment is mandatory.

- Integration with Workflow: RT alignment parameters (e.g., bandwidth for lowess) must be documented and kept consistent across the entire study to ensure reproducibility, a core tenet of a robust preprocessing workflow.

Within the thesis on Best practices for metabolomics data preprocessing workflow research, Step 3 represents the critical transition from single-sample processing to a multi-sample analysis framework. Following peak detection and alignment (Step 2), the challenge is to construct a consensus feature list where each feature is reliably quantified across all samples in the study. This process, known as feature correspondence or peak grouping, directly impacts the quality of downstream statistical analysis and biological interpretation. Errors introduced here, such as misgrouping or missing values, propagate irreversibly. This guide details modern methodologies, algorithms, and experimental considerations for robust cross-sample peak grouping.

Core Algorithms and Quantitative Comparison

The core task involves grouping peaks from multiple liquid chromatography-mass spectrometry (LC-MS) runs based on their chromatographic retention time (RT) and mass-to-charge ratio (m/z). Algorithms differ in their approach to RT correction and grouping tolerance.

Table 1: Comparison of Primary Feature Correspondence Algorithms

| Algorithm/Tool | Primary Method | RT Correction Model | Tolerance Strategy | Key Strength | Reported Mean Alignment Accuracy* |

|---|---|---|---|---|---|

| XCMS (obiwarp) | Density-based peak grouping | Non-parametric (obiwarp dynamic time warping, or peak-groups LOESS) | Adaptive m/z bins & RT windows | High flexibility, handles large cohorts | 92-96% |

| MZmine 2 | Join aligner | Non-parametric (segment alignment) | User-definable m/z & RT balance | Intuitive graphical interface, modular | 88-94% |

| OpenMS (FeatureLinkerUnlabeledQT) | Quality threshold (QT) clustering | Performed upstream by a separate map alignment step | User-defined maximum m/z and RT differences | High precision in complex samples | 90-95% |

| CAMERA | EIC correlation grouping | Post-alignment, using peak shape | Groups co-eluting ions (adducts, isotopes) | Specialized for annotation, not primary alignment | N/A |

| MS-DIAL | RI-based alignment | Uses retention index for calibration | Dual tolerance (m/z & RI) | Excellent for GC-MS & LC-MS/MS libraries | 94-98% |

*Accuracy percentages are derived from benchmark studies (e.g., Riquelme et al., 2020; Libiseller et al., 2015) and represent successful alignment of spiked internal standards across typical sample sets (n=10-100). Actual performance varies with platform, sample type, and chromatographic stability.

Detailed Experimental Protocol for Robust Peak Grouping

This protocol assumes prior peak picking (Step 2) has been completed.

3.1. Materials & Pre-Alignment Preparation

- Input Data: A list of detected peaks per sample with m/z, RT, and intensity.

- Internal Standards (IS): A set of spiked, non-biological compounds evenly spanning the RT and m/z range.

- Quality Control (QC) Samples: A pooled sample injected at regular intervals throughout the run sequence.

3.2. Stepwise Procedure

- RT Reference Selection: Choose the sample with the highest number of high-quality peaks (often a QC or a central study sample) as the reference for alignment.

- RT Deviation Calibration:

- Extract the RTs of the spiked internal standards from all samples.

- Fit a regression model (e.g., LOESS, quadratic) for each sample, mapping its IS RTs to the reference's IS RTs.

- Apply this model to correct the RT of all detected peaks in that sample.

- Peak Grouping Execution:

- Define a matching tolerance. A typical starting point is ±0.005-0.01 Da (or an equivalent ppm tolerance) for m/z and ±0.1-0.2 min for RT (after correction).

- Using the chosen algorithm (e.g., XCMS), perform density analysis: across all samples, clusters of peaks in the 2D space (m/z vs. corrected RT) are identified. Each dense cluster becomes a "feature group."

- Missing Value Imputation:

- For peaks absent in some samples within a feature group, distinguish between true biological absence and technical miss.

- Apply a mild imputation method (e.g., k-nearest neighbors or minimum intensity imputation) only for peaks suspected to be missed due to low signal, avoiding false positives.
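The grouping step can be illustrated with a deliberately simple greedy scheme: each peak joins the first existing group whose running mean m/z and RT lie within tolerance, otherwise it starts a new group. This is a sketch under stated assumptions, not the density-based method used by XCMS; the function names, tolerances, and peak values are hypothetical.

```python
def group_peaks(peaks, mz_tol=0.01, rt_tol=0.2):
    """Greedy grouping of (sample_id, mz, rt_min, intensity) tuples."""
    groups = []
    for sample_id, mz, rt, intensity in sorted(peaks, key=lambda p: p[1]):
        for g in groups:
            if abs(mz - g["mz"]) <= mz_tol and abs(rt - g["rt"]) <= rt_tol:
                g["members"].append((sample_id, mz, rt, intensity))
                n = len(g["members"])
                g["mz"] += (mz - g["mz"]) / n  # update running means
                g["rt"] += (rt - g["rt"]) / n
                break
        else:
            groups.append({"mz": mz, "rt": rt,
                           "members": [(sample_id, mz, rt, intensity)]})
    return groups

def feature_table(groups, sample_ids):
    """One row per group; samples without a peak stay None (not imputed)."""
    table = []
    for g in groups:
        row = {s: None for s in sample_ids}
        for sample_id, _, _, intensity in g["members"]:
            row[sample_id] = intensity
        table.append(row)
    return table

# Hypothetical peaks after RT correction
peaks = [
    ("A", 180.0634, 5.02, 1000), ("B", 180.0630, 5.05, 1100),
    ("C", 180.0638, 4.98, 900), ("A", 255.2330, 12.40, 500),
    ("B", 255.2326, 12.45, 550), ("A", 180.0636, 8.00, 300),
]
groups = group_peaks(peaks)
table = feature_table(groups, ["A", "B", "C"])
```

Leaving missing entries as `None` keeps the distinction between "not detected" and "imputed" explicit until the imputation step decides how to treat them.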

3.3. Validation Checkpoints

- IS Alignment: Calculate the standard deviation of RT for each IS across all samples post-alignment. It should be drastically reduced (e.g., from >0.5 min to <0.05 min).

- QC Correlation: Calculate the coefficient of variation (CV%) for features in the replicate QC samples. >70% of features should show a CV < 20-30% after grouping, indicating technical precision.

Visualization of the Peak Grouping Workflow

Title: Workflow for LC-MS Feature Correspondence Across Samples

The Scientist's Toolkit: Essential Reagents & Materials

Table 2: Key Research Reagent Solutions for Step 3

| Item | Function in Feature Correspondence |

|---|---|

| Stable Isotope-Labeled Internal Standard Mix | A cocktail of compounds (e.g., amino acids, lipids) with known, distinct RTs and m/z, spiked uniformly into all samples. Provides anchors for non-linear RT alignment and monitors process performance. |

| Pooled Quality Control (QC) Sample | An equal-pool aliquot of all experimental samples. Injected repeatedly, its feature intensities assess technical precision post-grouping (via CV%) and identify system drift. |

| Blank Solvent Samples | Pure LC-MS grade solvent (e.g., water/acetonitrile) processed identically to samples. Used to identify and filter out background/contaminant features that group erroneously. |

| Retention Index Calibration Kit (for GC-MS) | A series of n-alkanes or fatty acid methyl esters. Creates a universal, instrument-independent RT scale (Kovats Index), making grouping more robust than absolute RT. |

| LC-MS Grade Solvents & Additives | High-purity water, acetonitrile, methanol, and volatile buffers (e.g., ammonium formate). Minimize background chemical noise that can create spurious peaks and complicate grouping. |

Within a comprehensive thesis on best practices for metabolomics data preprocessing workflows, Steps 1-3 cover peak detection, retention time alignment, and feature correspondence. Step 4, detailed here, is critical for enhancing data integrity prior to statistical analysis. Advanced noise reduction and baseline correction are essential to distinguish true biological signals from analytical artifacts, directly impacting the accuracy of subsequent biomarker discovery and pathway analysis in drug development.

Core Methodologies & Protocols

Advanced Baseline Correction

Baseline drift, caused by instrumental variations, obscures true spectral peaks.

Protocol: Asymmetric Least Squares (AsLS)

- Input: A chromatographic or spectral vector y of length n.

- Parameters: Set smoothing parameter λ (typical range: 10² to 10⁹) and asymmetry parameter p (for positive peaks, p ~ 0.001-0.1).

- Iteration: Minimize the function ∑ᵢ wᵢ (yᵢ - zᵢ)² + λ ∑ᵢ (Δ²zᵢ)², where z is the fitted baseline, Δ² is the second difference, and weights wᵢ are updated each iteration as: wᵢ = p if yᵢ > zᵢ, else wᵢ = 1-p.

- Output: Corrected signal y - z.
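The AsLS iteration above can be sketched with dense NumPy matrices, which is adequate for short vectors; long spectra would normally use sparse solvers instead. The function name, the fixed iteration count, and the synthetic signal are assumptions made for illustration.

```python
import numpy as np

def asls_baseline(y, lam=1e5, p=0.01, n_iter=10):
    """Asymmetric least squares baseline estimate (dense-matrix sketch)."""
    y = np.asarray(y, dtype=float)
    n = y.size
    # Second-difference operator, shape (n - 2, n)
    D = np.diff(np.eye(n), n=2, axis=0)
    penalty = lam * (D.T @ D)
    w = np.ones(n)
    z = y.copy()
    for _ in range(n_iter):
        # Solve (W + lam * D'D) z = W y for the current weights
        z = np.linalg.solve(np.diag(w) + penalty, w * y)
        # Points above the baseline (peaks) get the small weight p
        w = np.where(y > z, p, 1.0 - p)
    return z

# Synthetic signal: linear baseline plus one Gaussian peak
x = np.arange(200.0)
true_baseline = 1.0 + 0.01 * x
y = true_baseline + 5.0 * np.exp(-0.5 * ((x - 100.0) / 4.0) ** 2)

z = asls_baseline(y, lam=1e5, p=0.01)
corrected = y - z
```

In practice λ and p are tuned by visual inspection: a larger λ gives a stiffer baseline, while a smaller p makes the fit hug the lower envelope of the signal more closely.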

Protocol: Morphological (Top-Hat) Filter

- Input: Spectral vector y.

- Structuring Element: Define a flat structuring element (e.g., a line) with a width greater than the widest peak but narrower than baseline features.

- Operation: Perform an opening operation (erosion followed by dilation) on the signal using the structuring element. The baseline is estimated as this opened signal.

- Output: Corrected signal obtained by subtracting the opened signal from the original.
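A minimal NumPy sketch of the top-hat procedure is shown below, using a sliding minimum for erosion and a sliding maximum for dilation with a flat structuring element. The function names, the edge-padding choice, and the synthetic signal are illustrative assumptions.

```python
import numpy as np
from numpy.lib.stride_tricks import sliding_window_view

def _moving_extreme(y, width, func):
    """Sliding min or max with a flat structuring element of odd width."""
    half = width // 2
    padded = np.pad(y, half, mode="edge")
    return func(sliding_window_view(padded, width), axis=1)

def tophat_correct(y, width):
    """Return (corrected signal, estimated baseline) via morphological opening."""
    if width % 2 == 0:
        raise ValueError("width must be odd")
    y = np.asarray(y, dtype=float)
    eroded = _moving_extreme(y, width, np.min)       # erosion
    opened = _moving_extreme(eroded, width, np.max)  # dilation of the erosion
    return y - opened, opened

# Synthetic signal: constant offset plus one narrow Gaussian peak
x = np.arange(200.0)
signal = 2.0 + 5.0 * np.exp(-0.5 * ((x - 100.0) / 3.0) ** 2)

corrected, baseline = tophat_correct(signal, width=51)
```

With a sloping baseline, a flat element underestimates the baseline near the edge of the signal toward which the baseline rises, so the ends of the corrected trace should be checked.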

Advanced Noise Reduction

Stochastic noise reduces sensitivity and obscures low-abundance metabolites.

Protocol: Savitzky-Golay Smoothing

- Input: Discrete data points of a spectrum/chromatogram.

- Parameters: Choose a polynomial order (m, typically 2 or 3) and a window size (n, must be odd and > m).

- Calculation: For each point i, fit a polynomial of degree m by least squares to n points centered on i. The smoothed value at i is the value of the polynomial at i.

- Output: Smoothed signal with preserved higher moments (peak shape).
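The local polynomial fit reduces to a fixed set of convolution weights, which the sketch below derives from the least-squares problem and then applies. It is a minimal NumPy illustration with edge padding as an assumed boundary treatment; in SciPy, `scipy.signal.savgol_filter` provides a library implementation. The function names are hypothetical.

```python
import numpy as np

def savgol_coefficients(window, polyorder):
    """Weights that return the fitted polynomial's value at the window centre."""
    if window % 2 == 0 or window <= polyorder:
        raise ValueError("window must be odd and larger than polyorder")
    half = window // 2
    offsets = np.arange(-half, half + 1, dtype=float)
    # Columns are offsets**0, offsets**1, ..., offsets**polyorder
    A = np.vander(offsets, polyorder + 1, increasing=True)
    # Row 0 of the pseudo-inverse maps window values to the constant term,
    # i.e. the fitted value at offset 0
    return np.linalg.pinv(A)[0]

def savgol_smooth(y, window=11, polyorder=2):
    y = np.asarray(y, dtype=float)
    half = window // 2
    coeffs = savgol_coefficients(window, polyorder)
    padded = np.pad(y, half, mode="edge")
    # Smoothing weights are symmetric, so convolution equals correlation
    return np.convolve(padded, coeffs, mode="valid")

# A quadratic is reproduced exactly away from the padded edges
x = np.arange(50.0)
quadratic = 0.5 * x**2 - 3.0 * x + 2.0
smoothed = savgol_smooth(quadratic, window=11, polyorder=2)
```

The window should be kept narrower than the narrowest peak of interest; otherwise the filter starts to flatten peak apexes.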

Protocol: Wavelet Transform Denoising

- Input: Signal S.

- Decomposition: Apply a Discrete Wavelet Transform (DWT) using a chosen mother wavelet (e.g., Symmlet) to decompose S into approximation (low-frequency) and detail (high-frequency) coefficients across multiple levels.

- Thresholding: Apply a thresholding rule (e.g., Stein's Unbiased Risk Estimate - SURE) to the detail coefficients to suppress noise.

- Reconstruction: Reconstruct the denoised signal via the Inverse DWT using the original approximation and thresholded detail coefficients.
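The decompose, threshold, reconstruct sequence can be sketched without external wavelet libraries by using the Haar wavelet. Note the substitutions: Haar replaces the Symmlet wavelet, and the universal threshold (σ·sqrt(2 ln n), with σ estimated from the finest detail coefficients) replaces SURE. The function names are hypothetical, and the signal length must be divisible by 2^levels.

```python
import numpy as np

def haar_dwt(signal, levels):
    """Multi-level Haar decomposition: returns (approximation, [details])."""
    approx = np.asarray(signal, dtype=float)
    if approx.size % (2 ** levels) != 0:
        raise ValueError("signal length must be divisible by 2**levels")
    details = []
    for _ in range(levels):
        even, odd = approx[0::2], approx[1::2]
        details.append((even - odd) / np.sqrt(2.0))  # high-frequency part
        approx = (even + odd) / np.sqrt(2.0)         # low-frequency part
    return approx, details

def haar_idwt(approx, details):
    """Inverse of haar_dwt."""
    for d in reversed(details):
        out = np.empty(2 * approx.size)
        out[0::2] = (approx + d) / np.sqrt(2.0)
        out[1::2] = (approx - d) / np.sqrt(2.0)
        approx = out
    return approx

def wavelet_denoise(signal, levels=3):
    approx, details = haar_dwt(signal, levels)
    n = np.asarray(signal).size
    sigma = np.median(np.abs(details[0])) / 0.6745  # noise estimate
    threshold = sigma * np.sqrt(2.0 * np.log(n))    # universal threshold
    # Soft thresholding of every detail level
    shrunk = [np.sign(d) * np.maximum(np.abs(d) - threshold, 0.0) for d in details]
    return haar_idwt(approx, shrunk)

# Sanity checks on noise-free inputs
ramp = np.arange(16.0) ** 2
roundtrip = haar_idwt(*haar_dwt(ramp, 2))
steps = np.repeat([1.0, 4.0, 2.0, 2.0], 8)
denoised_steps = wavelet_denoise(steps, levels=3)
```

Smoother wavelets such as Symmlets generally suit chromatographic peak shapes better than Haar, which tends to produce blocky reconstructions.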

Quantitative Performance Comparison

Table 1: Performance metrics of baseline correction methods on a simulated NMR spectrum with known baseline and Gaussian noise (SNR=10).

| Method | Parameters Used | Root Mean Square Error (RMSE) | Execution Time (ms) | Peak Shape Preservation (Correlation) |

|---|---|---|---|---|

| AsLS | λ=1e7, p=0.01 | 0.024 | 120 | 0.998 |

| Morphological (Top-Hat) | Width=100 | 0.031 | 15 | 0.990 |

| Polynomial Fit | Degree=5 | 0.045 | 5 | 0.982 |

Table 2: Performance of noise reduction methods on a simulated LC-MS chromatogram.

| Method | Parameters Used | Signal-to-Noise Ratio (SNR) Improvement | % Reduction in Peak Area RSD* | Artifact Introduction |

|---|---|---|---|---|

| Savitzky-Golay | Window=11, Poly=2 | 2.5x | 15% | Low |

| Wavelet Denoising (SURE) | Symmlet-8, Level=5 | 3.8x | 28% | Medium |

| Moving Average | Window=11 | 1.8x | 8% | High (Peak Broadening) |

*RSD: Relative Standard Deviation for replicate peaks. For wavelet denoising, artifact introduction is controlled via threshold selection.

Visualizing the Integrated Workflow

Title: Step 4 in the Metabolomics Preprocessing Pipeline

Title: Wavelet-Based Denoising Process Flow

The Scientist's Toolkit: Essential Reagents & Software

Table 3: Key Research Reagent Solutions and Tools for Method Implementation.

| Item | Function/Description | Example Vendor/Software |

|---|---|---|

| Quality Control (QC) Pool Sample | A pooled aliquot of all study samples; injected repeatedly throughout analytical batch to monitor and correct for instrumental drift and noise. | Prepared in-house from study samples. |

| Deuterated Solvent for NMR | Provides a stable lock signal for NMR spectrometers, essential for consistent data acquisition and baseline stability. | Cambridge Isotope Laboratories |

| MATLAB / Python Libraries | Scripting environments and libraries for implementing AsLS, Savitzky-Golay, and wavelet transforms in custom scripts. | MATLAB (MathWorks); Python (SciPy, PyWavelets) |

| Proprietary Processing Suites | GUI-based software with optimized implementations of advanced correction algorithms. | Commercial packages (e.g., Compound Discoverer, MarkerView) |

| MS/NMR Reference Standards | Chemical standards for system suitability testing, ensuring instrument performance is optimal prior to sample runs. | IROA Technologies, Chenomx |

| XCMS Online / MetaboAnalyst | Web-based platforms incorporating advanced preprocessing modules for direct application and comparison. | Scripps Center / MetaboAnalyst Team |

In the broader context of establishing best practices for metabolomics data preprocessing workflows, normalization is a critical step to correct for unwanted systematic variation (e.g., sample dilution, matrix effects, instrument drift) while preserving biological variation. This technical guide details prevalent strategies.

Core Normalization Methodologies

Total Intensity (or Signal) Normalization

- Principle: Each sample's feature intensities are scaled by its total ion current (TIC) or total signal sum.

- Protocol: For a sample with n features, the normalized intensity \( I_{norm,i} \) for feature i is calculated as: \( I_{norm,i} = \frac{I_{raw,i}}{\sum_{j=1}^{n} I_{raw,j}} \times \text{median}(\text{global sample sums}) \). The multiplication by the global median total intensity restores the data to a biologically relevant scale.

- Use Case: Simple correction for overall concentration differences. It implicitly assumes that the total signal is comparable across samples, so it is highly sensitive to large, dominant peaks.
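A minimal sketch of total intensity normalization, assuming a samples × features NumPy array with no missing values; the function name and the example matrix are hypothetical.

```python
import numpy as np

def tic_normalize(X):
    """Scale each sample (row) to the median total intensity of all samples."""
    X = np.asarray(X, dtype=float)
    totals = X.sum(axis=1, keepdims=True)  # per-sample total signal
    return X / totals * np.median(totals)

# Three samples with the same composition at different overall dilutions
X = np.array([
    [10.0, 20.0, 70.0],
    [20.0, 40.0, 140.0],
    [5.0, 10.0, 35.0],
])
X_tic = tic_normalize(X)
```

After normalization every row sums to the same value, which is exactly why a single dominant peak that changes biologically will distort all other features in that sample.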

Probabilistic Quotient Normalization (PQN)

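- Principle: Assumes that most metabolite concentrations are unchanged between samples, so the most probable (median) quotient between a sample and a reference spectrum reflects dilution rather than biology. It corrects for this dilution factor using a median reference spectrum.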

- Experimental Protocol:

- Choose a reference sample (often the median/mean spectrum of all quality control (QC) samples).

- Calculate the quotient between each feature in a test sample and the corresponding feature in the reference.

- Determine the median of all quotients for that test sample—this is the estimated dilution factor.

- Divide all feature intensities in the test sample by this factor.

- Use Case: Effective for urine or other biofluids where overall sample dilution is the primary variance. Requires a representative reference.

Normalization to Internal Standard(s)

- Principle: Uses spiked-in, known compounds (not endogenous to the sample) to correct for technical variance.

- Protocol:

- Standard Selection & Addition: A known amount of stable isotope-labeled analog(s) of endogenous metabolites or chemically similar non-native compounds is added to every sample prior to extraction.

- Data Acquisition: Analyze all samples, measuring intensities for both endogenous features and internal standard (IS) peaks.

- Correction: For each sample, normalize all endogenous feature intensities \( I_{endo} \) by the intensity of one or multiple IS \( I_{IS} \): \( I_{norm} = \frac{I_{endo}}{I_{IS}} \)

- For multiple IS, a response curve or robust average may be used.

- Use Case: Gold standard for targeted assays. Corrects for extraction efficiency, instrument response drift, and matrix effects. Limited by the number and chemical coverage of IS.

Other Advanced Methods

- Quality Control-Based (QC-RLSC, robust LOESS signal correction): Uses repeated injections of a pooled QC sample to model and correct for temporal instrument drift.

- Batch Normalization: Employs statistical models (e.g., ComBat) to remove variation associated with processing batch.

- Sample-Specific Factors: Normalization to creatinine (urine), protein content (cell lysate), or cell count.
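To make the idea of QC-based drift correction concrete, the sketch below corrects one feature along the injection order. It is deliberately simplified: QC-RLSC fits a LOESS curve through the QC intensities, whereas this example swaps in piecewise-linear interpolation between neighbouring QC injections. The function names and the simulated drift are assumptions made for illustration.

```python
from statistics import median


def interpolated_qc_trend(intensities, qc_positions, i):
    """Estimate the QC-derived trend at injection i by linear interpolation
    (a simplified stand-in for the LOESS fit used in QC-RLSC)."""
    if i <= qc_positions[0]:
        return intensities[qc_positions[0]]
    if i >= qc_positions[-1]:
        return intensities[qc_positions[-1]]
    left = max(p for p in qc_positions if p <= i)
    right = min(p for p in qc_positions if p >= i)
    if left == right:
        return intensities[left]
    weight = (i - left) / (right - left)
    return intensities[left] * (1 - weight) + intensities[right] * weight


def drift_correct(intensities, is_qc):
    """Correct one feature, given its intensities in injection order."""
    qc_positions = [i for i, flag in enumerate(is_qc) if flag]
    if len(qc_positions) < 2:
        raise ValueError("at least two QC injections are needed")
    qc_median = median(intensities[i] for i in qc_positions)
    return [
        value / interpolated_qc_trend(intensities, qc_positions, i) * qc_median
        for i, value in enumerate(intensities)
    ]


# Simulated run: signal decays linearly to 60% over seven injections.
is_qc = [True, False, False, True, False, False, True]
true_values = [100.0, 200.0, 200.0, 100.0, 200.0, 200.0, 100.0]
drift = [1.0 - 0.4 * i / 6 for i in range(7)]
measured = [t * d for t, d in zip(true_values, drift)]
corrected = drift_correct(measured, is_qc)
```

Because the simulated drift is linear, interpolation removes it completely and the study samples end up with identical corrected intensities. Real drift is rarely this regular, which is why a smoother such as LOESS is used in practice.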

Table 1: Quantitative and Qualitative Comparison of Key Normalization Strategies.

| Method | Primary Correction For | Requires Reference | Robustness to Large Peaks | Best For |
|---|---|---|---|---|
| TIC | Global concentration differences | No (uses own sum) | Low | Exploratory, simple screening |
| PQN | Sample dilution effects | Yes (median spectrum) | Medium | Biofluids (e.g., urine, plasma) |
| Internal Standard | Technical variance (extraction, MS drift) | Yes (spiked standards) | High | Targeted assays, quantitative work |
| QC-RLSC | Temporal instrument drift | Yes (pooled QC samples) | Medium | Large-scale LC/MS batch runs |
| Sample-Specific | Biomass/input variation | Yes (e.g., protein assay) | High | Cell/tissue studies with measured input |

Experimental Protocol: Implementing PQN Normalization

A detailed step-by-step protocol for PQN normalization in an LC-MS metabolomics experiment is as follows:

- Prerequisite: A data matrix of pre-processed (peak-picked, aligned) feature intensities [Samples × Features].

- Reference Spectrum Creation:

- Calculate the median intensity for each feature across all QC samples (or all samples if no QCs) to create the reference vector \( R \).

- Quotient Calculation per Sample:

- For each sample vector \( S \), calculate the quotient vector \( Q_s = S / R \) (element-wise division).

- Dilution Factor Estimation:

- For each sample, find the median of its quotient vector \( Q_s \). This value \( d_s \) is the estimated dilution factor.

- Normalization:

- Divide all feature intensities in sample \( S \) by its dilution factor \( d_s \): \( S_{norm} = S / d_s \).

- Validation:

- Assess effectiveness using PCA plots of QC samples pre- and post-normalization (QCs should cluster more tightly).
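The reference, quotient, dilution-factor, and normalization steps of this protocol can be sketched as below. The function name `pqn_normalize`, the optional `reference_rows` argument (for QC samples), and the choice to skip non-positive values when forming quotients are assumptions made for this illustration.

```python
from statistics import median


def pqn_normalize(samples, reference_rows=None):
    """Probabilistic quotient normalization on a samples x features matrix.

    reference_rows: rows used to build the reference vector R (e.g., QC
    samples). If omitted, all samples are used.
    Returns (normalized matrix, per-sample dilution factors d_s).
    """
    source = samples if reference_rows is None else reference_rows
    n_features = len(samples[0])
    # Reference vector R: per-feature median across the reference rows.
    reference = [median(row[j] for row in source) for j in range(n_features)]

    normalized, factors = [], []
    for row in samples:
        # Quotient vector Q_s = S / R, skipping non-positive entries
        # (assumed here to represent missing or undetected features).
        quotients = [s / r for s, r in zip(row, reference) if s > 0 and r > 0]
        d_s = median(quotients)
        factors.append(d_s)
        normalized.append([s / d_s for s in row])
    return normalized, factors


# Toy data: sample 2 is diluted two-fold; sample 3 is two-fold concentrated
# and additionally has a genuine increase in the fourth feature.
samples = [
    [100.0, 200.0, 50.0, 400.0],
    [50.0, 100.0, 25.0, 200.0],
    [200.0, 400.0, 100.0, 1600.0],
]
normalized, factors = pqn_normalize(samples)
```

In the toy data the estimated factors recover the dilution (1, 0.5, 2), and the fourth feature of the third sample remains elevated after normalization. Using the median quotient is what keeps a single changed metabolite from being mistaken for dilution.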

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Materials for Metabolomics Normalization Experiments.

| Item | Function & Rationale |
|---|---|
| Stable Isotope-Labeled Internal Standards (e.g., ¹³C, ¹⁵N-labeled amino acids, lipids) | Chemically identical to analytes with distinct mass; corrects for losses during sample preparation and ionization variability. Essential for quantification. |
| Chemical Analog Internal Standards (e.g., non-natural fatty acids) | Not found biologically; used as surrogate IS for compound classes where labeled versions are unavailable or too costly. |
| Pooled Quality Control (QC) Sample | An aliquot made by combining equal volumes of all study samples. Injected repeatedly throughout the analytical sequence to monitor and correct for instrument performance drift. |
| Solvent Blanks (LC-MS grade water, solvent) | Injected to assess and subtract background noise and carryover from the LC-MS system. |
| NIST SRM 1950 | Standard Reference Material for Metabolites in Human Plasma. Used as a system suitability test and for inter-laboratory method benchmarking. |
| Derivatization Reagents (e.g., MSTFA for GC-MS) | For chemical derivatization techniques; often a single internal standard is added pre-derivatization to normalize for reaction efficiency. |

Normalization Decision Workflow Diagram

Internal Standard Normalization Pathway Diagram

Within a comprehensive metabolomics data preprocessing workflow, scaling and transformation constitute a critical step that directly influences the outcome of subsequent univariate and multivariate analyses. Following steps like normalization and missing value imputation, this phase addresses the heteroscedasticity and varying dynamic ranges inherent to mass spectrometry and NMR data. The choice of method—whether Pareto scaling, mean-centering, or logarithmic transformation—systematically alters the data structure to meet the assumptions of statistical models, thereby ensuring that biological signals, rather than technical artifacts, drive the discovery of biomarkers and pathway perturbations in drug development research.

Core Transformation Methods: Theory and Application

The primary goal of scaling and transformation is to adjust the relative weighting of metabolites so that high-abundance, high-variance features do not dominate the analysis, allowing lower-abundance but potentially biologically significant compounds to contribute to the model.

Logarithmic Transformation

Applied to reduce right-skewness and heteroscedasticity, bringing the data closer to a normal distribution. It is particularly effective for mass spectrometry intensity data.

Methodology: For a raw intensity value \( x_{ij} \) for metabolite \( i \) in sample \( j \), the transformed value \( x'_{ij} \) is: \[ x'_{ij} = \log_{10}(x_{ij}) \quad \text{or} \quad x'_{ij} = \ln(x_{ij}) \] In practice, a constant (e.g., 1) is often added prior to transformation to handle zero values: \[ x'_{ij} = \log_{10}(x_{ij} + 1) \]
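A minimal sketch of the offset log transformation is shown below. The function name, its `offset` and `natural` parameters, and the toy values are assumptions chosen for illustration.

```python
import math


def log_transform(matrix, offset=1.0, natural=False):
    """Apply log10(x + offset), or ln(x + offset) if natural=True,
    to every entry of a samples x features matrix."""
    log_fn = math.log if natural else math.log10
    transformed = []
    for row in matrix:
        if any(value + offset <= 0 for value in row):
            raise ValueError("x + offset must be positive for every entry")
        transformed.append([log_fn(value + offset) for value in row])
    return transformed


# Toy intensities spanning five orders of magnitude, including a zero.
raw = [[0.0, 9.0, 99.0], [999.0, 9999.0, 99999.0]]
logged = log_transform(raw)
```

With the offset of 1, a zero intensity maps to 0 instead of raising an error, and the five-orders-of-magnitude range is compressed to the interval 0 to 5.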

Mean-Centering

A centering step that shifts each variable to a mean of zero. It is essential for Principal Component Analysis (PCA), which then models the variance around the mean rather than the mean itself.

Methodology: For metabolite \( i \) with mean \( \bar{x}_i \) across all samples: \[ x'_{ij} = x_{ij} - \bar{x}_i \] This process removes the bias due to the mean, allowing comparison of variations around the mean.

Pareto Scaling

A compromise between no scaling and unit variance (auto) scaling. It reduces the relative importance of large values but keeps data structure partially intact.

Methodology: The mean-centered value is divided by the square root of the standard deviation \( s_i \) of metabolite \( i \): \[ x'_{ij} = \frac{x_{ij} - \bar{x}_i}{\sqrt{s_i}} \]
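The relationship between mean-centering, Pareto scaling, and unit variance (auto) scaling can be sketched with one helper. The function names and the `method` argument are hypothetical, and the sample standard deviation (n - 1 denominator) is assumed.

```python
from statistics import mean, stdev


def scale_feature(values, method="pareto"):
    """Scale one metabolite's values across samples.

    method: "center" (mean-centering only), "pareto" (divide by sqrt(s)),
    or "auto" (divide by s, i.e., unit variance).
    """
    center = mean(values)
    centered = [value - center for value in values]
    if method == "center":
        return centered
    s = stdev(values)  # sample standard deviation (assumption: n - 1)
    if s == 0:
        raise ValueError("constant feature cannot be scaled")
    if method == "pareto":
        divisor = s ** 0.5
    elif method == "auto":
        divisor = s
    else:
        raise ValueError(f"unknown method: {method}")
    return [value / divisor for value in centered]


def scale_matrix(matrix, method="pareto"):
    """Apply scale_feature to every column of a samples x features matrix."""
    scaled_columns = [scale_feature(list(col), method) for col in zip(*matrix)]
    return [list(row) for row in zip(*scaled_columns)]


values = [1.0, 2.0, 3.0, 4.0, 5.0]
centered = scale_feature(values, "center")
pareto = scale_feature(values, "pareto")
auto = scale_feature(values, "auto")
```

After Pareto scaling a feature's standard deviation becomes \( \sqrt{s_i} \) rather than 1, which is why high-variance metabolites keep some, but not all, of their original weight.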

Table 1: Characteristics and Applications of Common Scaling Methods

| Method | Formula | Effect on Data | Best Used For | Key Consideration |
|---|---|---|---|---|
| Log Transformation | \( x' = \log(x + c) \) | Compresses dynamic range, stabilizes variance, reduces skew. | MS data with large intensity ranges. Pre-processing for many parametric tests. | Choice of base and constant \( c \) affects results. Not applicable to negative values. |
| Mean-Centering | \( x' = x - \bar{x} \) | Shifts data mean to zero. | Preparing data for PCA, PLS-DA. | Does not change variance structure; large-variance features still dominate. |
| Pareto Scaling | \( x' = \frac{x - \bar{x}}{\sqrt{s}} \) | Reduces but does not eliminate variance magnitude differences. | General-purpose scaling for untargeted metabolomics. | A recommended default starting point in many workflows. |
| Unit Variance (Auto) | \( x' = \frac{x - \bar{x}}{s} \) | Forces all variables to unit variance. | When all metabolites should be weighted equally. | Can artificially inflate noise from low-abundance metabolites. |
| Range Scaling | \( x' = \frac{x - \bar{x}}{\max(x) - \min(x)} \) | Scales data to a specified range (e.g., -1 to 1). | When bounds on data range are required. | Highly sensitive to outliers. |

Experimental Protocols for Method Evaluation

A standard protocol to determine the optimal scaling method within a metabolomics workflow involves parallel processing and assessment of model performance.

Protocol 1: Comparative Evaluation of Scaling Methods for PCA

- Input: A normalized, imputed data matrix \( X \) (m samples × n metabolites).

- Parallel Transformation: Create three copies of \( X \). Apply:

- Path A: Log transformation (base 10, +1 offset).

- Path B: Mean-centering only.

- Path C: Pareto scaling.

- PCA Execution: Perform PCA on each transformed matrix using singular value decomposition (SVD).

- Assessment Metrics: For each PCA model, calculate:

- Q² (Cumulative): Via cross-validation to estimate predictive ability.

- Group Separation: Measure the distance between group centroids (e.g., control vs. treatment) in the scores plot (PC1 vs. PC2) using Mahalanobis distance.

- Variable Influence: Examine the loadings plot to assess if known biologically relevant metabolites are highly weighted.

- Selection Criterion: Choose the method yielding the most biologically interpretable model with robust group separation and high Q².

Protocol 2: Assessing Impact on Univariate Statistics

- Apply different scaling methods to the same preprocessed dataset.

- For each metabolite, perform a t-test (or ANOVA) between experimental groups.

- Record the number of significant metabolites (p < 0.05, FDR-corrected) and the list of their identities.

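- Compare the overlap (e.g., Venn diagram) of significant metabolite lists derived from each method. Because a two-sample t-test is unchanged by per-metabolite linear scaling (centering, Pareto, auto), differences here arise mainly from nonlinear transformations such as the log. The optimal method often maximizes the recovery of metabolites known from prior literature to be associated with the experimental perturbation.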
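The FDR correction and list-overlap steps of this protocol can be sketched as follows. The Benjamini-Hochberg procedure is assumed as the FDR method, since the protocol does not name one. The p-values are made-up illustrative numbers standing in for t-test output, and the function names are hypothetical.

```python
def benjamini_hochberg(p_values):
    """Return Benjamini-Hochberg adjusted p-values in the input order."""
    n = len(p_values)
    order = sorted(range(n), key=lambda i: p_values[i])
    adjusted = [0.0] * n
    running_min = 1.0
    # Walk from the largest p-value down, enforcing monotonicity.
    for rank in range(n, 0, -1):
        index = order[rank - 1]
        running_min = min(running_min, p_values[index] * n / rank)
        adjusted[index] = running_min
    return adjusted


def significant_features(p_by_feature, alpha=0.05):
    """Names of features whose adjusted p-value is below alpha."""
    names = list(p_by_feature)
    adjusted = benjamini_hochberg([p_by_feature[name] for name in names])
    return {name for name, q in zip(names, adjusted) if q < alpha}


# Illustrative (not measured) p-values from two preprocessing paths.
p_log = {"citrate": 0.001, "lactate": 0.004, "alanine": 0.03, "glucose": 0.20}
p_raw = {"citrate": 0.002, "lactate": 0.03, "alanine": 0.20, "glucose": 0.04}

sig_log = significant_features(p_log)
sig_raw = significant_features(p_raw)
shared = sig_log & sig_raw        # found by both paths
only_log = sig_log - sig_raw      # found only after log transformation
```

Python sets give the regions of the Venn diagram directly: intersection for the shared hits and set difference for the method-specific ones.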

Visualizing the Decision Workflow

Diagram 1: Decision Workflow for Data Scaling & Transformation

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials for Metabolomics Data Preprocessing & Validation

| Item | Function in Context of Scaling/Transformation |
|---|---|
| QC Sample Pool | A homogeneous pool of sample used to monitor technical variance. Consistency in QC profiles after transformation indicates stable processing. |
| Certified Reference Materials (CRMs) | Metabolite standards of known concentration. Used to validate that transformations do not distort quantitative relationships for key analytes. |
| Internal Standard Mix (IS) | Stable isotope-labeled compounds spiked pre-extraction. Their variance after scaling indicates the effectiveness of removing non-biological variance. |
| Statistical Software (R/Python) | Platforms like R (with pmp, MetaboAnalystR) or Python (with scikit-learn, plotly) provide reproducible code for implementing scaling algorithms. |
| Benchmarking Dataset | A well-characterized public dataset (e.g., from MetaboLights) with known outcomes, used to test and compare the performance of different scaling pipelines. |

Implications for Downstream Analysis

The choice of scaling method has profound effects:

- Biomarker Discovery: Pareto or log transformation often improves the detectability of lower-abundance biomarkers.

- Pathway Analysis: Incorrect scaling can bias enrichment results by over-representing high-variance pathways not biologically relevant.

- Multivariate Modeling: Mean-centering is mandatory for PCA/PLS-DA, but pairing it with Pareto scaling typically yields more robust and interpretable models than auto-scaling in the presence of biological noise.

Therefore, Step 6 is not a mere technicality but a decisive point in the preprocessing workflow. Best practice mandates that researchers test multiple methods, using the protocols outlined above, and select the one that maximizes biological insight and model robustness for their specific dataset and research question.

Within a comprehensive thesis on Best Practices for Metabolomics Data Preprocessing, the imputation of missing values represents a critical inflection point. Metabolomics datasets, derived from techniques like LC-MS and GC-MS, are inherently plagued by missing values arising from technical (e.g., ion suppression, instrumental detection limits) and biological (e.g., metabolite concentrations below detection) sources. The choice of imputation method directly influences downstream statistical analysis, biomarker discovery, and biological interpretation. This step evaluates three distinct approaches: a distance-based method (k-Nearest Neighbors, KNN), a machine learning ensemble method (Random Forest), and a simple, assumption-driven method (Half-Minimum), providing a framework for selecting an appropriate strategy based on data characteristics and research goals.

Detailed Methodologies & Protocols

k-Nearest Neighbors (KNN) Imputation

Protocol: The KNN imputation algorithm identifies the k most similar samples (neighbors) for each sample with a missing value, based on a distance metric (typically Euclidean or Pearson correlation) computed over non-missing metabolite features. The missing value is then estimated as the mean (or median) of the corresponding metabolite's values from these k neighbors.
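The neighbor search and averaging described above can be sketched in plain Python. Missing values are represented as `None`. The function name, the root-mean-square Euclidean distance over shared features, and the toy matrix are assumptions for illustration, and the input is assumed to be scaled already.

```python
import math
from statistics import mean


def knn_impute(matrix, k=2):
    """Fill None entries with the mean of that metabolite's values in the
    k nearest samples (rows), using distances over shared observed features."""

    def distance(a, b):
        shared = [(x, y) for x, y in zip(a, b) if x is not None and y is not None]
        if not shared:
            return math.inf
        # Root-mean-square difference, so rows with fewer shared
        # features are not favoured (an assumed design choice).
        return math.sqrt(sum((x - y) ** 2 for x, y in shared) / len(shared))

    imputed = [list(row) for row in matrix]
    for s, row in enumerate(matrix):
        for j, value in enumerate(row):
            if value is not None:
                continue
            # Candidate donors: other samples with feature j observed.
            donors = [
                (distance(row, other), other[j])
                for t, other in enumerate(matrix)
                if t != s and other[j] is not None
            ]
            donors.sort(key=lambda pair: pair[0])
            nearest = [v for dist, v in donors[:k] if dist < math.inf]
            if nearest:
                imputed[s][j] = mean(nearest)
    return imputed


# Toy matrix: the second sample is missing its third metabolite.
data = [
    [1.0, 2.0, 3.0],
    [1.1, 2.1, None],
    [1.2, 1.9, 3.2],
    [5.0, 6.0, 9.0],
]
filled = knn_impute(data, k=2)
```

The missing entry is filled from the first and third samples, which are the two closest on the shared features. The distant fourth sample does not contribute.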

- Data Preparation: The data matrix (samples x metabolites) is normalized (e.g., Pareto scaling) to ensure distance metrics are not dominated by high-abundance metabolites.

- Parameter Selection: The number of neighbors (k) is optimized, often via cross-validation on a subset of artificially introduced missing values. A common starting range is k = 5-10.

- Distance Calculation: For each sample