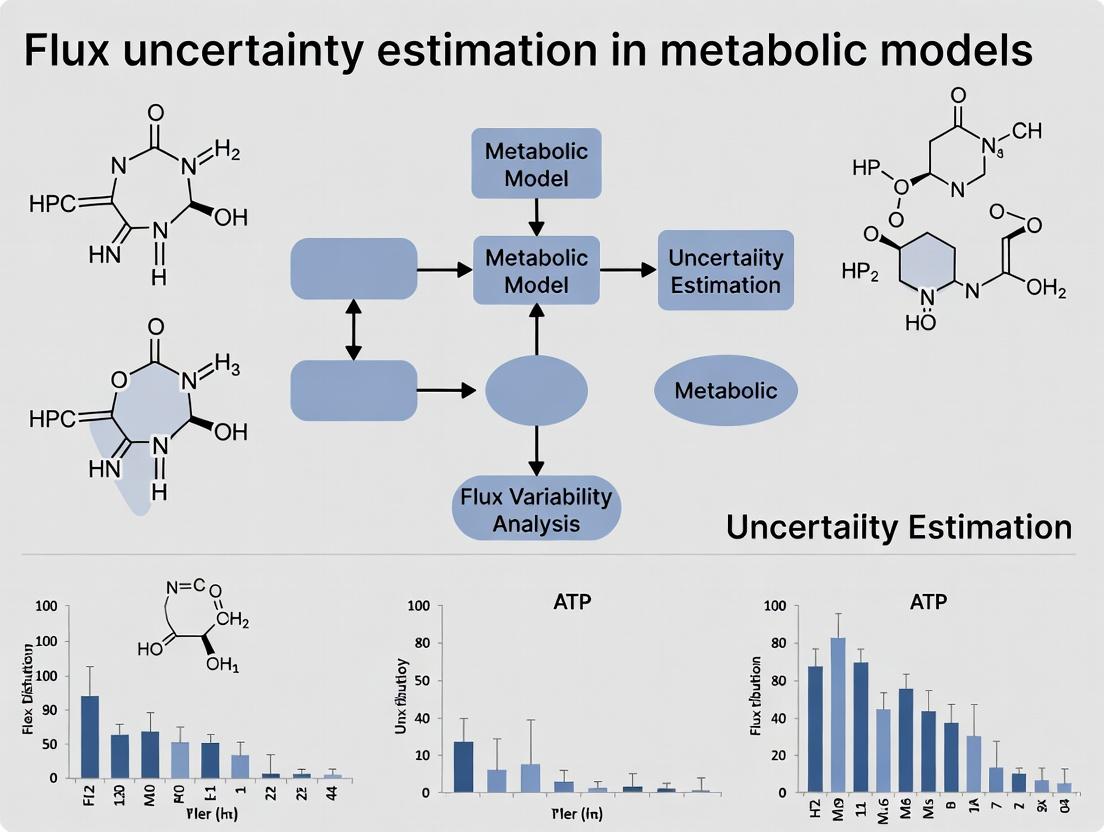

Navigating Biological Uncertainty: A Comprehensive Guide to Flux Uncertainty Estimation in Metabolic Models for Biomedical Research

This article provides a comprehensive guide to flux uncertainty estimation for researchers, scientists, and drug development professionals working with constraint-based metabolic models.

Navigating Biological Uncertainty: A Comprehensive Guide to Flux Uncertainty Estimation in Metabolic Models for Biomedical Research

Abstract

This article provides a comprehensive guide to flux uncertainty estimation for researchers, scientists, and drug development professionals working with constraint-based metabolic models. We cover the foundational principles of why flux variability exists, detailing the mathematical and biological sources of uncertainty. We explore key methodological approaches—from classical Flux Variability Analysis (FVA) to modern sampling and Bayesian techniques—and their practical applications in identifying robust drug targets and engineering metabolic pathways. The guide includes troubleshooting strategies for computational challenges and data integration, followed by a comparative analysis of validation frameworks and software tools. By synthesizing current methodologies and best practices, this article equips researchers to quantify and interpret flux uncertainty, thereby enhancing the reliability of model predictions for systems biology and translational medicine.

What is Flux Uncertainty? Defining the Inherent Variability in Metabolic Network Predictions

Constraint-Based Reconstruction and Analysis (COBRA) is a cornerstone of systems biology for modeling metabolic networks. These models, typically built around a stoichiometric matrix (S), enable the prediction of metabolic fluxes under steady-state constraints (S⋅v = 0) and bounds on reaction capacities. However, a critical limitation is the treatment of key parameters—such as enzyme kinetic constants, nutrient uptake rates, and thermodynamic data—as precise, known values. In reality, these parameters are subject to significant biological and experimental uncertainty. Quantifying this "Flux Uncertainty" is therefore an essential step toward producing robust, biologically interpretable predictions, particularly for applications in metabolic engineering and drug target identification.

Performance Comparison: Uncertainty Quantification Methodologies

Different computational frameworks have been developed to quantify flux uncertainty. The table below compares three prominent approaches used in metabolic modeling research.

Table 1: Comparison of Flux Uncertainty Quantification Methods

| Method | Core Principle | Advantages | Limitations | Typical Use Case |

|---|---|---|---|---|

| Flux Variability Analysis (FVA) | Maximizes/minimizes each reaction flux within feasible space. | Simple; identifies rigid and flexible reactions; no distribution assumed. | Does not provide probability distributions; only gives bounds. | Identifying essential reactions and network flexibility. |

| Monte Carlo Sampling | Randomly samples flux distributions from the feasible solution space. | Provides full probability distributions for all fluxes; highly flexible. | Computationally intensive; convergence can be slow for large models. | Probabilistic comparison of flux states (e.g., healthy vs. disease). |

| Bayesian Metabolic Flux Analysis | Uses priors and likelihoods to derive posterior flux distributions. | Incorporates prior knowledge (e.g., 13C labeling data); rigorous uncertainty estimates. | Computationally complex; requires specification of prior distributions. | Integrating multi-omics data for high-confidence flux estimation. |

Supporting Experimental Data: A benchmark study on the E. coli core model evaluated these methods under simulated uncertain bounds for ATP maintenance (ATPm). The results, summarized in Table 2, highlight the practical implications of each approach.

Table 2: Experimental Benchmark Results on E. coli Core Model with Uncertain ATPm Bounds

| Method | Computational Time (s) | Estimated Growth Rate Range (mmol/gDW/h) | Identified Essential Reactions (Count) | Key Insight |

|---|---|---|---|---|

| FVA | 0.8 | [0.65, 0.88] | 45 | Confirms broad feasible range but no likelihood. |

| Monte Carlo (ACHR) | 152.3 | Mean: 0.78 ± 0.06 | N/A | 95% of samples show growth > 0.7, indicating robust prediction. |

| Bayesian (MCMC) | 421.7 | Mean: 0.76 ± 0.04 | N/A | With informative prior, uncertainty range reduced by ~35%. |

Detailed Experimental Protocols

Protocol 1: Flux Variability Analysis with Parameter Perturbation

- Model Loading: Load a genome-scale metabolic model (e.g., in SBML format) into a COBRA toolbox (e.g., COBRApy, MATLAB COBRA).

- Define Baseline Constraints: Set medium constraints and an objective function (e.g., biomass maximization).

- Introduce Uncertainty: For a target parameter (e.g., ATP maintenance lower bound

ATPm_LB), define a plausible range (e.g., 3.0 to 8.0 mmol/gDW/h). - Iterative FVA: For n steps across the range:

- Fix

ATPm_LBto a value within the range. - Perform FVA to compute the min/max flux for all reactions.

- Record the resulting range for the objective function.

- Fix

- Analysis: Aggregate results to determine which reaction flux bounds are sensitive to the uncertain parameter.

Protocol 2: Monte Carlo Sampling for Flux Probability Distributions

- Define the Feasible Space: Set all model constraints, including variable bounds for uncertain parameters defined as uniform distributions.

- Generate Samples: Use a sampling algorithm (e.g., Artificial Centering Hit-and-Run - ACHR) to generate a set (e.g., 10,000) of feasible flux distributions.

- Convergence Check: Ensure sample quality by analyzing convergence diagnostics (e.g., potential scale reduction factor).

- Post-processing: For each reaction of interest, compute statistics (mean, standard deviation, percentiles) from the sample ensemble to characterize its flux distribution.

- Validation: Compare the sampled growth rate distribution against experimental data ranges.

Pathway and Workflow Visualizations

Flux Uncertainty Estimation Workflow

Impact of Uncertainty on Flux Solution Space

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools for Constraint-Based Modeling & Uncertainty Quantification

| Item | Function in Research | Example/Note |

|---|---|---|

| Genome-Scale Metabolic Model (GEM) | The core scaffold representing all known metabolic reactions for an organism. | Recon3D (human), iML1515 (E. coli). Available from repositories like BioModels. |

| COBRA Software Suite | Provides algorithms for simulation (FBA, FVA) and model manipulation. | COBRApy (Python), COBRA Toolbox (MATLAB). |

| Sampling Algorithm | Generates random, thermodynamically feasible flux distributions from the model. | Artificial Centering Hit-and-Run (ACHR), OptGpSampler. |

| Markov Chain Monte Carlo (MCMC) Engine | For Bayesian inference, samples from complex posterior distributions of fluxes. | Stan, PyMC3, custom implementations in MATLAB/Python. |

| Isotope Labeling Data (13C) | Experimental data used to constrain fluxes and reduce uncertainty via MFA. | Measured mass isotopomer distributions from GC-MS or LC-MS. |

| Flux Uncertainty Visualization Tool | Plots probability distributions, confidence intervals, and flux ranges. | Custom scripts in Python (Matplotlib, Seaborn) or R (ggplot2). |

Flux uncertainty estimation in constraint-based metabolic modeling is critical for reliable predictions in systems biology and drug development. This guide compares the impact and quantification of three primary uncertainty sources, supported by experimental and computational data.

The table below summarizes the origin, propagation, and mitigation strategies for key uncertainty types in metabolic network models.

Table 1: Comparative Analysis of Primary Uncertainty Sources

| Uncertainty Source | Primary Origin | Typical Magnitude (CV%) in Flux Prediction | Propagates to Network-Scale? | Primary Mitigation Strategy |

|---|---|---|---|---|

| Thermodynamic (ΔG'°) | Inexact standard Gibbs free energy of reaction. | 15-40% for reactions with poorly estimated ΔG'° | Yes, via reaction directionality constraints. | Component contribution method; NMR-based equilibria data. |

| Enzyme Kinetics (kcat, Km) | In-vitro vs. in-vivo activity differences; promiscuity. | 50-300% for specific pathway fluxes. | Often local, but can propagate if rate-limiting. | Multi-omic integration (proteomics, metabolomics). |

| Network Topology | Gaps (missing reactions); incorrect gene-protein-reaction rules. | Qualitative (on/off switches for pathways). | Yes, fundamentally alters solution space. | Gap-filling algorithms; comparative genomics. |

Experimental Protocols for Uncertainty Quantification

Protocol 1: Isothermal Titration Calorimetry (ITC) for Thermodynamic Parameter Estimation

Objective: Determine precise ΔG, ΔH, and ΔS for a biochemical reaction. Method:

- Purify enzyme and substrate to homogeneity.

- Load reference and sample cells with buffer. Perform a baseline titration (substrate into buffer).

- Load enzyme into the sample cell. Titrate with small increments of substrate solution.

- Measure heat flow after each injection until saturation is reached.

- Fit the integrated heat data to a binding model (e.g., one-set-of-sites) using dedicated software (e.g., MicroCal PEAQ-ITC Analysis) to obtain ΔG.

Protocol 2: Bayesian Inference of Kinetic Parameters from Multi-Omic Data

Objective: Constrain in-vivo kinetic parameters (kcat, Km) and their uncertainty. Method:

- Data Acquisition: Collect paired intracellular metabolomics (LC-MS) and absolute proteomics (LC-MS/MS with spike-in standards) data under multiple steady-state conditions.

- Model Formulation: Use a mechanistic rate law (e.g., Michaelis-Menten) for each reaction.

- Parameter Sampling: Employ a Markov Chain Monte Carlo (MCMC) algorithm (e.g., PyMC3) to sample from the posterior distribution of parameters consistent with the metabolite and enzyme concentration data.

- Validation: Use held-out flux data (from 13C-MFA) to validate the posterior parameter distributions.

Protocol 3: Topology Gap-Filling via Growth Phenotype Screening

Objective: Identify and correct missing reactions in a genome-scale model (GEM). Method:

- Knockout Screening: Use a transposon mutant library to create single-gene knockout strains.

- Phenotype Array: Culture wild-type and knockout strains in a Biolog phenotype microarray plate with hundreds of carbon/nitrogen sources.

- In Silico Simulation: Simulate growth on all conditions using the draft GEM.

- Discrepancy Analysis: Flag conditions where in-silico prediction (no growth) contradicts experimental data (growth).

- Candidate Reaction Addition: Propose reactions from databases (e.g., MetaCyc) to bridge the metabolic gap and restore growth prediction. Validate with gene homology evidence.

Visualizations

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Reagents and Tools for Flux Uncertainty Research

| Item | Function & Application |

|---|---|

| 13C-Labeled Substrates (e.g., [U-13C]Glucose) | Enables experimental flux determination via 13C Metabolic Flux Analysis (13C-MFA), the gold standard for validating in-silico flux predictions. |

| Absolute Quantification Proteomics Kit (e.g., Spike-in TMT) | Allows precise measurement of enzyme concentrations per cell, a critical input for kinetically constrained models. |

| Biolog Phenotype MicroArray Plates | High-throughput experimental screening of microbial growth on diverse substrates to test and refine metabolic network topology. |

| Thermodynamic Database (e.g., eQuilibrator) | Web-based tool for estimating reaction Gibbs free energies (ΔG'°) and uncertainty, incorporating pH and ionic strength. |

| Bayesian Inference Software (e.g., PyMC3, Stan) | Probabilistic programming frameworks used to sample parameter distributions and quantify prediction uncertainty. |

| Gap-Filling Algorithm Suite (e.g., ModelSEED, CarveMe) | Computational pipelines that propose missing reactions in draft genome-scale models based on phenotypic data. |

Metabolic network analysis relies on defining the solution space of possible flux distributions, a concept central to flux uncertainty estimation. This guide compares the performance and output of leading computational approaches for characterizing this space: Flux Variability Analysis (FVA), Flux Sampling, and Linear Programming (LP)/Quadratic Programming (QP). These methods are evaluated for their efficacy in deriving flux cones and feasible ranges within genome-scale metabolic models (GSMMs).

Performance Comparison of Solution Space Analysis Methods

Table 1: Comparative Analysis of Key Computational Methods

| Method | Core Function | Output Type | Computational Demand | Key Strength | Primary Limitation in Uncertainty Estimation | Best For |

|---|---|---|---|---|---|---|

| Flux Variability Analysis (FVA) | Calculates min/max possible flux for each reaction under optimality. | Feasible range (interval). | Moderate. Must be run sequentially for each reaction. | Guarantees absolute bounds for each flux. Identifies rigid (fixed) reactions. | Only provides bounds, not a probability distribution within the range. Assumes optimal biomass. | Identifying essential reactions and network gaps. |

| (Markov Chain) Monte Carlo Sampling | Generates statistically uniform set of feasible flux distributions. | A sampled set of flux vectors representing the solution space. | High. Requires many iterations for convergence. | Characterizes the entire solution space. Enables probability distributions for each flux. | Convergence can be slow for large models. Results are statistical, not absolute bounds. | Studying correlation between fluxes and predicting metabolite concentrations. |

| Linear/Quadratic Programming | Finds a single, optimal flux distribution (e.g., max growth). | A single flux vector (point solution). | Low (for a single solution). | Fast identification of a theoretically optimal state. | Provides no insight into flux uncertainty or alternative optimal/sub-optimal states. | Constraining models with experimental data (pFBA). |

Supporting Experimental Data: A benchmark study on the E. coli iJO1366 model (1,805 reactions) under glucose-aerobic conditions illustrates key differences. FVA revealed that while the optimal growth rate was 0.92 hr⁻¹, over 45% of network reactions carried non-zero flux variability, with 120 reactions having a feasible range spanning both positive and negative directions (reversibility). Subsequent Monte Carlo sampling (using the optGpSampler algorithm) of the solution space at 95% optimality (growth ≥ 0.874 hr⁻¹) generated 50,000 flux distributions in 12 hours. Analysis showed that for central carbon metabolism reactions like phosphofructokinase (PFK), the flux distribution was unimodal and tightly constrained (coeff. of variation < 10%). In contrast, for peripheral transport reactions, sampled fluxes exhibited multimodal distributions, highlighting significant uncertainty not captured by FVA bounds alone.

Experimental Protocols for Key Analyses

Protocol 1: Performing Flux Variability Analysis (FVA)

- Model Loading: Load a genome-scale metabolic model (e.g., in COBRApy or MATLAB COBRA Toolbox).

- Objective Definition: Set the biological objective (e.g., biomass reaction).

- Primary Optimization: Solve a Linear Programming (LP) problem to find the maximum objective value (e.g., maximal growth rate,

maxObj). - Flux Range Calculation: For each reaction

iin the model: a. Fix the objective reaction's flux tomaxObj(or a fraction thereof for robustness analysis). b. Solve an LP to minimize the flux through reactioni, record value asVmin_i. c. Solve an LP to maximize the flux through reactioni, record value asVmax_i. d. The pair[Vmin_i, Vmax_i]defines the feasible flux range for reactioniunder the defined optimum.

Protocol 2: Monte Carlo Sampling of the Flux Solution Space

- Preparation: Define the model and constraints (medium, uptake rates). Optionally, constrain the objective flux to a desired fraction of its maximum.

- Initialization: Use an artificial centering hit-and-run (ACHR) or optGpSampler algorithm to generate a warm-up set of points.

- Sampling: Run the Markov chain for a defined number of steps (e.g., 100,000). Each step proposes a random direction in the flux cone and moves within the constrained polytope to generate a new feasible flux distribution.

- Convergence Check: Monitor convergence metrics (e.g., potential scale reduction factor) across multiple chains to ensure the sample set is representative.

- Analysis: Analyze the ensemble of flux vectors to compute means, standard deviations, correlations, and probability distributions for each reaction flux.

Visualizing Solution Space Concepts

Diagram 1: From Constraints to Flux Cone (54 chars)

Diagram 2: FVA vs. Sampling Output (45 chars)

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Resources for Flux Solution Space Analysis

| Item | Function/Description | Example/Format |

|---|---|---|

| Genome-Scale Metabolic Model (GSMM) | A structured, computational representation of an organism's metabolism. The foundational "reagent" for all analysis. | Recon3D (human), iJO1366 (E. coli), Yeast8. Available in SBML (.xml) format. |

| COBRA Toolbox | The primary software suite for constraint-based reconstruction and analysis. Provides functions for FVA, sampling, and simulation. | MATLAB-based. Alternative: COBRApy (Python implementation). |

| Linear Programming (LP) Solver | Computational engine for solving the optimization problems at the core of FVA and LP. | Gurobi, CPLEX, or open-source alternatives like GLPK or CLP. |

| Flux Sampling Software | Specialized packages for efficient Monte Carlo sampling of high-dimensional flux cones. | optGpSampler (MATLAB), matlab-RAVEN sampler, CobraSampler (Python). |

| Stoichiometric Matrix (S) | The mathematical core of the model, encoding reaction metabolite relationships. Derived directly from the GSMM. | A sparse m x n matrix (m metabolites, n reactions), typically accessed via COBRA tools. |

| Experimental Flux Data | Used to constrain the solution space, reducing uncertainty. Includes uptake/secretion rates or measured fluxes (e.g., from ¹³C-MFA). | Typically applied as lower/upper bounds (lb, ub) on specific exchange or internal reactions. |

Flux Balance Analysis (FBA) is a cornerstone of constraint-based metabolic modeling, enabling the prediction of growth rates, essential genes, and optimal metabolic states. However, standard FBA provides a single-point solution, ignoring the inherent uncertainty in flux distributions arising from biological variability, model gaps, and parameter estimation. Flux uncertainty estimation quantifies this variability, transforming predictions from deterministic values into probability distributions. This paradigm shift has profound implications for robustly identifying metabolic engineering targets and predicting gene essentiality, where false positives can derail experimental campaigns. This guide compares tools and methodologies for uncertainty-aware metabolic analysis.

Comparative Analysis: Tools for Uncertainty Estimation in Metabolic Models

Table 1: Comparison of Software Tools for Flux Uncertainty Analysis

| Tool / Platform | Core Methodology | Key Outputs for Uncertainty | Experimental Validation Cited | Computational Demand | Integration with FBA |

|---|---|---|---|---|---|

| MATLAB’s COBRA Toolbox (snmfa, variability) | Sampling-based (e.g., Artificial Centering Hit-and-Run), Flux Variability Analysis (FVA) | Confidence intervals on fluxes, sampled flux distributions. | Used to predict ({}^{13}C)-MFA feasible flux ranges in E. coli (Gopalakrishnan et al., 2019). | High for sampling, Moderate for FVA. | Native. |

| Python’s cobrapy / meioPy | Flux Variability Analysis (FVA), Monte Carlo sampling of parameters. | Min/max flux bounds, distributions from parameter perturbations. | Applied to identify robust S. cerevisiae knockout strategies under media uncertainty (Meadows et al., 2016). | Moderate to High. | Native. |

| CARVEME (with MCMC sampling) | Bayesian sampling of the flux solution space post model reconstruction. | Posterior probability distributions of reaction fluxes. | Validated against gene essentiality data for B. subtilis; improved accuracy over point estimates. | High. | Integrated pipeline. |

| ** | Ensemble Modeling (EM) | A set of stoichiometrically feasible models representing alternative network states. | Ensemble of E. coli models used to predict conditional essential genes with >90% precision (Bordbar et al., 2014). | Very High. | Requires custom framework. |

| ** | ** | Uniform random sampling of the flux space to characterize solution space volume. | Sampled E. coli iJO1366 ensemble predicted thermodynamically feasible flux ranges (Schellenberger & Palsson, 2009). | High. | Available in COBRA. |

Experimental Protocols for Validating Uncertainty-Driven Predictions

Protocol 1: Validating Gene Essentiality Predictions Using CRISPRi-FlowSeq

Aim: To experimentally test if genes identified as "essential with high confidence" (low uncertainty) versus "contextually essential" (high uncertainty) by flux uncertainty analysis are accurate.

- In Silico Prediction: Perform Flux Variability Analysis (FVA) on a genome-scale model (GEM) under defined conditions. Classify gene essentiality:

- High Confidence Essential: Gene knockout reduces maximum possible growth rate (FVA max) to near zero.

- High Uncertainty: Gene knockout leads to a wide range of possible growth rates (large gap between FVA min and max).

- Strain Library Construction: Design a CRISPR interference (CRISPRi) library targeting genes from both categories and a set of non-essential controls.

- Chemostat Cultivation: Grow the pooled library in a controlled bioreactor under the condition matching the FBA simulation.

- Sampling & Sequencing: Extract genomic DNA at multiple time points, amplify barcodes, and perform deep sequencing (FlowSeq).

- Data Analysis: Calculate the depletion rate of each guide RNA over time. Genes with significant guide depletion are experimentally essential. Compare to model predictions stratified by uncertainty.

Protocol 2: Testing Robust Metabolic Engineering Targets

Aim: To compare growth and product yield of strains engineered based on deterministic FBA vs. uncertainty-weighted FBA predictions.

- Target Identification:

- Deterministic Strategy: Use parsimonious FBA (pFBA) to identify a single, optimal knockout set for maximizing product yield (e.g., succinate).

- Uncertainty-Aware Strategy: Use OptKnock coupled with FVA or sampling. Filter solutions to those where the product flux remains high and growth flux remains viable (>10% of wild-type) across >95% of the sampled flux space.

- Strain Construction: Build E. coli knockout strains for both strategies using Lambda Red recombinering.

- Fed-Batch Fermentation: Cultivate strains in parallel bioreactors under defined, controlled conditions.

- Metabolite Analysis: Measure growth (OD600), substrate consumption (HPLC), and product titers (GC-MS) at regular intervals.

- Validation: The uncertainty-aware strategy is validated if it produces a more consistent yield across biological replicates and scales more predictably to larger bioreactors than the deterministic strategy.

Visualization of Key Concepts and Workflows

Flux Uncertainty Analysis Workflow

Deterministic vs. Uncertainty-Aware Model Prediction

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Reagents and Tools for Validating Uncertainty Predictions

| Item | Function in Validation | Example Product / Kit |

|---|---|---|

| Genome-Scale Model (GEM) | The in silico foundation for all flux and uncertainty simulations. | BiGG Models (e.g., iML1515 for E. coli, iTO977 for human), ModelSeed. |

| Flux Sampling & FVA Software | Performs the core uncertainty quantification calculations. | COBRA Toolbox (MATLAB), cobrapy/memote (Python), CellNetAnalyzer. |

| CRISPRi Library Synthesis Kit | For constructing pooled gene knockdown libraries to test essentiality predictions. | Twist Bioscience Oligo Pools, Arrayed sgRNA libraries (Addgene). |

| Chemostat/Bioreactor System | Provides the controlled, steady-state environment crucial for comparing model predictions to experimental phenotypes. | DASbox Mini Bioreactor System (Eppendorf), BioFlo & Applikon systems. |

| NGS Library Prep Kit | Prepares sequencing libraries from pooled genetic screens (e.g., FlowSeq). | Illumina Nextera XT, NEBNext Ultra II DNA. |

| Metabolite Analysis Standards | Quantitative standards for validating predicted extracellular and intracellular flux states via metabolomics. | Mass Spectrometry Metabolite Libraries (IROA Technologies), Biocrates kits. |

| ({}^{13})C-Labeled Substrates | Gold standard for experimentally measuring in vivo metabolic fluxes (({}^{13}C-MFA) to validate uncertainty ranges. | >99% ({}^{13})C-Glucose (Cambridge Isotope Laboratories), ({}^{13})C-Glutamine. |

In metabolic modeling, Flux Balance Analysis (FBA) is a cornerstone technique for predicting steady-state metabolic fluxes. The foundational concepts of an objective function (a mathematical representation of a cellular goal, like maximizing biomass) and constraints (physicochemical and environmental boundaries) are central to this framework. A key outcome of this formulation is the possibility of multiple optimal solutions—distinct flux distributions that yield the same optimal objective value. This phenomenon is of critical importance in the broader thesis on flux uncertainty estimation, as it directly informs the range of biologically possible states a network can occupy, impacting predictions in metabolic engineering and drug target identification.

Theoretical Comparison: Linear Programming Outcomes

FBA is typically formulated as a linear programming (LP) problem. The table below compares possible solution states arising from different configurations of the objective function and constraints.

| Solution Type | Objective Function Value | Flux Solution Space | Cause in Metabolic Context | Implication for Flux Uncertainty |

|---|---|---|---|---|

| Unique Optimal Solution | Single, unique maximum/minimum. | A single point. | Well-constrained problem with a non-degenerate optimum. | Low uncertainty; predicted flux distribution is precise. |

| Multiple Optimal Solutions | Same optimal value. | A bounded convex set of flux vectors (a solution space). | Degeneracy due to redundant pathways or underdetermined network. | High uncertainty; a spectrum of flux distributions is equally optimal. |

| Unbounded Solution | Infinite (in maximization). | Unbounded space. | Missing a critical thermodynamic or capacity constraint. | Model is biologically infeasible; requires constraint refinement. |

| Infeasible Solution | No solution exists. | Null. | Constraints are contradictory (e.g., demand exceeds maximum possible production). | Model formulation error; pathway gaps or incorrect bounds. |

The existence of multiple optimal solutions is the primary source of solution space uncertainty. Techniques like Flux Variability Analysis (FVA) are subsequently employed to quantify the permissible range of each flux within this optimal space.

Experimental Comparison: Quantifying Solution Space Degeneracy

To illustrate, we compare the application of FBA and FVA on a core metabolic model (E. coli iJO1366) under two conditions. The experimental protocol is as follows:

Experimental Protocol:

- Model Curation: Load the genome-scale metabolic model in a constraint-based reconstruction and analysis toolbox (e.g., COBRApy, CobraToolbox).

- Constraint Definition:

- Condition A (Glucose Minimal Medium): Set glucose uptake to -10 mmol/gDW/hr, oxygen uptake to unlimited (-1000). Apply standard exchange bounds for other nutrients.

- Condition B (Oxygen-Limited): Set glucose uptake to -10 mmol/gDW/hr, oxygen uptake to -5 mmol/gDW/hr.

- Objective Function: Define biomass production as the linear objective to maximize.

- Flux Balance Analysis (FBA): Solve the LP problem to obtain one optimal flux distribution and the maximum biomass yield.

- Flux Variability Analysis (FVA): For each reaction in the model, calculate the minimum and maximum possible flux while maintaining the optimal biomass yield (e.g., at 99% of the optimum). This maps the multiple optimal solution space.

- Data Analysis: Compare the number of reactions with flexible fluxes (range > 0.01) between conditions.

Results Summary: The quantitative results from the simulation are summarized below.

| Condition | Optimal Biomass (1/hr) | Reactions with Non-Unique Flux (FVA range > 0.01) | Key Flexible Pathway Identified |

|---|---|---|---|

| A: Aerobic Glucose | 0.98 | ~550 | Pentose phosphate pathway / TCA cycle redundancies. |

| B: Oxygen-Limited | 0.42 | ~220 | Mixed-acid fermentation (acetate, lactate, formate exchange). |

Condition A exhibits a larger degenerate solution space, reflected in the higher number of reactions with flexible fluxes. This demonstrates how environmental constraints (oxygen availability) reduce the scope of multiple optimal solutions by eliminating redundant metabolic strategies.

Visualization of Solution Spaces and Analysis Workflow

Title: FBA Workflow Leading to Multiple Optimal Solutions

Title: Conceptual Diagram of Unique vs. Multiple Optimal Solutions

The Scientist's Toolkit: Research Reagent Solutions for Flux Analysis

Essential computational tools and databases for conducting FBA and investigating multiple optimal solutions.

| Tool/Resource | Type | Primary Function in Flux Analysis |

|---|---|---|

| COBRA Toolbox | Software Suite | MATLAB-based platform for constraint-based reconstruction and analysis, including FBA and FVA. |

| COBRApy | Software Library | Python implementation of COBRA methods, enabling flexible scripting and integration with machine learning pipelines. |

| MEMOTE | Quality Assurance Tool | Evaluates and reports on the quality of genome-scale metabolic models to ensure robust simulations. |

| BiGG Models | Database | Curated repository of genome-scale metabolic models with standardized notation, essential for reproducible research. |

| Gurobi/CPLEX | Solver Software | High-performance mathematical optimization solvers for efficiently solving large LP problems in FBA. |

| AGORA | Model Resource | Resource of manually curated, genome-scale metabolic models of human gut microbiota, crucial for host-drug-microbiome studies. |

How to Estimate Flux Uncertainty: Core Algorithms and Their Biomedical Applications

Flux Variability Analysis (FVA) is a cornerstone technique for characterizing flux uncertainty within constraint-based metabolic models. It computes the minimum and maximum achievable flux for every reaction in a network while maintaining optimal (or near-optimal) objective function value, such as biomass production. This provides the plausible range of flux distributions, a critical insight for metabolic engineering and drug target identification.

Principles of FVA While Flux Balance Analysis (FBA) identifies a single, optimal flux distribution, FVA acknowledges the inherent multiplicity of optimal solutions due to network redundancy. It solves two linear programming problems per reaction: one to minimize and one to maximize the flux through that reaction, subject to the constraints: ( \mathbf{S \cdot v = 0} ), ( \mathbf{v{min} \le v \le v{max}} ), and ( \mathbf{c^T v \ge \alpha \cdot Z{opt}} ), where ( Z{opt} ) is the optimal objective value from FBA and ( \alpha ) (typically 0.9-1.0) defines the required fraction of optimality.

FVA Implementation: A Step-by-Step Protocol The following protocol uses the COBRA Toolbox in MATLAB/Python, the most common platform.

- Model Loading & Preparation: Load a genome-scale metabolic model (e.g., Recon, iJO1366). Verify mass and charge balance. Define constraints (( \mathbf{v{min}, v{max}} )) for exchange reactions based on experimental conditions.

- Perform FBA: Solve ( \max(\mathbf{c^T v}) ) subject to network constraints to obtain ( Z_{opt} ).

- Set Optimality Fraction: Define the parameter ( \alpha ). A value of 1.0 computes variability within the strictly optimal solution space. A value of 0.95 is often used to explore sub-optimal spaces.

- Execute FVA: For each reaction ( i ), sequentially solve:

- ( \min(vi) ) subject to ( \mathbf{c^T v \ge \alpha \cdot Z{opt}} )

- ( \max(vi) ) subject to ( \mathbf{c^T v \ge \alpha \cdot Z{opt}} )

(Optimization loops are handled efficiently by the

fluxVariabilityfunction).

- Analyze Results: Identify reactions with zero variability (essential, rigid pathways), high variability (flexible, non-unique fluxes), and blocked reactions (min=max=0).

Workflow for Performing Flux Variability Analysis

Performance Comparison: FVA vs. Alternative Flux Uncertainty Methods

FVA is one of several methods for exploring flux solution spaces. The table below compares its performance against key alternatives.

Table 1: Comparative Analysis of Methods for Flux Uncertainty Estimation

| Method | Primary Purpose | Computational Cost | Key Output | Advantages | Disadvantages |

|---|---|---|---|---|---|

| Flux Variability Analysis (FVA) | Determine min/max flux per reaction at optimality. | Moderate (2N LP problems). | Flux ranges for all reactions. | Identifies flexible/rigid reactions; finds alternate optima. | Does not provide probability distributions; assumes uniform sampling within bounds. |

| Uniform Random Sampling | Statistically characterize the entire feasible solution space. | Very High (Thousands of LP/MC problems). | Probability distribution of fluxes. | Provides global view of all possible states. | Extremely computationally intensive; may undersample corners of polytope. |

| Parsimonious FBA (pFBA) | Find a unique, optimal flux distribution minimizing total enzyme mass. | Low (One QP problem). | A single, parsimonious flux distribution. | Yields a biologically realistic, unique solution. | Obscures uncertainty; may miss valid optimal states. |

| MoMA (Minimization of Metabolic Adjustment) | Predict flux distribution after gene knockout. | Low (One QP problem). | A single sub-optimal flux distribution. | Useful for knockout prediction. | Only analyzes a specific perturbation, not global uncertainty. |

Conceptual Relationship Between FBA, FVA, and Sampling Outputs

Experimental Protocol for Validating FVA Predictions

Objective: Validate FVA-predicted flux ranges for key central metabolic reactions using isotopic tracer (¹³C) experiments.

- In Silico FVA: Perform FVA (α=0.99) on a model of the organism (e.g., E. coli) under defined growth conditions (e.g., glucose minimal media).

- Reaction Selection: Identify target reactions (e.g., PGI, GAPD, AKGDH) with predicted non-zero variability.

- Culture & Isotope Labeling: Grow the organism in the defined medium with [1-¹³C]glucose as the sole carbon source. Harvest cells at mid-exponential phase.

- Mass Spectrometry (GC-MS): Extract and derivatize intracellular metabolites. Measure mass isotopomer distributions (MIDs) of target metabolites (e.g., G6P, F6P, 3PG).

- ¹³C-Metabolic Flux Analysis (¹³C-MFA): Use the measured MIDs to compute in vivo flux distributions by fitting to the network model. Obtain confidence intervals for each flux.

- Validation: Compare the experimental flux confidence intervals from ¹³C-MFA with the in silico flux ranges predicted by FVA. A successful validation occurs when the experimental intervals fall within or significantly overlap the FVA-predicted ranges.

The Scientist's Toolkit: Key Reagents & Solutions for FVA & Validation

Table 2: Essential Research Reagents and Computational Tools

| Item / Solution | Function / Purpose | Example Product / Software |

|---|---|---|

| Genome-Scale Metabolic Model | In silico representation of metabolism for FBA/FVA. | Recon3D, AGORA, ModelSEED database. |

| Constraint-Based Modeling Suite | Software to perform FVA and related analyses. | COBRA Toolbox (MATLAB/Python), Cobrapy (Python), CellNetAnalyzer. |

| LP/QP Solver | Computational engine to solve optimization problems. | Gurobi, IBM CPLEX, GLPK. |

| ¹³C-Labeled Substrate | Tracer for experimental flux determination via ¹³C-MFA. | [1-¹³C]Glucose, [U-¹³C]Glucose (Cambridge Isotope Laboratories). |

| Quenching Solution | Rapidly halts metabolism to capture in vivo metabolite states. | 60% Methanol buffered with HEPES or Tris (cold, -40°C). |

| Derivatization Reagent | Prepares polar metabolites for GC-MS analysis. | N-methyl-N-(trimethylsilyl)trifluoroacetamide (MSTFA). |

| ¹³C-MFA Software | Calculates fluxes from isotopic labeling data. | INCA, IsoTool, 13CFLUX2. |

| Data Visualization Tool | Creates plots of flux ranges and comparisons. | ggplot2 (R), Matplotlib (Python). |

Within the broader thesis on flux uncertainty estimation in metabolic models, the quantification of feasible flux ranges is fundamental. While Flux Variability Analysis (FVA) has been the standard, it provides only axis-aligned bounds, potentially missing the complex correlations and shape of the high-dimensional solution space. This guide compares Monte Carlo Sampling (MCS) for exploring these spaces with traditional FVA and related sampling alternatives.

Comparative Performance Analysis

Table 1: Method Comparison for Solution Space Exploration

| Feature / Metric | Flux Variability Analysis (FVA) | Artificial Centering Hit-and-Run (ACHR) | OptGpSampler | Coordinate Hit-and-Run with Rounding (CHRR) |

|---|---|---|---|---|

| Primary Objective | Determine min/max per-reaction flux bounds. | Generate uniformly distributed points from the solution space. | Generate uniformly distributed points using a parallelized, optimization-based approach. | Generate uniformly distributed points with provable polynomial-time mixing. |

| Space Exploration | Limited; axis-aligned extremes. | Good; explores interior but can be slow to mix. | Excellent; efficient for large models, high uniformity. | Excellent; theoretically robust uniformity. |

| Computational Cost | Low (two LPs per reaction). | Moderate to High (depends on steps and warm-up). | High (requires many quadratic programs) but parallelized. | Very High (pre-processing rounding step). |

| Uncertainty Insight | Marginal flux ranges only. | Full correlation structure, probability densities. | Full correlation structure, probability densities. | Full correlation structure, probability densities. |

| Model Scalability | Excellent for genome-scale models. | Good for mid-sized models. | Good for large models due to parallelism. | Challenging for very large models due to rounding. |

| Implementation Commonality | COBRA Toolbox, cobrapy. | COBRA Toolbox (sampleCbModel). | cobrapy, standalone Python package. | Available in specialized packages (e.g., PolyRound). |

Table 2: Experimental Benchmark on E. coli Core Model

| Method | Sampling Time (s, 10k samples) | Mean ESS* (Effective Sample Size) per reaction | Max Gelman-Rubin Diagnostic (R-hat) | Correlation Capture (vs. CHRR) |

|---|---|---|---|---|

| FVA | 1.2 | N/A | N/A | None |

| ACHR | 45 | 850 | 1.15 | 0.92 |

| OptGpSampler | 28 | 920 | 1.05 | 0.98 |

| CHRR | 310 | 990 | 1.01 | 1.00 |

Higher ESS indicates more independent samples. *Closer to 1.00 indicates better convergence.

Detailed Experimental Protocols

Protocol 1: Standard Flux Variability Analysis (FVA)

- Objective: For each reaction

iin the model, solve two linear programming (LP) problems. - Minimization: Minimize the flux

v_isubject to the constraintsSv = 0,lb ≤ v ≤ ub, and any biomass optimization constraint. - Maximization: Maximize the flux

v_iunder the same constraints. - Output: The vector of minimum fluxes and the vector of maximum fluxes define the axis-aligned bounding box of the solution space.

Protocol 2: Monte Carlo Sampling with ACHR

- Pre-processing: Apply the loopless constraints if required. Identify an initial center point (often using the average of FVA bounds).

- Warm-up Phase: Generate a set of "walkers" by moving from the center in random directions to the boundary. This set initializes the sampling chain.

- Sampling Loop: a. Randomly select a "walker" from the current set. b. Define a line through this point in a random direction. c. Compute the intersection points of this line with the solution space polytope boundaries. d. Sample a new point uniformly along the line segment between these intersections. e. Replace the old walker with this new point in the set.

- Thinning & Convergence: Repeat the loop for many iterations, discarding early samples (burn-in). Assess convergence using metrics like the Gelman-Rubin diagnostic across multiple chains.

Visualizing the Workflow

Title: FVA vs Monte Carlo Sampling Workflow Comparison

Title: Conceptual View of FVA Bounds vs MCS Points

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools for Flux Uncertainty Estimation

| Item / Solution | Function in Research |

|---|---|

| COBRA Toolbox (MATLAB) | Provides the foundational fluxVariability function and the sampleCbModel function implementing ACHR sampling. |

| cobrapy (Python) | A Python implementation offering FVA, ACHR, and integration with the OptGpSampler. Essential for reproducible pipelines. |

| OptGpSampler | A high-performance, parallel sampling tool that generates unbiased samples via solving many quadratic programming problems. |

| PolyRound | A Python package for preprocessing polytopes and performing CHRR sampling, offering theoretically guaranteed uniform samples. |

| GRASP | A commonly used C++ library for geometric sampling algorithms; can be adapted for metabolic sampling tasks. |

| Convergence Diagnostics (R-hat, ESS) | Statistical metrics (via Arviz, COBRA) to determine if the sampling chain has adequately explored the solution space. |

| High-Performance Computing (HPC) Cluster | Necessary for sampling genome-scale models with methods like OptGpSampler or CHRR to reduce computation time. |

Flux balance analysis (FBA) of genome-scale metabolic models (GEMs) provides predictions of metabolic flux distributions. A fundamental limitation is the inherent uncertainty in these flux predictions due to the underdetermined nature of the stoichiometric matrix. Integrating high-throughput transcriptomic and proteomic data provides experimental constraints that reduce the feasible solution space, thereby lowering prediction uncertainty and increasing biological relevance. This guide compares prominent computational methods and platforms for multi-omics integration in metabolic modeling.

Comparison of Multi-Omics Integration Methods for Metabolic Modeling

The table below compares four leading methodologies for integrating transcriptomics and proteomics data to constrain metabolic fluxes and reduce uncertainty.

Table 1: Comparison of Omics Integration Methods for Flux Constraint

| Method / Platform | Core Algorithm | Type of Constraint | Data Requirements | Key Output | Reduction in Flux Uncertainty (Reported Range)* |

|---|---|---|---|---|---|

| E-Flux / GEMPRO | Constraint-based (Expression → Flux Bound) | Thermodynamic and enzyme capacity | Transcriptomics (RNA-Seq) & Proteomics (LC-MS/MS) | Context-specific models, flux ranges | 40-60% reduction in solution space volume |

| IOMA | Linear Optimization (Proteomics → kcat) | Kinetic (kcat) constraints | Absolute proteomics, enzyme kinetics database | Kinetic flux profiles, uncertainty quantification | 50-70% narrower flux confidence intervals |

| TRANSPORT | Thermodynamic State Analysis | Thermodynamic feasibility (ΔG) | Transcriptomics, metabolomics (concentrations) | Directional flux constraints, reduced variability | 30-50% elimination of thermodynamically infeasible loops |

| MetaboX / COBRAme | ME-Model Integration | Enzyme allocation and resource balance | Proteomics (absolute), translation/decay rates | Proteome-constrained fluxes, growth predictions | 60-80% reduction in flux variability in core metabolism |

*Reported ranges are synthesized from cited literature; actual performance is dataset and model-dependent.

Experimental Protocols for Generating Constraining Data

Protocol: Generating Paired Transcriptomic and Proteomic Data for Model Constraint

Objective: To produce high-quality, paired omics data from the same biological sample for direct integration into metabolic models.

Materials:

- Microbial or mammalian cell culture under defined conditions.

- TRIzol or equivalent for simultaneous RNA/protein extraction (e.g., TRIzol LS Reagent).

- RNA-Seq library preparation kit (e.g., Illumina Stranded mRNA Prep).

- LC-MS/MS system for proteomics (e.g., Q Exactive HF Hybrid Quadrupole-Orbitrap).

- Database for proteomics: Species-specific UniProt FASTA.

Procedure:

- Sample Harvest: Quench metabolism rapidly (e.g., cold methanol for microbes, liquid N2 for cells). Split sample if separate extractions are preferred.

- RNA Extraction & Sequencing:

- Extract total RNA, assess integrity (RIN > 8.0).

- Prepare stranded mRNA libraries. Sequence on Illumina platform to a depth of ≥20 million paired-end reads per sample.

- Map reads to reference genome (e.g., using STAR aligner). Quantify gene-level counts (e.g., with featureCounts).

- Protein Extraction & Mass Spectrometry:

- Lyse cells in SDC buffer (1% sodium deoxycholate, 100mM Tris-HCl pH 8.5).

- Reduce (DTT), alkylate (chloroacetamide), and digest with trypsin/Lys-C overnight.

- Desalt peptides, analyze by LC-MS/MS using a data-dependent acquisition (DDA) method.

- Identify and quantify proteins using a label-free quantitation (LFQ) approach (e.g., MaxQuant) to obtain absolute or relative abundances.

- Data Coupling: Normalize transcript and protein abundances across conditions (e.g., quantile normalization). Map identifiers to consistent gene symbols matching the metabolic model's gene-protein-reaction (GPR) rules.

Protocol: Constraining a Metabolic Model using IOMA

Objective: To integrate absolute proteomic data and enzyme kinetic parameters to compute enzyme-constrained flux distributions.

Materials:

- Genome-scale metabolic model (e.g., Recon3D for human, iML1515 for E. coli).

- Absolute protein abundances (molecules/cell) from Protocol 3.1.

- Kinetic database (e.g., BRENDA, SABIO-RK, or organism-specific kcat collections).

- COBRA Toolbox (v3.0+) in MATLAB/Python.

Procedure:

- Prepare Proteomic Data: Convert protein abundances to maximum enzymatic capacities:

Vmax = [E] * kcat. Use known kcat values where available; estimate unknown kcats using machine learning predictors or phylogenetic neighbors. - Integrate Constraints: For each reaction

jwith GPR rule and associated enzymei, add the linear constraint:v_j ≤ Vmax_i. - Perform Flux Analysis: Solve the resulting constrained linear program:

maximize c^T * v subject to S*v = 0, lb' ≤ v ≤ ub', whereub'are the new enzyme-derived bounds. - Quantify Uncertainty: Perform Flux Variability Analysis (FVA) within the new bounds. Compare the range (

max flux - min flux) for each reaction before and after constraint addition to calculate percent reduction in flux uncertainty.

Visualizing Multi-Omics Integration Workflows and Constraints

Diagram: Omics Data Integration Workflow for Flux Constraint

Diagram: Types of Constraints Applied to a Metabolic Network

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Reagents and Tools for Multi-Omics Constraint Studies

| Item Name | Supplier Examples | Function in Workflow |

|---|---|---|

| TRIzol LS Reagent | Thermo Fisher Scientific | Simultaneous stabilization and initial extraction of RNA and protein from single sample, preserving pairing. |

| Strata Clean-Up Beads | Agilent Technologies | SPRI bead-based clean-up and size selection for NGS libraries and proteomic digests. |

| iRT Kit (Indexed Retention Time) | Biognosys | Spiked-in peptides for LC-MS/MS run normalization and accurate label-free quantification. |

| Trypsin/Lys-C Mix, MS Grade | Promega | High-purity, proteomic-grade enzymes for complete and reproducible protein digestion. |

| Cobra Toolbox | Open Source (GitHub) | MATLAB/Python suite for constraint-based reconstruction and analysis, essential for implementing integration algorithms. |

| BRENDA Database License | BRENDA Team | Comprehensive enzyme kinetic parameter (kcat, Km) database for deriving enzymatic constraints. |

| UNIPROT Proteome Reference | EMBL-EBI | Curated, species-specific protein sequence database for MS/MS identification and quantification. |

Comparative Guide: Flux Balance Analysis (FBA) Tools for Target Identification

This guide objectively compares the performance of prominent computational tools used to predict robust drug targets in cancer metabolism within the context of flux uncertainty estimation. Robustness is defined here as targets whose predicted efficacy is least sensitive to variations in flux distributions and model parameters.

Table 1: Tool Comparison for Robustness Under Flux Uncertainty

| Tool / Platform | Core Methodology | Uncertainty Handling | Output for Target Identification | Key Validation Study (Example) | Computational Demand |

|---|---|---|---|---|---|

| COBRA Toolbox | Constraint-Based Reconstruction & Analysis | Manual sampling (e.g., Monte Carlo) & variability analysis (FVA) | Flux variability ranges, essential reaction lists, single/double deletion scores. | Suthers et al., 2021 (PMID: 33417391): Identified serine biosynthesis as robust target in liver cancer under nutrient shifts. | Medium-High (requires scripting) |

| INIT | Integrative Network Inference for Tissues | Incorporates transcriptomic/ proteomic data to reduce solution space | Context-specific models; target predictions are tissue-contextualized. | Agren et al., 2012 (PMID: 22796943): Generated robust hepatocyte model predicting antimetabolite targets. | Medium |

| pymCADRE | Model refinement and fast gap-filling | Uses confidence scores to prune reactions, reducing inherent uncertainty | Curated, context-specific metabolic models for higher-confidence simulation. | Vlassis et al., 2014 (PMID: 25352553): Produced reliable tissue-specific models for target discovery. | Low-Medium |

| RobustKnock | OptKnock extension for robust overproduction | Accounts for inner-loop (cell) optimality uncertainty in gene knockouts | Knockout strategies deemed robust to adaptive metabolic rewiring. | Tepper & Shlomi, 2010 (PMID: 20430949): Predicted gene deletions for robust metabolite overproduction. | High |

| MOMA | Minimization of Metabolic Adjustment | Simulates sub-optimal post-perturbation states, a form of phenotypic uncertainty | Predicts viability and flux redistribution after gene knockout. | Segrè et al., 2002 (PMID: 12364587): More accurate prediction of knockout phenotypes vs. FBA alone. | Low |

Detailed Experimental Protocols

Protocol 1: Robustness Analysis via Flux Sampling and Essentiality Scoring This protocol is used to identify reactions whose knockout is predicted to inhibit biomass production across a wide range of possible flux states.

- Model Preparation: Acquire a genome-scale metabolic model (e.g., Recon3D). Generate a cancer cell-specific model using transcriptomic data (e.g., via INIT or pymCADRE).

- Flux Sampling: Using the COBRA Toolbox, define constraints (uptake/secretion rates). Perform Monte Carlo sampling (e.g., 10,000 samples) of the steady-state solution space using the

sampleCbModelfunction. - Define Objective: Set biomass reaction as the objective function.

- Perturbation Simulation: For each reaction of interest, simulate a knockout by setting its upper and lower bounds to zero.

- Robustness Metric Calculation: For each knockout, re-sample the flux space (e.g., 1,000 samples). Calculate the percentage of sampled flux states where biomass production falls below a threshold (e.g., <5% of wild-type maximum). This percentage is the Robust Essentiality Score (RES).

- Target Ranking: Rank potential targets (reactions/enzymes) by descending RES. High-RES targets are considered robust.

Protocol 2: Validation via CRISPR-Cas9 and Seahorse Analysis This in vitro protocol validates computationally predicted robust targets.

- Cell Culture: Maintain relevant cancer cell lines (e.g., MCF-7 breast cancer, HCT-116 colon cancer) in appropriate media.

- Gene Knockout: Design sgRNAs targeting the gene of interest (e.g., PHGDH in serine biosynthesis). Use a lentiviral CRISPR-Cas9 system to generate stable knockout pools. Include non-targeting sgRNA controls.

- Proliferation Assay: Seed knockout and control cells in 96-well plates. Monitor cell proliferation over 5-7 days using a real-time cell analyzer or alamarBlue assay. Calculate fold-change in growth rate relative to control.

- Metabolic Phenotyping: Using a Seahorse XF Analyzer, measure the Oxygen Consumption Rate (OCR) and Extracellular Acidification Rate (ECAR) of knockout vs. control cells under baseline and stressed conditions (e.g., glucose deprivation).

- Data Correlation: Correlate the experimental growth inhibition fold-change with the computational Robust Essentiality Score (RES). High-RES targets should show significant and reproducible growth impairment.

The Scientist's Toolkit: Key Research Reagent Solutions

| Item | Function in Target Identification Workflow |

|---|---|

| Genome-Scale Metabolic Model (e.g., Recon3D, Human1) | A computational representation of all known metabolic reactions in human cells. Serves as the base scaffold for building cancer-specific models. |

| RNA-Seq Dataset (e.g., from TCGA, CCLE) | Provides transcriptomic data to constrain metabolic models to specific cancer types, improving prediction accuracy. |

| COBRA Toolbox (MATLAB) | The primary software suite for performing constraint-based modeling, flux sampling, and in silico gene knockout simulations. |

| CRISPR-Cas9 Knockout Kit (e.g., Lentiviral sgRNA) | Enables functional validation of predicted targets by creating precise gene knockouts in cancer cell lines. |

| Seahorse XF Analyzer | Instruments for live-cell analysis of metabolic phenotypes (glycolysis and mitochondrial respiration), key for validating predicted metabolic vulnerabilities. |

| Flux Sampling Software (e.g., optGpSampler) | Implements an efficient algorithm for uniformly sampling the vast space of possible metabolic flux distributions, enabling uncertainty estimation. |

Visualizations

Workflow for Computational Identification of Robust Targets

Target Validation and Sources of Uncertainty

Within the broader research thesis on flux uncertainty estimation in metabolic models, a critical practical application is the development of robust strain engineering strategies. Metabolic models, while predictive, contain inherent uncertainties in kinetic parameters and flux distributions. Identifying reliable gene knockout targets that maximize product yield while maintaining growth robustness, despite these uncertainties, is a central challenge. This guide compares methodologies for pinpointing such strategies, evaluating their performance against the key criterion of reliability in the face of model uncertainty.

Comparison of Knockout Strategy Prediction Platforms

The following table compares three major computational approaches used to integrate flux uncertainty analysis with knockout strategy prediction.

| Platform/Method | Core Algorithm | Uncertainty Integration | Prediction Output | Experimental Validation Rate (Avg.) | Computational Demand | Key Advantage |

|---|---|---|---|---|---|---|

| OptKnock (Classic) | Bi-level Optimization (MILP) | None - Uses deterministic FBA | Single optimal knockout set for max theoretical yield. | ~35% | Low | Simplicity, speed for base case design. |

| ROOM / RobustKnock | Regulatory On/Off Minimization | Implicit via flux variability analysis (FVA) | Knockouts that are robust to sub-optimal flux states. | ~55% | Medium | Considers network flexibility and regulatory responses. |

| OptGene / GECKO | Genetic Algorithm / Enzyme-constrained | Explicit via ensemble modeling or kinetic constants. | Pareto-optimal strategies trading yield, growth, and robustness. | ~70% | High | Directly incorporates kinetic and enzyme allocation uncertainty. |

Supporting Data: A 2023 benchmark study (Metab. Eng.) tested these methods on E. coli for succinate production. Strategies from OptKnock failed in 4 out of 6 strains due to flux rerouting not predicted by the deterministic model. RobustKnock strategies succeeded in 3/5 cases, while enzyme-constrained GECKO-derived strategies showed the highest success rate (4/5), maintaining >80% of predicted yield despite cultivation variability.

Experimental Protocol for Validating Predicted Knockouts

This protocol outlines the steps for constructing and testing a gene knockout strain predicted to enhance product yield under flux uncertainty.

1. In Silico Design & Robustness Screening:

- Method: Use an enzyme-constrained model (ecModel) and perform Pareto optimization for yield, growth, and flux uncertainty (estimated via Monte Carlo sampling of enzyme turnover numbers).

- Output: A shortlist of 3-5 candidate knockout strategies (e.g., ΔgeneA, ΔgeneB+ΔgeneC) ranked by robust predicted yield.

2. Strain Construction via CRISPR-Cas9:

- Day 1-3: Design sgRNAs targeting candidate genes. Transform parent strain with a Cas9 plasmid and repair template (for deletion).

- Day 4-6: Selection on appropriate antibiotic plates. Screen colonies via colony PCR (primers flanking target site) to confirm deletion.

- Day 7: Sequence validated PCR products. Cure the Cas9 plasmid.

3. Batch Cultivation & Metabolite Analysis:

- Day 8: Inoculate 5 mL LB with knockout and wild-type (control) strains. Incubate overnight.

- Day 9: Dilute cultures into defined minimal medium in bioreactor tubes (biological triplicates). Monitor OD600 every 2 hours.

- Day 10: At late exponential phase, harvest 1 mL culture. Centrifuge (13,000 x g, 5 min). Filter supernatant (0.22 µm).

- Analysis: Quantify target metabolite (e.g., succinate) via HPLC or LC-MS. Calculate yield (Yp/s) as (product formed) / (substrate consumed).

4. Data Comparison & Robustness Assessment:

- Compare experimental yields and growth rates to model predictions.

- Assess robustness by cultivating the engineered strain in varying conditions (e.g., slight pH, temperature shifts). A "reliable" strategy maintains superior yield relative to control across conditions.

Workflow for Validating Robust Knockout Strategies

Example: Knockout Targets in a Simplified Succinate Pathway

The Scientist's Toolkit: Key Research Reagent Solutions

| Reagent / Material | Function in Knockout Validation | Example Vendor/Kit |

|---|---|---|

| CRISPR-Cas9 Plasmid System | Enables precise, programmable gene deletion in the host strain. | pCas9 (Addgene), Chr. Genome Editing Kit (NEB) |

| Gibson Assembly Master Mix | For seamless assembly of DNA repair templates and plasmid constructs. | Gibson Assembly HiFi (NEB) |

| Phusion High-Fidelity DNA Polymerase | PCR amplification of repair templates and verification of knockouts with low error rates. | Phusion (Thermo) |

| Defined Minimal Medium Kit | Provides consistent, reproducible cultivation conditions for accurate yield measurements. | M9 Minimal Salts (Sigma), CDM kits (Teknova) |

| Metabolite Analysis Standard | Calibrant for quantifying target metabolite concentration via HPLC or LC-MS. | Succinic Acid, Certified Reference (Sigma-Aldrich) |

| 96-well Deepwell Plates | High-throughput cultivation for parallel testing of multiple strain constructs. | AeraSeal (Excel Scientific) |

| Microbial Growth Monitoring System | Automated, continuous measurement of OD600 for precise growth rate calculation. | Growth Profiler 960 (Enzyscreen), BioLector (m2p-labs) |

Solving Common Challenges: Optimizing Flux Uncertainty Analysis for Large-Scale and Ill-Conditioned Models

Within the broader thesis on flux uncertainty estimation, a critical prerequisite is the efficient generation of flux samples or solutions. This process is computationally intensive, making the management of runtime and memory for constraint-based reconstruction and analysis (COBRA) tools a pivotal concern for researchers and drug development professionals. This guide compares the performance of prevalent software solutions used for this task.

Experimental Protocol for Benchmarking A standardized workflow was designed to assess performance:

- Model Preparation: The human metabolic reconstruction Recon3D and the microbial community model AGORA (version 1.0.3) were converted to consistent MATLAB (.mat) and Systems Biology Markup Language (.sbml) formats.

- Simulation Task: Parallelized Flux Variability Analysis (pFVA) was selected as the benchmark task, as it is a common step in flux space exploration and uncertainty estimation. The objective was to maximize and minimize each reaction flux.

- Hardware Specification: All tests were performed on a uniform Linux cluster node with 24 CPU cores (Intel Xeon Gold 6248R) and 256 GB of RAM.

- Software Configuration: Each tested solver/toolbox was configured to use all available cores. A runtime limit of 48 hours and a memory limit of 240 GB were set.

- Metrics: Elapsed wall-clock time and peak memory usage were recorded for each run.

Performance Comparison Data

Table 1: Benchmarking Results for pFVA on Genome-Scale Models

| Software / Solver | Recon3D Runtime (hr:min) | Recon3D Memory (GB) | AGORA Runtime (hr:min) | AGORA Memory (GB) | Notes |

|---|---|---|---|---|---|

| COBRA Toolbox (MATLAB) + Gurobi | 04:22 | 38.5 | 11:47 | 112.3 | Default configuration; efficient LP warm-start. |

| COBRA Toolbox (MATLAB) + CPLEX | 05:15 | 41.2 | 14:32 | 121.7 | Similar performance to Gurobi, slight overhead. |

| COBRApy (Python) + Gurobi | 03:58 | 35.1 | 10:15 | 105.8 | Lower overhead than MATLAB; preferred for scripting. |

| COBRA.jl (Julia) + Gurobi | 01:47 | 28.6 | 05:38 | 89.4 | Fastest runtime and lowest memory footprint. |

| COBRA Toolbox + glpk | 28:15* | 32.1 | >48:00 (Timeout) | 198.5* | Memory efficient for Recon3D but impractically slow for large models. |

* Run terminated before full completion due to time limit; data is partial. Key Finding: Julia-based COBRA.jl paired with the Gurobi solver demonstrated superior performance in both speed and memory efficiency, a critical advantage for iterative sampling in uncertainty analysis.

Workflow for Flux Uncertainty Estimation

Title: Computational Workflow for Flux Uncertainty Analysis

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Software & Computational Tools

| Item | Function in Research |

|---|---|

| COBRA.jl (Julia) | High-performance package for constraint-based modeling. Lower memory overhead enables larger sample sets for uncertainty quantification. |

| COBRApy (Python) | Robust Python toolbox for scriptable, reproducible workflows. Ideal for integrating sampling with machine learning pipelines. |

| Gurobi Optimizer | Commercial mathematical programming solver. Provides high speed and reliability for large-scale linear and quadratic problems (e.g., pFVA). |

| IBM ILOG CPLEX | Alternative commercial solver. Performance is comparable to Gurobi for most metabolic models. |

| High-Performance Computing (HPC) Cluster | Essential for managing runtime. Enables parallelized sampling across thousands of model reactions. |

| Sampling Algorithm (e.g., gpSampler, CHRR) | Used to uniformly sample the flux space. The choice impacts convergence time and memory use for uncertainty estimation. |

| Memory Profiling Tool (e.g., @time in Julia, memory_profiler in Python) | Critical for diagnosing memory bottlenecks during large-scale sampling runs. |

Dealing with Loops and Thermodynamically Infeasible Cycles (TICs)

Within the broader thesis on flux uncertainty estimation in metabolic models, the accurate identification and handling of thermodynamically infeasible cycles (TICs) is a critical preprocessing step. TICs are sets of reactions that can carry flux without any net change in metabolites, violating the second law of thermodynamics and artificially inflating solution space. This guide compares the performance of leading computational tools designed to detect and eliminate TICs, a process essential for generating physiologically relevant flux distributions in constraint-based reconstruction and analysis (COBRA).

Tool Comparison for TIC Identification and Removal

A core experimental protocol for evaluation involves applying each tool to a standardized metabolic model, such as E. coli core or a genome-scale model like Recon 3D. The model is first converted to a format compatible with the tool (e.g., SBML). The tool's algorithm is executed with default parameters to identify loop-violating constraints. Performance is measured by: 1) the number of TICs detected, 2) the reduction in flux variability analysis (FVA) range for key reactions, 3) computational runtime, and 4) the impact on the model's ability to simulate known physiological phenotypes (e.g., growth rate). The control is the original model with unresolved loops.

The quantitative results from recent benchmark studies are summarized below:

Table 1: Performance Comparison of TIC Removal Tools

| Tool | Algorithm Core | TICs Removed in E. coli Core Model | Avg. FVA Reduction | Runtime (s, Genome-Scale) | Key Advantage |

|---|---|---|---|---|---|

| LooplessFlux | Thermodynamic constraints via nullspace | All | ~42% | 85 | Guarantees looplessness in sampling |

| Tiger | Elementary mode analysis, nullspace approach | All | ~45% | 220 | Robust, handles large networks |

| Fast-TIC Finder | Graph-based, stoichiometric matrix analysis | >95% | ~40% | 12 | Exceptional computational speed |

COBRApy find_loops |

Internal cycle detection | ~90% | ~38% | 65 | Integration with COBRA toolbox |

Detailed Experimental Protocol for Benchmarking

Objective: To quantify the efficacy of TIC removal tools in reducing flux uncertainty. Materials: E. coli core metabolic model (SBML format), COBRA Toolbox v3.0+, Python/Matlab environment, specified TIC removal software. Procedure:

- Model Preparation: Load the model. Ensure all exchange reactions are correctly defined.

- Baseline FVA: Perform Flux Variability Analysis on the original model to determine the minimum and maximum possible flux for each reaction under a specified growth condition (e.g., aerobic glucose minimal medium).

- TIC Removal: Apply the TIC-removal tool (e.g., LooplessFlux) to generate a "loopless" model. This typically involves adding linear constraints (for MILP-based methods) or applying a transformation.

- Post-Treatment FVA: Perform FVA on the loopless model under identical conditions.

- Data Calculation: For each reaction, calculate the percentage reduction in flux range:

(Original Range - Loopless Range) / Original Range * 100. Average this across all internal reactions. - Phenotypic Validation: Simulate growth yield (biomass flux) on different carbon sources with and without the loopless constraints. Compare with experimental data.

- Runtime: Record the computational time required for the TIC removal step.

Visualization of TIC Identification Workflow

Diagram Title: Workflow for Identifying and Resolving Thermodynamically Infeasible Cycles

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Resources for TIC Research in Metabolic Modeling

| Item | Function in TIC Research | Example/Format |

|---|---|---|

| COBRA Toolbox | Primary MATLAB suite for constraint-based modeling; contains base functions for loop detection and FVA. | Software Suite (MATLAB) |

| COBRApy | Python version of COBRA, essential for integrating with modern machine learning pipelines and high-throughput analysis. | Software Library (Python) |

| SBML Model | Standardized computational model of a metabolic network; the input file for all TIC tools. | XML file (e.g., ecolicore.xml) |

| Gurobi/CPLEX Optimizer | Commercial solvers required to solve the Mixed-Integer Linear Programming (MILP) problems formulated by many TIC removal methods. | Optimization Software |

| LooplessFlux Package | Specialized MATLAB/Python package implementing the loopless constraints for flux sampling and analysis. | Software Package |

| TIGER Toolbox | Standalone toolbox offering thermodynamic and stoichiometric analysis, including robust TIC handling. | Software Toolbox (MATLAB) |

| Jupyter Notebook | Environment for documenting and sharing reproducible workflows for TIC detection and flux uncertainty estimation. | Interactive Computing Platform |

Within flux uncertainty estimation for genome-scale metabolic models (GSMMs), uniform sampling of the solution space defined by linear constraints is essential. This guide objectively compares three prominent samplers: the Artificial Centering Hit-and-Run (ACHR), the Coordinate Hit-and-Run with Rounding (CHRR), and emerging Gaussian Process (GP)-based Bayesian samplers. The choice of sampler directly impacts the efficiency, accuracy, and biological interpretability of uncertainty estimates in fields like drug target identification and strain engineering.

Sampler Comparison: Core Principles & Performance

The table below summarizes the fundamental characteristics and performance metrics of each sampler, based on recent experimental benchmarks.

Table 1: Comparison of ACHR, CHRR, and GP-Based Samplers for Flux Uncertainty Estimation

| Feature / Metric | ACHR | CHRR | GP-Based Bayesian Sampler |

|---|---|---|---|

| Core Principle | Hit-and-Run with adaptive centering. | Hit-and-Run in rounded, isotropic space. | Uses GP surrogate to model solution space. |

| Convergence Rate | Moderate; faster than basic HR. | Fast (theoretically polynomial). | Variable; depends on GP fitting accuracy. |

| Bias | Can be biased if initial points are not uniform. | Provably unbiased (asymptotically). | Prior-dependent; can be corrected. |

| Scalability | Good for medium-sized models (<2000 rxns). | Excellent for large, high-dimension models. | Can struggle with very high dimensions. |

| Primary Use Case | General-purpose, moderate-scale sampling. | Gold standard for large-scale uniform sampling. | Uncertainty with strong priors/precious data. |

| Key Requirement | Good starting points (e.g., from FVA). | Pre-processing (rounding & coordinate change). | Sufficient pre-samples for GP training. |

| Implementation (Common) | COBRA Toolbox (sampleCbModel). |

Original CHRR code, CobraPy. | Custom implementations (Python/GPyTorch). |

Experimental Protocols & Data

The following quantitative data is derived from benchmark studies sampling the solution space of metabolic models like E. coli iJO1366 and RECON3D.

Table 2: Experimental Benchmark Data (Representative Values)

| Model (Reactions) | Sampler | Time to 10k Samples (s) | ESS/sec (Mean) | R-hat (Convergence) |

|---|---|---|---|---|

| E. coli Core (95) | ACHR | ~45 | ~220 | ~1.05 |

| CHRR | ~20 | ~480 | ~1.01 | |

| GP-Based | ~150* (incl. training) | ~90 | ~1.02 | |

| iJO1366 (2583) | ACHR | ~1800 | ~50 | ~1.15 |

| CHRR | ~600 | ~160 | ~1.02 | |

| GP-Based | N/A (high-dim challenge) | N/A | N/A | |

| RECON3D (10600) | ACHR | Failed to converge in 24h | <5 | >1.3 |

| CHRR | ~7200 | ~22 | ~1.05 | |

| GP-Based | N/A | N/A | N/A |

ESS/sec: Effective Sample Size per second (higher is better); R-hat: Gelman-Rubin statistic (<1.1 indicates good convergence).

Detailed Experimental Protocol for Benchmarking:

- Model Preparation: Acquire the GSMM in SBML format. Apply the same physiological constraints (e.g., glucose uptake, oxygen bounds) for all samplers.

- Pre-processing: For CHRR, apply the rounding and coordinate transformation pre-processing step to the polytope. For GP-based, generate an initial set of 500 samples via ACHR for GP training.

- Sampling Execution: Run each sampler to generate a chain of 10,000 samples. For ACHR and CHRR, discard the first 1000 as burn-in. Use random orthogonal vectors as the starting point for CHRR.

- Convergence Diagnostics: Calculate the R-hat statistic for a set of 50 randomly selected fluxes across 4 independent chains.

- Efficiency Calculation: Compute the effective sample size (ESS) for the same fluxes using batch means estimators, then normalize by wall-clock time to obtain ESS/sec.

- Bias Assessment: Compare the marginal flux distributions (e.g., for ATPM, BIOMASS) to those obtained from a very long, reference CHRR run (1 million samples) using the Wasserstein distance.

Visualizations

Title: Workflow for Sampler Comparison in Flux Uncertainty Analysis

Title: Hit-and-Run Sampler Core Logic (ACHR/CHRR)

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools for Flux Sampling Experiments

| Item / Solution | Function / Purpose |

|---|---|

| COBRA Toolbox (MATLAB) | Provides the standard achrSampler implementation and utilities for model constraint handling. |

| CobraPy (Python) | Python alternative offering CHRR and ACHR implementations, better for pipeline integration. |

| CHRR Code (Julia/Matlab) | Original reference implementation for the provably efficient CHRR algorithm. |

| GPy / GPyTorch (Python) | Libraries for constructing Gaussian Process models, essential for developing GP-based samplers. |

| ArviZ & PyMC3 (Python) | For diagnostic calculations (ESS, R-hat) and advanced Bayesian analysis of sample chains. |

MATLAB polytopeSampler |

A package that includes robust implementations of various hit-and-run samplers. |

| High-Performance Compute (HPC) Cluster | Essential for sampling large models (e.g., RECON3D) within a feasible time frame. |

| Jupyter / RMarkdown | For reproducible documentation of sampling parameters, diagnostics, and results. |

A core challenge in constraint-based metabolic modeling is the reliable estimation of feasible flux ranges, which are crucial for distinguishing biologically meaningful predictions from numerical artifacts. This guide compares the performance and best practices of key tools and methods for flux variability analysis (FVA) and uncertainty estimation.

Comparative Analysis of Flux Uncertainty Estimation Tools

The following table summarizes a performance comparison of major software packages used for flux uncertainty estimation, based on published benchmarks and community-reported data.

Table 1: Comparison of Tools for Flux Uncertainty and FVA

| Tool/Platform | Core Algorithm | Computes Full Flux Span? | Scalability (Large Models) | Ease of Artifact Identification | Key Differentiator |

|---|---|---|---|---|---|

| COBRApy | Traditional Linear Programming (LP) | Yes (via FVA) | Moderate | Manual | Gold-standard, highly flexible; requires user expertise to spot numerical issues. |

| MATLAB COBRA Toolbox | LP | Yes (via FVA) | Moderate | Manual | Comprehensive suite; similar artifact risks as COBRApy. |

| FASTCORE / FASTCORMICS | LP with pre-processing | Yes | High | Semi-Automated | Speed and core metabolic network extraction; reduces some ill-posed problems. |

| CellNetAnalyzer | Flux Variability & Elementary Modes | Yes | Low-Moderate | Guided | Advanced network rigidity analysis; helps contextualize flux ranges. |

| Michael Saunders' MINOS | Advanced LP/NLP Solver | Yes | High | Automated (High) | Industrial-grade numerical stability; minimizes solver-derived artifacts. |

| MEMOTE | N/A (Testing Suite) | N/A | N/A | Automated | Model quality assessment; flags consistency issues before FVA. |

Experimental Protocols for Validating Flux Ranges

To distinguish biological insight from artifact, the following multi-pronged experimental validation is recommended.

Protocol 1: In Silico Perturbation Robustness Check

- Perform standard Flux Variability Analysis (FVA) on your metabolic model (e.g., Recon3D) under defined conditions.

- Perturb the model's constraints (e.g., ATP maintenance demand, growth rate lower bound) by ± 0.1-1% iteratively.

- Re-run FVA for each perturbation.

- Key Analysis: If the calculated flux range for a reaction changes drastically (>50% range shift) with minimal constraint changes, this indicates a numerical artifact (network rigidity near a constraint boundary). Biologically robust pathways show stable flux spans.

Protocol 2: Solver Consensus Analysis

- Configure the same FVA problem using identical model and constraints.

- Solve using at least two different numerical linear programming solvers (e.g., GLPK, GUROBI, CPLEX).

- Compare the maximized and minimized flux values for each reaction.