Targeted vs Untargeted Metabolomics: A Strategic Framework for Cross-Validation and Data Integration in Biomedical Research

Abstract

This article provides a comprehensive guide for researchers and drug development professionals on cross-validating targeted and untargeted metabolomics results. It explores the foundational principles distinguishing these approaches, with targeted metabolomics offering high sensitivity for predefined metabolites and untargeted providing broad hypothesis-generating coverage. The content details methodological workflows from sample preparation to data analysis, addresses common troubleshooting scenarios and optimization strategies using advanced computational tools, and presents rigorous validation frameworks through clinical case studies. By synthesizing these elements, the article offers a strategic pathway for effectively integrating both methodologies to enhance data reliability, drive discovery, and accelerate translational applications in precision medicine and therapeutic development.

Core Principles and Strategic Value of Targeted and Untargeted Metabolomics

In scientific research, particularly in the field of metabolomics, two fundamental paradigms guide investigation: discovery-oriented science and hypothesis-driven science. These approaches represent philosophically distinct paths to scientific knowledge, each with unique strengths and applications. Discovery science, often described as inductive research, involves gathering data first and then developing theories to explain the findings [1]. In contrast, hypothesis-driven science, based on deductive reasoning, begins with a specific question or tentative explanation that is then tested through experimentation [2]. The former is primarily about observing and describing nature, while the latter seeks to explain it [2].

The distinction between these paradigms is particularly salient in modern 'omics' technologies, where the choice between untargeted (discovery) and targeted (hypothesis-driven) approaches shapes experimental design, analytical methods, and interpretation of results. This guide objectively compares these methodologies within the context of cross-validation in metabolomics research, providing researchers with a framework for selecting and integrating these powerful approaches.

Conceptual Foundations: Discovery vs. Hypothesis-Driven Research

Core Philosophical Differences

The distinction between discovery and hypothesis-driven science can be understood through their underlying reasoning processes. Discovery science uses inductive reasoning, where general conclusions are drawn from specific observations [2]. This approach is inherently exploratory, aiming to "discover" new knowledge about the natural world through comprehensive observation and description [2]. It is exemplified by untargeted metabolomics, which systematically measures a vast number of metabolites without preconceived notions about which might be important.

Conversely, hypothesis-driven science employs deductive reasoning, beginning with a general theory or hypothesis from which specific predictions are made and tested [1] [2]. This approach follows the traditional scientific method: formulate a hypothesis to explain a natural phenomenon, then design experiments to test its validity [2]. In metabolomics, this philosophy underpins targeted approaches that focus on predefined metabolites based on existing knowledge.

The Sherlock Holmes Analogy

A helpful analogy contrasts these approaches using investigative methods. Discovery research resembles Sherlock Holmes piecing together clues at a crime scene without knowing the culprit beforehand—collecting evidence first, then developing a theory [1]. Hypothesis-driven research is like focusing specifically on Colonel Mustard because of a pre-existing suspicion—gathering evidence specifically to confirm or refute his involvement [1]. The weakness of the latter approach is that if the hypothesis is incorrect, time and resources may be wasted while the true "culprit" remains undetected [1].

Figure: Scientific Workflow Comparison

Methodological Implementation in Metabolomics

Untargeted Metabolomics: The Discovery Engine

Untargeted metabolomics is the quintessential discovery approach: it aims to measure as many metabolites as possible in a biological sample without preselecting analytes [3]. This global analysis encompasses both known and unknown metabolites, generating hypotheses for further investigation [3]. Its primary strength is that it is not restricted to a predefined panel, which allows researchers to detect unexpected metabolic changes and identify novel biomarkers [3].

Key characteristics of untargeted metabolomics include:

- Goal: Hypothesis generation and discovery of novel pathways [3]

- Scope: Analysis of thousands of metabolites simultaneously [3]

- Quantification: Relative quantification (semi-quantitative) [3]

- Sample Preparation: Global metabolite extraction procedures [3]

- Standards: Does not require internal standards for unknown metabolites [3]

In practice, untargeted approaches have identified diagnostic metabolic signatures for various conditions. For example, in pregnancy loss research, untargeted metabolomics analysis of plasma samples from 70 patients and 122 controls identified 57 significantly altered metabolites, with three key metabolites (testosterone glucuronide, 6-hydroxymelatonin, and (S)-leucic acid) showing strong diagnostic potential (AUC values: 0.991, 0.936, and 0.952 respectively) [4].

Targeted Metabolomics: The Hypothesis-Testing Tool

Targeted metabolomics employs a hypothesis-driven approach, focusing on precise measurement of a predefined set of chemically characterized metabolites [3]. This method leverages prior knowledge of metabolic pathways to answer specific biological questions [3]. Targeted analyses are particularly valuable for validating discoveries from untargeted screens and for absolute quantification of key metabolites in large cohorts.

Key characteristics of targeted metabolomics include:

- Goal: Hypothesis testing and validation of specific metabolites [3]

- Scope: Analysis of typically 20-100 predefined metabolites [3]

- Quantification: Absolute quantification using internal standards [3]

- Sample Preparation: Optimized extraction for specific metabolites [3]

- Standards: Requires isotopically labeled internal standards [3]

Targeted approaches demonstrate superior precision for quantitative applications. In rheumatoid arthritis research, targeted metabolomics validated six diagnostic biomarkers initially identified through untargeted screening, enabling development of classification models that robustly differentiated RA from healthy controls (AUC: 0.8375-0.9280) across multiple validation cohorts [5].

Comparative Analysis: Strengths and Limitations

Table 1: Methodological Comparison of Untargeted vs. Targeted Metabolomics

| Parameter | Untargeted Metabolomics | Targeted Metabolomics |
|---|---|---|
| Primary Goal | Hypothesis generation, discovery of novel biomarkers and pathways [3] | Hypothesis testing, validation of known metabolites [3] |
| Number of Metabolites | Thousands of metabolites [3] | Typically ~20 metabolites, up to 100s in semi-targeted [3] |
| Quantification Approach | Relative quantification [3] | Absolute quantification with internal standards [3] |
| Sample Preparation | Global metabolite extraction [3] | Optimized for specific metabolites [3] |
| Analytical Precision | Lower precision due to relative quantification [3] | Higher precision with isotopic standards [3] |
| Risk of False Positives | Higher, requires multiple testing correction [3] | Lower, reduced by targeted analysis [3] |
| Coverage of Unknowns | Can detect unknown metabolites [3] | Limited to known, predefined metabolites [3] |
| Bias | Detection bias toward high-abundance metabolites [3] | Reduced bias through optimized preparation [3] |

Table 2: Performance Metrics from Comparative Studies

| Study Focus | Untargeted Sensitivity | Targeted Concordance | Clinical Application |
|---|---|---|---|
| Genetic Disorders (n=87 patients) | 86% (95% CI: 78-91) for detecting diagnostic metabolites vs. targeted [6] | 50% mean concordance (range: 0-100%) across 81 metabolites [6] | Diagnostic yield of untargeted metabolomics: 0.7% in patients without diagnosis [6] |
| Diabetic Retinopathy (n=110 samples) | Identified L-Citrulline, IAA, CDCA, EPA as distinctive biomarkers [7] | ELISA validation confirmed 4 key metabolites [7] | Accuracy of targeted metabolomics higher for serum metabolite expression [7] |
| Rheumatoid Arthritis (n=2,863 samples) | Initial discovery of biomarker candidates [5] | Validation of 6 diagnostic biomarkers across 7 cohorts [5] | RA vs. HC classifiers AUC: 0.8375-0.9280 [5] |
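
Sensitivity figures such as the one reported for genetic disorders are proportions with a confidence interval. The sketch below shows one common way to compute such an interval, the Wilson score interval. The counts are hypothetical and are not taken from the cited study, and the source does not state which interval method the authors used.

```python
import math


def wilson_interval(successes, total, z=1.96):
    """Wilson score interval for a binomial proportion (z=1.96 is roughly 95%)."""
    if total <= 0:
        raise ValueError("total must be positive")
    p = successes / total
    denom = 1 + z**2 / total
    center = (p + z**2 / (2 * total)) / denom
    half = z * math.sqrt(p * (1 - p) / total + z**2 / (4 * total**2)) / denom
    return center - half, center + half


# Hypothetical counts (assumption, not data from reference [6]):
# diagnostic metabolites flagged by the targeted assay, and how many of
# those the untargeted screen also flagged.
detected_by_targeted = 50
also_detected_untargeted = 43

sensitivity = also_detected_untargeted / detected_by_targeted
low, high = wilson_interval(also_detected_untargeted, detected_by_targeted)
print(f"sensitivity = {sensitivity:.2f}, 95% CI = ({low:.3f}, {high:.3f})")
```

The Wilson interval is used here because it behaves better than the simple normal approximation when proportions are close to 0 or 1, which is common for diagnostic sensitivity.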

Experimental Protocols and Cross-Validation Frameworks

Integrated Workflow for Metabolomics Research

The most powerful applications of metabolomics combine both discovery and hypothesis-driven approaches in a sequential workflow. This integrated strategy leverages the strengths of both paradigms while mitigating their individual limitations.

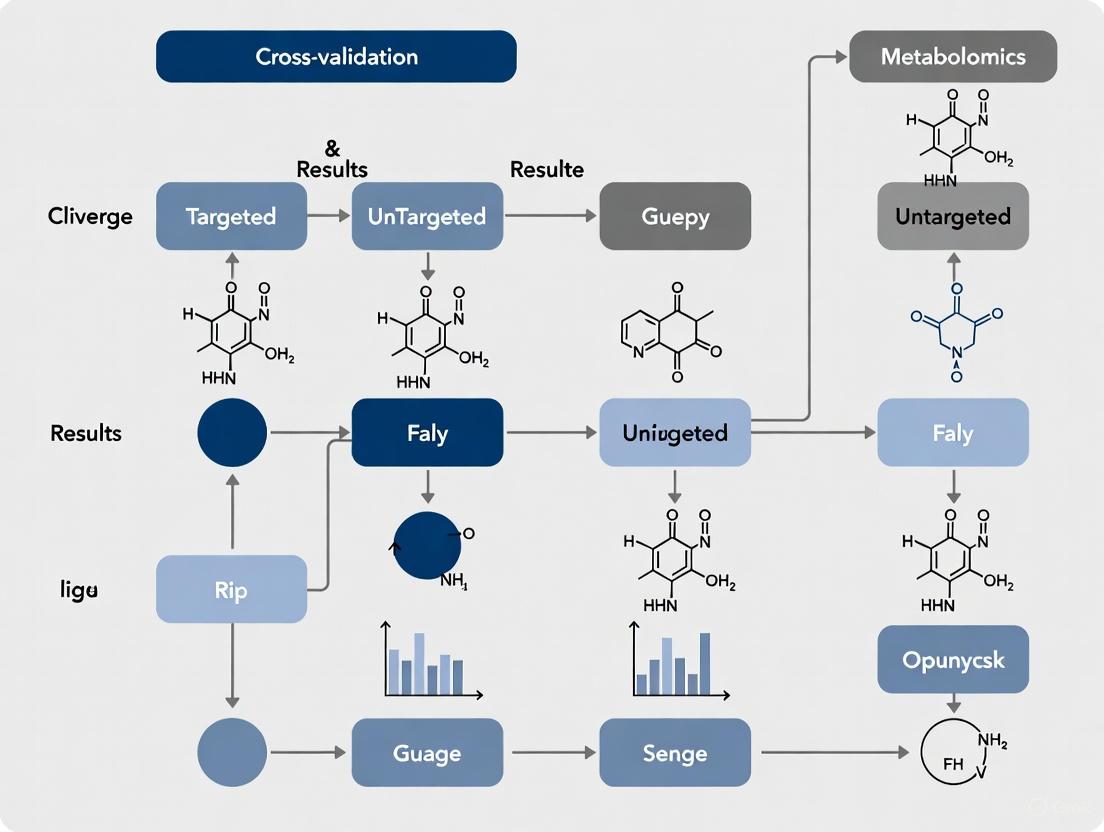

Integrated Metabolomics Workflow

Detailed Methodological Protocols

Untargeted Metabolomics Protocol

Based on recent studies, a robust untargeted metabolomics protocol includes these key steps:

Sample Preparation:

- Collect blood samples in EDTA tubes and centrifuge at 1,500×g for 10 minutes at 4°C to obtain plasma [4]

- Aliquot 100 μL plasma and mix with 200-400 μL prechilled methanol or methanol/acetonitrile (1:1) solution [4] [5]

- Vortex for 30 seconds, sonicate in 4°C water bath for 10 minutes [5]

- Incubate at -40°C for 1 hour to precipitate proteins [5]

- Centrifuge at 12,000-15,000×g for 15-20 minutes at 4°C [4] [5]

- Transfer supernatant for LC-MS analysis [4]

LC-MS Analysis:

- Utilize UHPLC system with reversed-phase or HILIC columns (e.g., Hypersil Gold C18 or Waters BEH Amide) [4] [5]

- Employ gradient elution with mobile phases containing acid/buffer modifiers [4]

- Interface with high-resolution mass spectrometer (e.g., Orbitrap Exploris 120 or Q Exactive HF-X) [4] [5]

- Operate in both positive and negative electrospray ionization modes [4]

- Use full MS and data-dependent MS/MS acquisition [4]

Data Processing:

- Process raw data using software (e.g., Compound Discoverer, XCMS) for peak picking, alignment, and integration [4]

- Identify metabolites by matching accurate mass, retention time, and MS/MS spectra against databases (mzCloud, HMDB, KEGG) [4]

- Perform statistical analysis using multivariate methods (PCA, OPLS-DA) and univariate tests [4]
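
Because untargeted data sets test thousands of features at once, univariate p-values are normally adjusted for multiple testing. The following is a minimal sketch of the Benjamini-Hochberg procedure in plain Python. The p-values are invented for illustration, and the cited studies may have used a different correction.

```python
def benjamini_hochberg(p_values):
    """Return Benjamini-Hochberg adjusted p-values in the original order."""
    m = len(p_values)
    order = sorted(range(m), key=lambda i: p_values[i])
    adjusted = [0.0] * m
    running_min = 1.0
    # Walk from the largest p-value to the smallest so that adjusted
    # values never decrease as the raw p-value increases.
    for rank in range(m, 0, -1):
        idx = order[rank - 1]
        candidate = p_values[idx] * m / rank
        running_min = min(running_min, candidate)
        adjusted[idx] = running_min
    return adjusted


# Invented p-values for five metabolite features (illustration only).
p = [0.001, 0.008, 0.039, 0.041, 0.60]
q = benjamini_hochberg(p)
significant = [i for i, qi in enumerate(q) if qi < 0.05]
print(q, significant)
```

In this toy input, the third and fourth features have raw p-values below 0.05 but adjusted values slightly above it, which is the kind of borderline finding that the correction is meant to filter out.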

Targeted Metabolomics Validation Protocol

For validation of discoveries from untargeted analyses:

Sample Preparation for Targeted Analysis:

- Use standardized kits (e.g., Biocrates P500) for reproducible metabolite extraction [7]

- Aliquot 10 μL plasma and derivatize if necessary [7]

- Include isotopically labeled internal standards for each target metabolite [3]

Targeted LC-MS/MS Analysis:

- Employ specific LC conditions optimized for target metabolites [5]

- Use multiple reaction monitoring (MRM) on triple quadrupole mass spectrometers [5]

- Establish calibration curves with authentic standards for absolute quantification [3]
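
As a rough illustration of absolute quantification, the sketch below fits a linear calibration curve to the ratio of analyte peak area to internal-standard peak area and then inverts it for an unknown sample. All concentrations and peak areas are made up, and an unweighted straight-line fit is an assumption; real assays often use weighted regression and check linearity over the working range.

```python
def fit_line(x, y):
    """Ordinary least-squares fit of y = slope * x + intercept."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept


# Hypothetical calibrators: known concentration (µM) and the measured
# response ratio (analyte area / labeled internal standard area).
cal_conc = [1.0, 5.0, 10.0, 50.0, 100.0]
cal_ratio = [0.02, 0.10, 0.20, 1.00, 2.00]
slope, intercept = fit_line(cal_conc, cal_ratio)


def quantify(analyte_area, istd_area):
    """Convert a sample's peak areas to a concentration via the curve."""
    ratio = analyte_area / istd_area
    return (ratio - intercept) / slope


conc = quantify(analyte_area=45000.0, istd_area=60000.0)
print(f"estimated concentration: {conc:.1f} µM")
```

Dividing by the internal-standard area is what lets the labeled standard compensate for extraction losses and ionization variability, since both affect analyte and standard in a similar way.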

Validation and Statistical Analysis:

- Apply machine learning algorithms (LASSO regression, random forests) for biomarker selection [4] [5]

- Evaluate diagnostic performance using ROC curves and AUC values [4] [5]

- Validate findings in independent cohorts across multiple centers [5]
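
The AUC values quoted throughout this guide can be read as the probability that a randomly chosen case receives a higher score than a randomly chosen control. The sketch below computes AUC directly from that definition. The scores and labels are invented, and the pairwise loop is chosen for clarity rather than speed.

```python
def roc_auc(scores, labels):
    """AUC as the fraction of (case, control) pairs ranked correctly.

    Ties between a case and a control count as half a correct pair.
    """
    pos = [s for s, y in zip(scores, labels) if y == 1]
    neg = [s for s, y in zip(scores, labels) if y == 0]
    if not pos or not neg:
        raise ValueError("need at least one case and one control")
    wins = 0.0
    for p in pos:
        for n in neg:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(pos) * len(neg))


# Invented classifier scores; label 1 = case, 0 = control.
scores = [0.9, 0.8, 0.7, 0.6, 0.55, 0.4, 0.3, 0.2]
labels = [1, 1, 0, 1, 1, 0, 0, 0]
auc = roc_auc(scores, labels)
print(f"AUC = {auc:.3f}")
```

An AUC computed on the same samples used to select biomarkers is optimistic, which is why the protocol above calls for evaluation in independent cohorts.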

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Essential Research Reagents for Metabolomics Studies

| Reagent/Material | Function | Application Examples |
|---|---|---|
| EDTA-coated Blood Collection Tubes | Prevents coagulation and preserves metabolite stability during plasma separation [4] [5] | Standard for plasma metabolomics in rheumatoid arthritis and pregnancy loss studies [4] [5] |
| Methanol and Acetonitrile | Protein precipitation and metabolite extraction solvents [4] [5] | Used in 1:1 ratio for global metabolite extraction in untargeted studies [5] |
| Isotopically Labeled Internal Standards | Enables absolute quantification and corrects for analytical variability [3] | Essential for targeted metabolomics assays (e.g., Biocrates kits) [7] [3] |
| UHPLC Columns (C18 and HILIC) | Separation of metabolites based on hydrophobicity or hydrophilicity [4] [5] | Hypersil Gold C18 for reversed-phase; BEH Amide for HILIC chromatography [4] [5] |
| Mass Spectrometry Quality Control Pools | Monitors instrument stability and technical variability across runs [4] | Pooled samples injected regularly throughout analytical sequence [4] |
| Compound Identification Databases | Annotates metabolites using mass, retention time, and fragmentation data [4] | mzCloud, HMDB, KEGG for metabolite identification [4] |
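
The first step of database annotation, matching an observed m/z against theoretical masses within a ppm tolerance, can be sketched as follows. The four-entry library is a toy stand-in for a real database, the monoisotopic masses are computed from standard atomic masses, and the 5 ppm tolerance and the [M+H]+ adduct are assumptions. An accurate-mass hit alone is only a candidate annotation; retention time and MS/MS matching are still needed.

```python
PROTON_MASS = 1.007276  # mass shift for an [M+H]+ ion, in Da

# Toy library: neutral monoisotopic masses in Da (not a real database export).
library = {
    "L-citrulline": 175.09569,
    "succinic acid": 118.02661,
    "citric acid": 192.02700,
    "glucose": 180.06339,
}


def annotate(observed_mz, library, adduct_shift=PROTON_MASS, tol_ppm=5.0):
    """Return (name, ppm error) candidates within tol_ppm, best match first."""
    hits = []
    for name, neutral_mass in library.items():
        theoretical_mz = neutral_mass + adduct_shift
        ppm_error = (observed_mz - theoretical_mz) / theoretical_mz * 1e6
        if abs(ppm_error) <= tol_ppm:
            hits.append((name, round(ppm_error, 2)))
    return sorted(hits, key=lambda hit: abs(hit[1]))


hits = annotate(176.1032, library)
print(hits)
```

For negative-mode data the same function could be called with `adduct_shift=-PROTON_MASS` to model [M-H]- ions.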

Cross-Validation in Practice: Case Studies and Data Integration

Successful Cross-Validation Applications

The integration of discovery and hypothesis-driven approaches has proven particularly powerful in large-scale metabolomics studies. The UK Biobank recently completed what is reported as the world's largest metabolomic dataset, with metabolomic profiles for approximately 500,000 participants [8]. This resource supports both discovery of novel biomarkers and hypothesis-driven research on specific metabolic pathways, and it includes data from 20,000 participants collected at two time points five years apart to track metabolic changes [8].

In rheumatoid arthritis research, a comprehensive multi-center study analyzed 2,863 samples across seven cohorts [5]. The research team first employed untargeted metabolomics to identify potential biomarkers, then developed targeted assays to validate six key metabolites (imidazoleacetic acid, ergothioneine, N-acetyl-L-methionine, 2-keto-3-deoxy-D-gluconic acid, 1-methylnicotinamide, and dehydroepiandrosterone sulfate) [5]. The resulting classification models demonstrated robust performance across geographically distinct validation cohorts, with AUC values of 0.8375-0.9280 for distinguishing RA from healthy controls [5].

In diabetic retinopathy, researchers performed both targeted and untargeted metabolomics on the same sample set, followed by cross-validation and confirmation with ELISA [7]. This integrated approach identified L-Citrulline, indoleacetic acid, chenodeoxycholic acid, and eicosapentaenoic acid as distinctive biomarkers that could differentiate disease stages [7]. The study demonstrated that targeted metabolomics provided higher accuracy for serum metabolite expression, while untargeted approaches revealed a broader metabolic landscape [7].

Validation Frameworks and Performance Metrics

Table 4: Cross-Validation Performance Across Disease Areas

| Disease Context | Sample Size | Untargeted Discovery Findings | Targeted Validation Results | Clinical Utility |
|---|---|---|---|---|
| Genetic Disorders (IEMs) | 226 patients (87 with known disorders) [6] | 86% sensitivity for detecting diagnostic metabolites vs. targeted [6] | Concordance ranged from 0-100% across metabolites [6] | Diagnostic yield: 0.7% in undiagnosed patients [6] |
| Schistosomiasis (Multiple species) | 14 studies reviewed [9] | Identified alterations in glycolysis, TCA cycle, amino acid metabolism [9] | Succinate and citrate as key biomarkers across species [9] | Potential for diagnostic biomarkers and novel therapeutics [9] |
| Pregnancy Loss | 192 participants (70 PL, 122 controls) [4] | 57 significantly altered metabolites; 3 key biomarkers with AUC 0.936-0.991 [4] | Combined biomarker panel achieved AUC of 0.993 [4] | Noninvasive diagnostic potential for early detection [4] |

The dichotomy between discovery-oriented and hypothesis-driven approaches represents a false choice in modern metabolomics research. Rather than opposing methodologies, they form complementary pillars of a robust scientific strategy. Discovery science casts a wide net to identify novel patterns and generate hypotheses, while hypothesis-driven research provides the rigorous validation necessary for scientific credibility and clinical translation.

The most successful metabolomics studies strategically integrate both paradigms, using untargeted approaches for initial discovery and targeted methods for validation and quantification. This integrated framework has demonstrated substantial utility across diverse research areas, from rheumatoid arthritis and diabetic retinopathy to pregnancy loss and genetic disorders. As metabolomics continues to evolve, the synergistic combination of these approaches will remain essential for advancing biological understanding and developing clinically useful biomarkers.

For researchers designing metabolomics studies, the key consideration is not which approach to use, but how to most effectively sequence and integrate them to address specific research questions. By leveraging the strengths of both discovery and hypothesis-driven science, the metabolomics community can continue to unravel the complex metabolic underpinnings of health and disease.

Metabolomic strategies are fundamentally categorized into two distinct approaches: targeted and untargeted metabolomics [3]. This division represents a critical methodological choice for researchers, balancing the depth of quantitative analysis against the breadth of metabolic coverage. The core distinction lies in their scope; targeted metabolomics focuses on the precise measurement of a predefined set of characterized and biochemically annotated analytes, while untargeted metabolomics aims for a global, comprehensive analysis of all measurable metabolites in a sample, including unknown entities [3] [10]. This analytical framework is essential for cross-validation in metabolomics research, where the strengths of one approach often compensate for the weaknesses of the other, enabling a more robust and comprehensive biological interpretation [3] [7]. This guide provides an objective comparison of their performance based on experimental data, framing the discussion within the broader context of validating metabolomic findings.

Analytical Framework: A Direct Comparison of Performance Metrics

The choice between targeted and untargeted metabolomics significantly impacts experimental outcomes, influencing factors such as biomarker discovery potential, quantitative accuracy, and data complexity. The table below summarizes the core characteristics of each approach.

Table 1: Core Characteristics of Targeted and Untargeted Metabolomics

| Feature | Targeted Metabolomics | Untargeted Metabolomics |
|---|---|---|
| Primary Goal | Hypothesis-driven validation and precise quantification [3] [11] | Hypothesis-generating discovery [3] [11] |
| Analytical Scope | Narrow; focuses on a predefined set of known metabolites (e.g., ~20 to a few hundred) [3] [11] | Broad; aims to detect all possible metabolites, known and unknown (hundreds to thousands) [3] [10] |
| Quantification | Absolute quantification using calibration curves and labeled internal standards [3] [11] | Relative quantification (semi-quantitative); compares metabolite levels between sample groups [3] [11] |
| Sensitivity & Specificity | High sensitivity and specificity for targeted metabolites [11] | Variable sensitivity; can miss low-abundance metabolites; lower specificity for individual compounds [11] |
| Key Strength | High precision, reproducibility, and reduced false positives [3] | Unbiased coverage, potential for novel biomarker discovery [3] |
| Primary Limitation | Limited scope; risk of missing unexpected metabolites of interest [3] | Complex data analysis; challenges in metabolite identification and quantification [3] [12] |

Experimental Data and Validation Protocols

Case Study in Diabetic Retinopathy: Cross-Validation in Practice

A 2022 study on Diabetic Retinopathy (DR) in a Chinese population provides a powerful example of how targeted and untargeted metabolomics can be cross-validated [7]. The research aimed to identify biomarkers critical to the development of DR.

- Experimental Protocol: The study utilized a case-control design with 83 participants with type 2 diabetes and 27 matched controls [7]. Plasma samples were analyzed using both targeted and untargeted liquid chromatography-mass spectrometry (LC-MS) platforms. The results from the two approaches were directly compared. Key mutual differential metabolites, including L-Citrulline, indoleacetic acid (IAA), chenodeoxycholic acid (CDCA), and eicosapentaenoic acid (EPA), were then validated using a separate technique, enzyme-linked immunosorbent assay (ELISA) [7].

- Key Findings and Quantitative Data: The cross-validation revealed distinct metabolic shifts associated with disease progression. The following table summarizes the experimentally verified changes in key metabolite levels.

Table 2: Experimentally Validated Metabolite Changes in Diabetic Retinopathy Progression [7]

| Metabolite | T2DM vs. Control | DR vs. T2DM | PDR vs. NPDR |
|---|---|---|---|
| L-Citrulline (Cit) | Not Specified | Decreased | Not Specified |
| Indoleacetic Acid (IAA) | Not Specified | Increased | Not Specified |
| Chenodeoxycholic Acid (CDCA) | Not Specified | Not Specified | Significantly Decreased |
| Eicosapentaenoic Acid (EPA) | Not Specified | Not Specified | Significantly Decreased |

- Conclusion: The study confirmed that the progression of DR was significantly correlated with increased IAA and decreased Cit, CDCA, and EPA. It also concluded that targeted metabolomics offered higher accuracy for metabolite expression in serum compared to the untargeted approach, underscoring the value of validation [7].

The Critical Role of Cross-Validation and Permutation Testing

A major challenge in untargeted metabolomics, particularly when using multivariate models like Partial Least Squares-Discriminant Analysis (PLS-DA), is the risk of overfitting and chance classifications [13]. Proper validation is not just beneficial but essential.

- Experimental Protocol: To avoid over-optimistic results, a robust validation strategy based on permutation testing is recommended [13]. This involves:

  - Randomly shuffling the class labels (e.g., "case" and "control") of the samples.

  - Building a new PLS-DA model with the permuted, and therefore meaningless, labels.

  - Repeating this process many times (e.g., 100-1000 iterations) to create a null distribution of model performance metrics (e.g., Q2, classification accuracy) that would be expected by chance.

  - Comparing the performance of the real model (with correct labels) to this null distribution. A statistically significant model must perform better than the vast majority of the permuted models [13].

- Key Findings: Without this rigorous validation, PLS-DA models can produce seemingly perfect separation between groups even with random data, leading to false discoveries [13]. Permutation testing provides a statistically sound reference to ensure the biological validity of the findings.
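
The permutation procedure described above can be sketched in plain Python. To keep the example self-contained, a nearest-centroid classifier scored by leave-one-out accuracy stands in for PLS-DA; this substitution is an assumption made for illustration and is not the method of reference [13]. The two-feature data set is synthetic.

```python
import random


def centroid(rows):
    """Column-wise mean of a list of feature vectors."""
    n = len(rows)
    return [sum(col) / n for col in zip(*rows)]


def sq_dist(a, b):
    return sum((x - y) ** 2 for x, y in zip(a, b))


def loo_accuracy(X, y):
    """Leave-one-out accuracy of a nearest-centroid classifier."""
    correct = 0
    for i in range(len(X)):
        train = [(x, lab) for j, (x, lab) in enumerate(zip(X, y)) if j != i]
        best_label, best_dist = None, float("inf")
        for lab in sorted({l for _, l in train}):
            c = centroid([x for x, l in train if l == lab])
            d = sq_dist(X[i], c)
            if d < best_dist:
                best_label, best_dist = lab, d
        correct += best_label == y[i]
    return correct / len(X)


def permutation_test(X, y, n_permutations=200, seed=0):
    """Compare real-label performance with a shuffled-label null distribution."""
    rng = random.Random(seed)
    observed = loo_accuracy(X, y)
    hits = 0
    for _ in range(n_permutations):
        shuffled = y[:]
        rng.shuffle(shuffled)  # break any real link between labels and data
        if loo_accuracy(X, shuffled) >= observed:
            hits += 1
    # The +1 terms count the real labeling as one member of the null set.
    p_value = (hits + 1) / (n_permutations + 1)
    return observed, p_value


# Synthetic, clearly separated groups (illustration only).
cases = [[2.0 + 0.1 * i, 1.8 + 0.05 * i] for i in range(10)]
controls = [[0.1 * i, 0.2 + 0.05 * i] for i in range(10)]
X = cases + controls
y = ["case"] * 10 + ["control"] * 10

observed, p_value = permutation_test(X, y)
print(f"observed accuracy = {observed:.2f}, permutation p = {p_value:.4f}")
```

With real PLS-DA models the same loop applies, with the cross-validated Q2 or accuracy of the fitted model replacing `loo_accuracy`.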

Methodological Workflows and Emerging Hybrid Approaches

Visualizing Metabolomic Workflows and Their Integration

The fundamental workflows for targeted and untargeted metabolomics involve distinct steps, from initial hypothesis to final validation. Furthermore, the integration of their results is key to a comprehensive analysis.

Bridging the Divide: Pseudotargeted and Hybrid Metabolomics

To overcome the inherent limitations of both traditional approaches, several hybrid strategies have been developed [14] [15]. Among these, pseudotargeted metabolomics has emerged as a powerful compromise [14].

- Principle: This strategy transforms an untargeted method into a targeted one by using the ion pairs (precursor and product ions) discovered during the initial untargeted profiling phase [14] [15]. It typically uses a high-resolution mass spectrometer (HRMS) like a Q-TOF for broad discovery, followed by the creation of a custom ion-pair list. This list is then applied on a highly sensitive triple quadrupole (QQQ) mass spectrometer in Multiple Reaction Monitoring (MRM) mode for precise, quantitative analysis [10] [14].

- Experimental Protocol:

  - Discovery Phase: Analyze pooled quality control (QC) samples using UHPLC-HRMS in data-dependent acquisition (DDA) mode to collect MS/MS spectra for a wide array of metabolites [14].

  - Ion Pair Selection: Software-assisted identification of optimal precursor-product ion pairs for thousands of detected features [14].

  - Quantification Phase: Develop a dynamic MRM method based on the acquired ion list and run the biological samples on a UHPLC-QQQ-MS system for robust quantification [15].

- Key Findings: The pseudotargeted approach combines the high coverage of untargeted metabolomics with the quantitative accuracy and sensitivity of targeted methods [14]. It has been successfully applied in diverse fields, including disease diagnostics, traditional Chinese medicine research, and food authenticity testing [14]. Studies have demonstrated improved repeatability and quantitative capability in large-scale metabolomics studies compared to using either targeted or untargeted approaches alone [15].
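
The ion pair selection step can be illustrated with a simple rule: for each precursor seen in the discovery run, keep the most intense product ion that passes basic filters. This rule, the thresholds, the record layout, and all m/z and intensity values below are hypothetical; the software described in references [14] and [15] may apply different or additional criteria.

```python
from collections import defaultdict

# Hypothetical DDA fragment records:
# (precursor m/z, retention time in min, product m/z, intensity)
fragments = [
    (176.10, 1.2, 159.08, 8.0e4),
    (176.10, 1.2, 70.07, 2.5e5),
    (176.10, 1.2, 113.07, 6.0e4),
    (391.28, 9.8, 373.27, 4.0e4),
    (391.28, 9.8, 45.00, 9.0e5),    # rejected: product m/z below cutoff
    (302.23, 11.4, 257.23, 5.0e3),  # rejected: intensity below cutoff
]


def select_transitions(fragments, min_intensity=1.0e4, min_product_mz=50.0):
    """Pick one precursor-product pair per feature for an MRM method."""
    by_precursor = defaultdict(list)
    for precursor_mz, rt, product_mz, intensity in fragments:
        if intensity >= min_intensity and product_mz >= min_product_mz:
            by_precursor[(precursor_mz, rt)].append((intensity, product_mz))

    transitions = []
    for (precursor_mz, rt), candidates in sorted(by_precursor.items()):
        # max() compares intensity first, so the strongest fragment wins.
        _, product_mz = max(candidates)
        transitions.append(
            {"precursor_mz": precursor_mz, "product_mz": product_mz, "rt_min": rt}
        )
    return transitions


transitions = select_transitions(fragments)
for t in transitions:
    print(t)
```

Keeping the retention time with each transition is what makes a scheduled (dynamic) MRM method possible, since the instrument only needs to monitor each pair near its expected elution window.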

The Scientist's Toolkit: Essential Reagents and Materials

Table 3: Essential Research Reagent Solutions for Metabolomics

| Item | Function | Application Context |
|---|---|---|
| Isotopically Labeled Internal Standards | Correct for matrix effects and losses during sample preparation; enable absolute quantification [3] [11] | Targeted Metabolomics |
| Solvents for Metabolite Extraction | Methanol, acetonitrile, water, and chloroform are used in various combinations to efficiently precipitate proteins and extract a wide range of metabolites [11] | Untargeted & Targeted Metabolomics |
| Derivatization Reagents | Chemically modify metabolites to enhance their volatility, stability, or detectability (e.g., for GC-MS analysis) [15] | Untargeted & Targeted Metabolomics (especially GC-MS) |
| Quality Control (QC) Pooled Samples | A pooled sample from all study samples, used to monitor instrument stability and performance throughout the analytical batch [12] | Untargeted & Targeted Metabolomics |
| Commercial Metabolite Standards | Pure chemical standards used for compound identification, method development, and creating calibration curves [11] | Targeted Metabolomics & Method Development |
| Metabolomics Kits | Pre-configured kits with optimized protocols and standards for quantifying a defined panel of metabolites (e.g., Biocrates MxP kits) [7] [15] | Targeted Metabolomics |
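
One common use of pooled QC injections is to compute the relative standard deviation (RSD) of each feature across the QC runs and to discard features that are not measured reproducibly. The sketch below assumes a 30% RSD cutoff, which is a frequently used convention rather than a value given in the sources, and the intensities are invented.

```python
from statistics import mean, stdev


def rsd_percent(values):
    """Relative standard deviation (coefficient of variation) in percent."""
    return stdev(values) / mean(values) * 100.0


# Invented peak intensities for two features across five pooled QC injections.
qc_intensities = {
    "feature_A": [1000.0, 1020.0, 980.0, 1010.0, 990.0],
    "feature_B": [500.0, 900.0, 300.0, 700.0, 450.0],
}


def stable_features(qc, max_rsd=30.0):
    """Keep features whose QC RSD does not exceed max_rsd (assumed cutoff)."""
    return [name for name, values in qc.items() if rsd_percent(values) <= max_rsd]


kept = stable_features(qc_intensities)
for name, values in qc_intensities.items():
    print(f"{name}: RSD = {rsd_percent(values):.1f}%")
print("kept:", kept)
```

Because every QC injection is the same pooled material, variation across them reflects the instrument and sample handling rather than biology, which is what makes the RSD a useful stability check.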

Targeted and untargeted metabolomics are not opposing but complementary strategies. Targeted metabolomics provides the sensitivity, specificity, and quantitative rigor necessary for hypothesis testing and biomarker validation. In contrast, untargeted metabolomics offers an unbiased, systems-level view ideal for discovery and hypothesis generation. The most powerful metabolomics research frameworks strategically employ both, using untargeted methods to map the metabolic terrain and targeted methods to drill down into key findings with precision. Furthermore, the emergence of hybrid and pseudotargeted approaches provides a practical pathway to harness the strengths of both worlds, enabling larger-scale studies with both broad coverage and confident quantification.

The metabolome, representing the complete set of small-molecule metabolites in a biological system, serves as the ultimate functional readout of cellular processes, reflecting the complex interplay between genetic predisposition, environmental influences, and lifestyle factors [16]. Unlike other omics layers, metabolites lie closest to phenotype and provide a direct snapshot of an organism's physiological state at a specific point in time [16] [17]. The concept of "metabolic phenotypes" has emerged as a powerful framework for understanding how metabolic profiles bridge healthy homeostasis and disease-related metabolic disruption [16]. These phenotypes precisely capture the outcome of multidimensional interactions among genetic background, environmental exposures, lifestyle choices, and gut microbiome composition, thereby serving as key molecular links to phenotypic expression [16]. Recent technological advances in high-throughput metabolomics have enabled researchers to systematically quantify and analyze these metabolites, transforming our ability to decipher the metabolic signatures underlying diverse physiological states and disease conditions [16] [18].

The fundamental premise that makes the metabolome such a valuable functional readout is its position as the terminal downstream product of the genome [3]. Metabolites, typically defined as molecules with a molecular weight below 1,500 Da, include diverse classes such as amino acids, sugars, lipids, fatty acids, steroids, and other small molecules that participate in metabolic reactions or are produced as intermediates or end products [18]. Their levels can be dynamically altered in response to various stimuli, making them sensitive indicators of physiological stress, disease processes, or therapeutic interventions [16]. This proximity to actual phenotypic manifestation means that metabolic changes often provide the most immediate and functional information about the current state of a biological system, offering unique insights that complement genomic, transcriptomic, and proteomic data [17].

Methodological Approaches in Metabolomics

Comparative Analysis of Targeted vs. Untargeted Metabolomics

Metabolomic methodologies are broadly categorized into two primary approaches: untargeted and targeted metabolomics, each with distinct advantages, limitations, and appropriate applications [10] [3]. The choice between these approaches represents a fundamental strategic decision in metabolomics study design, with significant implications for experimental outcomes, data interpretation, and biological insights.

Table 1: Core Characteristics of Targeted and Untargeted Metabolomics

| Feature | Targeted Metabolomics | Untargeted Metabolomics |
|---|---|---|
| Scope | Focused analysis of predefined metabolites | Comprehensive analysis of all detectable metabolites |
| Philosophy | Hypothesis-driven | Discovery-oriented |
| Number of Metabolites | Typically ~20 metabolites per assay [10] | Thousands of metabolites [10] |
| Quantitation | Absolute quantification using internal standards [10] | Relative quantification [10] |
| False Positives | Minimal due to predefined parameters [3] | Higher potential without proper validation [3] |
| Data Complexity | Low to moderate | High, requiring extensive processing [10] |
| Ideal Application | Validation of specific metabolic pathways | Hypothesis generation, biomarker discovery [10] |

Targeted metabolomics is a hypothesis-driven approach that focuses on identifying and characterizing a predefined set of known metabolites, leveraging existing knowledge of metabolic processes and molecular pathways [3]. This method utilizes isotopically labeled internal standards and clearly defined analytical parameters to achieve high precision and accuracy in metabolite quantification [3]. The key advantage of targeted approaches lies in their ability to provide absolute quantification of specific metabolites with high sensitivity and reproducibility, making them particularly valuable for validating potential biomarkers or investigating specific metabolic pathways [10] [3]. However, the targeted approach is limited by its dependency on prior knowledge and its restricted scope, which may cause researchers to miss unexpected but biologically relevant metabolites [10].

In contrast, untargeted metabolomics adopts a global, comprehensive analytical perspective, aiming to capture as many metabolites as possible within a sample, including unknown compounds [10] [3]. This discovery-oriented approach does not require extensive prior knowledge of metabolite identities and enables the systematic measurement of thousands of metabolites in an unbiased manner [3]. The primary strength of untargeted metabolomics lies in its ability to reveal novel metabolic patterns and unexpected biological relationships, making it ideal for hypothesis generation and comprehensive metabolic profiling [10]. However, this approach generates massive, complex datasets that require sophisticated statistical analysis and computational processing [10] [3]. Additional challenges include decreased analytical precision due to relative quantification, difficulty in identifying unknown metabolites without reference standards, and a detection bias toward higher abundance metabolites [10] [3].

Analytical Platforms and Technologies

The execution of both targeted and untargeted metabolomics studies relies primarily on two analytical platforms: mass spectrometry (MS) and nuclear magnetic resonance (NMR) spectroscopy [18]. Each platform offers distinct advantages and limitations that must be considered when designing metabolomics investigations.

MS-based metabolomics is typically preceded by a separation step using liquid chromatography (LC-MS) or gas chromatography (GC-MS), which reduces sample complexity and enhances compound detection [18]. LC-MS is particularly suitable for detecting moderately polar to highly polar compounds, including fatty acids, alcohols, phenols, vitamins, organic acids, polyamines, nucleotides, polyphenols, terpenes, and flavonoids [18]. GC-MS is limited to volatile compounds or those that can be derivatized into volatile forms, such as amino acids, organic acids, fatty acids, sugars, and polyols [18]. The major advantages of MS-based approaches include high sensitivity, reliable metabolite identification, and the ability to detect compounds at low concentrations [18]. The main disadvantages include the high cost of instrumentation and the requirement for sample separation or purification prior to analysis [18].

NMR spectroscopy, on the other hand, is based on the absorption and re-emission of radiofrequency energy by atomic nuclei placed in a strong external magnetic field [18]. This technique generates spectral data that can be used to quantify metabolite concentrations and characterize chemical structures. Key advantages of NMR include its non-destructive nature, high reproducibility, minimal sample preparation requirements, and ability to provide rich structural information quickly [18]. However, NMR has lower sensitivity than MS, meaning that low-concentration metabolites may be undetectable amidst more abundant compounds [18].

Table 2: Comparison of Major Analytical Platforms in Metabolomics

| Parameter | LC-MS | GC-MS | NMR |
|---|---|---|---|
| Sensitivity | High (pM-nM) | High (pM-nM) | Moderate (μM-mM) |
| Sample Preparation | Moderate | Extensive (derivatization) | Minimal |
| Reproducibility | Moderate | Moderate | High |
| Structural Information | Moderate (via fragmentation) | Moderate | High |
| Throughput | Moderate | Moderate | High |
| Quantitation | Good (with standards) | Good (with standards) | Excellent |
| Destructive | Yes | Yes | No |
| Key Applications | Polar to non-polar metabolites | Volatile/semi-volatile metabolites | Structure elucidation, flux analysis |

Emerging Approaches and Integration Strategies

To address the limitations of both targeted and untargeted approaches, researchers have developed hybrid strategies that leverage the strengths of each method [10] [3]. The "widely-targeted" metabolomics approach represents one such innovation, combining the comprehensive coverage of untargeted methods with the precise quantification of targeted approaches [10]. This methodology typically involves two stages. First, an untargeted analysis on a high-resolution mass spectrometer collects primary (MS1) and secondary (MS2) mass spectra from various samples. Second, a targeted analysis on a low-resolution triple quadrupole (QQQ) mass spectrometer, operated in multiple reaction monitoring (MRM) mode, provides quantitative data for the metabolites identified during the discovery phase [10].

Another emerging trend is the integration of metabolomics with genome-wide association studies (mGWAS), which helps establish genetic associations with variation in metabolite levels and provides deeper insights into the causal mechanisms underlying physiology and disease [10]. This integration has been instrumental in identifying key metabolites associated with disease risk, such as branched-chain amino acids in pancreatic cancer development [3].

Experimental Protocols and Workflows

Standardized Metabolomics Workflow

A robust metabolomics study follows a systematic workflow encompassing sample collection, preparation, data acquisition, processing, and statistical analysis [18]. Adherence to standardized protocols is essential for generating reliable, reproducible data that accurately reflects biological variation rather than technical artifacts.

Sample Preparation Protocols

Sample preparation varies significantly between targeted and untargeted approaches. For targeted metabolomics, specific extraction procedures optimized for the metabolites of interest are employed, typically requiring appropriate internal standards for quantification [10] [3]. In contrast, untargeted metabolomics requires global metabolite extraction procedures designed to capture the broadest possible range of metabolites without bias toward specific chemical classes [10] [3]. Common to both approaches is the critical need for immediate sample stabilization after collection, typically through flash-freezing in liquid nitrogen, to preserve metabolic profiles and prevent ongoing enzymatic activity that could alter metabolite levels [18].

The quality control framework incorporates multiple elements: procedural blanks to identify contamination, technical replicates to assess analytical variance, and pooled quality control samples (typically created by combining small aliquots of all biological samples) that are analyzed at regular intervals throughout the analytical sequence [18] [19]. These QC samples are essential for monitoring instrument performance, evaluating technical variation, and correcting for batch effects [18] [19]. For large-scale studies, standardized reference materials such as the National Institute of Standards and Technology (NIST) standard reference material (SRM) 1950 for plasma metabolomics may be incorporated [19].
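One common way to use pooled QC injections is to compute, for each feature, the relative standard deviation (RSD) across the QC runs and to drop features that vary too much. The sketch below illustrates that idea with the Python standard library only. The feature names, peak areas, and the 30% cutoff are illustrative assumptions, not values taken from the cited studies.

```python
from statistics import mean, stdev

def qc_rsd(intensities):
    """Relative standard deviation (%) of one feature across pooled-QC injections."""
    m = mean(intensities)
    if m == 0:
        return float("inf")
    return 100.0 * stdev(intensities) / m

def filter_features_by_qc(qc_table, max_rsd=30.0):
    """Keep features whose QC RSD is at or below max_rsd (an assumed cutoff)."""
    kept = {}
    for name, values in qc_table.items():
        rsd = qc_rsd(values)
        if rsd <= max_rsd:
            kept[name] = round(rsd, 2)
    return kept

# Hypothetical peak areas for three features across five pooled-QC injections.
qc_table = {
    "feature_A": [1000.0, 1020.0, 980.0, 1010.0, 990.0],  # stable signal
    "feature_B": [500.0, 900.0, 300.0, 1200.0, 100.0],    # unstable signal
    "feature_C": [50.0, 52.0, 49.0, 51.0, 48.0],          # stable signal
}

kept = filter_features_by_qc(qc_table)
print(kept)  # feature_B is removed because its QC RSD is far above the cutoff
```

The appropriate cutoff depends on the platform and study design, so it should be treated as a tunable parameter rather than a fixed rule.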

Data Processing and Statistical Analysis

Data processing represents a critical phase in the metabolomics workflow, particularly for untargeted studies where the volume and complexity of data are substantially greater [18]. Raw data from mass spectrometry instruments must undergo multiple processing steps including noise reduction, retention time correction, peak detection and integration, chromatographic alignment, and compound identification [18]. Specialized software tools such as XCMS, MAVEN, and MZmine 3 are commonly employed for these tasks [18].

A key challenge in metabolomics data analysis is the proper handling of missing values, which can arise from various sources including analytical issues or metabolite abundances falling below detection limits [19]. The most appropriate strategy for dealing with missing values depends on their nature: missing completely at random (MCAR), missing at random (MAR), or missing not at random (MNAR) [19]. Commonly used imputation methods include replacement with a constant value (e.g., a percentage of the lowest concentration measured), k-nearest neighbors (kNN) imputation, or random forest-based imputation [19].
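The simplest of these strategies, replacement with a constant derived from the lowest measured value, can be sketched as follows. The function name and the default fraction of 0.5 are assumptions made for illustration. This kind of replacement is usually motivated by values that are missing because they fall below the detection limit (MNAR), and it is not a substitute for kNN or random forest imputation when values are missing for other reasons.

```python
def impute_min_fraction(values, fraction=0.5):
    """Replace missing entries (None) with fraction * the smallest observed value."""
    observed = [v for v in values if v is not None]
    if not observed:
        raise ValueError("feature has no observed values to impute from")
    fill = fraction * min(observed)
    return [fill if v is None else v for v in values]

# Hypothetical intensities for one metabolite across five samples.
feature = [12.0, None, 8.0, 15.0, None]
imputed = impute_min_fraction(feature)
print(imputed)  # [12.0, 4.0, 8.0, 15.0, 4.0]
```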

Statistical analysis in metabolomics typically involves both unsupervised and supervised methods. Unsupervised approaches such as principal component analysis (PCA) are used for exploratory data analysis and quality control, while supervised methods like partial least squares-discriminant analysis (PLS-DA) are employed for classification and biomarker discovery [13]. However, PLS-DA is particularly prone to overfitting, especially with the high-dimensional data typical of metabolomics studies, making rigorous validation essential [13]. Proper validation strategies include cross-model validation and permutation testing, which generates a null distribution for assessing statistical significance [13].

Cross-Validation in Metabolomics Studies

The Critical Need for Validation

Cross-validation is particularly crucial in metabolomics due to the high dimensionality of the data, where the number of variables (metabolites) often far exceeds the number of samples (observations) [13] [19]. This data structure makes metabolomics studies highly susceptible to overfitting, where models appear to perform well on the data used to build them but fail to generalize to new samples [13]. The problem is exacerbated with supervised methods such as PLS-DA, where apparent class separation can emerge purely by chance in high-dimensional space [13]. Demonstrating this risk, one study showed that applying PLS-DA to randomly generated data with arbitrary class assignments frequently produces score plots showing apparent "separation" between groups, despite the absence of any true biological differences [13].
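The effect can be reproduced in a few lines. The sketch below is not PLS-DA: it swaps in a much simpler nearest-centroid classifier as a stand-in, and all data are random numbers with arbitrary labels. Even so, with 20 samples and 500 features the model classifies its own training data almost perfectly, while accuracy on fresh random samples stays near chance.

```python
import random

def centroid(rows):
    """Column-wise mean of a list of equal-length feature vectors."""
    n = len(rows)
    return [sum(col) / n for col in zip(*rows)]

def sq_dist(a, b):
    return sum((x - y) ** 2 for x, y in zip(a, b))

def fit(X, y):
    """Nearest-centroid 'model': one centroid per class label."""
    return {label: centroid([x for x, l in zip(X, y) if l == label])
            for label in sorted(set(y))}

def accuracy(model, X, y):
    preds = [min(model, key=lambda label: sq_dist(x, model[label])) for x in X]
    return sum(p == t for p, t in zip(preds, y)) / len(y)

def random_data(rng, n_samples, n_features):
    """Pure noise with arbitrary alternating class labels (no real signal)."""
    X = [[rng.gauss(0.0, 1.0) for _ in range(n_features)] for _ in range(n_samples)]
    y = ["A" if i % 2 == 0 else "B" for i in range(n_samples)]
    return X, y

rng = random.Random(0)
X_train, y_train = random_data(rng, n_samples=20, n_features=500)
X_new, y_new = random_data(rng, n_samples=200, n_features=500)

model = fit(X_train, y_train)
train_acc = accuracy(model, X_train, y_train)
new_acc = accuracy(model, X_new, y_new)
print(f"training accuracy: {train_acc:.2f}, accuracy on new samples: {new_acc:.2f}")
```

The gap between the two numbers is the overfitting that validation is meant to expose.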

Validation Strategies for Targeted vs. Untargeted Approaches

Targeted metabolomics studies typically employ more straightforward validation protocols focused on analytical performance, including determination of precision, accuracy, linearity, limit of detection, and limit of quantification using authentic standards [10] [3]. Method validation also includes stability assessments and evaluation of matrix effects [3]. Because targeted analyses measure a predefined set of metabolites, statistical multiple testing correction is more manageable, with false discovery rates typically controlled using methods such as Benjamini-Hochberg correction [13].
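For reference, the Benjamini-Hochberg adjustment mentioned above can be written in a few lines. This is a minimal standard-library sketch; the p-values are hypothetical and the 0.05 threshold is an assumed significance level.

```python
def benjamini_hochberg(p_values):
    """Return Benjamini-Hochberg adjusted p-values in the original order."""
    m = len(p_values)
    order = sorted(range(m), key=lambda i: p_values[i])
    adjusted = [0.0] * m
    running_min = 1.0
    # Walk from the largest p-value down so adjusted values stay monotonic.
    for rank in range(m, 0, -1):
        i = order[rank - 1]
        running_min = min(running_min, p_values[i] * m / rank)
        adjusted[i] = running_min
    return adjusted

# Hypothetical p-values for five metabolites in a targeted panel.
p_values = [0.001, 0.008, 0.039, 0.041, 0.60]
q_values = benjamini_hochberg(p_values)
significant = [q <= 0.05 for q in q_values]
print(significant)  # [True, True, False, False, False]
```

Note that the third and fourth metabolites have raw p-values below 0.05 but do not survive the correction, which is the situation the adjustment is designed to flag.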

Untargeted metabolomics requires more extensive validation due to the exploratory nature of the approach and the large number of statistical tests performed [13]. Proper validation should include both internal and external validation components [13]. Internal validation techniques include cross-validation (e.g., leave-one-out or k-fold) and permutation testing, which assesses whether the observed classification accuracy exceeds what would be expected by chance [13]. External validation through independent sample sets is considered the gold standard but is not always feasible due to cost or sample availability constraints [13].

Advanced Validation Frameworks

Permutation testing has emerged as a particularly valuable validation technique in metabolomics [13]. This approach involves repeatedly randomizing class labels and rebuilding the classification model to generate a null distribution of model performance metrics [13]. The actual model performance can then be compared to this null distribution to assess statistical significance [13]. This method has the advantage of accounting for the specific characteristics of the dataset, including sample size, data structure, and variation patterns [13].
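The procedure can be sketched as follows, under several stated assumptions: a nearest-centroid classifier stands in for the actual model, leave-one-out accuracy is used as the performance metric, the two-feature dataset is hypothetical, and 200 permutations is an arbitrary choice made to keep the example fast.

```python
import random

def centroid(rows):
    n = len(rows)
    return [sum(col) / n for col in zip(*rows)]

def sq_dist(a, b):
    return sum((x - y) ** 2 for x, y in zip(a, b))

def loo_accuracy(X, y):
    """Leave-one-out accuracy of a nearest-centroid classifier."""
    correct = 0
    for i in range(len(X)):
        train = [(x, l) for j, (x, l) in enumerate(zip(X, y)) if j != i]
        cents = {label: centroid([x for x, l in train if l == label])
                 for label in sorted({l for _, l in train})}
        pred = min(cents, key=lambda label: sq_dist(X[i], cents[label]))
        correct += pred == y[i]
    return correct / len(X)

def permutation_p_value(X, y, n_permutations=200, seed=0):
    """Compare observed accuracy with accuracies obtained after shuffling labels."""
    rng = random.Random(seed)
    observed = loo_accuracy(X, y)
    labels = list(y)
    hits = 0
    for _ in range(n_permutations):
        rng.shuffle(labels)                      # break the label-data link
        if loo_accuracy(X, labels) >= observed:  # model is rebuilt inside
            hits += 1
    # Add-one correction so the estimated p-value is never exactly zero.
    return observed, (hits + 1) / (n_permutations + 1)

# Hypothetical data: two features with a clear difference between groups.
X = ([[0.0 + 0.1 * i, 1.0 - 0.1 * i] for i in range(6)]
     + [[5.0 + 0.1 * i, 6.0 - 0.1 * i] for i in range(6)])
y = ["control"] * 6 + ["case"] * 6

observed, p_value = permutation_p_value(X, y)
print(f"observed accuracy: {observed:.2f}, permutation p-value: {p_value:.3f}")
```

Because the model is refit for every shuffled labeling, the null distribution reflects the same sample size and data structure as the real analysis.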

For studies intending to develop clinical biomarkers, validation across multiple cohorts is essential [17]. Large-scale studies such as the UK Biobank, which has incorporated NMR-based metabolomic profiling of over 274,000 participants, provide unprecedented opportunities for both discovery and validation of metabolic biomarkers across diverse populations [17]. Such large datasets enable robust assessment of metabolite-disease associations and facilitate the development of machine learning-based metabolic risk scores with improved classification performance [17].

Metabolic Pathways and Phenotypic Links

Metabolic Dysregulation in Disease

Metabolomic studies have revealed characteristic patterns of metabolic dysregulation across numerous disease states, providing insights into underlying pathological mechanisms and potential therapeutic targets [16] [18]. These metabolic alterations often involve multiple interconnected pathways rather than isolated metabolite changes, highlighting the systems biology perspective inherent to metabolomics.

Table 3: Characteristic Metabolic Pathway Alterations in Human Diseases

| Disease Category | Dysregulated Pathways | Key Metabolite Changes |
|---|---|---|
| Cancer | Tricarboxylic acid cycle, Amino acid metabolism, Fatty acid metabolism, Choline metabolism [18] | Succinate, uridine, lactate (gastric cancer) [16]; Kanzonol Z, Xanthosine, Nervonyl carnitine (lung cancer) [16]; N1-acetylspermidine (T-cell leukemia) [16] |
| Diabetes | Acylcarnitine metabolism, Palmitic acid metabolism, Linolenic acid metabolism, Carbohydrate metabolism [18] | Elevated branched-chain amino acids (early insulin resistance) [16]; Glycine, serine alterations [18] |
| Cardiovascular Diseases | Lipid metabolism, Fatty acid oxidation, Energy metabolism [16] | Cholesterol to total lipids ratio in LDL particles [17]; Altered HDL and VLDL subfractions [17] |
| Obesity | Glycolysis, TCA cycle, Urea cycle, Glutathione metabolism [18] | Gut microbiota-derived metabolites affecting energy absorption [16]; Altered SCFA profiles [16] |
| Neurodegenerative Disorders | Amino acid metabolism, Fatty acid metabolism, Cholesterol metabolism, Polyamine metabolism [18] | Amyloid-beta peptides (Alzheimer's) [16]; Glycerophospholipid alterations [18] |

The tricarboxylic acid cycle emerges as a commonly dysregulated pathway across multiple cancer types, including bladder, colorectal, and liver cancers [18]. Similarly, alterations in lipid metabolism represent a recurring theme across diverse conditions including cardiovascular disease, diabetes, and cancer [18]. The ratio of cholesterol to total lipids in LDL particles has been identified as one of the most frequently disease-associated metabolic measures, linked to hundreds of different disease conditions in large-scale studies [17].

Temporal Dynamics of Metabolic Changes

A key advantage of metabolic profiling is its ability to detect alterations that precede clinical disease manifestation, offering potential opportunities for early intervention [17]. Longitudinal studies have demonstrated that more than half (57.5%) of metabolites show statistically significant variations from healthy baselines over a decade before disease diagnosis [17]. These temporal patterns vary by disease type, with some conditions showing progressive metabolic alterations beginning many years before clinical onset, while others demonstrate more acute metabolic shifts closer to diagnosis [17].

The gut microbiome plays a particularly important role in shaping host metabolic phenotypes through the production of microbial metabolites such as short-chain fatty acids, which significantly influence energy homeostasis, insulin sensitivity, and inflammatory responses [16]. The gut microbiota also participates in bile acid metabolism, vitamin synthesis, and direct regulation of host lipid and glucose homeostasis [16]. Differences in microbiota composition have been associated with susceptibility to various metabolic diseases, including obesity and diabetes [16].

The Scientist's Toolkit: Essential Research Reagents and Materials

Successful metabolomics studies require carefully selected reagents, standards, and materials to ensure analytical quality and reproducibility. The following table outlines essential components of the metabolomics research toolkit.

Table 4: Essential Research Reagents and Materials for Metabolomics Studies

| Category | Specific Examples | Function and Application |
|---|---|---|
| Internal Standards | Isotopically labeled compounds (¹³C, ¹⁵N, ²H), Stable Isotope-Labeled Internal Standards (SILIS) | Absolute quantification, correction for matrix effects and analytical variation [10] [3] |
| Quality Control Materials | Pooled QC samples, NIST SRM 1950, Procedural blanks, Solvent blanks | Monitoring instrument performance, assessing technical variability, batch effect correction [18] [19] |
| Chromatography Supplies | LC columns (C18, HILIC), GC columns (DB-5MS), Derivatization reagents (BSTFA, methoxyamine) | Compound separation, volatility enhancement for GC-MS, improved detection [18] |
| Sample Preparation Reagents | Organic solvents (methanol, acetonitrile, chloroform), Buffers, Protein precipitation reagents, Solid-phase extraction cartridges | Metabolite extraction, protein removal, sample cleanup, metabolite enrichment [18] |
| Reference Databases | Human Metabolome Database (HMDB), METLIN, MassBank, LipidMaps | Metabolite identification, spectral matching, pathway analysis [18] |
| Data Processing Tools | XCMS, MZmine, MAVEN, MS-DIAL | Peak detection, alignment, normalization, metabolite quantification [18] |
| Statistical Analysis Software | R packages (metabolomics, mixOmics), Python libraries (pandas, scikit-learn), MetaboAnalyst | Data normalization, multivariate statistics, biomarker discovery [19] |

The selection of appropriate internal standards is particularly critical for obtaining accurate quantification, especially in targeted metabolomics [3]. Isotopically labeled standards (with ¹³C, ¹⁵N, or ²H atoms) are ideal because they closely mimic the chemical and physical properties of the target analytes while being distinguishable by mass spectrometry [3]. For untargeted studies, where comprehensive standards may not be available, pooled quality control samples become especially important for monitoring instrument stability and performing data normalization [18] [19].

The metabolome serves as a powerful functional readout that provides unique insights into phenotypic expression, capturing the integrated effects of genetic, environmental, and lifestyle factors on physiological states [16]. Both targeted and untargeted metabolomics approaches offer complementary strengths, with targeted methods providing precise, quantitative data for hypothesis testing, and untargeted methods enabling comprehensive, discovery-oriented profiling [10] [3]. The cross-validation of findings between these approaches strengthens the biological insights gained from metabolomic studies [13].

Future directions in metabolomics research include increased integration with other omics technologies, the application of artificial intelligence for data analysis and pattern recognition, and the development of more sophisticated dynamic metabolic profiling methods [16]. Large-scale population studies such as the UK Biobank are systematically mapping the complex relationships between metabolic profiles and diverse health outcomes, creating comprehensive atlases of metabolic-phenotypic associations [17]. These advances are expected to accelerate the translation of metabolomic discoveries into clinical applications, including early disease detection, personalized risk assessment, and targeted therapeutic interventions [16] [17].

As the field continues to evolve, rigorous validation practices will remain essential for distinguishing true biological signals from analytical artifacts and statistical chance [13]. Proper cross-validation strategies, including permutation testing and independent cohort validation, are critical components of robust metabolomics study design [13] [17]. When implemented with careful attention to methodological details and validation requirements, metabolomics provides an exceptionally powerful approach for linking metabolic profiles to phenotype and advancing our understanding of health and disease.

In the field of metabolomics, the choice between targeted and untargeted strategies is fundamental and dictates the entire experimental workflow, from sample preparation to data interpretation. Targeted metabolomics is a hypothesis-driven approach focused on the precise quantification of a predefined set of known metabolites, often used for validation and absolute quantification [3] [20]. In contrast, untargeted metabolomics is a hypothesis-generating approach that comprehensively captures as many metabolites as possible, both known and unknown, to uncover novel biomarkers and pathways [3] [10]. This guide objectively compares their performance, supported by experimental data, and frames the findings within the broader thesis of cross-validating metabolomics results, providing researchers and drug development professionals with a clear framework for deployment.

Core Differences and Performance Characteristics

The fundamental distinction between the two strategies lies in their scope and objective. The performance characteristics stemming from this difference are quantified in the table below.

Table 1: Core Characteristics and Performance Comparison of Metabolomics Strategies

| Feature | Targeted Metabolomics | Untargeted Metabolomics |
|---|---|---|
| Philosophy | Hypothesis-driven, confirmatory [3] [20] | Hypothesis-generating, discovery-based [3] [20] |
| Scope | Analysis of a predefined set of known metabolites (typically ~20 or more) [3] [21] | Global analysis of all detectable metabolites, known and unknown [3] [10] |
| Quantification | Absolute quantification using internal standards [3] [21] | Relative quantification (semi-quantitative) [3] [10] |
| Precision & Accuracy | High precision and accuracy due to optimized protocols and standards [3] [21] | Lower precision; potential for analytical artifacts and false discoveries [3] [10] |
| Metabolite Coverage | Limited to pre-selected targets; risk of missing unexpected metabolites [3] | Wide coverage (100s-1000s of features); enables unbiased discovery [3] [22] |
| Detection Bias | Reduced bias from high-abundance molecules [3] | Bias towards detecting higher-abundance metabolites [3] |
| Primary Application | Validation of specific metabolic pathways or biomarkers [3] | Biomarker discovery, pathway mapping, and novel insights [3] [23] |

Experimental data directly comparing the two methods highlight these performance trade-offs. One study evaluating the accuracy of substance detection found that a targeted method (QqQHILIC) demonstrated higher accuracy in both technical repetition and inter-batch validation experiments compared to an untargeted method (OrbiHILIC) when analyzing biological samples like NIST plasma, fish liver, and fish brain [21]. This result is consistent with the superior quantitative precision expected of targeted protocols.

Experimental Protocols and Workflows

The decision between a targeted or untargeted strategy dictates the specific protocols for sample preparation, data acquisition, and data analysis. The following diagram outlines the generalized workflows for both approaches, highlighting key differences.

Sample Preparation and Metabolite Extraction

Sample preparation is a critical step that differs significantly between the two approaches [24].

- Targeted Protocol: Extraction procedures are optimized for the specific physical-chemical properties of the pre-defined target metabolites. This involves using specific solvent systems, such as methanol/isopropanol/water mixtures, to efficiently extract the compounds of interest [3] [24]. A hallmark of targeted methods is the addition of isotopically labeled internal standards for each analyte prior to extraction. This step is crucial for correcting for matrix effects and losses during preparation, enabling highly accurate absolute quantification [3] [21] [24].

- Untargeted Protocol: The goal is global metabolite extraction to capture the broadest possible range of metabolites. This often employs biphasic solvent systems (e.g., methanol/chloroform/water) to simultaneously extract both polar and non-polar metabolites [24]. While internal standards can be added, they are not available for every potential unknown metabolite, which contributes to the semi-quantitative nature of the data [3].

Data Acquisition and Instrumentation

The choice of instrumentation is driven by the need for either high quantitative sensitivity or broad mass range and high resolution.

- Targeted Data Acquisition: Typically employs triple quadrupole (QQQ) mass spectrometers coupled with liquid or gas chromatography (LC or GC). These instruments operate in Multiple Reaction Monitoring (MRM) mode, which offers high sensitivity, selectivity, and a wide dynamic range for precise quantification of known compounds [21] [10].

- Untargeted Data Acquisition: Relies on high-resolution mass spectrometers (HRMS) such as Quadrupole-Time of Flight (Q-TOF), Orbitrap, or Fourier Transform Ion Cyclotron Resonance (FT-ICR-MS) instruments [22] [10]. FT-ICR-MS offers the highest mass resolution and accuracy, allowing for the exact mass measurement necessary to determine elemental composition and identify unknown metabolites in complex mixtures [22]. Data acquisition is often performed in Data-Dependent Acquisition (DDA) or Data-Independent Acquisition (DIA) modes to fragment and collect structural data on as many ions as possible [25].

Data Processing and Analysis

The data analysis pipelines diverge to meet the different end goals.

- Targeted Analysis: Processing is relatively straightforward. The area under the chromatographic peak for each target metabolite is quantified relative to its internal standard, and concentration is determined using a calibration curve. Results are compared to reference ranges for biological interpretation [3].

- Untargeted Analysis: This involves complex computational workflows. Raw data undergoes peak picking, alignment, and normalization to create a data matrix of thousands of metabolite features [23] [20]. Multivariate statistical analyses like Principal Component Analysis (PCA) and Partial Least Squares-Discriminant Analysis (PLS-DA) are used to identify patterns and significant features. Subsequent metabolite identification using databases (e.g., HMDB, KEGG) is a major challenge, particularly for novel metabolites [3] [22].
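The targeted quantification step described above (peak-area ratio to an internal standard, converted to a concentration through a calibration curve) can be sketched as follows. The calibrator values and peak areas are hypothetical, and the unweighted linear fit is a simplifying assumption; validated assays may use weighted or non-linear calibration models.

```python
def fit_line(xs, ys):
    """Ordinary least-squares slope and intercept for a calibration line."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sxx = sum((x - mx) ** 2 for x in xs)
    slope = sxy / sxx
    return slope, my - slope * mx

def quantify(analyte_area, istd_area, slope, intercept):
    """Convert an analyte/internal-standard area ratio into a concentration."""
    ratio = analyte_area / istd_area
    return (ratio - intercept) / slope

# Hypothetical calibrators: known concentration (µM) vs. measured area ratio.
cal_conc = [1.0, 5.0, 10.0, 50.0, 100.0]
cal_ratio = [0.02, 0.10, 0.20, 1.00, 2.00]
slope, intercept = fit_line(cal_conc, cal_ratio)

# Hypothetical sample: analyte and internal-standard peak areas.
conc = quantify(analyte_area=45000.0, istd_area=60000.0,
                slope=slope, intercept=intercept)
print(round(conc, 2))  # 37.5 (µM)
```

Dividing by the internal-standard area is what lets the isotopically labeled standard absorb extraction losses and matrix effects before the calibration line is applied.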

Cross-Validation: An Integrated Framework

The most powerful applications of metabolomics arise from integrating targeted and untargeted strategies in a cross-validation framework. This hybrid approach leverages the strengths of each method to generate robust and biologically insightful findings.

Table 2: Clinical Validation Performance in a Diagnostic Setting

| Metric | Targeted Metabolomics | Untargeted Metabolomics |
|---|---|---|
| Sensitivity (vs. Targeted as Benchmark) | Benchmark (100% for its targets) | 86% (95% CI: 78–91) [6] |
| Key Strengths | Gold standard for validating and monitoring known IEMs [6] | Detects novel biomarkers; provides functional validation for VUS from genomics [6] |
| Reported Limitations | Can miss diagnostically relevant patterns outside predefined panel [6] | May miss specific key metabolites (e.g., homogentisic acid in alkaptonuria) [6] |

A seminal 3-year comparative clinical study underscores the complementary nature of these strategies. The study found that while untargeted metabolomics showed high sensitivity in detecting known inborn errors of metabolism (IEMs), there were clinically relevant discrepancies. For example, it failed to detect homogentisic acid in alkaptonuria patients, a key diagnostic metabolite [6]. Conversely, in a patient with a variant of unknown significance (VUS) in the ODC1 gene, extensive targeted analysis was unremarkable, but untargeted metabolomics successfully identified elevated levels of N-acetylputrescine, a novel biomarker that functionally validated the genetic finding [6]. This demonstrates the unique discovery power of untargeted profiling.

The following diagram illustrates a robust, integrated workflow for cross-validating metabolomics results.

This integrated model is exemplified in studies of hyperuricemia, where untargeted metabolomics was first used to screen for novel candidate biomarkers, which were subsequently verified using targeted metabolomics [3] [10]. This two-phase approach ensures that discoveries are not merely observational but are quantitatively validated. Advances in "semi-targeted" or widely-targeted metabolomics further formalize this integration. This method uses high-resolution MS data to build a library of metabolites, which is then used to develop a targeted MRM assay on a QQQ instrument, allowing for the high-throughput and precise quantification of hundreds of pre-identified metabolites [10].
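One simple way to check whether a candidate from the untargeted phase holds up in the targeted phase is to compare, sample by sample, the untargeted relative intensity with the targeted concentration of the same metabolite. The sketch below uses a Spearman rank correlation for this purpose. It is an illustrative choice rather than the method of the cited studies, and all values are hypothetical.

```python
def ranks(values):
    """1-based ranks, with tied values sharing their average rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    result = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        average_rank = (i + j) / 2 + 1
        for k in range(i, j + 1):
            result[order[k]] = average_rank
        i = j + 1
    return result

def pearson(a, b):
    n = len(a)
    ma, mb = sum(a) / n, sum(b) / n
    cov = sum((x - ma) * (y - mb) for x, y in zip(a, b))
    var_a = sum((x - ma) ** 2 for x in a)
    var_b = sum((y - mb) ** 2 for y in b)
    return cov / (var_a * var_b) ** 0.5

def spearman(a, b):
    """Spearman correlation: Pearson correlation of the ranks."""
    return pearson(ranks(a), ranks(b))

# Hypothetical measurements of one metabolite in the same six samples.
untargeted_intensity = [1.2e5, 3.4e5, 2.2e5, 8.9e5, 5.1e5, 4.0e5]  # relative
targeted_conc_uM = [10.5, 33.5, 19.8, 80.2, 52.0, 31.0]            # absolute

rho = spearman(untargeted_intensity, targeted_conc_uM)
print(f"Spearman rho between platforms: {rho:.3f}")
```

A rank-based measure is used here because untargeted intensities are only semi-quantitative, so agreement in ordering is a more reasonable expectation than agreement in absolute scale.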

The Scientist's Toolkit: Essential Reagents and Materials

Table 3: Essential Research Reagent Solutions for Metabolomics

| Item | Function | Application Notes |
|---|---|---|
| Isotopically Labeled Internal Standards (e.g., ¹³C, ¹⁵N) | Enables absolute quantification by correcting for matrix effects and extraction efficiency [21] [24]. | Critical for Targeted analysis. Added at the beginning of sample preparation. |
| Methanol-Chloroform Solvent System | Biphasic extraction system for comprehensive recovery of both polar (methanol/water phase) and non-polar (chloroform phase) metabolites [24]. | Common in Untargeted workflows for global metabolite coverage. |
| Quality Control (QC) Pools | A pooled sample created from aliquots of all samples; analyzed repeatedly throughout the batch to monitor instrument stability and data quality [24]. | Essential for both strategies, but particularly critical for detecting drift in long untargeted runs. |
| NIST SRM 1950 Plasma | Standard reference material with certified concentrations of numerous metabolites [21]. | Used for method validation and benchmarking in both targeted and untargeted assays. |
| Solid Phase Extraction (SPE) Kits | Sample clean-up to remove interfering salts and proteins, reducing ion suppression [22]. | Used when sample complexity or matrix effects are high. |
| Derivatization Reagents (e.g., MSTFA for GC-MS) | Chemically modify metabolites to improve volatility, thermal stability, and detection sensitivity [25]. | Commonly used in GC-MS based metabolomics for a wider range of metabolites. |

The choice between targeted and untargeted metabolomics is not a matter of which is superior, but which is appropriate for the research objective. The following guidelines will ensure strategic deployment:

- Deploy Untargeted Metabolomics when the goal is hypothesis generation, biomarker discovery in uncharted disease areas, or functional characterization of unknown phenotypes [3] [23]. It is the first choice for explorative studies and when an unbiased overview of the metabolic state is needed.

- Deploy Targeted Metabolomics when the goal is hypothesis testing, absolute quantification of specific pathway metabolites, validation of biomarkers from a prior untargeted study, or high-throughput clinical validation of known biochemical disorders [3] [6].

- Adopt an Integrated, Cross-Validation Framework for the most robust and impactful results. Use untargeted discovery to cast a wide net and generate candidate biomarkers, then employ targeted validation to confirm these findings with precision and rigor [6] [10]. This synergistic approach is the future of metabolomics research and is essential for advancing drug development and precision medicine.

From Bench to Data: Executing Integrated Metabolomics Workflows

The choice between targeted and untargeted metabolomics is a fundamental strategic decision that dictates every subsequent step in the experimental workflow, beginning with sample preparation. While targeted metabolomics focuses on the precise quantification of a predefined set of metabolites, untargeted metabolomics aims to comprehensively detect as many metabolites as possible, both known and unknown [3]. This fundamental difference in objective necessitates distinct approaches to sample preparation and extraction, which are critical for generating reliable, reproducible, and biologically meaningful data. The growing field of metabolomics has highlighted the necessity of cross-validating findings between these two approaches, a process that begins with optimal and tailored sample preparation [7].

The overarching goal of sample preparation in metabolomics is to effectively extract metabolites while removing interfering compounds, particularly proteins and phospholipids, that can compromise analytical performance. However, the specific priorities for extraction protocols diverge significantly between targeted and untargeted paradigms. This guide provides an objective comparison of sample preparation methods for targeted and untargeted metabolomics, detailing experimental protocols, performance data, and practical considerations for researchers and drug development professionals working to validate metabolomic findings.

Fundamental Distinctions: Targeted vs. Untargeted Metabolomics

Core Objectives and Methodological Philosophies

Targeted metabolomics is a hypothesis-driven approach designed for the precise identification and absolute quantification of a predefined set of biologically relevant metabolites. It requires a priori knowledge of specific metabolic pathways or mechanisms of interest [3]. The sample preparation is optimized for these specific analytes, often employing isotopically labeled internal standards to correct for matrix effects and variations in extraction efficiency, thereby achieving high accuracy and precision [3]. This approach is ideally suited for validating specific biomarkers or testing defined metabolic hypotheses.

In contrast, untargeted metabolomics adopts a discovery-oriented approach to comprehensively profile the metabolome, detecting both known and unknown metabolites without bias [3]. The sample preparation strategy prioritizes broad metabolite coverage and the preservation of chemical diversity over the optimization for any specific compound. Consequently, untargeted methods provide relative quantification rather than absolute concentrations and are powerful tools for hypothesis generation and novel biomarker discovery [3].

Table 1: Core Conceptual Differences Between Targeted and Untargeted Metabolomics

| Feature | Targeted Metabolomics | Untargeted Metabolomics |
|---|---|---|
| Primary Objective | Hypothesis testing; absolute quantification of predefined metabolites | Hypothesis generation; comprehensive relative profiling of known and unknown metabolites |
| Metabolite Coverage | Limited (typically tens to hundreds of metabolites) [3] | Extensive (thousands of metabolites) |
| Quantification | Absolute (using internal standards) | Relative |
| Sample Preparation | Optimized for specific metabolite properties | Generalized for broad chemical diversity |
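The quantification row of Table 1 can be made concrete with a short sketch. The Python below is illustrative only: it assumes a single-point calibration against an isotope-labeled internal standard, and the function names and peak areas are hypothetical rather than taken from the cited studies. Validated targeted assays would normally rely on multi-point calibration curves instead.

```python
def absolute_concentration(analyte_area, istd_area, istd_conc, response_factor=1.0):
    """Targeted-style estimate of analyte concentration.

    Scales the analyte / internal-standard peak-area ratio by the known
    internal-standard concentration. Assumes single-point calibration;
    response_factor is the analyte/ISTD response ratio at equal
    concentration (1.0 for an ideal isotope-labeled analog).
    """
    if istd_area <= 0 or response_factor <= 0:
        raise ValueError("istd_area and response_factor must be positive")
    return (analyte_area / istd_area) * istd_conc / response_factor


def relative_fold_change(case_area, control_area):
    """Untargeted-style readout: ratio of normalized peak areas between groups."""
    if control_area <= 0:
        raise ValueError("control_area must be positive")
    return case_area / control_area


# Hypothetical peak areas, for illustration only.
conc_um = absolute_concentration(analyte_area=250_000, istd_area=500_000, istd_conc=10.0)
fold = relative_fold_change(case_area=360_000, control_area=240_000)
print(f"absolute: {conc_um:.2f} uM, relative: {fold:.2f}-fold")
```

The first function returns a value in concentration units because it is anchored to a standard of known concentration; the second returns a unitless ratio, which is why untargeted results are comparable between groups but not directly between laboratories.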

Experimental Workflows and Cross-Validation Strategy

The experimental workflows for targeted and untargeted metabolomics, while sharing common steps, are defined by their distinct sample preparation and data processing objectives. The following diagram illustrates these parallel pathways and the critical process of cross-validating their results.

Comparative Evaluation of Extraction Methods and Matrices

Performance Metrics for Extraction Protocols

The selection of an optimal extraction method must be guided by well-defined performance metrics that align with the study's goals. For untargeted studies, metabolite coverage is paramount, whereas targeted studies prioritize accuracy, precision, and sensitivity. A comprehensive evaluation of five common extraction methods in both plasma and serum provides critical quantitative data for this decision-making process [26].

Table 2: Performance Comparison of Extraction Methods in Plasma and Serum [26]

| Extraction Method | Total Features (Plasma) | Total Features (Serum) | Repeatability (Plasma) | Linearity (R²) | Matrix Effect (%) |
|---|---|---|---|---|---|
| Methanol | 15,689 | 14,977 | Good | >0.99 | -25 to 15 |
| Methanol/Acetonitrile (1:1) | 15,221 | 14,512 | Good | >0.99 | -30 to 10 |
| Acetonitrile | 13,890 | 13,205 | Moderate | >0.98 | -35 to 5 |
| Methanol-SPE | 12,450 | 11,880 | Excellent | >0.99 | -15 to 5 |
| Acetonitrile-SPE | 11,923 | 11,345 | Excellent | >0.98 | -20 to 8 |

Key findings from this systematic comparison indicate that methanol-based protein precipitation provides the broadest metabolome coverage in both plasma and serum, making it highly suitable for untargeted studies [26]. The addition of Solid-Phase Extraction (SPE) cleanup, while reducing overall feature count, significantly improves method repeatability and reduces ion suppression/enhancement effects (matrix effects), which is beneficial for targeted assays requiring high precision [26]. The data also confirms that plasma generally yields a higher number of detected features compared to serum across all extraction methods, establishing it as the preferred matrix for comprehensive metabolomic analysis [26].
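As a hedged illustration of how these metrics might be computed and used, the sketch below defines a signed matrix effect and a coefficient of variation, then ranks the methods using the plasma values transcribed from Table 2. The formulas are common conventions and are assumed here; the cited study may have defined its metrics differently. All function names are hypothetical.

```python
from statistics import mean, stdev


def matrix_effect_percent(area_in_matrix, area_in_solvent):
    """Signed matrix effect: negative means ion suppression, positive enhancement.

    Assumed convention: response of a post-extraction spike relative to the
    same amount in neat solvent, minus one, expressed in percent.
    """
    return (area_in_matrix / area_in_solvent - 1.0) * 100.0


def cv_percent(replicate_areas):
    """Repeatability as the coefficient of variation across replicates."""
    return stdev(replicate_areas) / mean(replicate_areas) * 100.0


# Plasma feature counts and matrix-effect ranges (%) transcribed from Table 2.
TABLE_2 = {
    "Methanol": {"features_plasma": 15689, "matrix_effect": (-25, 15)},
    "Methanol/Acetonitrile (1:1)": {"features_plasma": 15221, "matrix_effect": (-30, 10)},
    "Acetonitrile": {"features_plasma": 13890, "matrix_effect": (-35, 5)},
    "Methanol-SPE": {"features_plasma": 12450, "matrix_effect": (-15, 5)},
    "Acetonitrile-SPE": {"features_plasma": 11923, "matrix_effect": (-20, 8)},
}


def broadest_coverage(table):
    """Method with the most detected features (the untargeted priority)."""
    return max(table, key=lambda method: table[method]["features_plasma"])


def narrowest_matrix_effect(table):
    """Method with the smallest matrix-effect span (the targeted priority)."""
    def span(method):
        low, high = table[method]["matrix_effect"]
        return high - low
    return min(table, key=span)


print(broadest_coverage(TABLE_2))
print(narrowest_matrix_effect(TABLE_2))
```

Ranking by a single column is a simplification. In practice the choice would also weigh repeatability, linearity, and the chemistry of the analytes of interest.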

Multiomics Protocol Comparison

The challenge of sample preparation is further compounded in integrated multiomics studies. A recent systematic comparison of a biphasic extraction (e.g., MTBE-based) and a monophasic bead-based extraction for the simultaneous analysis of metabolites, lipids, and proteins from HepG2 cells offers valuable insights [27].

The biphasic protocol separates polar metabolites (aqueous phase) and lipids (organic phase), with proteins recovered from the interphase pellet for subsequent digestion and proteomic analysis [27]. In contrast, the monophasic approach uses a solvent like n-butanol:ACN to simultaneously extract metabolites and lipids, while proteins are aggregated on silica beads for accelerated on-bead tryptic digestion [27]. The bead-based monophasic method was found to be the most reproducible, efficient, and cost-effective solution for an integrated multiomics workflow from plated cells, though the optimal choice may depend on the specific analytical setup and research priorities [27].

Detailed Experimental Protocols for Cross-Validation

Protocol for Untargeted Metabolomics: Methanol Precipitation

This protocol is optimized for maximum metabolite coverage from blood-derived samples (plasma or serum) and is widely used in untargeted discovery phases [26].

1. Thawing: Thaw frozen plasma/serum samples on ice.
2. Aliquoting: Aliquot 50-100 µL of sample into a microcentrifuge tube.
3. Protein Precipitation: Add 3-4 volumes of ice-cold methanol (e.g., 300 µL methanol to 100 µL plasma). For broader coverage, a 1:1 mixture of methanol and acetonitrile can be used.
4. Mixing: Vortex vigorously for 30-60 seconds.
5. Incubation: Incubate the mixture at -20°C for at least 60 minutes to enhance protein precipitation.
6. Centrifugation: Centrifuge at >14,000 × g for 15 minutes at 4°C.
7. Collection: Carefully collect the supernatant, which contains the extracted metabolites, into a new LC-MS vial.
8. Storage: Evaporate the supernatant to dryness using a vacuum centrifuge and store the dry extract at -80°C until analysis. Reconstitute in a solvent compatible with the LC-MS method (e.g., ACN:water 1:1, v:v) [27].
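Two numbers in this protocol often need converting at the bench: the solvent volume for a given aliquot and the rotor speed that delivers the stated centrifugal force. The sketch below is a minimal helper under stated assumptions: the function names are hypothetical, the 8 cm rotor radius is a placeholder to be replaced with the value for the actual rotor, and the conversion uses the standard relation RCF = 1.118 × 10⁻⁵ × r(cm) × rpm².

```python
import math


def precipitation_solvent_ul(sample_ul, solvent_ratio=3.0):
    """Volume of ice-cold solvent for a sample aliquot (protocol: 3-4 volumes)."""
    if not 3.0 <= solvent_ratio <= 4.0:
        raise ValueError("the protocol specifies 3-4 volumes of solvent")
    return sample_ul * solvent_ratio


def rcf_for_rpm(rpm, rotor_radius_cm):
    """Relative centrifugal force (x g) for a rotor speed and radius."""
    return 1.118e-5 * rotor_radius_cm * rpm ** 2


def rpm_for_rcf(rcf_g, rotor_radius_cm):
    """Rotor speed needed to reach a target relative centrifugal force."""
    return math.sqrt(rcf_g / (1.118e-5 * rotor_radius_cm))


solvent_ul = precipitation_solvent_ul(100)             # 100 uL plasma aliquot
rpm = rpm_for_rcf(14_000, rotor_radius_cm=8.0)         # 8 cm radius is an assumption
print(f"add {solvent_ul:.0f} uL solvent; spin above {rpm:.0f} rpm")
```

Because the force scales with rotor radius, the same rpm setting does not give the same × g on different centrifuges, which is why the protocol specifies force rather than speed.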

Protocol for Targeted Metabolomics: Hybrid SPE Cleanup

This protocol builds upon solvent precipitation by incorporating a phospholipid removal step (SPE) to enhance analytical robustness and precision, which is critical for targeted assays [26].

1. Precipitation: Follow steps 1-6 of the untargeted protocol (thawing through centrifugation) using methanol as the precipitating solvent.
2. SPE Conditioning: Condition a phospholipid removal SPE cartridge (e.g., Phree plates) with 1 mL of methanol.
3. Equilibration: Equilibrate the cartridge with 1 mL of water or a weak solvent.
4. Loading: Load the supernatant (from step 6 of the untargeted protocol) onto the conditioned SPE cartridge.
5. Elution: Apply vacuum or positive pressure to elute the metabolites. Collect the flow-through. A second elution with a stronger solvent may be used to recover a wider range of metabolites.
6. Concentration: Evaporate the eluent to dryness under a stream of nitrogen or in a vacuum centrifuge.
7. Reconstitution: Reconstitute the dried extract in a precise volume of initial LC-MS mobile phase, ensuring compatibility with the analytical method and the use of appropriate internal standards.
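The added cleanup step can lose some analyte, which is one reason isotope-labeled internal standards matter in targeted work. The sketch below illustrates the idea with hypothetical peak areas. It assumes the internal standard is spiked before extraction and behaves like the analyte during cleanup; neither the function names nor the numbers come from the cited studies.

```python
def extraction_recovery_percent(pre_spike_area, post_spike_area):
    """Recovery across cleanup.

    Compares an analyte spiked before extraction with the same amount
    spiked into an already-extracted blank (assumed convention).
    """
    if post_spike_area <= 0:
        raise ValueError("post_spike_area must be positive")
    return pre_spike_area / post_spike_area * 100.0


def istd_ratio(analyte_area, istd_area):
    """Analyte / internal-standard area ratio used for quantification."""
    if istd_area <= 0:
        raise ValueError("istd_area must be positive")
    return analyte_area / istd_area


# Hypothetical areas: both analyte and internal standard lose 20% during SPE.
no_loss = istd_ratio(analyte_area=100_000, istd_area=50_000)
with_loss = istd_ratio(analyte_area=80_000, istd_area=40_000)
recovery = extraction_recovery_percent(pre_spike_area=80_000, post_spike_area=100_000)

print(f"recovery: {recovery:.0f}%")
print(f"area ratio without loss: {no_loss:.2f}, with loss: {with_loss:.2f}")
```

The area ratio is unchanged even though a fifth of the material was lost, which is the sense in which an internal standard corrects for preparation losses. The correction only holds when the standard experiences the same losses as the analyte.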

Cross-Validation Workflow from a Clinical Study

A clinical study on diabetic retinopathy (DR) provides a concrete example of a cross-validation workflow. The study first used untargeted metabolomics on plasma samples to discover potential biomarkers associated with DR progression. Key differential metabolites, including L-Citrulline, indoleacetic acid (IAA), chenodeoxycholic acid (CDCA), and eicosapentaenoic acid (EPA), were identified [7]. These candidate biomarkers were then subjected to a targeted metabolomics assay for precise quantification using a predefined panel [7]. Finally, the findings for these specific metabolites were further validated using an orthogonal technique, Enzyme-Linked Immunosorbent Assay (ELISA), to confirm their association with the disease stages [7]. This sequential use of untargeted → targeted → orthogonal validation represents a robust model for confirming metabolomic discoveries.
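One simple way to check whether a targeted assay confirms untargeted candidates is to compare the direction and size of the group differences on both platforms. The sketch below is an assumed approach, not the analysis used in the DR study: the candidate names and log2 fold changes are placeholders, and a real workflow would add statistical testing and multiple-comparison control.

```python
import math


def log2_fold_change(case_mean, control_mean):
    """Log2 ratio of group means; positive means higher in cases."""
    return math.log2(case_mean / control_mean)


def cross_validate(untargeted_lfc, targeted_lfc):
    """Compare effect direction for metabolites measured on both platforms."""
    shared = sorted(set(untargeted_lfc) & set(targeted_lfc))
    confirmed = [m for m in shared if untargeted_lfc[m] * targeted_lfc[m] > 0]
    discordant = [m for m in shared if m not in confirmed]
    fraction = len(confirmed) / len(shared) if shared else float("nan")
    return fraction, confirmed, discordant


def pearson_r(xs, ys):
    """Pearson correlation between two equal-length sequences."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / math.sqrt(var_x * var_y)


# Placeholder log2 fold changes; NOT values from the diabetic retinopathy study.
untargeted = {"candidate_A": 0.85, "candidate_B": -0.60, "candidate_C": 1.10, "candidate_D": -0.40}
targeted = {"candidate_A": 0.70, "candidate_B": -0.45, "candidate_C": 0.95, "candidate_D": 0.10}

fraction, confirmed, discordant = cross_validate(untargeted, targeted)
names = sorted(untargeted)
r = pearson_r([untargeted[m] for m in names], [targeted[m] for m in names])
print(f"direction agreement: {fraction:.0%}; discordant: {discordant}; r = {r:.2f}")
```

Candidates whose direction flips between platforms, such as the placeholder `candidate_D` here, are the ones to re-examine before moving on to orthogonal validation.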

The Scientist's Toolkit: Essential Research Reagent Solutions

The following table details key reagents, materials, and instrumentation critical for executing the sample preparation protocols described in this guide.

Table 3: Essential Research Reagents and Materials for Metabolite Extraction

| Item | Function/Application | Example Specifications |
|---|---|---|
| Methanol (LC-MS Grade) | Primary solvent for protein precipitation; offers broad metabolite coverage [26]. | Optima LC/MS grade |
| Acetonitrile (LC-MS Grade) | Precipitation solvent; often used with methanol to modulate selectivity [26]. | Optima LC/MS grade |
| Internal Standards (Isotope-Labeled) | Critical for targeted assays; corrects for loss during prep and matrix effects during MS analysis [7] [26]. | e.g., Succinic acid-13C2, L-Leucine-d3 |
| Phospholipid Removal SPE Cartridges | Removes phospholipids to reduce ion suppression and improve data quality in targeted work [26]. | e.g., Phree plates (Phenomenex) |
| Formic Acid (LC-MS Grade) | Acid additive to mobile phases to promote protonation in positive ion mode MS. | Pierce LC/MS grade, 0.1% |
| Ammonium Acetate/Formate | Volatile buffers for LC-MS mobile phases, suitable for negative ion mode. | LC-MS Grade |
| Silica Beads (for multiomics) | Used in monophasic multiomics protocols for on-bead protein aggregation and digestion [27]. | e.g., SeraSil-Mag (400 nm, 700 nm) |
| Trypsin (Mass Spec Grade) | Enzyme for on-bead protein digestion in integrated proteomics/metabolomics workflows [27]. | Trypsin Gold, Rapid Trypsin Gold |

The strategic selection and tailoring of sample preparation methods are foundational to the success of any metabolomics study. The experimental data and protocols presented herein demonstrate that no single extraction method is universally superior; rather, the optimal choice is dictated by the analytical goals.

For untargeted metabolomics and initial discovery phases, methanol-based protein precipitation provides the most extensive metabolite coverage and is the recommended starting point. For targeted metabolomics and biomarker validation, methods that incorporate SPE cleanup, while sacrificing some breadth, deliver the superior repeatability and reduced matrix effects necessary for precise quantification. Furthermore, the integration of these approaches—using untargeted methods for discovery and targeted methods for validation, as demonstrated in the clinical cross-validation workflow—represents the most powerful and rigorous paradigm in modern metabolomics research.

Metabolomics, the comprehensive analysis of small molecule metabolites, provides a direct readout of cellular activity and physiological status, positioning it as a cornerstone of functional genomics and systems biology [24]. The field employs two primary methodological approaches: targeted metabolomics, which focuses on precise quantification of predefined metabolites, and untargeted metabolomics, which aims to globally profile as many metabolites as possible without prior hypothesis [6]. The cross-validation of results from these complementary approaches significantly enhances the reliability of metabolomic findings and provides a more holistic view of the metabolic network.

The effectiveness of metabolomic studies hinges on the selection and integration of analytical platforms, primarily liquid chromatography-mass spectrometry (LC-MS), gas chromatography-mass spectrometry (GC-MS), and nuclear magnetic resonance (NMR) spectroscopy. Each technique offers distinct capabilities and limitations in metabolite coverage, sensitivity, and structural elucidation [28]. This guide objectively compares the performance of these platforms, supported by experimental data, to inform researchers and drug development professionals in designing robust metabolomic studies with comprehensive metabolite coverage.

Platform Comparisons: Technical Specifications and Performance Metrics

The choice of analytical platform profoundly influences the scope, depth, and reliability of metabolomic data. Below we present a structured comparison of the major analytical techniques.

Table 1: Performance comparison of major analytical platforms in metabolomics

| Feature/Parameter | NMR Spectroscopy | LC-MS | GC-MS |
|---|---|---|---|
| Sensitivity | Low (μM-mM) | High (pM-nM) | High (nM-μM) |
| Analytical Reproducibility | Excellent | Moderate | Good |
| Sample Destruction | Non-destructive | Destructive | Destructive |
| Structural Elucidation Power | Excellent | Moderate | Good |
| Metabolite Identification | Direct, based on chemical shift | Relies on fragmentation patterns & databases | Relies on fragmentation patterns & retention indices |